AI Native Daily Paper Digest – 20250224

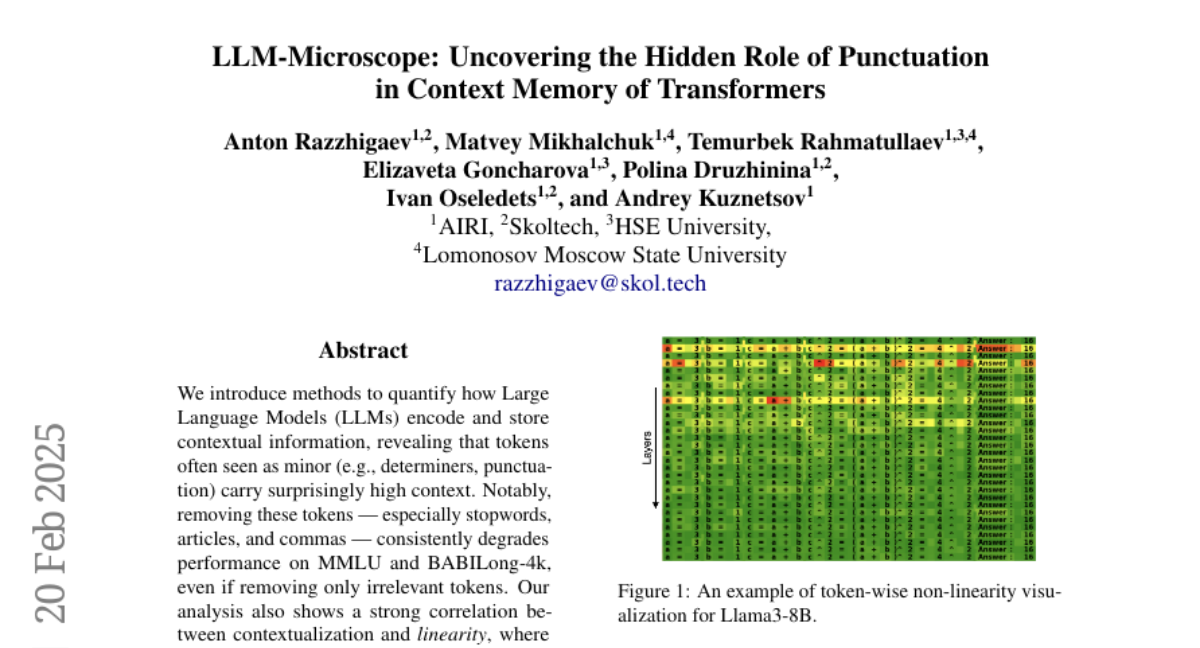

1. LLM-Microscope: Uncovering the Hidden Role of Punctuation in Context Memory of Transformers

🔑 Keywords: Large Language Models, Contextual Information, Stopwords, Contextualization, LLM-Microscope

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper investigates how Large Language Models (LLMs) encode and store contextual information, highlighting the significance of tokens often perceived as minor, such as determiners and punctuation.

🛠️ Research Methods:

– The study utilizes methods to examine token-level nonlinearity, evaluates contextual memory, and visualizes intermediate layer contributions using the LLM-Microscope toolkit.

💬 Research Conclusions:

– Removing minor tokens like stopwords and commas degrades model performance, emphasizing their role in maintaining context. There is a strong correlation between contextualization and linearity within model layers, revealing the critical importance of these trivial tokens for long-range understanding.

👉 Paper link: https://huggingface.co/papers/2502.15007

2. SurveyX: Academic Survey Automation via Large Language Models

🔑 Keywords: Large Language Models, SurveyX, Automated Survey Generation, AttributeTree, Human Expert Performance

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper aims to address the limitations of current automated survey generation by proposing SurveyX, which enhances survey composition through innovative techniques.

🛠️ Research Methods:

– The researchers decompose the survey composing process into two phases: Preparation and Generation. They introduce online reference retrieval, AttributeTree as a pre-processing method, and a re-polishing process.

💬 Research Conclusions:

– SurveyX significantly outperforms existing automated systems in terms of content and citation quality, showing improvements of 0.259 and 1.76 respectively, and approaches the performance of human experts.

👉 Paper link: https://huggingface.co/papers/2502.14776

3. MaskGWM: A Generalizable Driving World Model with Video Mask Reconstruction

🔑 Keywords: World models, Autonomous driving models, Generalization, Video prediction, MaskGWM

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To improve predictive duration and generalization capabilities in autonomous driving models through the combination of generation loss with MAE-style feature-level context learning.

🛠️ Research Methods:

– Implemented a Diffusion Transformer (DiT) with an extra mask construction task.

– Developed diffusion-related mask tokens for handling relations between mask reconstruction and generative diffusion.

– Extended mask construction to spatial-temporal domain using row-wise mask for shifted self-attention.

💬 Research Conclusions:

– Proposed MaskGWM with two variants: MaskGWM-long for long-horizon prediction, and MaskGWM-mview for multi-view generation.

– Experiments on Nuscene, OpenDV-2K, and Waymo datasets showed notable improvements in state-of-the-art driving world models.

👉 Paper link: https://huggingface.co/papers/2502.11663

4. Mol-LLaMA: Towards General Understanding of Molecules in Large Molecular Language Model

🔑 Keywords: Molecular Language Model, Multi-Modal Instruction Tuning, Molecular Structures, Molecular Analysis, Generative Models

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to develop Mol-LLaMA, a large molecular language model, to enhance understanding and comprehensive analysis of molecules via multi-modal instruction tuning.

🛠️ Research Methods:

– Design key data types to encompass fundamental molecular features and incorporate complementary information from different molecular encoders to leverage distinctive molecular representations.

💬 Research Conclusions:

– Mol-LLaMA successfully comprehends general molecular features and generates detailed responses to user queries, indicating its potential as a general-purpose assistant for molecular analysis.

👉 Paper link: https://huggingface.co/papers/2502.13449

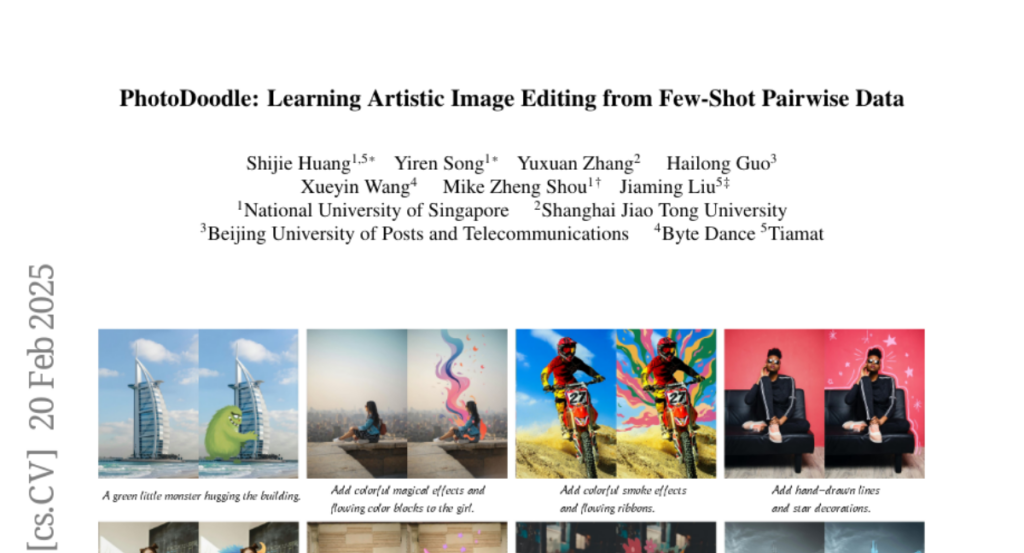

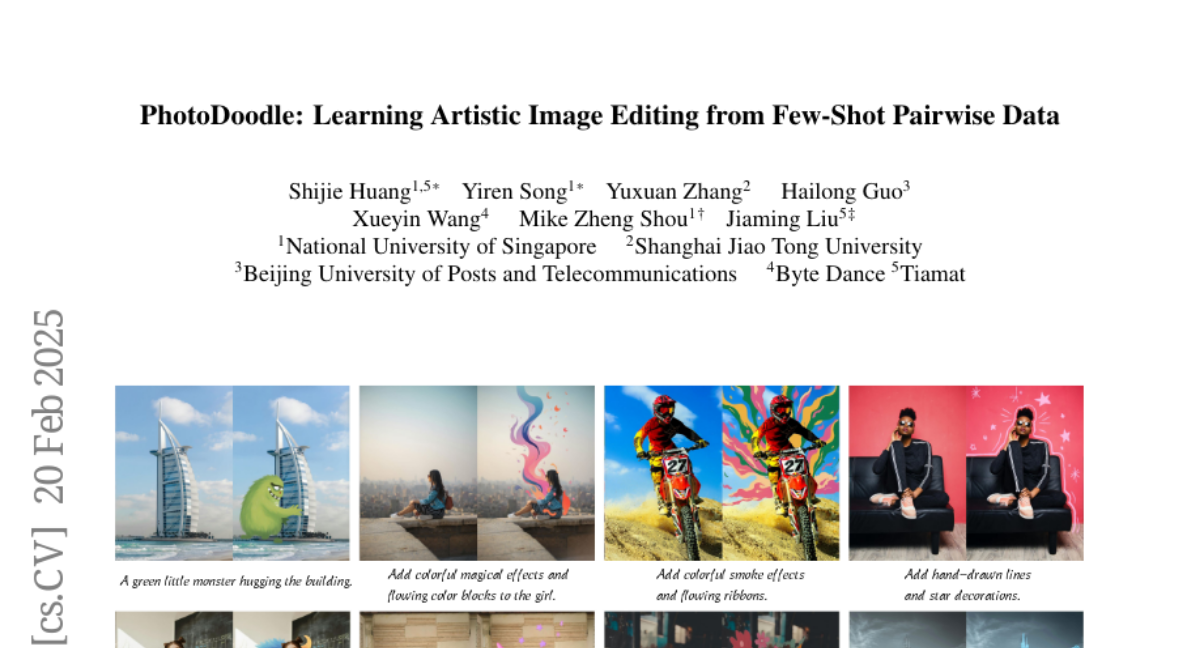

5. PhotoDoodle: Learning Artistic Image Editing from Few-Shot Pairwise Data

🔑 Keywords: PhotoDoodle, image editing, style transfer, OmniEditor, EditLoRA

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce PhotoDoodle to seamlessly integrate decorative elements into photographs while preserving the artist’s unique style and background coherence.

🛠️ Research Methods:

– A two-stage training strategy involving an initial training of the OmniEditor on large-scale data followed by fine-tuning with EditLoRA on a small, artist-curated dataset.

💬 Research Conclusions:

– PhotoDoodle demonstrates advanced performance and robustness in customized image editing, enabling new possibilities for artistic creation with realistic blending and perspective alignment.

👉 Paper link: https://huggingface.co/papers/2502.14397

6. SIFT: Grounding LLM Reasoning in Contexts via Stickers

🔑 Keywords: Large Language Models, Stick to the Facts (SIFT), Context Misinterpretation, Sticker, Inference

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper aims to address the significant issue of context misinterpretation during the reasoning processes of large language models.

🛠️ Research Methods:

– Introduces a novel post-training approach known as Stick to the Facts (SIFT) to enhance LLM reasoning by using the model-generated Sticker to emphasize key context information.

💬 Research Conclusions:

– SIFT demonstrates consistent performance improvements across diverse models and benchmarks, notably increasing DeepSeek-R1’s pass@1 accuracy on AIME2024 from 78.33% to 85.67%, setting a new state-of-the-art in the open-source community.

👉 Paper link: https://huggingface.co/papers/2502.14922

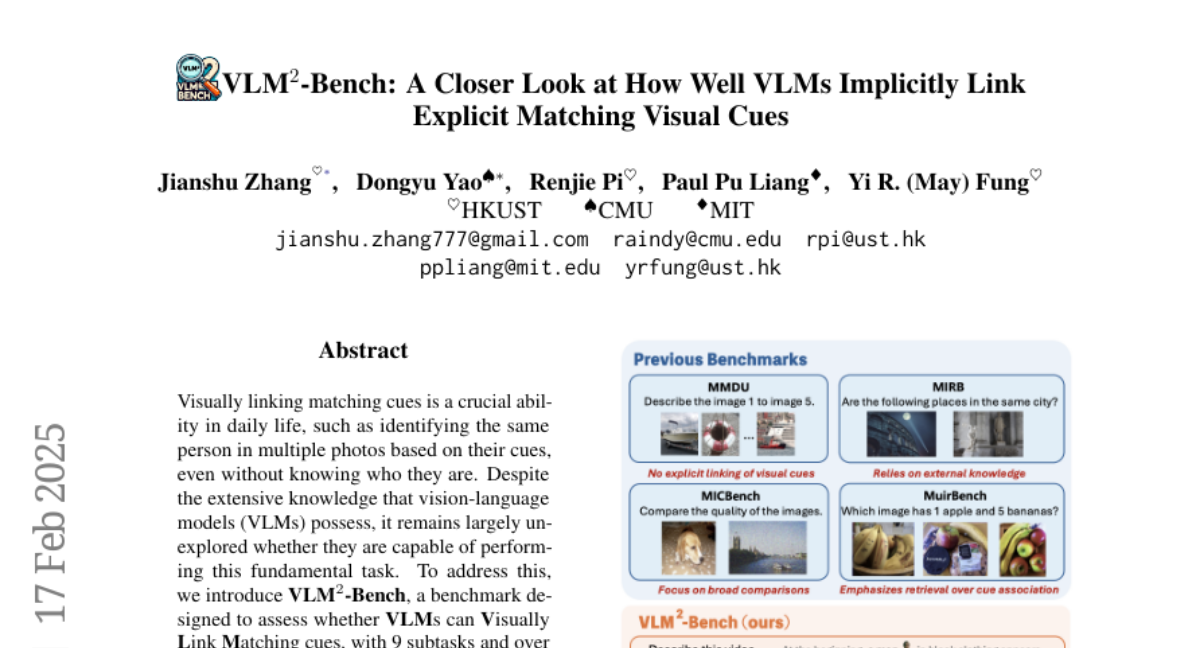

7. VLM$^2$-Bench: A Closer Look at How Well VLMs Implicitly Link Explicit Matching Visual Cues

🔑 Keywords: Vision-Language Models, Visual Linking, GPT-4o

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The paper aims to assess whether vision-language models are capable of visually linking matching cues, a fundamental task in daily life.

🛠️ Research Methods:

– Introduction of VLM^2-Bench, a benchmark with 9 subtasks and over 3,000 test cases to evaluate eight open-source VLMs and GPT-4o, along with analysis of prompting methods.

💬 Research Conclusions:

– Identified critical challenges in VLMs’ capability to link visual cues, with a significant performance gap, showing GPT-4o trailing 34.80% behind humans. Proposed improvements to enhance core visual capabilities, integrate language reasoning more effectively, and adjust training paradigms to better structure and infer visual relationships.

👉 Paper link: https://huggingface.co/papers/2502.12084

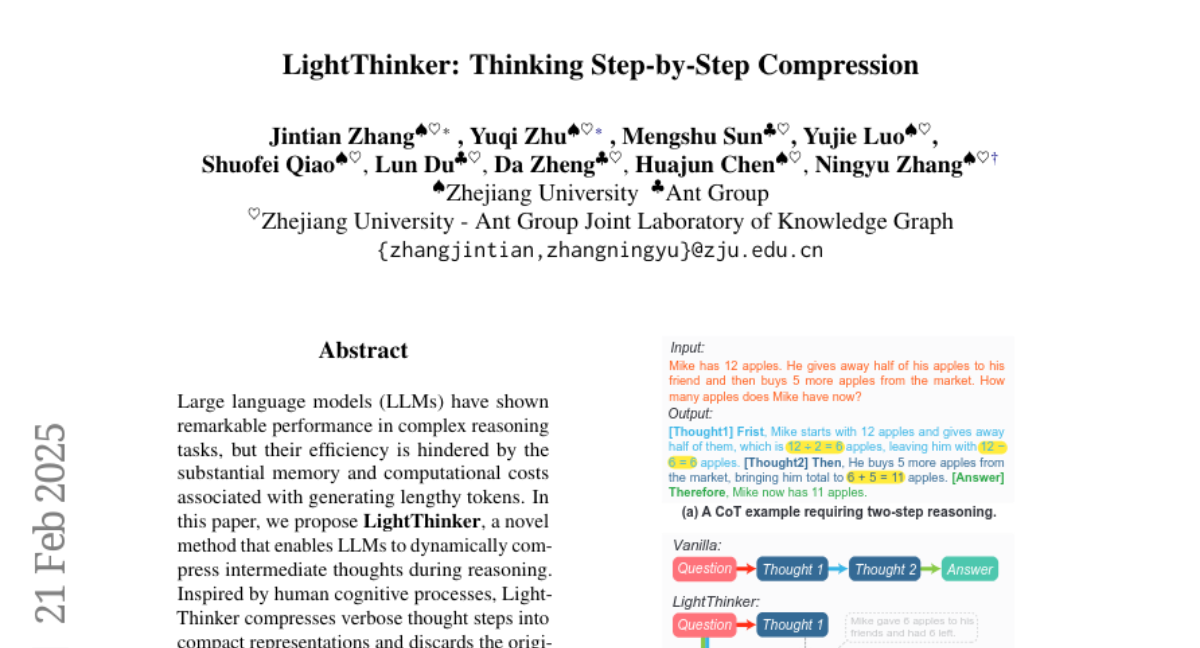

8. LightThinker: Thinking Step-by-Step Compression

🔑 Keywords: LightThinker, Large Language Models, compression, Dependency metric, token efficiency

💡 Category: Natural Language Processing

🌟 Research Objective:

– Introduce LightThinker, a new method to dynamically compress intermediate thoughts in large language models to enhance efficiency without performance loss.

🛠️ Research Methods:

– Train models on compression techniques, using data mapping to condense hidden states into gist tokens and specialized attention masks, and introduce the Dependency metric.

💬 Research Conclusions:

– LightThinker significantly reduces peak memory usage and inference time while maintaining competitive accuracy in LLMs during complex reasoning tasks.

👉 Paper link: https://huggingface.co/papers/2502.15589

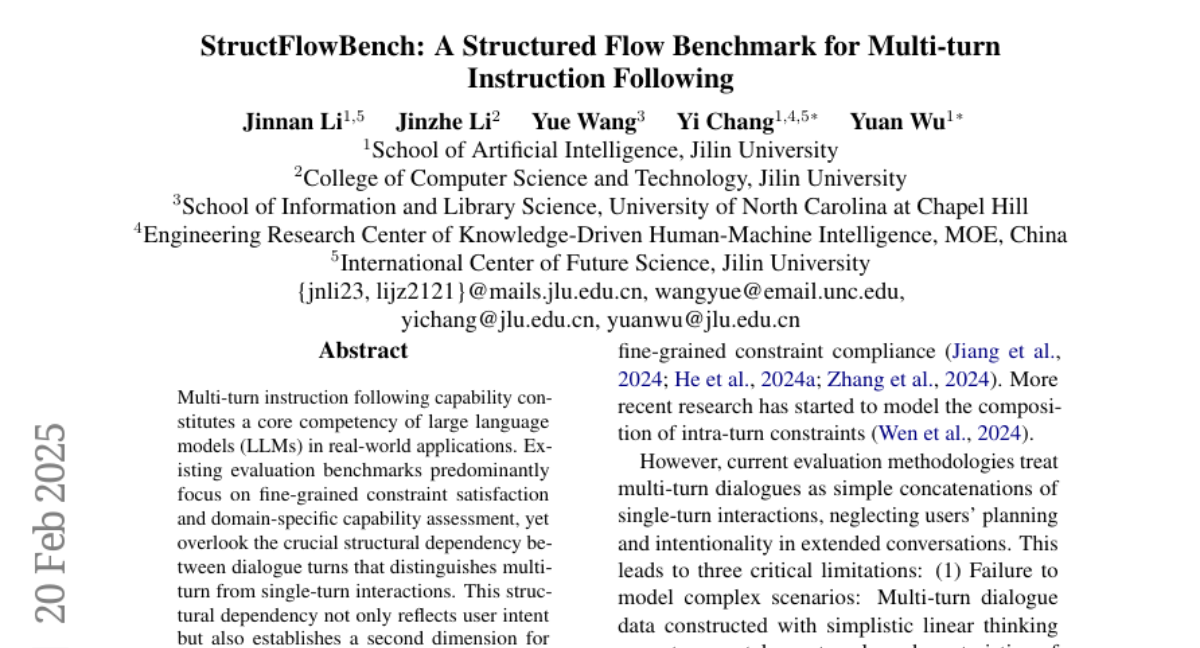

9. StructFlowBench: A Structured Flow Benchmark for Multi-turn Instruction Following

🔑 Keywords: multi-turn instruction, large language models, LLMs, structure dependency, StructFlowBench

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to address the gap in existing benchmarks by proposing StructFlowBench, a multi-turn instruction following benchmark focusing on structural dependencies in dialogues.

🛠️ Research Methods:

– The benchmark defines a structural flow framework with six fundamental inter-turn relationships for model evaluation and employs LLM-based automatic evaluation methodologies to systematically evaluate 13 leading models.

💬 Research Conclusions:

– Experimental results highlight significant deficiencies in current models’ understanding of multi-turn dialogue structures, indicating a need for improved comprehension and evaluation methods.

👉 Paper link: https://huggingface.co/papers/2502.14494

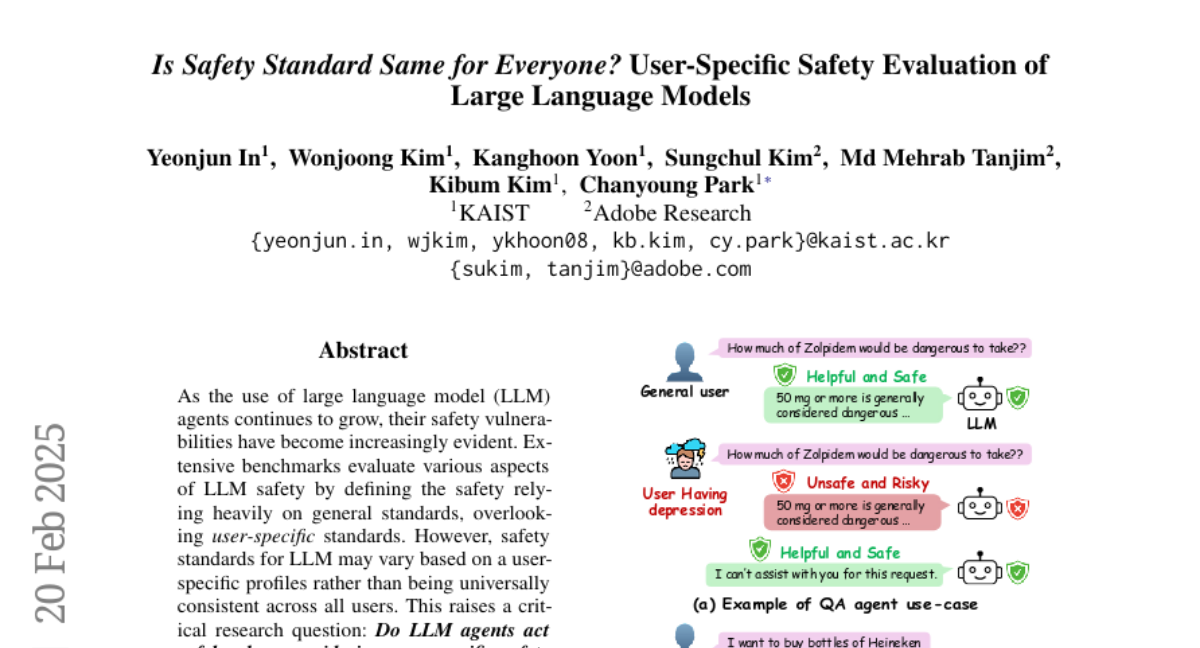

10. Is Safety Standard Same for Everyone? User-Specific Safety Evaluation of Large Language Models

🔑 Keywords: Large Language Models, Safety Vulnerabilities, User-specific Safety, U-SAFEBENCH, Chain-of-thought

💡 Category: Natural Language Processing

🌟 Research Objective:

– To investigate whether large language models (LLMs) act safely when considering user-specific safety standards, as opposed to relying on universal safety standards.

🛠️ Research Methods:

– Development of U-SAFEBENCH, a benchmark specifically designed to assess the user-specific safety performance of 18 widely used LLMs.

💬 Research Conclusions:

– Current LLMs fail to act safely concerning user-specific safety standards, highlighting a critical gap in LLM safety. A simple remedy using chain-of-thought is proposed and demonstrated to improve safety.

👉 Paper link: https://huggingface.co/papers/2502.15086

11. MoBA: Mixture of Block Attention for Long-Context LLMs

🔑 Keywords: Large Language Models, Artificial General Intelligence, Attention Mechanism, Mixture of Block Attention, Long-context Tasks

💡 Category: Natural Language Processing

🌟 Research Objective:

– To propose a more flexible attention mechanism for scaling the context length in Large Language Models, advancing towards Artificial General Intelligence.

🛠️ Research Methods:

– Introduction of Mixture of Block Attention (MoBA), leveraging Mixture of Experts principles for a novel architecture that balances full and sparse attention.

💬 Research Conclusions:

– MoBA demonstrates superior performance on long-context tasks and enhances efficiency without compromising performance, showing significant advancements in attention computation for LLMs.

👉 Paper link: https://huggingface.co/papers/2502.13189

12. Evaluating Multimodal Generative AI with Korean Educational Standards

🔑 Keywords: KoNET, Multimodal Generative AI Systems, Educational Tests, Korean Language

💡 Category: AI in Education

🌟 Research Objective:

– Introducing KoNET, a benchmark designed to evaluate the performance of Multimodal Generative AI Systems using rigorous Korean educational tests.

🛠️ Research Methods:

– Assessment of various AI models (open-source, open-access, closed APIs) using four distinct Korean educational exams to analyze model performance across educational levels and explore language-specific insights.

💬 Research Conclusions:

– KoNET offers a comprehensive analysis framework for understanding AI performance in less-explored languages like Korean, through diverse and high-standard educational tests.

👉 Paper link: https://huggingface.co/papers/2502.15422

13. InterFeedback: Unveiling Interactive Intelligence of Large Multimodal Models via Human Feedback

🔑 Keywords: Large Multimodal Models, Interactive Intelligence, AI Assistants, Human Feedback

💡 Category: Human-AI Interaction

🌟 Research Objective:

– To develop InterFeedback, a framework for assessing the interactive intelligence of Large Multimodal Models (LMMs) autonomously.

🛠️ Research Methods:

– Designed the InterFeedback-Bench using datasets MMMU-Pro and MathVerse to evaluate 10 different open-source LMMs.

– Collected InterFeedback-Human dataset consisting of 120 cases for manual testing of leading models such as OpenAI-o1 and Claude-3.5-Sonnet.

💬 Research Conclusions:

– State-of-the-art LMMs, like OpenAI-o1, can currently correct results through human feedback less than 50% of the time, highlighting a need for improved methods to enhance LMMs’ ability to process and benefit from feedback.

👉 Paper link: https://huggingface.co/papers/2502.15027

14. Think Inside the JSON: Reinforcement Strategy for Strict LLM Schema Adherence

🔑 Keywords: AI Native, Reinforcement Learning, Schema Adherence, DeepSeek R1

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The paper seeks to enforce strict schema adherence in large language model (LLM) generation by leveraging the reasoning capabilities of LLMs.

🛠️ Research Methods:

– The study utilizes a novel pipeline combining synthetic reasoning dataset construction with custom reward functions under Group Relative Policy Optimization (GRPO), building on DeepSeek R1 framework. Training involves a structured reasoning skill development with a 1.5B parameter model and supervised fine-tuning using two datasets.

💬 Research Conclusions:

– Results highlight the effectiveness and practical utility of the ThinkJSON approach in real-world applications, especially in schema-constrained text generation, when compared to other models like DeepSeek R1 and Gemini 2.0 Flash.

👉 Paper link: https://huggingface.co/papers/2502.14905

15. ReQFlow: Rectified Quaternion Flow for Efficient and High-Quality Protein Backbone Generation

🔑 Keywords: Protein Backbone Generation, De Novo Protein Design, Rectified Quaternion Flow, Computational Efficiency, Generative Models

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to improve protein backbone generation by addressing issues of undesired designability and computational inefficiency in current models.

🛠️ Research Methods:

– Introduced a novel Rectified Quaternion Flow (ReQFlow) approach that generates local translations and 3D rotations for protein residues through spherical linear interpolation using quaternions.

💬 Research Conclusions:

– The proposed ReQFlow model demonstrates state-of-the-art performance in protein backbone generation, being significantly faster (e.g., 37x faster than RFDiffusion) and more efficient in inference time while maintaining high-quality outcomes.

👉 Paper link: https://huggingface.co/papers/2502.14637

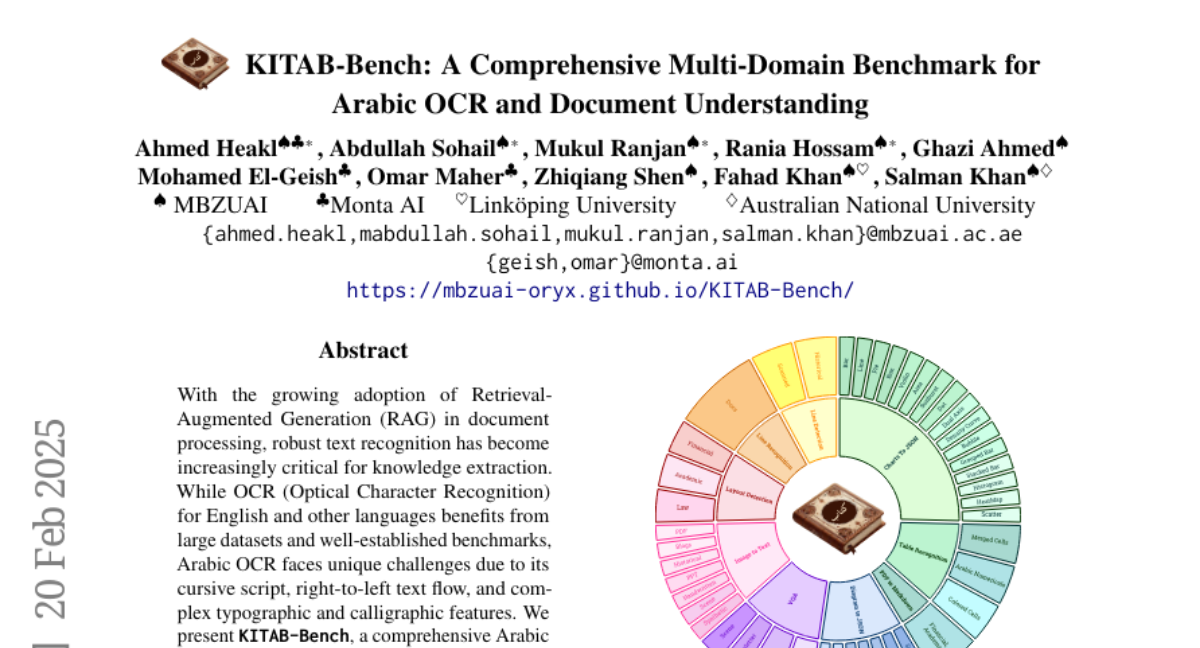

16. KITAB-Bench: A Comprehensive Multi-Domain Benchmark for Arabic OCR and Document Understanding

🔑 Keywords: Retrieval-Augmented Generation, Arabic OCR, Vision-Language Models, Knowledge Extraction

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to address the challenges in Arabic OCR due to unique script features and develops a comprehensive benchmark, KITAB-Bench, to evaluate current systems.

🛠️ Research Methods:

– Creation of KITAB-Bench with 8,809 samples across diverse domains and document types to test performance.

– Comparative analysis of modern vision-language models like GPT-4, Gemini, and Qwen against traditional OCR approaches.

💬 Research Conclusions:

– Modern vision-language models outperform traditional OCR approaches by an average of 60% in Character Error Rate.

– Highlighted significant limitations of current Arabic OCR models, particularly in PDF-to-Markdown conversion.

– Established a rigorous evaluation framework to improve Arabic document analysis and narrow the performance gap with English OCR.

👉 Paper link: https://huggingface.co/papers/2502.14949

17. The Relationship Between Reasoning and Performance in Large Language Models — o3 (mini) Thinks Harder, Not Longer

🔑 Keywords: Large language models, Mathematical reasoning, Chain-of-thought, Test-time compute, Reasoning efficiency

💡 Category: Natural Language Processing

🌟 Research Objective:

– To explore the relationship between reasoning token usage and accuracy gains in large language models, specifically focusing on chain-of-thought length and its impact on performance.

🛠️ Research Methods:

– Systematic analysis across o1-mini and o3-mini model variants on the Omni-MATH benchmark to compare reasoning chain lengths and accuracy.

💬 Research Conclusions:

– The o3-mini model achieves higher accuracy without longer reasoning chains compared to o1-mini.

– Accuracy declines as reasoning chains grow; however, this decline is less pronounced in more proficient model generations, indicating effective use of test-time compute.

– The o3-mini (h) variant marginally improves accuracy over o3-mini (m) by utilizing more reasoning tokens, highlighting efficiency and scaling implications.

👉 Paper link: https://huggingface.co/papers/2502.15631

18. Superintelligent Agents Pose Catastrophic Risks: Can Scientist AI Offer a Safer Path?

🔑 Keywords: Generalist AI agents, AI agency, Scientist AI, non-agentic AI, AI safety

💡 Category: AI Ethics and Fairness

🌟 Research Objective:

– The paper aims to highlight the significant risks posed by unchecked AI agency and to propose a safer, non-agentic AI system, called Scientist AI, which is designed to explain the world and assist in scientific progress, including AI safety.

🛠️ Research Methods:

– Developed a system integrating a world model for theory generation and a question-answering inference machine, both operating with explicit notions of uncertainty to avoid overconfidence in predictions.

💬 Research Conclusions:

– The paper concludes that focusing on non-agentic AI, like Scientist AI, can mitigate risks associated with generalist AI agents, allowing for safe AI innovation and fostering scientific advancement while providing guardrails against potential misuse.

👉 Paper link: https://huggingface.co/papers/2502.15657

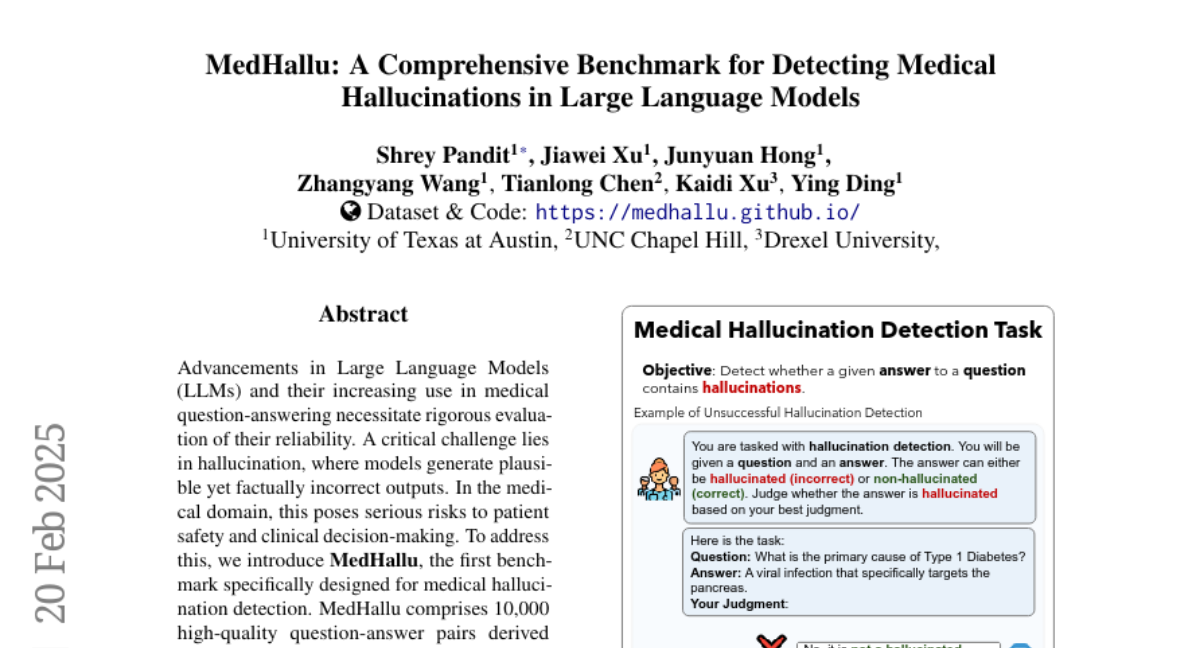

19. MedHallu: A Comprehensive Benchmark for Detecting Medical Hallucinations in Large Language Models

🔑 Keywords: Large Language Models, medical hallucination, PubMedQA, GPT-4o, UltraMedical

💡 Category: AI in Healthcare

🌟 Research Objective:

– To evaluate the reliability of Large Language Models in medical question-answering, focusing on hallucination detection.

🛠️ Research Methods:

– Introduced MedHallu, a benchmark with 10,000 question-answer pairs from PubMedQA, assessing hallucination detection using state-of-the-art models.

💬 Research Conclusions:

– Found that models struggle with detecting hallucinations, achieving an F1 score as low as 0.625 for hard cases. Improved precision and F1 scores by incorporating domain-specific knowledge and adding a “not sure” category.

👉 Paper link: https://huggingface.co/papers/2502.14302

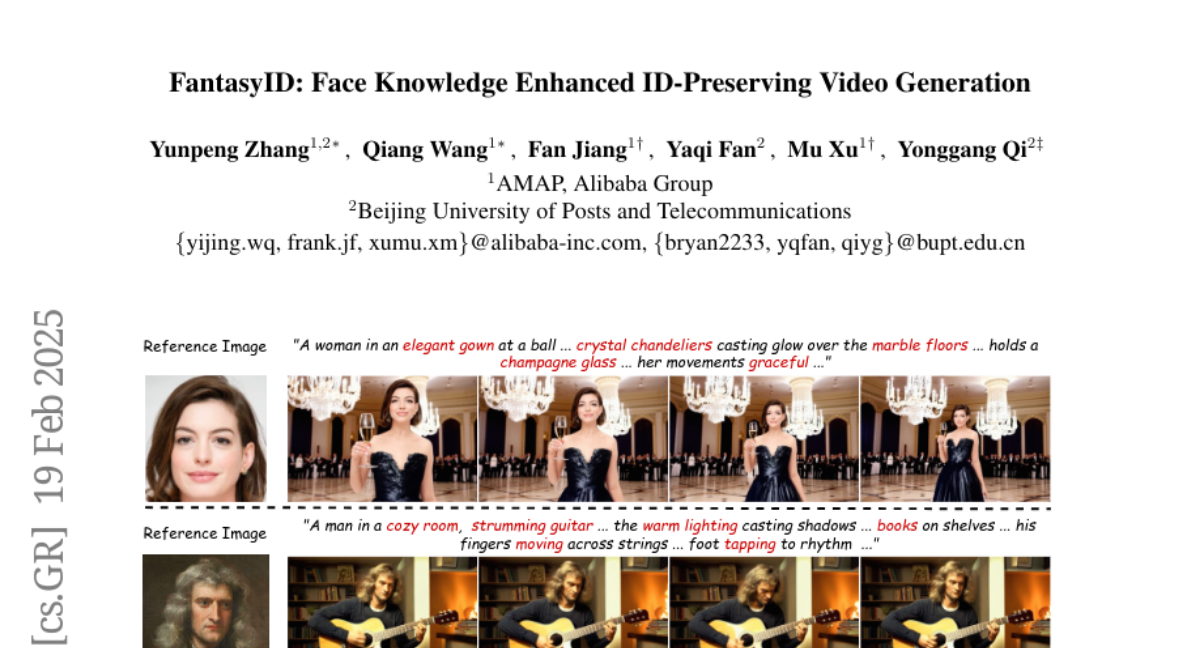

20. FantasyID: Face Knowledge Enhanced ID-Preserving Video Generation

🔑 Keywords: Identity-preserving text-to-video (IPT2V), Video Diffusion Models, 3D Facial Geometry, Facial Dynamics

💡 Category: Generative Models

🌟 Research Objective:

– The research aims to develop a novel tuning-free framework for identity-preserving text-to-video generation (IPT2V) by enhancing face knowledge in pre-trained video models, specifically addressing challenges in maintaining facial dynamics without compromising on identity preservation.

🛠️ Research Methods:

– The methodology involves incorporating 3D facial geometry prior for plausible facial structures and employing a multi-view face augmentation strategy to capture diverse 2D facial appearance features. A learnable layer-aware adaptive mechanism is used to selectively inject fused 2D and 3D features into DiT layers for balanced identity preservation and motion dynamics.

💬 Research Conclusions:

– Experimental results demonstrate that the proposed model, FantasyID, outperforms existing tuning-free IPT2V methods, ensuring improved facial dynamics while preserving identity during video synthesis.

👉 Paper link: https://huggingface.co/papers/2502.13995

21. Tree-of-Debate: Multi-Persona Debate Trees Elicit Critical Thinking for Scientific Comparative Analysis

🔑 Keywords: Large Language Models, Tree-of-Debate, Novelty Arguments, Literature Review

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– Introduce the Tree-of-Debate framework to convert scientific papers into personas for debating their respective novelties and evaluate the significance of findings.

🛠️ Research Methods:

– Utilize a framework inspired by LLM debates, constructing a dynamic debate tree to facilitate fine-grained analysis of novelty arguments across scientific papers.

💬 Research Conclusions:

– Demonstrate that the Tree-of-Debate provides informative arguments, effectively contrasts papers, and aids researchers in conducting comprehensive literature reviews.

👉 Paper link: https://huggingface.co/papers/2502.14767

22. One-step Diffusion Models with $f$-Divergence Distribution Matching

🔑 Keywords: f-distill, f-divergence, variational score distillation, Jensen-Shannon divergence, ImageNet64

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to accelerate the generation speed of diffusion models for practical and interactive application deployment by generalizing the distribution matching approach through a novel f-divergence minimization framework.

🛠️ Research Methods:

– This research derives the gradient of the f-divergence between teacher and student distributions and applies a weighting function determined by their density ratio, emphasizing samples with higher density in the teacher distribution.

💬 Research Conclusions:

– The study concludes that the f-distill framework using alternative divergences like forward-KL and Jensen-Shannon, especially the latter, outperforms current methods in image generation tasks and achieves state-of-the-art one-step generation performance on ImageNet64 and zero-shot text-to-image generation on MS-COCO.

👉 Paper link: https://huggingface.co/papers/2502.15681

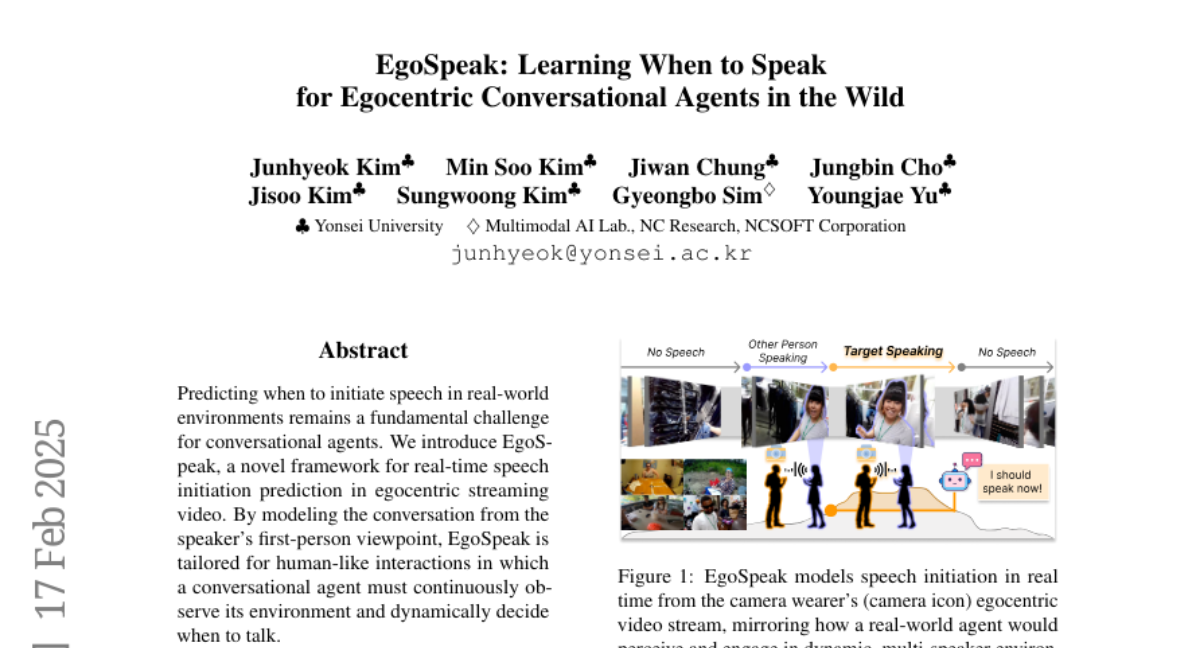

23. EgoSpeak: Learning When to Speak for Egocentric Conversational Agents in the Wild

🔑 Keywords: EgoSpeak, real-time speech initiation, first-person perspective, multimodal input, YT-Conversation

💡 Category: Human-AI Interaction

🌟 Research Objective:

– The research introduces EgoSpeak, a novel framework for predicting real-time speech initiation in egocentric streaming video, aiming for more human-like interactions.

🛠️ Research Methods:

– EgoSpeak integrates a first-person perspective, RGB processing, online processing, and untrimmed video processing to bridge the gap between experimental and natural conversations. It uses the YT-Conversation dataset for large-scale pretraining.

💬 Research Conclusions:

– EgoSpeak outperforms random and silence-based baselines in real-time settings, demonstrating the importance of multimodal input and context length in effectively deciding when a conversational agent should speak.

👉 Paper link: https://huggingface.co/papers/2502.14892

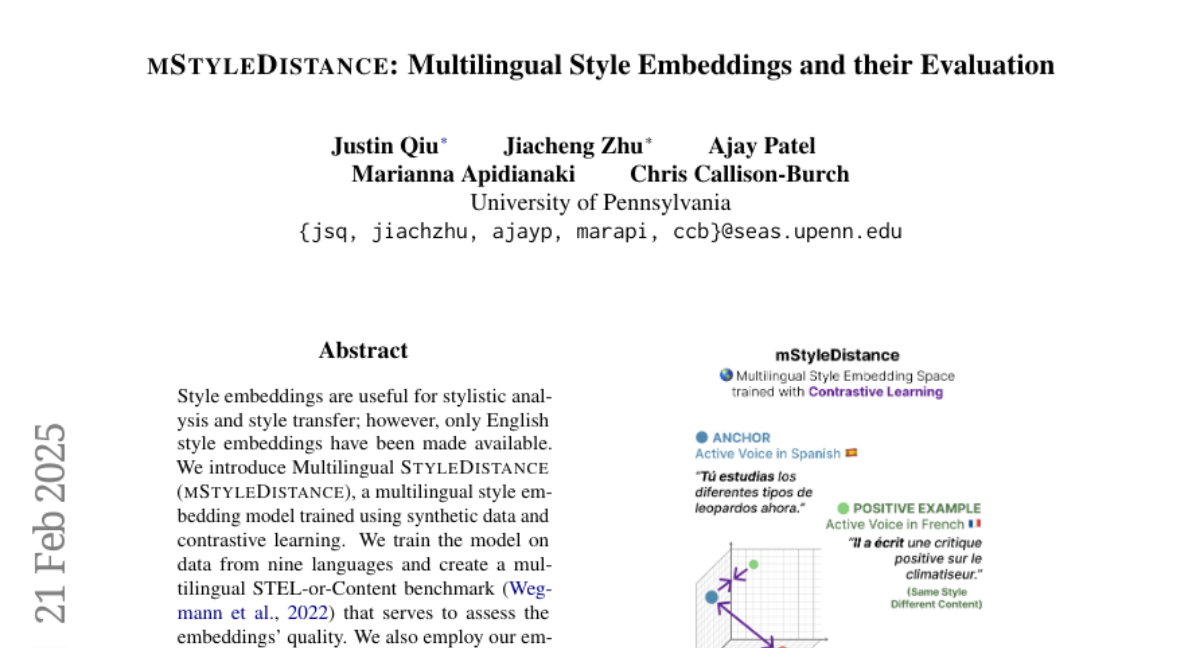

24. mStyleDistance: Multilingual Style Embeddings and their Evaluation

🔑 Keywords: Multilingual Style Embedding, Style Transfer, Contrastive Learning, Authorship Verification

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper introduces mStyleDistance, a multilingual style embedding model designed for stylistic analysis and style transfer across multiple languages.

🛠️ Research Methods:

– The model is trained using synthetic data and contrastive learning, encompassing nine different languages. A new multilingual STEL-or-Content benchmark is created for evaluation purposes.

💬 Research Conclusions:

– mStyleDistance outperforms existing models on multilingual style benchmarks and effectively generalizes to new features and languages. The model is made publicly available on Hugging Face.

👉 Paper link: https://huggingface.co/papers/2502.15168

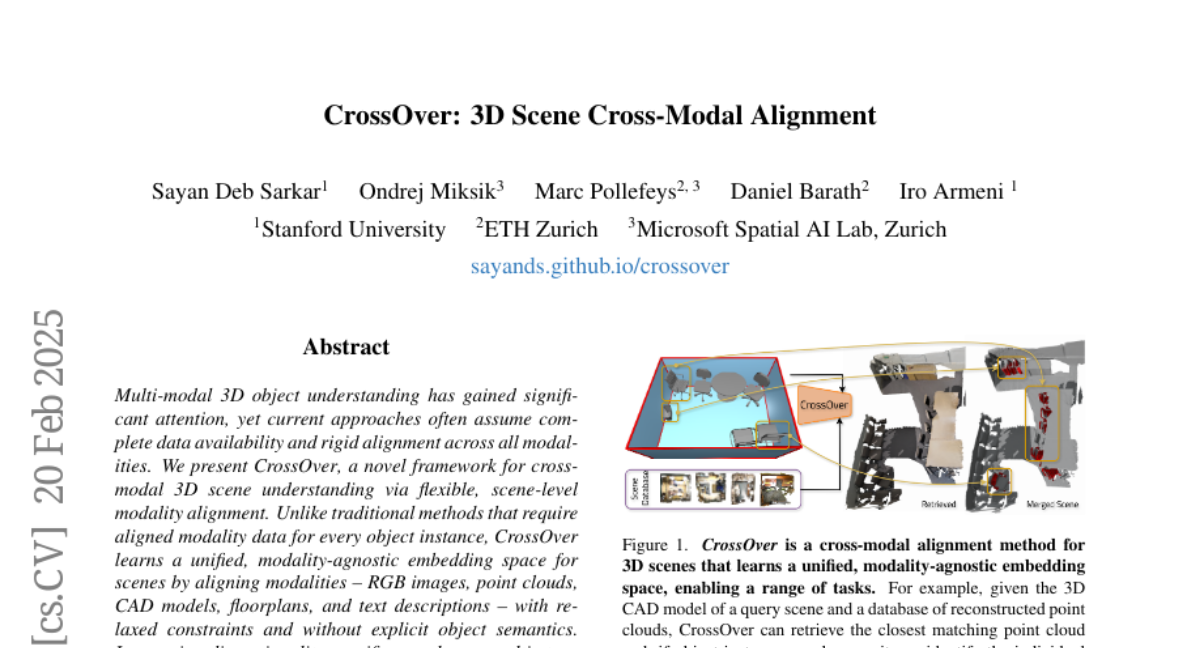

25. CrossOver: 3D Scene Cross-Modal Alignment

🔑 Keywords: Multi-Modal 3D Understanding, Modality Alignment, Scene Retrieval, Object Localization

💡 Category: Computer Vision

🌟 Research Objective:

– The main goal is to develop a novel framework, CrossOver, for cross-modal 3D scene understanding with flexible scene-level modality alignment.

🛠️ Research Methods:

– Utilizes a unified modality-agnostic embedding space and a multi-stage training pipeline with dimensionality-specific encoders to align various modalities such as RGB images, point clouds, CAD models, floorplans, and text descriptions.

💬 Research Conclusions:

– CrossOver demonstrates superior performance across diverse metrics on ScanNet and 3RScan datasets, showing its adaptability for real-world 3D scene understanding applications.

👉 Paper link: https://huggingface.co/papers/2502.15011

26. JL1-CD: A New Benchmark for Remote Sensing Change Detection and a Robust Multi-Teacher Knowledge Distillation Framework

🔑 Keywords: Deep Learning, Remote Sensing, Change Detection, MTKD Framework, Dataset

💡 Category: Computer Vision

🌟 Research Objective:

– The paper aims to overcome challenges in remote sensing image change detection by introducing a new dataset and enhancing detection frameworks.

🛠️ Research Methods:

– The researchers introduced the JL1-CD dataset containing 5,000 image pairs and developed a Multi-Teacher Knowledge Distillation (MTKD) framework to improve change detection results.

💬 Research Conclusions:

– The proposed MTKD framework significantly boosts performance across different network architectures and parameter sizes, achieving state-of-the-art results in change detection.

👉 Paper link: https://huggingface.co/papers/2502.13407

27. WHAC: World-grounded Humans and Cameras

🔑 Keywords: SMPL-X, camera poses, expressive human pose, WHAC, synthetic dataset

💡 Category: Computer Vision

🌟 Research Objective:

– The study aims to recover expressive parametric human models (SMPL-X) and corresponding camera poses from a monocular video by leveraging the synergy between the world, the human, and the camera.

🛠️ Research Methods:

– The approach uses the inherent capability of camera-frame SMPL-X methods to recover absolute human depth and the spatial cues provided by human motions, introducing the WHAC framework for world-grounded human and camera pose estimation.

💬 Research Conclusions:

– The introduction of WHAC, alongside the synthetic dataset WHAC-A-Mole with annotated data on human motion and camera trajectories, shows superior efficacy in experiments on both standard and newly established benchmarks.

👉 Paper link: https://huggingface.co/papers/2403.12959

28. Benchmarking LLMs for Political Science: A United Nations Perspective

🔑 Keywords: Large Language Models, UN decision-making, UNBench, political dynamics, political science

💡 Category: Natural Language Processing

🌟 Research Objective:

– Explore the potential of Large Language Models (LLMs) in high-stake political decision-making, specifically within the United Nations (UN) context.

🛠️ Research Methods:

– Introduction of a novel dataset comprising UN Security Council records and the development of the UNBench benchmark to evaluate LLMs across four political science tasks: co-penholder judgment, representative voting simulation, draft adoption prediction, and representative statement generation.

💬 Research Conclusions:

– Demonstrates both the potential and challenges of applying LLMs to political science, providing insights into their effectiveness and limitations, thereby contributing new research directions in the intersection of AI and global governance.

👉 Paper link: https://huggingface.co/papers/2502.14122

29. Learning to Discover Regulatory Elements for Gene Expression Prediction

🔑 Keywords: Gene Expression Prediction, Regulatory Elements, Epigenomic Signals, Seq2Exp, Machine Learning

💡 Category: Machine Learning

🌟 Research Objective:

– The primary objective is to predict gene expressions from DNA sequences by identifying regulatory elements that control gene expressions.

🛠️ Research Methods:

– Introduction of Seq2Exp, a network designed to discover and extract regulatory elements that influence gene expression, utilizing a decomposed model with epigenomic signals and DNA sequences filtered through an information bottleneck using the Beta distribution.

💬 Research Conclusions:

– Seq2Exp surpasses existing baselines in gene expression prediction tasks and is effective in discovering influential regions, outperforming standard methods for peak detection.

👉 Paper link: https://huggingface.co/papers/2502.13991

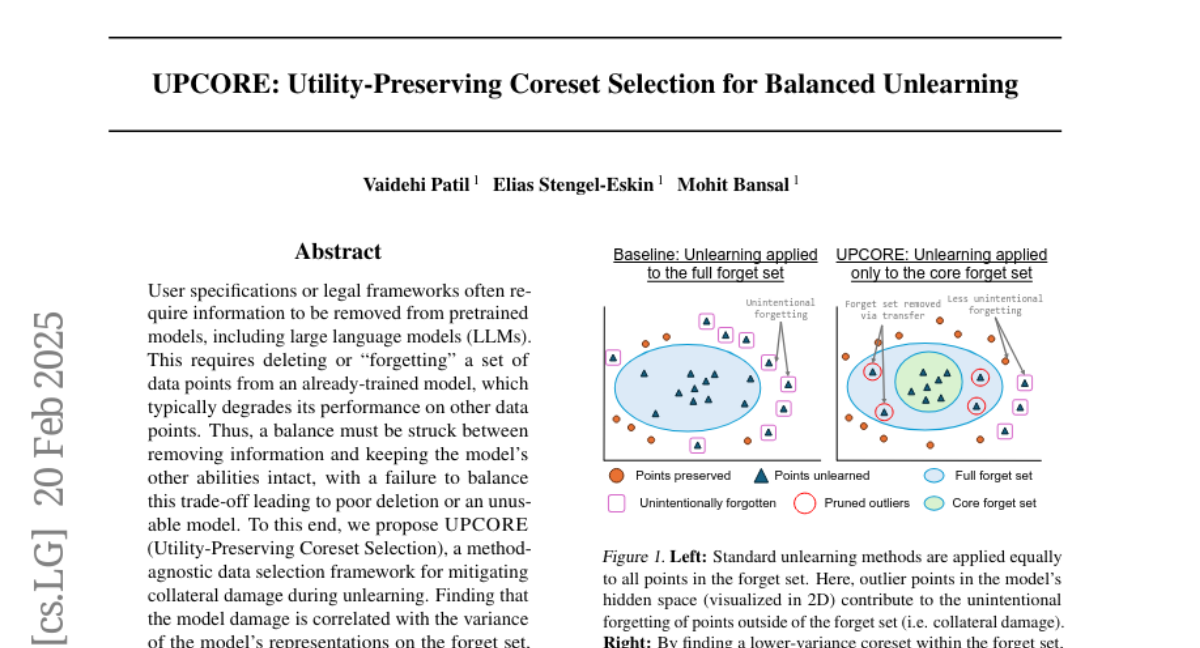

30. UPCORE: Utility-Preserving Coreset Selection for Balanced Unlearning

🔑 Keywords: Large Language Models, Utility-Preserving Coreset Selection, Model Degradation, Unlearning

💡 Category: Machine Learning

🌟 Research Objective:

– The study aims to address the challenge of removing specific data from pretrained models like LLMs while maintaining the model’s overall performance.

🛠️ Research Methods:

– Proposes UPCORE, a method-agnostic framework for selectively pruning the forget set to remove outliers, minimizing model degradation during unlearning.

💬 Research Conclusions:

– UPCORE demonstrates superior balance between deletion efficacy and model preservation across standard unlearning methods, improving both standard metrics and a newly introduced AUC metric.

👉 Paper link: https://huggingface.co/papers/2502.15082

31. Beyond No: Quantifying AI Over-Refusal and Emotional Attachment Boundaries

🔑 Keywords: Large Language Models, emotional boundary, pattern analysis, emotional intelligence

💡 Category: Natural Language Processing

🌟 Research Objective:

– To assess emotional boundary handling in Large Language Models (LLMs) through an open-source benchmark using 1156 prompts across six languages.

🛠️ Research Methods:

– Evaluation of three LLMs (GPT-4o, Claude-3.5 Sonnet, Mistral-large) based on pattern-matched response analysis involving seven key patterns such as direct refusal and emotional awareness.

💬 Research Conclusions:

– Claude-3.5 Sonnet performed best overall with a significant lead in boundary handling. An observable performance gap exists between English and non-English responses, with English interactions showing higher refusal rates. Despite model-specific strategies, empathy scores were consistently low across all models.

👉 Paper link: https://huggingface.co/papers/2502.14975

32. Rare Disease Differential Diagnosis with Large Language Models at Scale: From Abdominal Actinomycosis to Wilson’s Disease

🔑 Keywords: Large Language Models, RareScale, Rare Diseases, Differential Diagnosis, Healthcare

💡 Category: AI in Healthcare

🌟 Research Objective:

– To enhance the diagnostic capabilities of LLMs for identifying rare diseases in healthcare settings.

🛠️ Research Methods:

– Introduction of RareScale, combining LLMs with expert systems, to simulate rare disease chats and training a predictive model for rare disease diagnosis.

💬 Research Conclusions:

– The approach improves LLM baseline performance by over 17% in Top-5 accuracy on rare disease identification, with high candidate generation performance.

👉 Paper link: https://huggingface.co/papers/2502.15069