AI Native Daily Paper Digest – 20250303

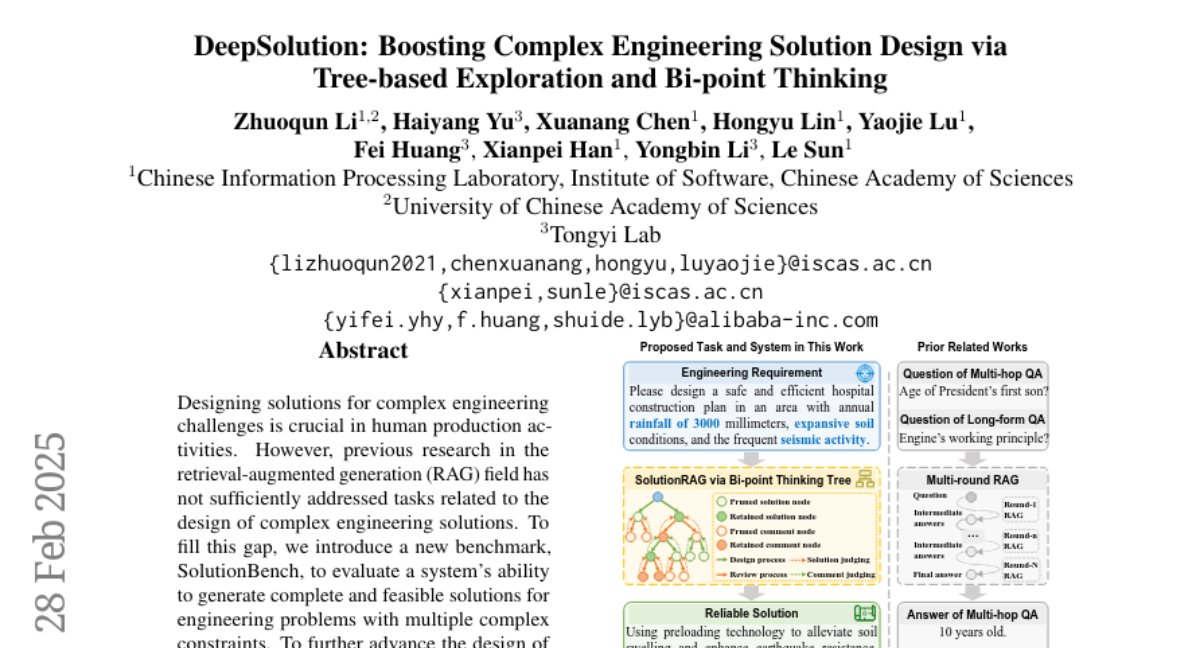

1. DeepSolution: Boosting Complex Engineering Solution Design via Tree-based Exploration and Bi-point Thinking

🔑 Keywords: SolutionBench, SolutionRAG, Complex Engineering Solutions, Retrieval-Augmented Generation

💡 Category: Generative Models

🌟 Research Objective:

– To address the gap in Retrieval-Augmented Generation (RAG) research concerning the design of complex engineering solutions.

🛠️ Research Methods:

– Introduction of a new benchmark called SolutionBench for evaluating system capabilities in generating solutions.

– Development of a novel system, SolutionRAG, using tree-based exploration and bi-point thinking mechanism.

💬 Research Conclusions:

– SolutionRAG demonstrates state-of-the-art performance on SolutionBench, suggesting its potential to improve automation and reliability in real-world complex engineering solution designs.

👉 Paper link: https://huggingface.co/papers/2502.20730

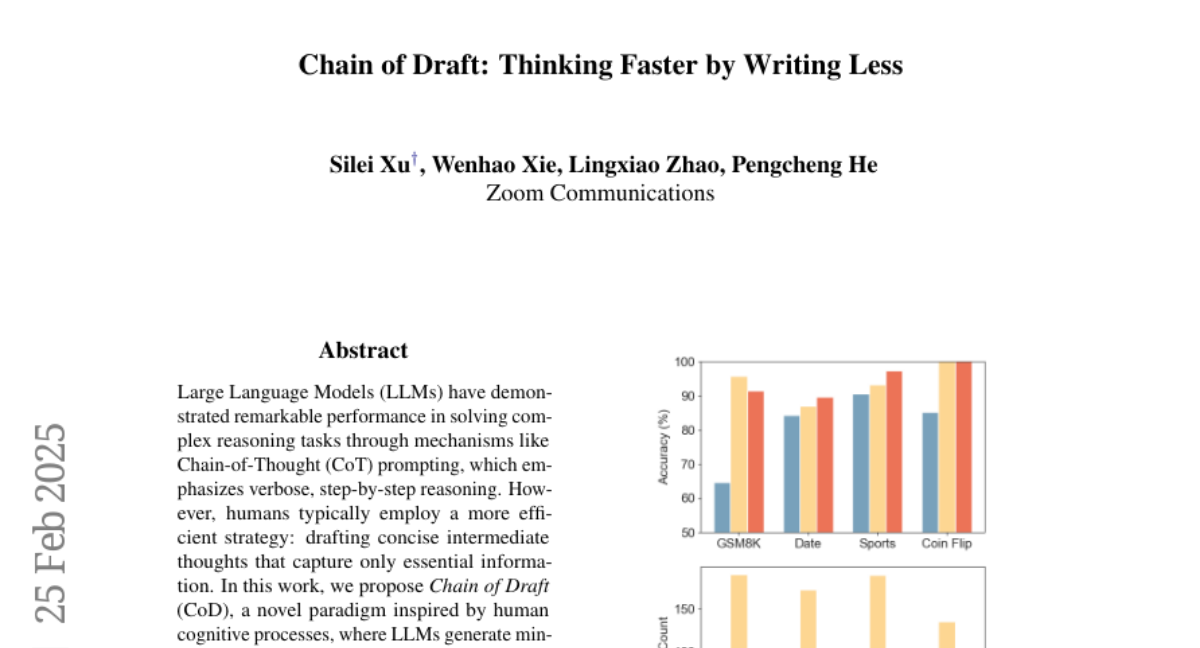

2. Chain of Draft: Thinking Faster by Writing Less

🔑 Keywords: Large Language Models (LLMs), Chain of Thought (CoT), Chain of Draft (CoD), reasoning tasks, cognitive processes

💡 Category: Natural Language Processing

🌟 Research Objective:

– Introduce Chain of Draft (CoD), a new paradigm inspired by human cognitive processes, to improve efficiency in solving reasoning tasks with LLMs.

🛠️ Research Methods:

– Utilize a minimalistic approach for LLMs to generate concise intermediate reasoning outputs, focusing on critical insights rather than verbosity.

💬 Research Conclusions:

– CoD matches or surpasses the accuracy of CoT with significantly reduced token usage (only 7.6%), consequently lowering both cost and latency in various reasoning tasks.

👉 Paper link: https://huggingface.co/papers/2502.18600

3. Multi-Turn Code Generation Through Single-Step Rewards

🔑 Keywords: Code Generation, Reinforcement Learning, muCode, Execution Feedback

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To solve the problem of multi-turn code generation from execution feedback using a novel approach called muCode.

🛠️ Research Methods:

– Developed muCode, a simple approach leveraging single-step rewards in a one-step recoverable Markov Decision Process (MDP).

– Iteratively trained both a code generator and a verifier to improve code solutions with multi-turn feedback.

💬 Research Conclusions:

– The proposed muCode approach significantly outperforms existing state-of-the-art baselines in multi-turn code generation.

– The study illustrates the effectiveness of utilizing execution feedback with muCode.

👉 Paper link: https://huggingface.co/papers/2502.20380

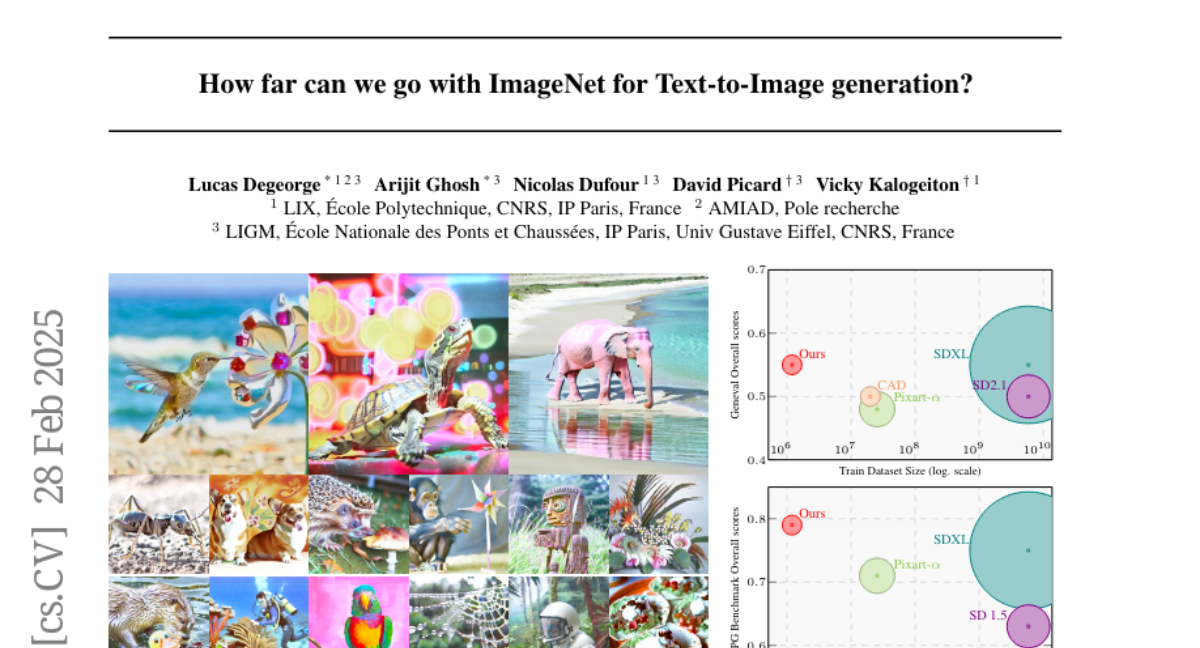

4. How far can we go with ImageNet for Text-to-Image generation?

🔑 Keywords: Text-to-Image, Data Augmentation, ImageNet, Sustainable

💡 Category: Generative Models

🌟 Research Objective:

– Challenge the ‘bigger is better’ paradigm in T2I generation by using strategic data augmentation on small, curated datasets.

🛠️ Research Methods:

– Utilize ImageNet with well-designed text and image augmentations, resulting in improved efficiency and performance.

💬 Research Conclusions:

– Strategic data augmentation can achieve equal or superior results compared to models trained on massive datasets, offering a more sustainable approach to T2I generation.

👉 Paper link: https://huggingface.co/papers/2502.21318

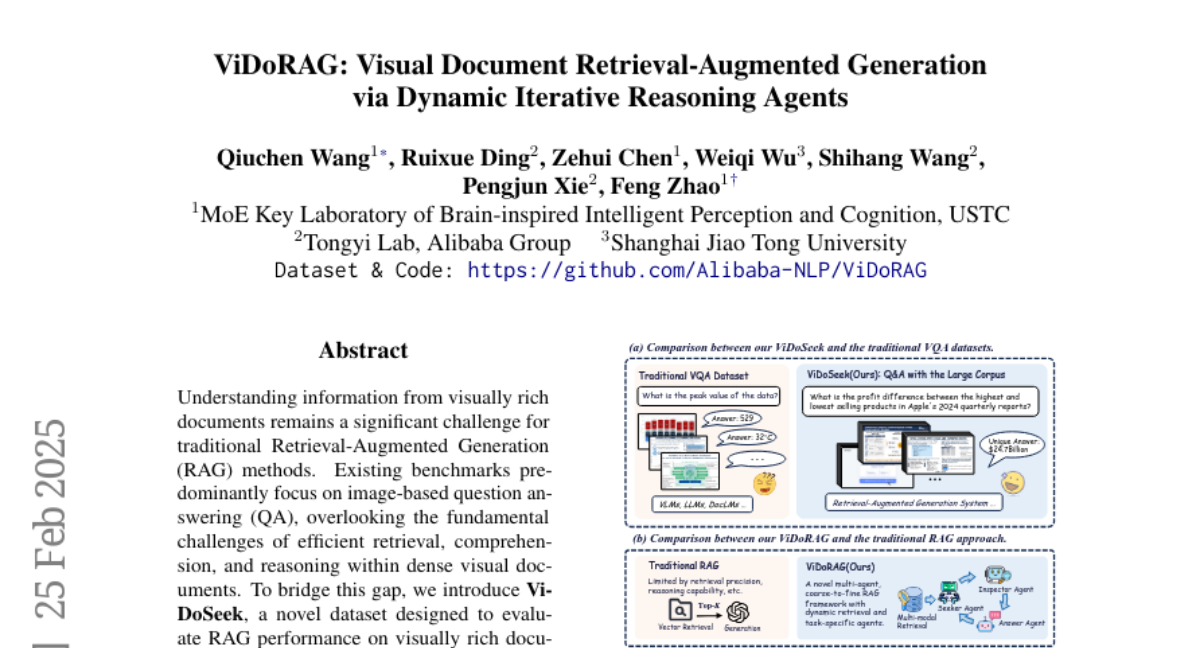

5. ViDoRAG: Visual Document Retrieval-Augmented Generation via Dynamic Iterative Reasoning Agents

🔑 Keywords: Retrieval-Augmented Generation, ViDoSeek, Visual Documents, Multi-modal Retrieval, Complex Reasoning

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introduce ViDoSeek, a dataset for evaluating RAG performance in visually rich documents requiring complex reasoning.

– Identify limitations in current RAG approaches concerning visual retrieval and reasoning capabilities.

🛠️ Research Methods:

– Propose ViDoRAG, a multi-agent RAG framework with a Gaussian Mixture Model-based hybrid strategy for multi-modal retrieval.

– Implement an iterative agent workflow including exploration, summarization, and reflection.

💬 Research Conclusions:

– ViDoRAG significantly outperforms existing methods by over 10% in the competitive ViDoSeek benchmark, validating its effectiveness and generalization capabilities.

👉 Paper link: https://huggingface.co/papers/2502.18017

6. SoS1: O1 and R1-Like Reasoning LLMs are Sum-of-Square Solvers

🔑 Keywords: Large Language Models, Mathematical Problem Solving, SoS-1K, Hilbert’s Seventeenth Problem

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– Investigate the ability of Large Language Models to solve rigorous mathematical problems, specifically the problem of determining nonnegativity of multivariate polynomials.

🛠️ Research Methods:

– Introduced the SoS-1K dataset consisting of approximately 1,000 polynomials with expert-designed reasoning instructions based on five progressively challenging criteria.

💬 Research Conclusions:

– High-quality reasoning instructions significantly boost model accuracy, with SoS-7B outperforming larger models such as DeepSeek-V3 and GPT-4o-mini while requiring significantly less computation time.

👉 Paper link: https://huggingface.co/papers/2502.20545

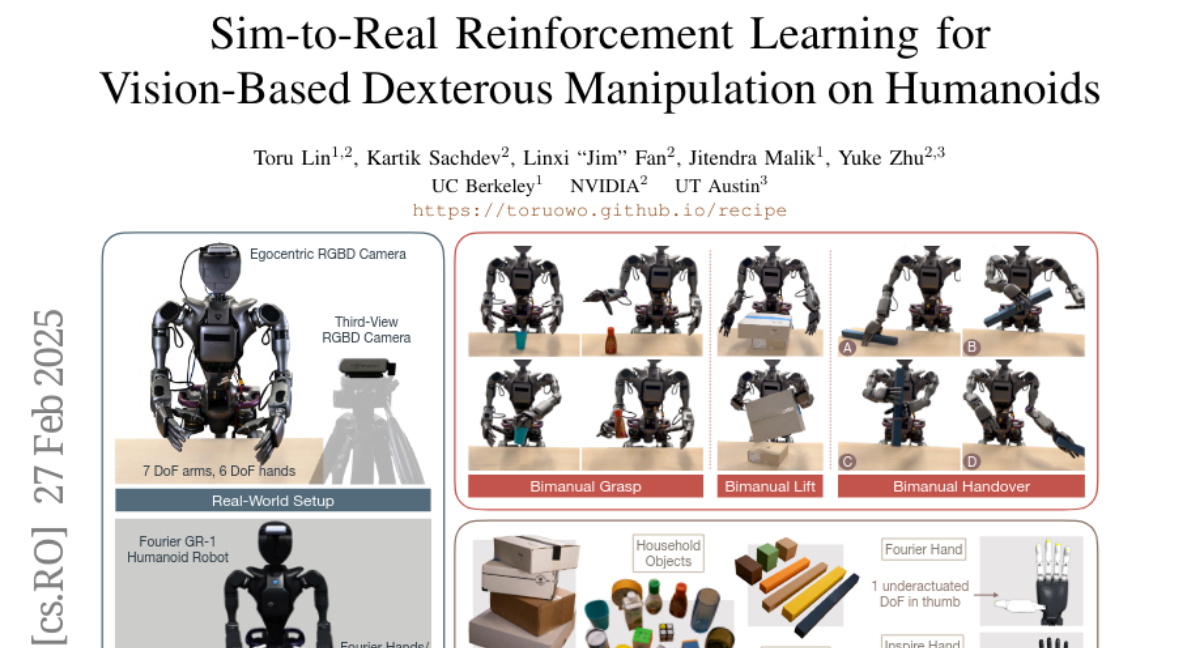

7. Sim-to-Real Reinforcement Learning for Vision-Based Dexterous Manipulation on Humanoids

🔑 Keywords: Reinforcement Learning, Dexterous Manipulation, Sim-to-Real, Reward Design, Sample Efficiency

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– The study aims to address the challenges of applying reinforcement learning to achieve successful dexterous manipulation in humanoid robots.

🛠️ Research Methods:

– The introduction of novel techniques such as a real-to-sim tuning module, a generalized reward design scheme, and a divide-and-conquer distillation process.

💬 Research Conclusions:

– The proposed methods show robust generalization and high performance in humanoid dexterous manipulation tasks without the need for human demonstration.

👉 Paper link: https://huggingface.co/papers/2502.20396

8. Tell me why: Visual foundation models as self-explainable classifiers

🔑 Keywords: Visual Foundation Models, Interpretability, Self-Explainable Models, Prototypical Architecture

💡 Category: Computer Vision

🌟 Research Objective:

– This study focuses on enhancing the interpretability of Visual Foundation Models (VFMs), especially for critical applications, by integrating a novel prototypical architecture and specialized training objectives.

🛠️ Research Methods:

– The research involves training a lightweight head with approximately 1M parameters atop frozen VFMs, creating an efficient and interpretable solution named ProtoFM.

💬 Research Conclusions:

– ProtoFM not only offers competitive classification performance but also surpasses existing models in interpretability metrics, validating the approach’s effectiveness.

👉 Paper link: https://huggingface.co/papers/2502.19577

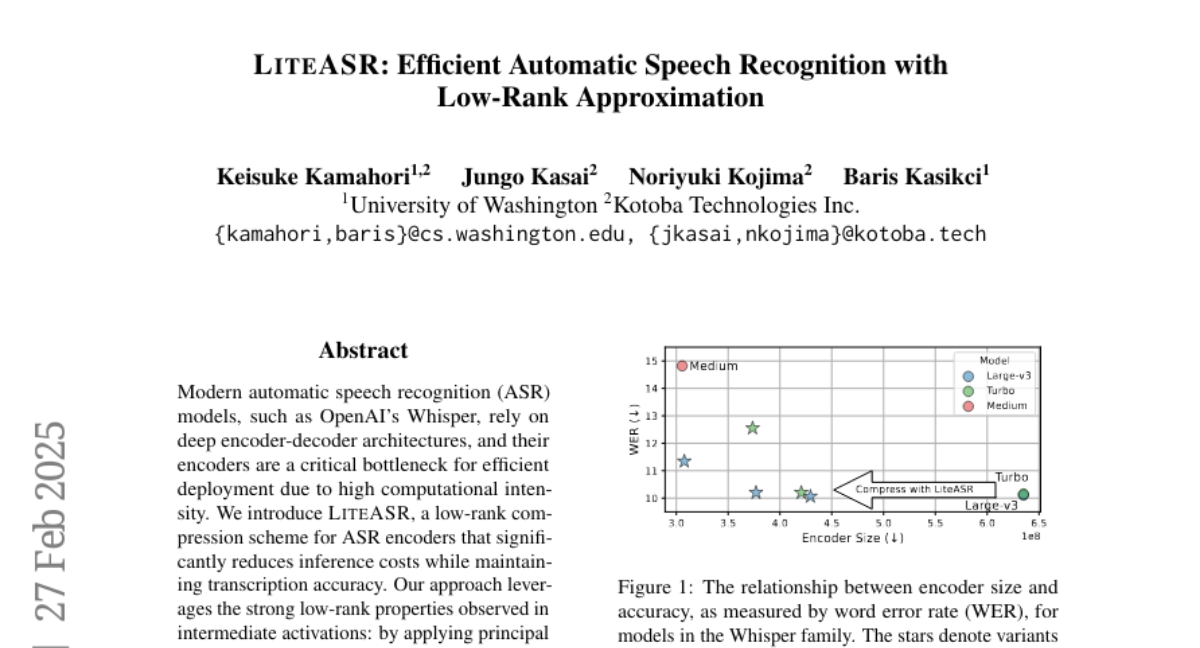

9. LiteASR: Efficient Automatic Speech Recognition with Low-Rank Approximation

🔑 Keywords: ASR, LiteASR, low-rank compression, Whisper, transcription accuracy

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to reduce inference costs of automatic speech recognition (ASR) models like OpenAI’s Whisper while maintaining transcription accuracy through a new method called LiteASR.

🛠️ Research Methods:

– Implement a low-rank compression scheme using principal component analysis (PCA) on ASR encoders to approximate linear transformations with a chain of low-rank matrix multiplications and optimize self-attention in the reduced dimension.

💬 Research Conclusions:

– Findings demonstrate that LiteASR can compress the encoder size of Whisper large-v3 by over 50% while enhancing transcription accuracy, achieving a new efficient and performance-optimal balance. The code for LiteASR is made publicly available.

👉 Paper link: https://huggingface.co/papers/2502.20583

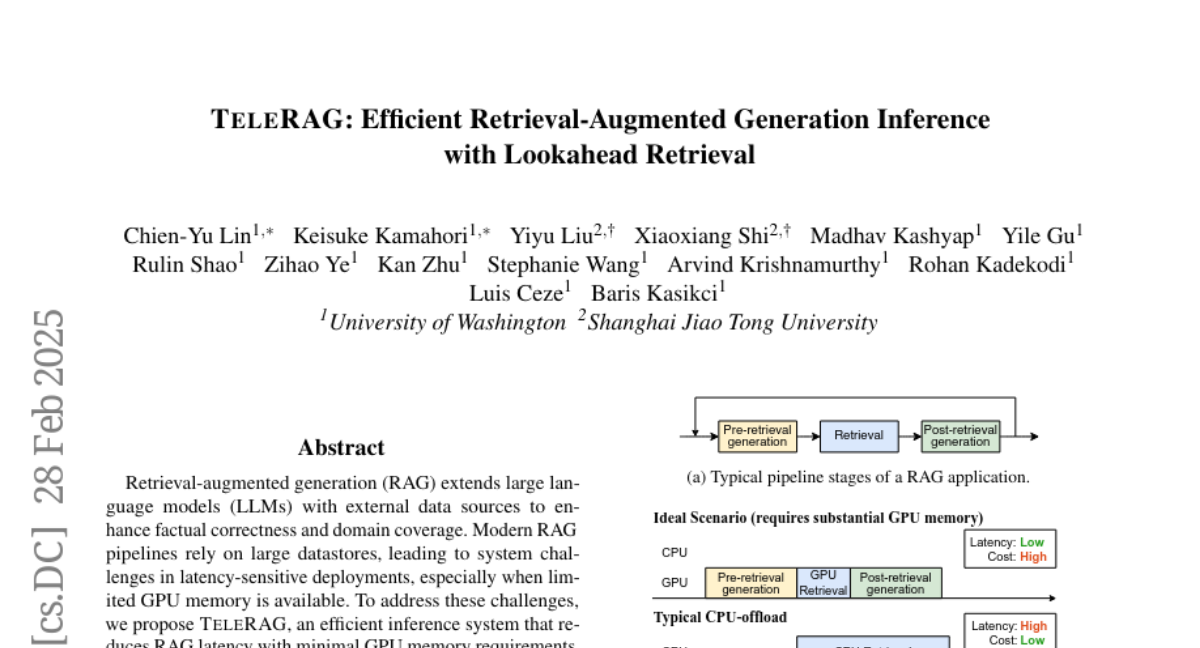

10. TeleRAG: Efficient Retrieval-Augmented Generation Inference with Lookahead Retrieval

🔑 Keywords: Retrieval-augmented generation, Large language models, Inference latency, GPU memory, Lookahead retrieval

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Introduce TeleRAG, a system designed to minimize inference latency and GPU memory usage in Retrieval-augmented generation (RAG) systems.

🛠️ Research Methods:

– Implement a lookahead retrieval mechanism that prefetches data from CPU to GPU, leveraging the modularity of RAG pipelines and the inverted file index (IVF) search algorithm.

💬 Research Conclusions:

– TeleRAG achieves up to 1.72x reduction in end-to-end inference latency, facilitating faster and more memory-efficient deployment of RAG applications compared to current systems.

👉 Paper link: https://huggingface.co/papers/2502.20969

11. Optimal Brain Apoptosis

🔑 Keywords: Pruning, Convolutional Neural Networks, Transformers, Optimal Brain Apoptosis, Hessian Matrix

💡 Category: Machine Learning

🌟 Research Objective:

– To enhance computational efficiency in CNNs and Transformers through an advanced pruning method.

🛠️ Research Methods:

– Introduced Optimal Brain Apoptosis, a pruning method using direct Hessian-vector product calculations.

– Decomposed the Hessian matrix across network layers for efficient computation of second-order Taylor expansion.

💬 Research Conclusions:

– The proposed pruning method, OBA, allows for precise optimization in CNNs and Transformers, as demonstrated in experiments with VGG19, ResNet32, ResNet50, and ViT-B/16 on CIFAR10, CIFAR100, and Imagenet.

👉 Paper link: https://huggingface.co/papers/2502.17941

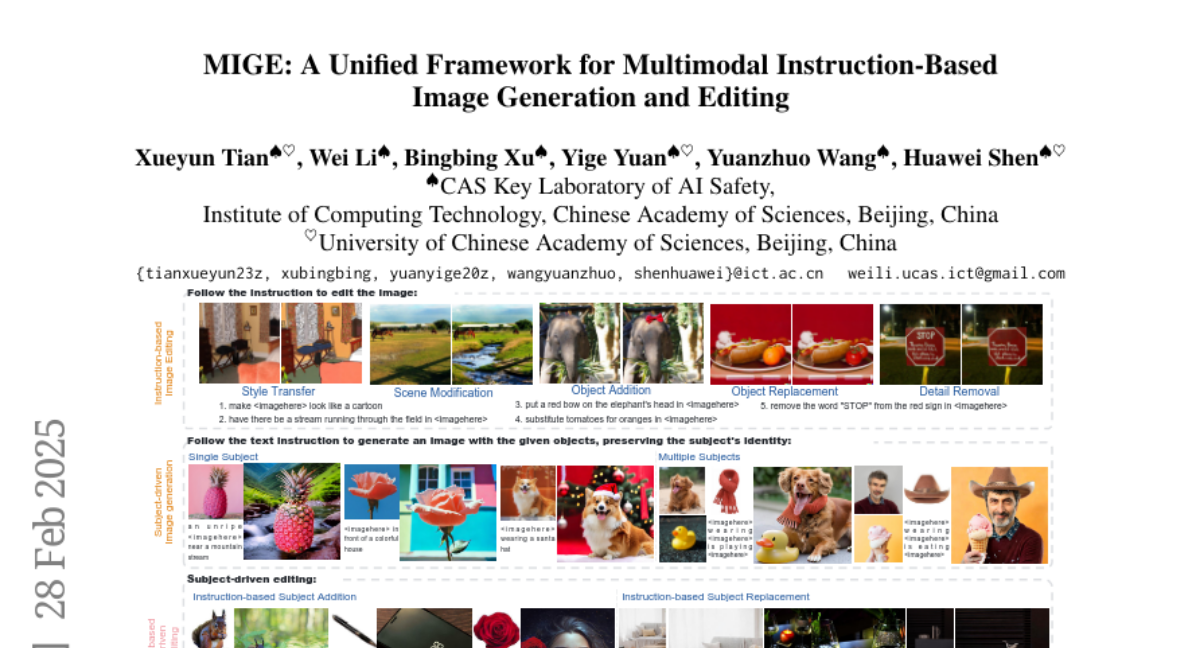

12. MIGE: A Unified Framework for Multimodal Instruction-Based Image Generation and Editing

🔑 Keywords: Diffusion-based image generation, Subject-driven generation, Instruction-based editing, Multimodal instructions, Cross-Task Enhancement

💡 Category: Generative Models

🌟 Research Objective:

– The research aims to address challenges in subject-driven generation and instruction-based editing by proposing MIGE, a unified framework that standardizes task representations through multimodal instructions.

🛠️ Research Methods:

– MIGE uses a novel multimodal encoder to map instructions into a unified vision-language space, integrating visual and semantic features, enabling joint training for better instruction adherence and visual consistency.

💬 Research Conclusions:

– Experiments demonstrate that MIGE excels in subject-driven generation and instruction-based editing, setting a state-of-the-art in instruction-based subject-driven editing while enabling cross-task knowledge transfer for generalization to novel compositional tasks.

👉 Paper link: https://huggingface.co/papers/2502.21291

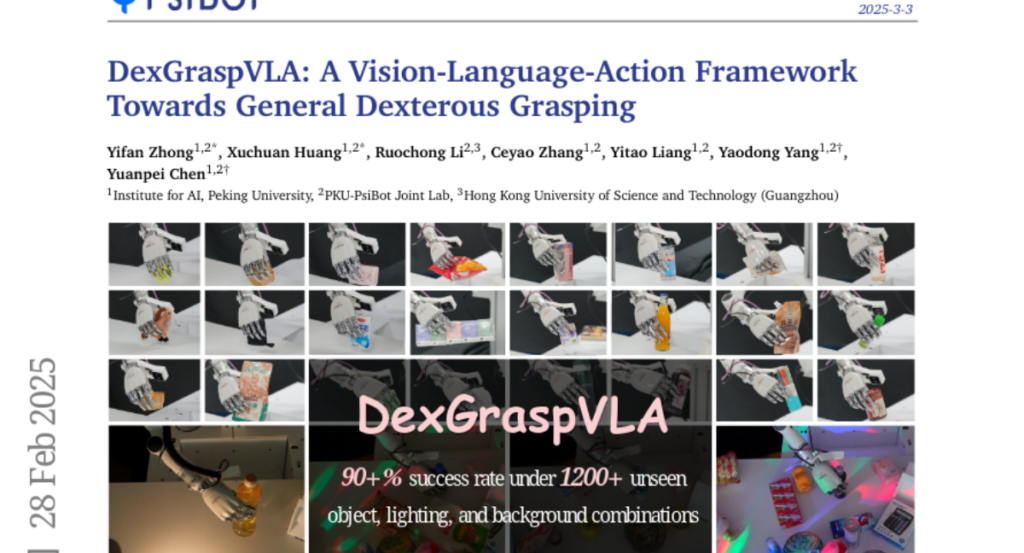

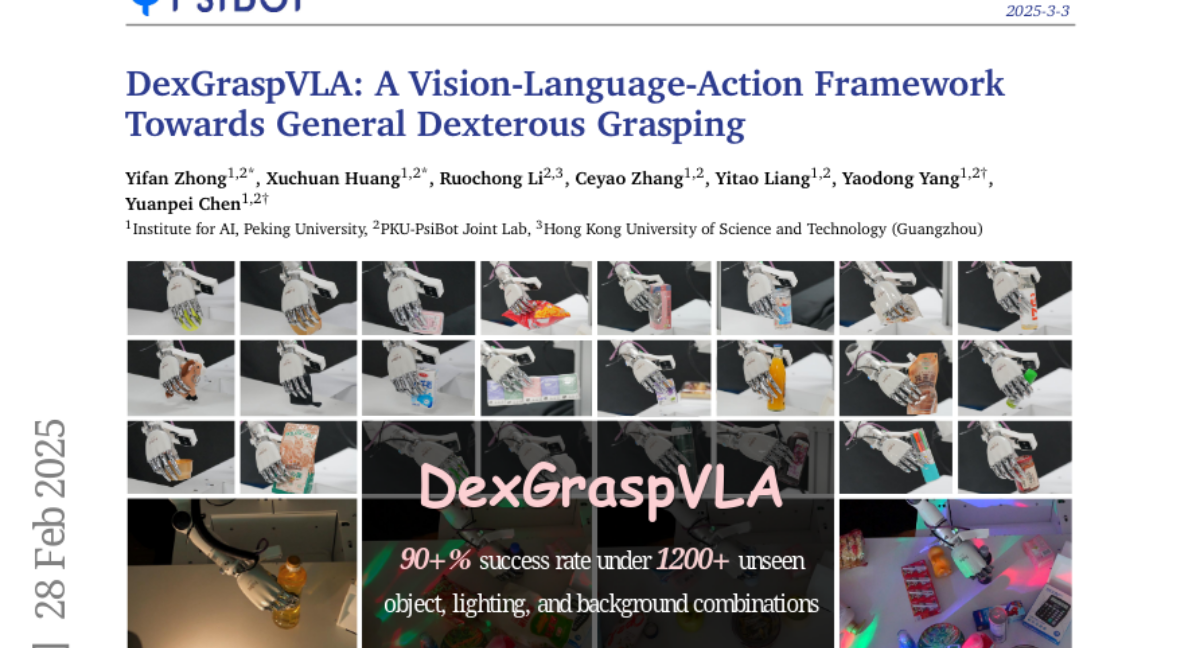

13. DexGraspVLA: A Vision-Language-Action Framework Towards General Dexterous Grasping

🔑 Keywords: Dexterous Grasping, Vision-Language Model, Zero-Shot, Diffusion-based Policy, Imitation Learning

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To develop a general-purpose robotic grasping framework capable of handling diverse objects in arbitrary scenarios with high success rates.

🛠️ Research Methods:

– Introduced DexGraspVLA, a hierarchical framework leveraging a pre-trained Vision-Language model for high-level task planning and a diffusion-based policy for low-level action control.

– Utilized imitation learning to enhance domain-invariant representations and improve generalization across variations in environments.

💬 Research Conclusions:

– The proposed method achieved over 90% success rate in grasping tasks across thousands of unseen combinations of objects, lighting, and background in a zero-shot setting.

– Empirical analyses confirmed consistent internal model behavior, supporting the robust generalization performance observed in diverse real-world scenarios.

👉 Paper link: https://huggingface.co/papers/2502.20900

14. Preference Learning Unlocks LLMs’ Psycho-Counseling Skills

🔑 Keywords: Large Language Models, Psycho-Counseling, Privacy Concerns, Professional Principles, Preference Dataset

💡 Category: AI in Healthcare

🌟 Research Objective:

– To bridge the gap between patient needs and mental health support using large language models (LLMs) by addressing the challenges of inconsistent response quality and privacy concerns.

🛠️ Research Methods:

– Development of a set of professional and comprehensive principles to evaluate therapists’ responses.

– Creation of a dataset called PsychoCounsel-Preference with 36k high-quality preference comparison pairs, aligning with professional psychotherapists’ preferences.

💬 Research Conclusions:

– PsychoCounsel-Preference serves as a robust resource for LLMs to improve their skills in psycho-counseling.

– The model PsychoCounsel-Llama3-8B shows a high win rate against GPT-4o, indicating its efficacy in providing quality counseling responses.

– Release of the dataset and models aims to advance research in applying LLMs to psycho-counseling.

👉 Paper link: https://huggingface.co/papers/2502.19731

15. LettuceDetect: A Hallucination Detection Framework for RAG Applications

🔑 Keywords: Hallucinated Answers, Retrieval Augmented Generation (RAG), ModernBERT, RAGTruth

💡 Category: Natural Language Processing

🌟 Research Objective:

– The research aims to address limitations in hallucination detection within Retrieval Augmented Generation systems by overcoming context window constraints and improving computational efficiency.

🛠️ Research Methods:

– The LettuceDetect framework uses a token-classification model for context-question-answer triples, built on ModernBERT and trained on the RAGTruth benchmark dataset.

💬 Research Conclusions:

– LettuceDetect outperforms previous encoder-based and most prompt-based models, achieving a 14.8% improvement in F1 score over existing solutions while being more computationally efficient.

👉 Paper link: https://huggingface.co/papers/2502.17125

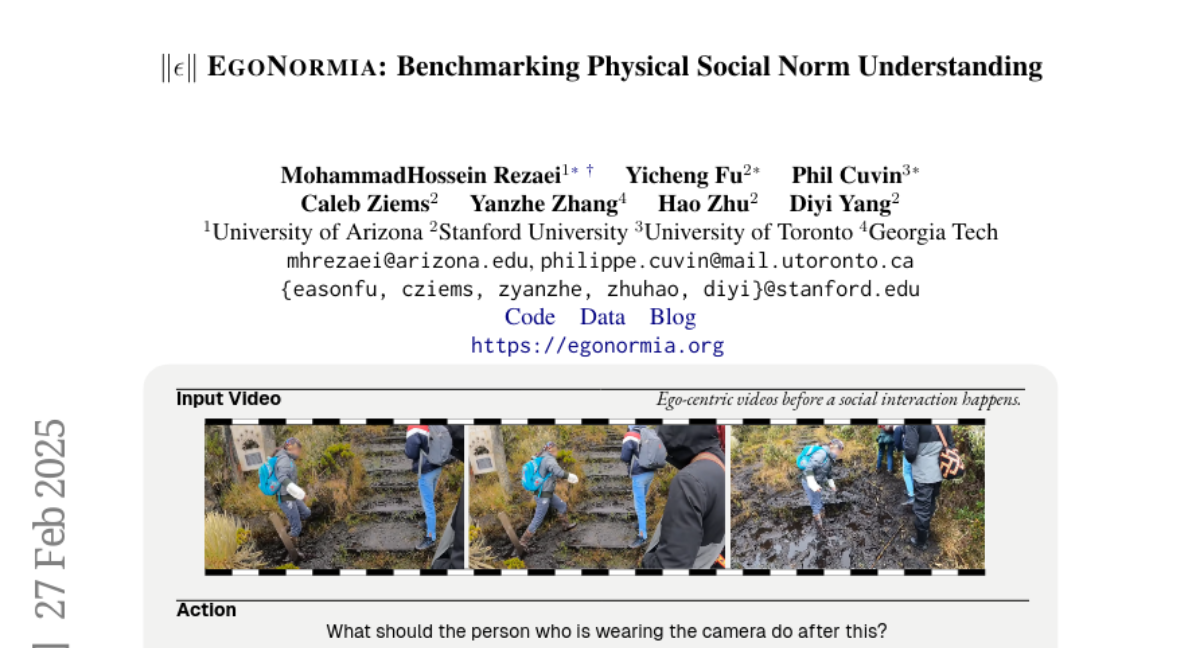

16. EgoNormia: Benchmarking Physical Social Norm Understanding

🔑 Keywords: Normative Reasoning, Vision-Language Models, AI Ethics, Human-AI Interaction

💡 Category: Human-AI Interaction

🌟 Research Objective:

– The study aims to improve and evaluate normative reasoning capability in vision-language models (VLMs) using a novel dataset, EgoNormia |epsilon|.

🛠️ Research Methods:

– A novel pipeline leveraging video sampling, automatic answer generation, and human validation was utilized to compile a dataset featuring 1,853 ego-centric videos, each with questions on prediction and justification of normative actions.

💬 Research Conclusions:

– Current state-of-the-art VLMs exhibit inadequate norm understanding, with a performance significantly lower than human benchmarks, highlighting risks in safety, privacy, and collaboration when applied in real-world scenarios. The research also suggests that a retrieval-based generation method has potential to enhance normative reasoning in VLMs.

👉 Paper link: https://huggingface.co/papers/2502.20490

17. HAIC: Improving Human Action Understanding and Generation with Better Captions for Multi-modal Large Language Models

🔑 Keywords: Multi-modal Large Language Models, Video Understanding, Human Actions, Data Annotation, Datasets

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The research aims to enhance video understanding, especially in scenarios involving human actions, by overcoming the limitations caused by the lack of high-quality data.

🛠️ Research Methods:

– A two-stage data annotation pipeline was developed, involving the collection of videos with clear human actions and annotating these in a standardized caption format to detail individual actions and interactions.

💬 Research Conclusions:

– The newly curated datasets, HAICTrain and HAICBench, significantly improve human action understanding abilities across benchmarks and enhance text-to-video generation results.

👉 Paper link: https://huggingface.co/papers/2502.20811