AI Native Daily Paper Digest – 20250819

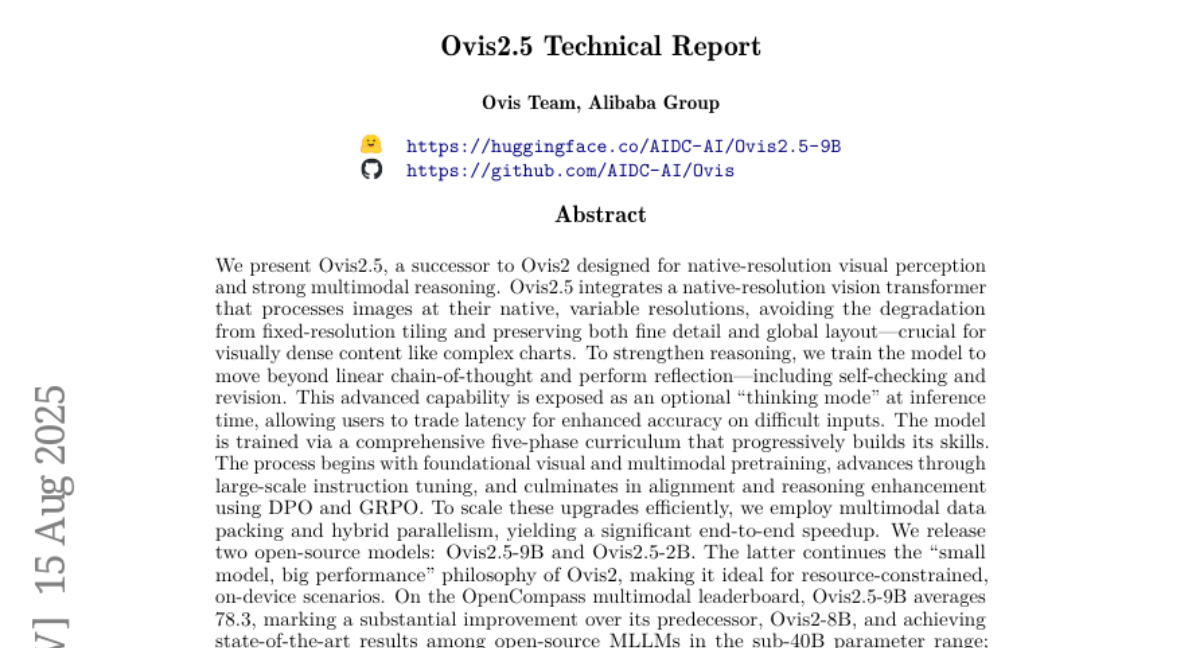

1. Ovis2.5 Technical Report

🔑 Keywords: AI Native, Vision Transformer, Multimodal Reasoning, Native-Resolution, Thinking Mode

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Present Ovis2.5 as a vision transformer with native-resolution capabilities and multimodal reasoning to achieve state-of-the-art performance.

🛠️ Research Methods:

– Integration of native-resolution processing and multimodal reasoning.

– Advanced training techniques using a five-phase curriculum, including multimodal data packing and hybrid parallelism.

💬 Research Conclusions:

– Ovis2.5 achieves significant performance improvements over its predecessor and sets new benchmarks in open-source MLLMs for its size, excelling in complex chart analysis and various STEM benchmarks.

👉 Paper link: https://huggingface.co/papers/2508.11737

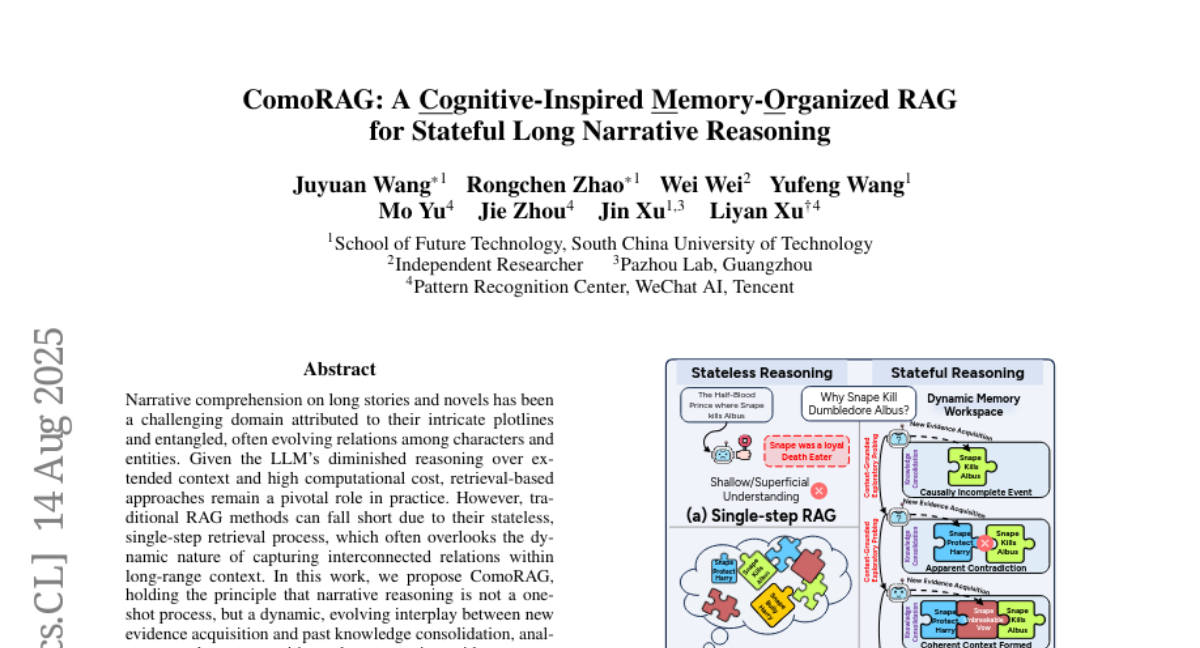

2. ComoRAG: A Cognitive-Inspired Memory-Organized RAG for Stateful Long Narrative Reasoning

🔑 Keywords: ComoRAG, retrieval-based approaches, narrative comprehension, dynamic memory workspace, probing queries

💡 Category: Natural Language Processing

🌟 Research Objective:

– Enhance long-context narrative comprehension by improving upon traditional RAG methods using iterative retrieval and dynamic memory updates.

🛠️ Research Methods:

– Develop ComoRAG, which utilizes iterative reasoning cycles to generate probing queries and integrate new evidence into a global memory pool for improved context comprehension.

💬 Research Conclusions:

– ComoRAG achieves substantial performance improvements, showing up to 11% gains over strong RAG baselines, particularly in handling complex queries requiring stateful reasoning.

👉 Paper link: https://huggingface.co/papers/2508.10419

3. 4DNeX: Feed-Forward 4D Generative Modeling Made Easy

🔑 Keywords: 4DNeX, video diffusion model, dynamic 3D scene, 4D data, novel-view video synthesis

💡 Category: Generative Models

🌟 Research Objective:

– To develop 4DNeX, a feed-forward framework for generating dynamic 3D scene representations from a single image efficiently and generally.

🛠️ Research Methods:

– Fine-tuning a pretrained video diffusion model.

– Creating a large-scale 4DNeX-10M dataset with advanced reconstruction.

– Introducing a unified 6D video representation to model RGB and XYZ sequences.

– Proposing adaptation strategies for pretrained video diffusion models.

💬 Research Conclusions:

– 4DNeX efficiently produces high-quality dynamic point clouds and novel-view video synthesis, outperforming existing methods in scalability and generalizability for image-to-4D modeling.

👉 Paper link: https://huggingface.co/papers/2508.13154