AI Native Daily Paper Digest – 20250822

1. Intern-S1: A Scientific Multimodal Foundation Model

🔑 Keywords: Multimodal Mixture-of-Experts, reinforcement learning, scientific domains, open-source models, Artificial General Intelligence

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Intern-S1 aims to bridge the performance gap between open-source and closed-source models in challenging scientific fields to advance towards Artificial General Intelligence.

🛠️ Research Methods:

– Utilizes a multimodal Mixture-of-Experts model with 28 billion activated parameters, pre-trained on 5 trillion tokens, followed by offline and online reinforcement learning, leveraging a Mixture-of-Rewards strategy.

💬 Research Conclusions:

– Intern-S1 demonstrates top-tier performance in general reasoning and significantly outperforms both open-source and closed-source models in scientific tasks, indicating substantial improvements in professional applications such as molecular synthesis and thermodynamic stability prediction.

👉 Paper link: https://huggingface.co/papers/2508.15763

2. Mobile-Agent-v3: Foundamental Agents for GUI Automation

🔑 Keywords: GUI-Owl, Mobile-Agent-v3, Scalable Reinforcement Learning, State-of-the-art, GUI agent

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Introduce GUI-Owl, a foundational GUI agent model, achieving state-of-the-art performance across multiple GUI benchmarks in desktop and mobile environments.

🛠️ Research Methods:

– Developed a cloud-based virtual environment infrastructure enabling the Self-Evolving GUI Trajectory Production framework.

– Integrated diverse foundational agent capabilities for end-to-end decision-making.

– Implemented a scalable reinforcement learning framework with asynchronous training and Trajectory-aware Relative Policy Optimization (TRPO).

💬 Research Conclusions:

– GUI-Owl and Mobile-Agent-v3 achieve leading performance, noted as a new state-of-the-art framework for open-source GUI agents.

– Enhancements achieved include improved self-improving loops and reductions in manual annotation efforts.

👉 Paper link: https://huggingface.co/papers/2508.15144

3. Deep Think with Confidence

🔑 Keywords: DeepConf, model-internal confidence signals, reasoning efficiency, token generation

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– Introduce Deep Think with Confidence (DeepConf) to improve reasoning efficiency and performance in large language models.

🛠️ Research Methods:

– Utilize model-internal confidence signals to dynamically filter low-quality reasoning traces without additional training or hyperparameter tuning.

💬 Research Conclusions:

– DeepConf achieves up to 99.9% accuracy and reduces generated tokens by up to 84.7% compared to traditional methods on benchmarks like AIME 2025.

👉 Paper link: https://huggingface.co/papers/2508.15260

4. LiveMCP-101: Stress Testing and Diagnosing MCP-enabled Agents on Challenging Queries

🔑 Keywords: LiveMCP-101, AI agents, tool orchestration, Model Context Protocol, token usage

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The study aims to benchmark AI agents’ ability to use multiple tools in real-world scenarios, highlighting the gaps in tool orchestration and token usage efficiency.

🛠️ Research Methods:

– Introduction of LiveMCP-101, a benchmark consisting of 101 real-world queries requiring coordinated use of multiple MCP tools through iterative LLM rewriting and manual review. A novel evaluation approach using ground-truth execution plans is also introduced.

💬 Research Conclusions:

– Experimentation reveals even advanced LLMs show a success rate below 60%, underscoring significant challenges in tool orchestration. Detailed analyses identify distinct failure modes and highlight areas for improvement in current AI models.

👉 Paper link: https://huggingface.co/papers/2508.15760

5. Waver: Wave Your Way to Lifelike Video Generation

🔑 Keywords: Waver, Hybrid Stream DiT, AI-generated summary, T2V, I2V

💡 Category: Generative Models

🌟 Research Objective:

– To develop Waver, a high-performance foundation model for generating high-quality videos and images using a unified framework.

🛠️ Research Methods:

– Introduction of a Hybrid Stream DiT architecture for enhancing modality alignment and accelerating training convergence.

– Establishment of a comprehensive data curation pipeline and an MLLM-based video quality model for selecting high-quality samples.

💬 Research Conclusions:

– Waver excels in text-to-video, image-to-video, and text-to-image generation, ranking among the Top 3 on T2V and I2V leaderboards, surpassing state-of-the-art models and solutions.

👉 Paper link: https://huggingface.co/papers/2508.15761

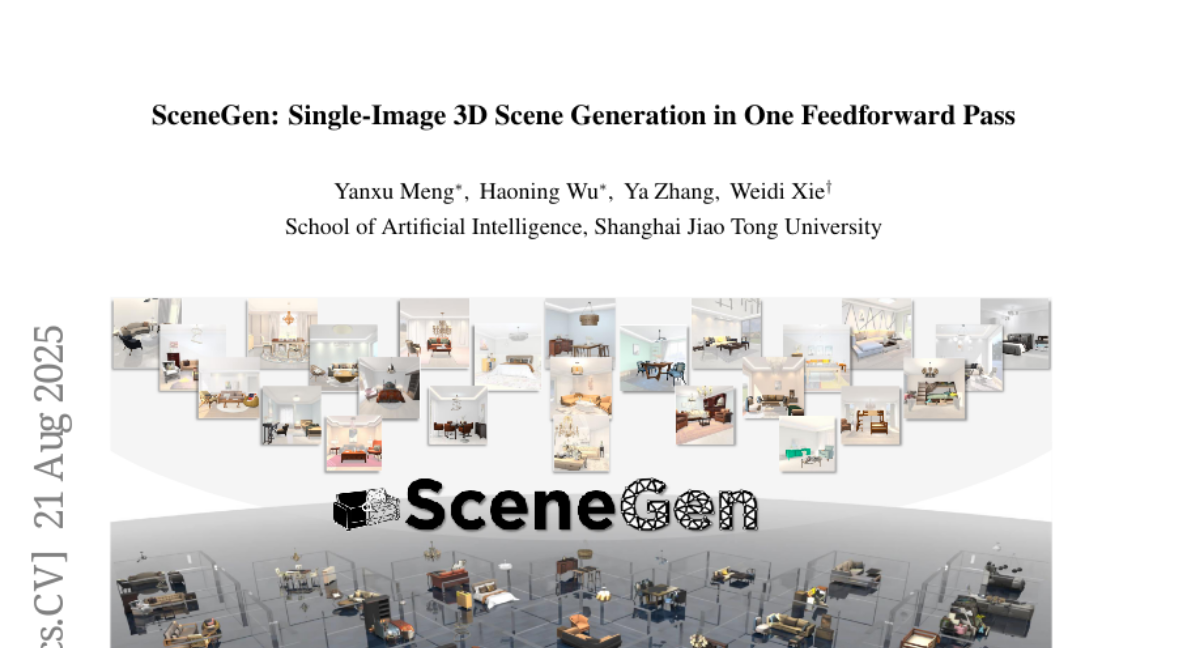

6. SceneGen: Single-Image 3D Scene Generation in One Feedforward Pass

🔑 Keywords: 3D content generation, VR/AR, embodied AI, SceneGen, feature aggregation module

💡 Category: Generative Models

🌟 Research Objective:

– To develop SceneGen, a framework that generates multiple 3D assets from a single scene image, leveraging local and global scene information.

🛠️ Research Methods:

– Introducing a novel feature aggregation module integrating scene information from visual and geometric encoders to produce 3D assets without optimization or asset retrieval.

– Implementing a position head that determines relative spatial positions of 3D assets in one feedforward pass.

– Showcasing the framework’s extensibility to scenarios with multi-image inputs despite being trained on single-image data.

💬 Research Conclusions:

– SceneGen demonstrates efficient and robust 3D content generation, validated through extensive quantitative and qualitative evaluations, paving the way for advancements in applications like VR/AR.

👉 Paper link: https://huggingface.co/papers/2508.15769

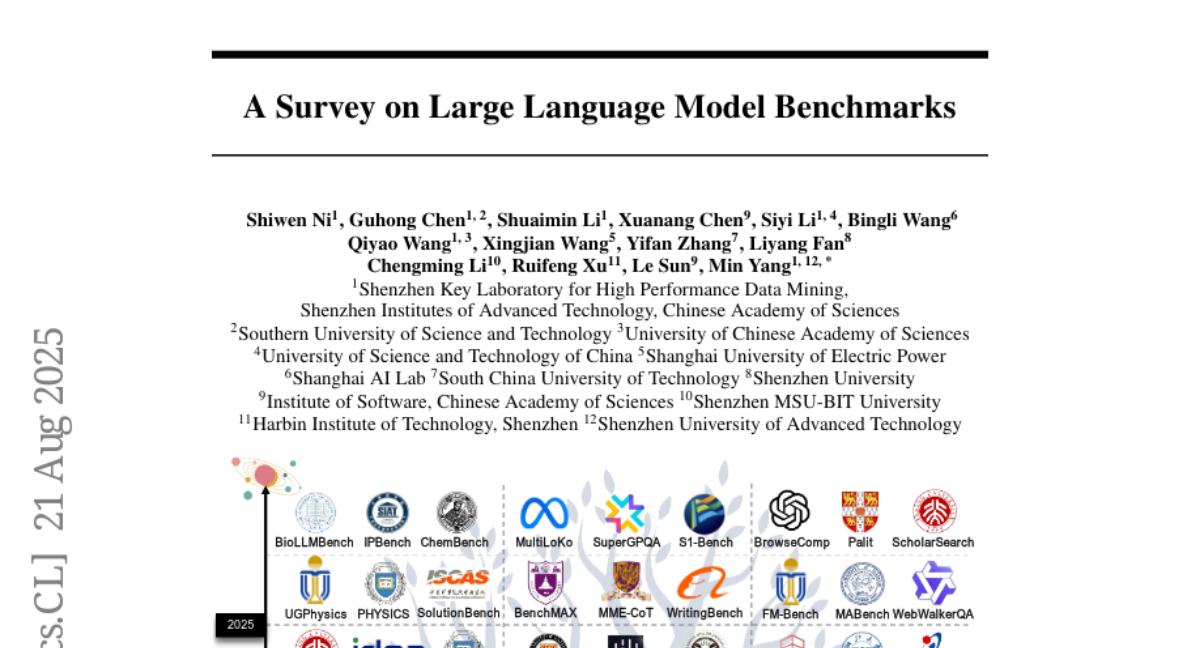

7. A Survey on Large Language Model Benchmarks

🔑 Keywords: large language models, evaluation benchmarks, data contamination, cultural biases, process credibility

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper aims to systematically review and categorize the current status of large language model benchmarks to improve their effectiveness.

🛠️ Research Methods:

– A systematic review categorizing 283 benchmarks into general capabilities, domain-specific, and target-specific categories.

💬 Research Conclusions:

– The study identifies issues like data contamination, cultural biases, and lack of process credibility in benchmarks, proposing a design paradigm for future improvements.

👉 Paper link: https://huggingface.co/papers/2508.15361

8. Visual Autoregressive Modeling for Instruction-Guided Image Editing

🔑 Keywords: Visual autoregressive, Scale-Aligned Reference, Image editing, Diffusion models

💡 Category: Generative Models

🌟 Research Objective:

– Introduce VAREdit, a visual autoregressive framework, for improved image editing adherence and efficiency.

🛠️ Research Methods:

– Utilize a Scale-Aligned Reference module to condition source image tokens for multi-scale target feature generation.

– Reframe image editing as a next-scale prediction problem.

💬 Research Conclusions:

– VAREdit excels in both adherence and efficiency, outperforming diffusion-based methods by over 30% in GPT-Balance score.

– It achieves faster editing completion, specifically completing a 512×512 editing in 1.2 seconds, which is 2.2 times faster than UltraEdit.

👉 Paper link: https://huggingface.co/papers/2508.15772

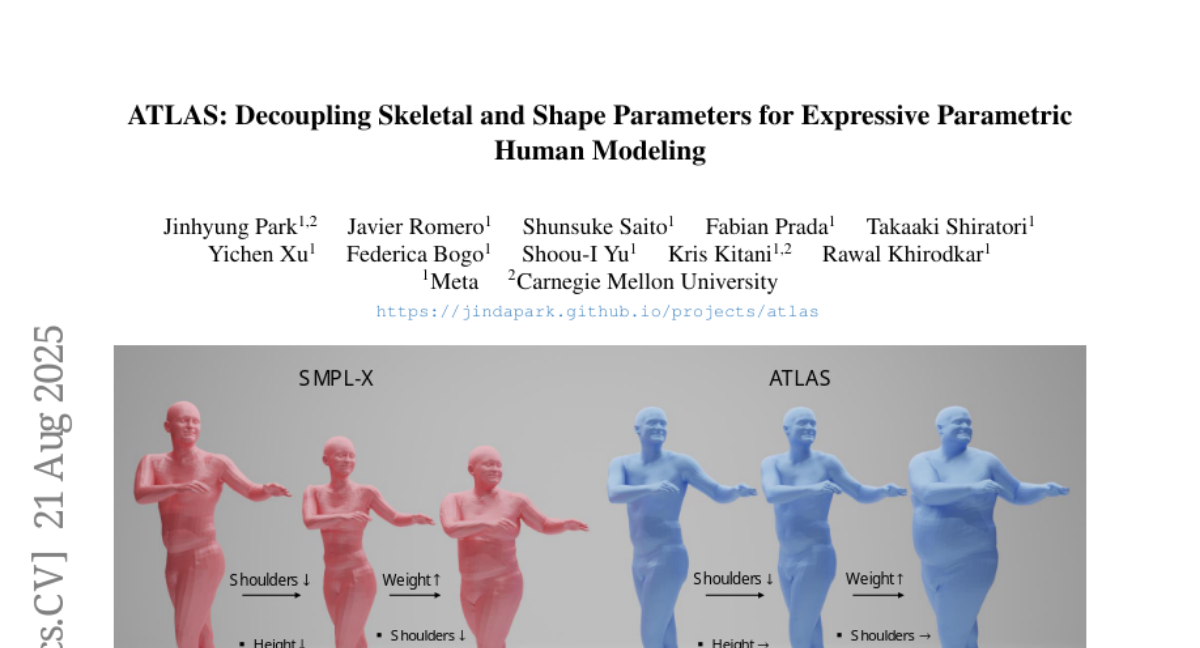

9. ATLAS: Decoupling Skeletal and Shape Parameters for Expressive Parametric Human Modeling

🔑 Keywords: ATLAS, 3D representation, decoupling, shape expressivity, non-linear pose correctives

💡 Category: Computer Vision

🌟 Research Objective:

– The study aims to improve pose accuracy and shape expressivity of parametric body models by decoupling shape and skeleton bases using the ATLAS model.

🛠️ Research Methods:

– Utilized 600k high-resolution scans captured by 240 synchronized cameras to create a high-fidelity body model.

– Focused on decoupling shape and skeleton bases, grounded in the human skeleton, for enhanced expressivity and customization.

💬 Research Conclusions:

– ATLAS outperforms existing models by accurately fitting unseen subjects in diverse poses.

– The non-linear pose correctives in ATLAS capture complex poses more effectively than linear models.

👉 Paper link: https://huggingface.co/papers/2508.15767

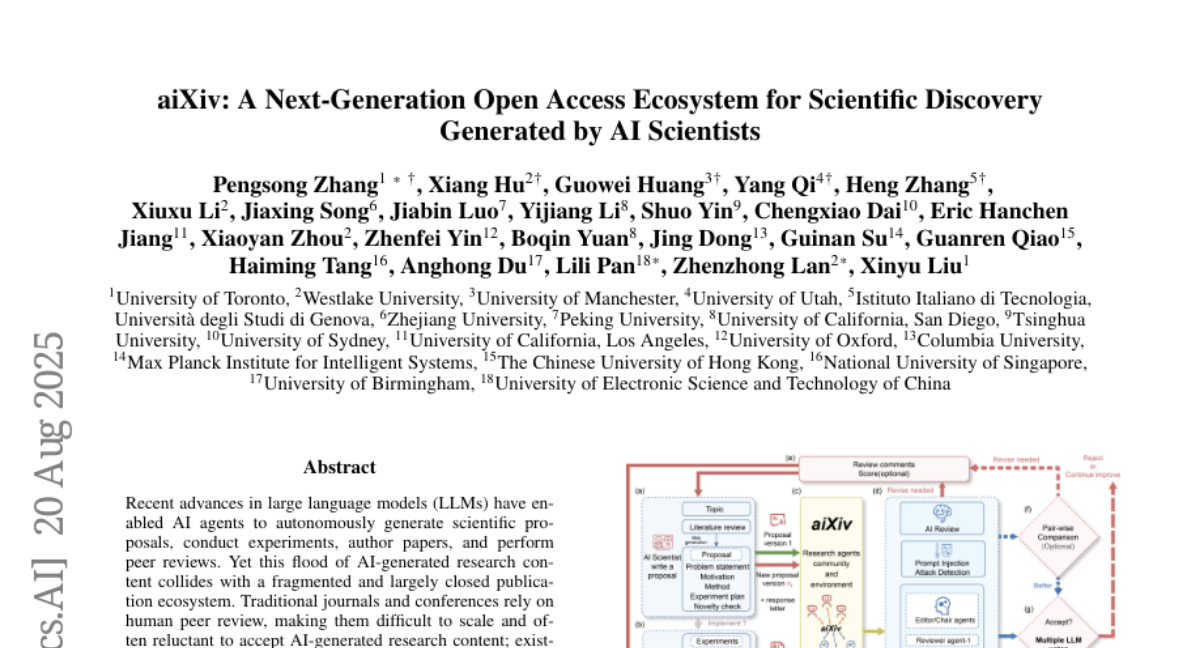

10. aiXiv: A Next-Generation Open Access Ecosystem for Scientific Discovery Generated by AI Scientists

🔑 Keywords: AI-generated research, AI Native, open-access platform, multi-agent architecture, autonomous scientific discovery

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Introduce aiXiv, an open-access platform designed to enhance the submission, review, and dissemination process of AI-generated research by using a multi-agent architecture that integrates both human and AI scientists.

🛠️ Research Methods:

– Extensive experiments demonstrating the platform’s capability in improving the quality of AI-generated proposals and papers through iterative revision and reviewing by both human and AI agents.

💬 Research Conclusions:

– aiXiv creates a scalable ecosystem facilitating high-quality AI research dissemination, addressing the gaps in current publishing systems that hinder AI-generated content’s potential to advance scientific progress.

👉 Paper link: https://huggingface.co/papers/2508.15126

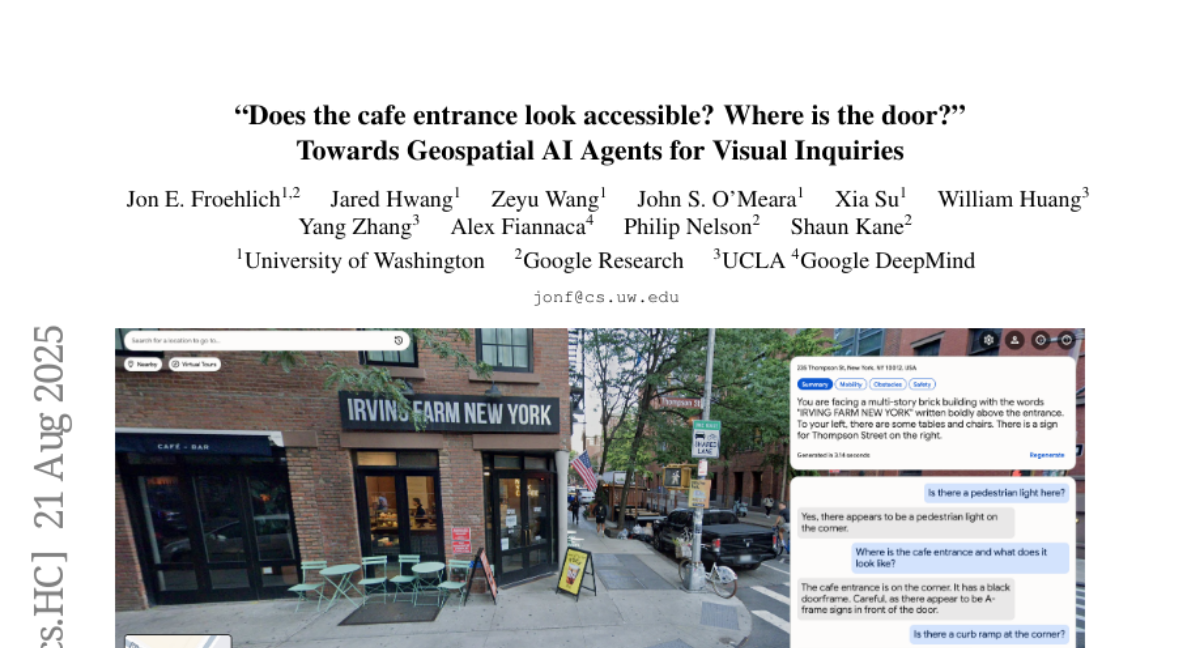

11. “Does the cafe entrance look accessible? Where is the door?” Towards Geospatial AI Agents for Visual Inquiries

🔑 Keywords: Geo-Visual Agents, multimodal AI agents, geospatial images, GIS data

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To introduce and define Geo-Visual Agents, aiming to enable these multimodal AI systems to respond to complex visual-spatial inquiries by integrating geospatial imagery with GIS data.

🛠️ Research Methods:

– Combination of diverse geospatial images, including streetscapes, place-based photos, and aerial imagery, with traditional GIS data sources to enhance visual-spatial inquiry responses.

💬 Research Conclusions:

– Geo-Visual Agents represent a significant advancement in digital maps, highlighting opportunities for future research in overcoming challenges associated with the integration of large-scale geospatial image repositories and GIS data.

👉 Paper link: https://huggingface.co/papers/2508.15752

12. INTIMA: A Benchmark for Human-AI Companionship Behavior

🔑 Keywords: AI companionship, emotional bonds, companionship-reinforcing, boundary-maintaining, user well-being

💡 Category: Human-AI Interaction

🌟 Research Objective:

– The study introduces the Interactions and Machine Attachment Benchmark (INTIMA) to evaluate companionship behaviors in language models.

🛠️ Research Methods:

– Development of a taxonomy with 31 behaviors across four categories using psychological theories and user data. Evaluation of responses to 368 targeted prompts.

💬 Research Conclusions:

– Companionship-reinforcing behaviors are prevalent across all models, but notable differences exist between them. The need for consistent handling of emotionally charged interactions is emphasized to ensure user well-being.

👉 Paper link: https://huggingface.co/papers/2508.09998

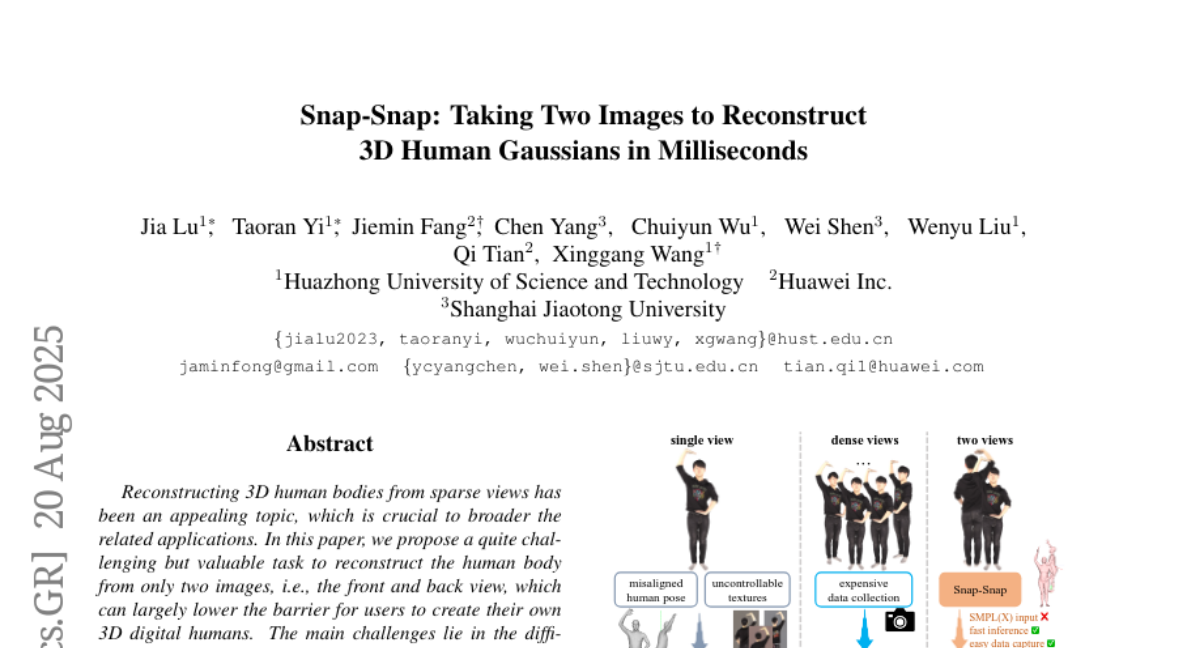

13. Snap-Snap: Taking Two Images to Reconstruct 3D Human Gaussians in Milliseconds

🔑 Keywords: 3D reconstruction, geometry reconstruction model, enhancement algorithm, point clouds, rendering quality

💡 Category: Computer Vision

🌟 Research Objective:

– The research aims to reconstruct 3D human bodies from just two sparse views (front and back), significantly lowering the barrier for users wishing to create 3D digital humans.

🛠️ Research Methods:

– Utilizes a redesigned geometry reconstruction model to predict consistent point clouds from sparse input images.

– Implements an enhancement algorithm to fill in missing color information for improved rendering quality.

💬 Research Conclusions:

– The method demonstrated state-of-the-art performance, reconstructing a full human body in 190 ms using a NVIDIA RTX 4090, and performed robustly even with images from low-cost mobile devices.

👉 Paper link: https://huggingface.co/papers/2508.14892

14. Fin-PRM: A Domain-Specialized Process Reward Model for Financial Reasoning in Large Language Models

🔑 Keywords: Fin-PRM, domain-specialized, financial logic, trajectory-aware, reinforcement learning

💡 Category: AI in Finance

🌟 Research Objective:

– The study introduces Fin-PRM, a specialized reward model designed to enhance the intermediate reasoning capabilities of large language models specifically in the financial domain.

🛠️ Research Methods:

– Fin-PRM employs step-level and trajectory-level supervision to evaluate and enhance reasoning traces in both offline and online reward learning settings, supporting applications such as distillation-based supervised fine-tuning and best-of-N inference at test time.

💬 Research Conclusions:

– Experimental results reveal that Fin-PRM significantly outperforms general-purpose and strong domain-specific baselines in financial reasoning tasks, demonstrating improvements of 12.9% in supervised learning, 5.2% in reinforcement learning, and 5.1% in test-time performance.

👉 Paper link: https://huggingface.co/papers/2508.15202

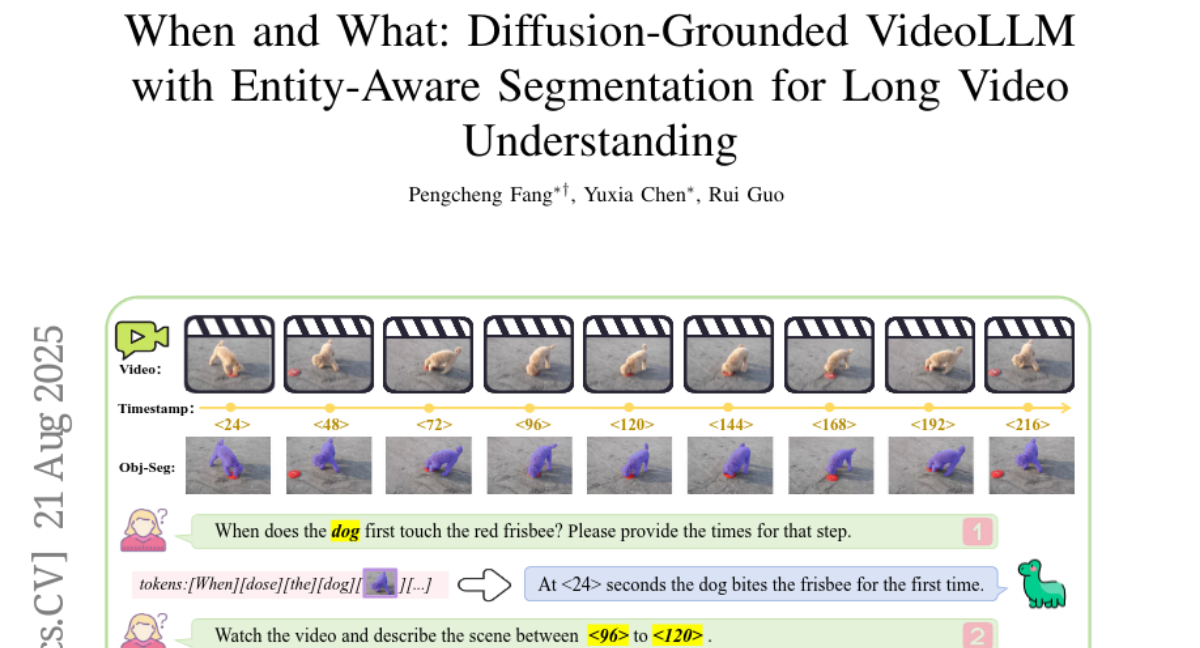

15. When and What: Diffusion-Grounded VideoLLM with Entity Aware Segmentation for Long Video Understanding

🔑 Keywords: Diffusion Temporal Latent encoder, object grounded representations, mixed token scheme, VideoQA

💡 Category: Computer Vision

🌟 Research Objective:

– To address limitations in temporal perception and entity interaction in Video LLMs for VideoQA benchmarks.

🛠️ Research Methods:

– Introduction of a Diffusion Temporal Latent (DTL) encoder for boundary sensitivity and temporal consistency.

– Use of object grounded representations to strengthen alignment between query entities and visual evidence.

– Implementation of a mixed token scheme for explicit timestamp modeling to enhance temporal reasoning.

💬 Research Conclusions:

– Grounded VideoDiT achieves state-of-the-art results in VideoQA tasks, validated by its performance on Charades STA, NExT GQA, and various VideoQA benchmarks.

👉 Paper link: https://huggingface.co/papers/2508.15641

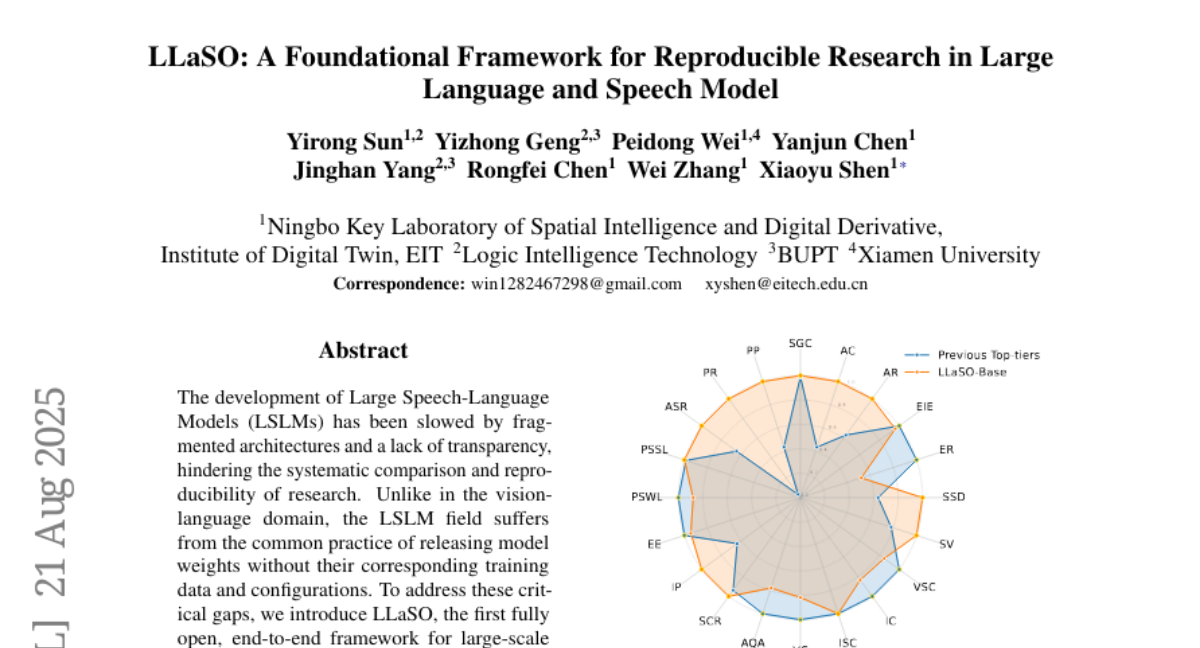

16. LLaSO: A Foundational Framework for Reproducible Research in Large Language and Speech Model

🔑 Keywords: LLaSO, Large Speech-Language Models, speech-text alignment, instruction-tuning, reproducible benchmark

💡 Category: Natural Language Processing

🌟 Research Objective:

– To introduce LLaSO, an open framework aimed at addressing gaps in large-scale speech-language modeling by providing alignment data, instruction-tuning datasets, and evaluation benchmarks.

🛠️ Research Methods:

– Developed and released LLaSO-Align for speech-text alignment, LLaSO-Instruct for multi-task instruction-tuning, and LLaSO-Eval for standardized evaluation.

– Built and released LLaSO-Base, a 3.8B-parameter model trained on public data.

💬 Research Conclusions:

– Established a strong, reproducible baseline with LLaSO-Base, which surpasses comparable models with a normalized score of 0.72.

– Highlighted ongoing generalization challenges, particularly in pure audio tasks, despite broader training coverage.

– Positioned LLaSO as a foundational open standard to unify and accelerate research in LSLMs.

👉 Paper link: https://huggingface.co/papers/2508.15418

17.