AI Native Daily Paper Digest – 20260420

1. Elucidating the SNR-t Bias of Diffusion Probabilistic Models

🔑 Keywords: Diffusion Probabilistic Models, SNR-timestep bias, differential correction, frequency components, generation quality

💡 Category: Generative Models

🌟 Research Objective:

– Address the Signal-to-Noise Ratio-timestep (SNR-t) bias in diffusion probabilistic models that affects generation quality.

🛠️ Research Methods:

– Proposed a differential correction method that processes frequency components separately in samples to mitigate SNR-t bias.

💬 Research Conclusions:

– The differential correction method significantly enhances the generation quality of multiple diffusion models across datasets with minimal computational impact.

👉 Paper link: https://huggingface.co/papers/2604.16044

2. PersonaVLM: Long-Term Personalized Multimodal LLMs

🔑 Keywords: PersonaVLM, Multimodal Language Models, Long-term Personalization, Multi-turn Reasoning, Response Alignment

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The paper introduces PersonaVLM, a novel multimodal language model framework designed to achieve long-term personalization, enhancing user interactions through memory retention, multi-turn reasoning, and response alignment.

🛠️ Research Methods:

– The framework transforms existing multimodal large language models into personalized assistants by integrating capabilities like remembering chronological multimodal memories, conducting multi-turn reasoning, and ensuring response alignment with the user’s evolving personality.

💬 Research Conclusions:

– Extensive evaluations using the Persona-MME benchmark demonstrate the framework’s effectiveness, showing a significant improvement in long-term personalization tasks and outperforming existing models such as GPT-4o.

👉 Paper link: https://huggingface.co/papers/2604.13074

3. Qwen3.5-Omni Technical Report

🔑 Keywords: Qwen3.5-Omni, Audio-Visual Vibe Coding, Hybrid Attention Mixture-of-Experts, ARIA, Multimodal Model

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introducing Qwen3.5-Omni, a multimodal model with advanced audio-visual capabilities and a significant scale of hundreds of billions of parameters.

🛠️ Research Methods:

– Utilizing a Hybrid Attention Mixture-of-Experts framework for efficient long-sequence inference and integrating a massive heterogeneous text-vision dataset.

💬 Research Conclusions:

– Qwen3.5-Omni delivers state-of-the-art results in audio and audio-visual tasks, supports multilingual understanding and speech generation across 10 languages, and introduces Audio-Visual Vibe Coding for advanced interaction.

👉 Paper link: https://huggingface.co/papers/2604.15804

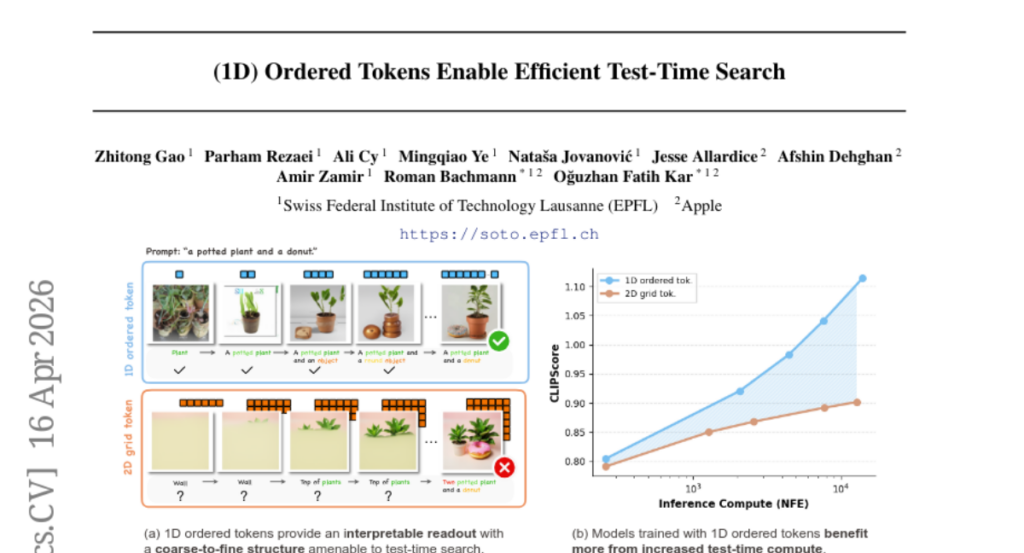

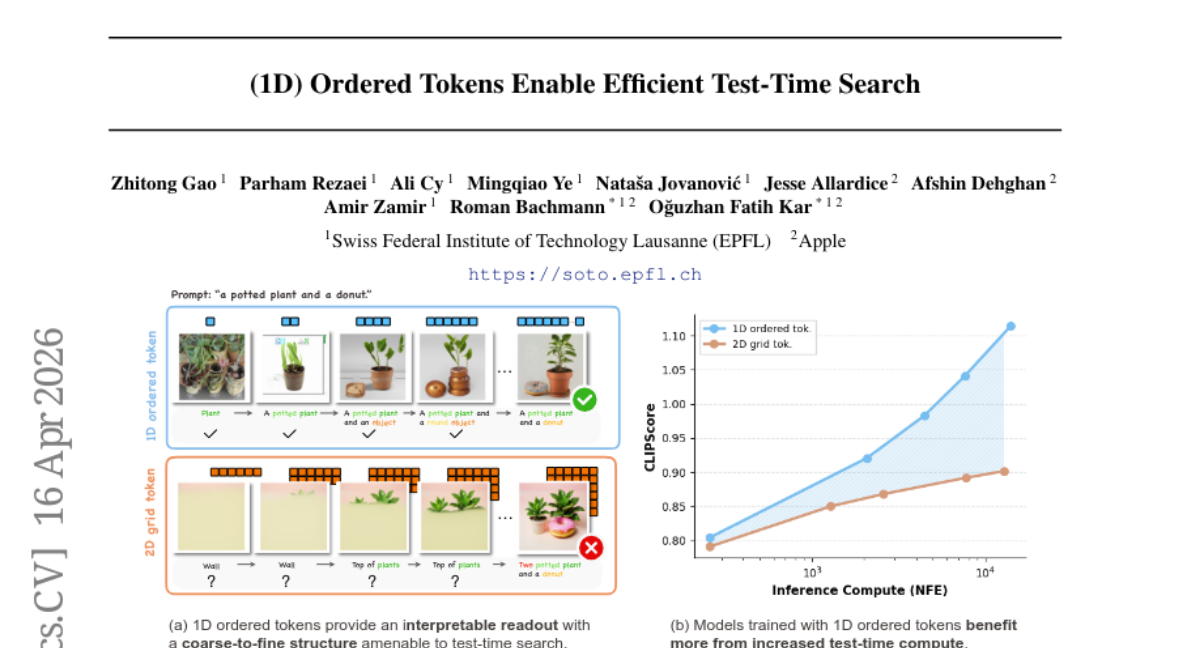

4. (1D) Ordered Tokens Enable Efficient Test-Time Search

🔑 Keywords: Autoregressive models, Coarse-to-fine token structure, Test-time search, Text-to-image generation, Image-text verifier

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to explore the effects of token structures on the steering ability of Autoregressive models through test-time search, with a focus on the text-to-image generation process.

🛠️ Research Methods:

– Conducted controlled experiments comparing autoregressive models trained on coarse-to-fine ordered tokens with classical 2D grid-based counterparts.

– Analyzed the performance of various classical search algorithms (best-of-N, beam search, lookahead search) with different token structures and their interaction with image-text verifiers and AR priors.

💬 Research Conclusions:

– Coarse-to-fine ordered token structures enhance test-time scaling behavior in AR models compared to grid-based structures.

– It’s possible to achieve training-free text-to-image generation through test-time search over token sequences guided by an image-text verifier.

– The study provides insights into the impact of token structures on inference-time scalability and offers practical guidance for improving test-time scaling in AR models.

👉 Paper link: https://huggingface.co/papers/2604.15453

5. Repurposing 3D Generative Model for Autoregressive Layout Generation

🔑 Keywords: 3D layout generation, 3D diffusion model, geometric relations, physical constraints, dual-guidance self-rollout distillation

💡 Category: Generative Models

🌟 Research Objective:

– Introduce LaviGen, a framework for 3D layout generation using an adapted 3D diffusion model with dual-guidance self-rollout distillation to improve efficiency and spatial accuracy.

🛠️ Research Methods:

– Repurpose 3D generative models to operate directly in native 3D space, formulating layout generation as an autoregressive process that models geometric relations and physical constraints among objects.

💬 Research Conclusions:

– LaviGen demonstrates superior 3D layout generation performance with 19% higher physical plausibility and 65% faster computation compared to the state of the art.

👉 Paper link: https://huggingface.co/papers/2604.16299

6. Learning Adaptive Reasoning Paths for Efficient Visual Reasoning

🔑 Keywords: Adaptive visual reasoning, Visual reasoning models, Cross-modal reasoning, Reasoning path redundancy, FS-GRPO

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to address and reduce overthinking in visual reasoning models by introducing an adaptive framework called AVR.

🛠️ Research Methods:

– Use of AVR, which decomposes visual reasoning into visual perception, logical reasoning, and answer application to dynamically select reasoning formats.

– Implementation of FS-GRPO, an adaptation of Group Relative Policy Optimization, to train the model effectively.

💬 Research Conclusions:

– The AVR framework significantly reduces token usage by 50-90% while maintaining overall accuracy, particularly in perception-intensive tasks.

– Demonstrates the efficacy of adaptive visual reasoning in improving efficiency and mitigating overthinking in VRMs.

👉 Paper link: https://huggingface.co/papers/2604.14568

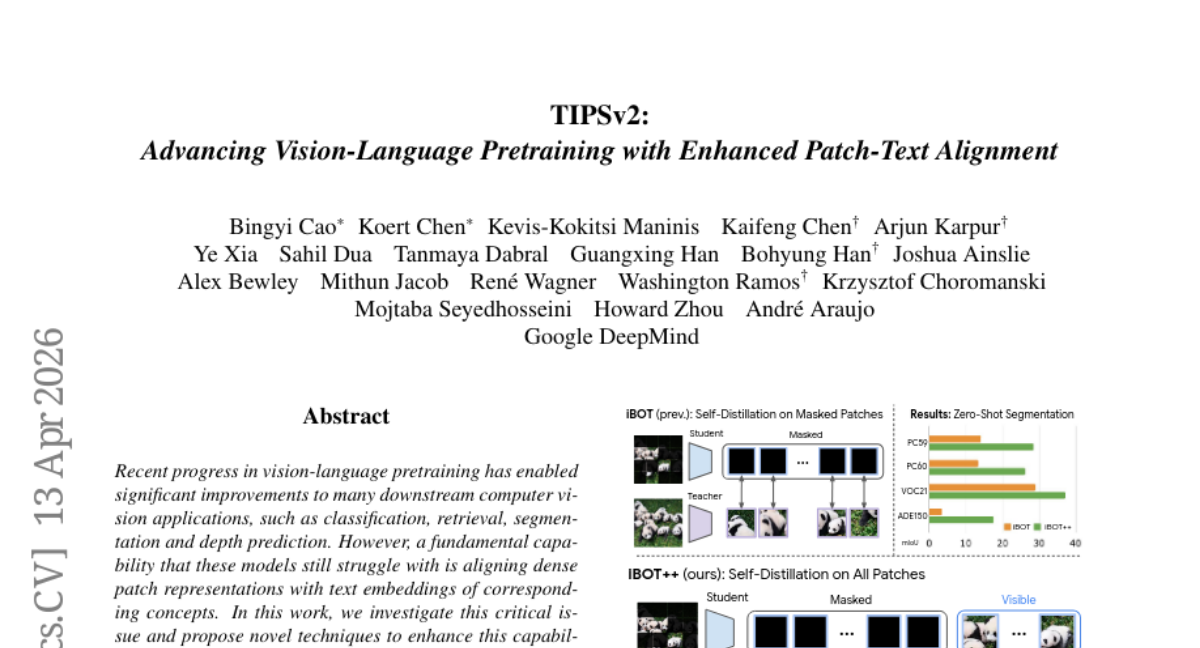

7. TIPSv2: Advancing Vision-Language Pretraining with Enhanced Patch-Text Alignment

🔑 Keywords: vision-language pretraining, dense patch-text alignment, patch-level distillation, TIPSv2, iBOT++

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Enhance dense patch-text alignment in vision-language models by introducing improved pretraining techniques.

🛠️ Research Methods:

– Employed patch-level distillation, modified masked image objectives, and optimized caption sampling strategies to boost model performance.

– Developed iBOT++ and TIPSv2 to improve pretraining efficiency and model alignment capability.

💬 Research Conclusions:

– Demonstrated significant performance improvements across 9 tasks and 20 datasets, showcasing the effectiveness of the proposed methods, with results on par or exceeding recent vision encoder models.

👉 Paper link: https://huggingface.co/papers/2604.12012

8. AccelOpt: A Self-Improving LLM Agentic System for AI Accelerator Kernel Optimization

🔑 Keywords: AccelOpt, AI accelerators, optimization memory, NKIBench, AWS Trainium

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To develop AccelOpt, an autonomous LLM agentic system that optimizes kernels for AI accelerators without needing expert optimization knowledge.

🛠️ Research Methods:

– Utilizes iterative generation and optimization memory to explore kernel optimization for AI accelerators.

– Evaluates AccelOpt using a new benchmark suite, NKIBench, derived from AWS Trainium accelerator kernels.

💬 Research Conclusions:

– AccelOpt significantly improves throughput, increasing peak performance on Trainium kernels from 49% to 61% and 45% to 59% respectively.

– It is cost-effective, achieving similar improvements as Claude Sonnet 4 at a fraction of the cost, being 26 times cheaper.

👉 Paper link: https://huggingface.co/papers/2511.15915

9. Hierarchical Codec Diffusion for Video-to-Speech Generation

🔑 Keywords: Video-to-Speech, Hierarchical Codec, audio-visual alignment, Residual Vector Quantization, Dual-scale normalization

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To achieve enhanced audio-visual alignment in Video-to-Speech generation by utilizing the hierarchical nature of discrete speech tokens.

🛠️ Research Methods:

– Implementation of HiCoDiT, a Hierarchical Codec Diffusion Transformer that uses Residual Vector Quantization-based codec for coarse-to-fine conditioning with dual-scale normalization.

💬 Research Conclusions:

– HiCoDiT outperforms existing methods in terms of fidelity and expressiveness, showcasing the potential of discrete modeling for Video-to-Speech generation.

👉 Paper link: https://huggingface.co/papers/2604.15923

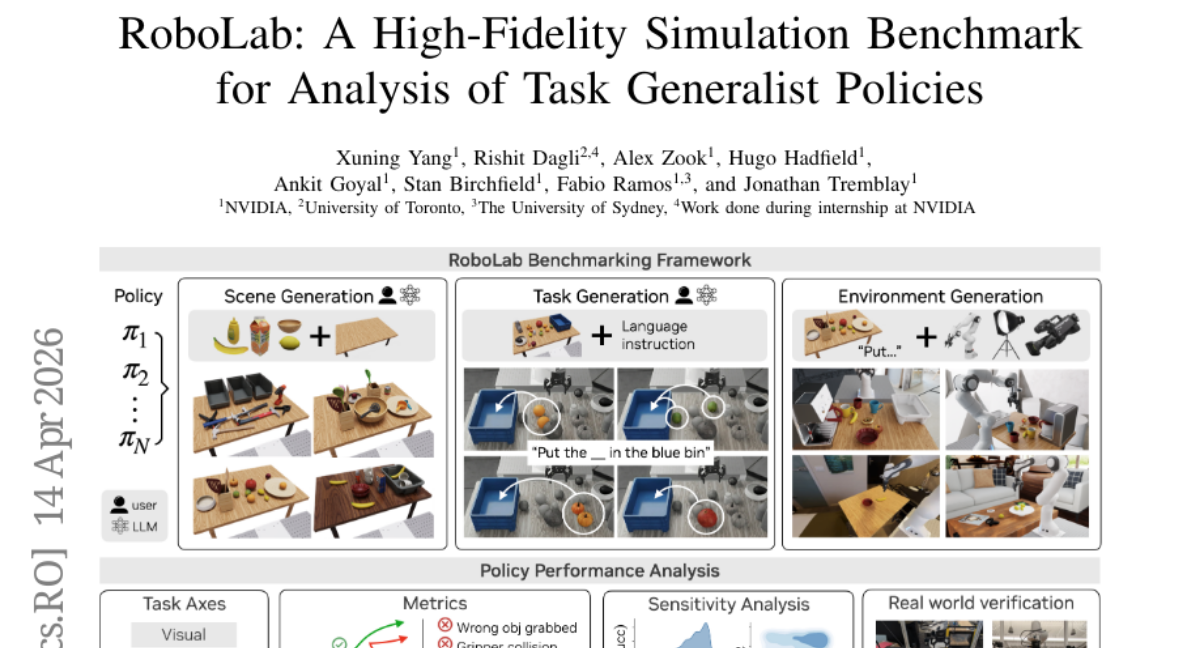

10. RoboLab: A High-Fidelity Simulation Benchmark for Analysis of Task Generalist Policies

🔑 Keywords: RoboLab, simulation benchmarking, controlled perturbations, photorealistic simulation, task-generalist robotic policies

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To address the limitations in robot policy evaluation through scalable, realistic task generation and systematic policy behavior analysis under controlled perturbations.

🛠️ Research Methods:

– Introduction of RoboLab framework enabling human-authored and LLM-enabled generation of scenes and tasks in a realistic simulation environment, and the creation of RoboLab-120 benchmark with competency axes.

💬 Research Conclusions:

– High-fidelity simulation can serve as a proxy for analyzing real-world policy performance, revealing sensitivity to external factors and exposing performance gaps in current models.

👉 Paper link: https://huggingface.co/papers/2604.09860

11. PRL-Bench: A Comprehensive Benchmark Evaluating LLMs’ Capabilities in Frontier Physics Research

🔑 Keywords: AI systems, end-to-end physics research, PRL-Bench, Language Models, autonomous scientific discovery

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– To evaluate the capabilities of Language Models in performing end-to-end physics research and to identify the gaps between AI systems and real scientific discovery.

🛠️ Research Methods:

– Introduction of PRL-Bench, a benchmark constructed using 100 curated papers from Physical Review Letters, covering subfields like astrophysics, condensed matter physics, and quantum information. It is designed to test domain knowledge, complex reasoning, and research workflows.

💬 Research Conclusions:

– Current Language Models show limited performance with the best scores below 50, indicating a significant gap in their capabilities for autonomous scientific discovery. PRL-Bench provides a reliable testbed for advancing AI scientists.

👉 Paper link: https://huggingface.co/papers/2604.15411

12. TwinTrack: Post-hoc Multi-Rater Calibration for Medical Image Segmentation

🔑 Keywords: TwinTrack, Post-hoc Calibration, Ensemble Segmentation, Inter-rater Disagreement, Calibration Metrics

💡 Category: AI in Healthcare

🌟 Research Objective:

– Address the ambiguity in pancreatic cancer segmentation on CT scans by calibrating ensemble segmentation outputs to human-derived probabilities.

🛠️ Research Methods:

– Implement a framework called TwinTrack that uses post-hoc calibration of ensemble probabilities to align with the empirical mean human response, modeling inter-rater disagreement.

💬 Research Conclusions:

– The TwinTrack framework significantly improves calibration metrics over traditional deep learning approaches on the MICCAI 2025 CURVAS-PDACVI multi-rater benchmark by directly interpreting calibrated probabilities as the expected proportion of annotators labeling a voxel as a tumor.

👉 Paper link: https://huggingface.co/papers/2604.15950

13. ArtifactNet: Detecting AI-Generated Music via Forensic Residual Physics

🔑 Keywords: AI-generated music, ArtifactNet, forensic physics, codec-aware training, codec-level artifacts

💡 Category: Machine Learning

🌟 Research Objective:

– The main objective is to detect AI-generated music by analyzing codec-specific artifacts, utilizing a more generalizable and efficient approach than previous methods.

🛠️ Research Methods:

– Introduced a lightweight neural network framework called ArtifactNet, which uses a bounded-mask UNet to extract codec residuals, processed into forensic features by a compact CNN.

– Developed a multi-generator evaluation benchmark, ArtifactBench, involving diverse tracks for zero-shot evaluation.

– Applied codec-aware training to improve cross-codec consistency.

💬 Research Conclusions:

– ArtifactNet significantly outperformed other methods like CLAM and SpecTTTra, achieving high F1 score with low false positive rate.

– The research establishes forensic physics as a viable approach for AI music detection, requiring far fewer parameters than existing models.

👉 Paper link: https://huggingface.co/papers/2604.16254

14.

15. VEFX-Bench: A Holistic Benchmark for Generic Video Editing and Visual Effects

🔑 Keywords: video editing, human-annotated dataset, reward model, VEFX-Dataset, VEFX-Reward

💡 Category: Computer Vision

🌟 Research Objective:

– This research introduces a large-scale human-annotated video editing dataset, VEFX-Dataset, and a specialized reward model, VEFX-Reward, aimed at assessing video editing quality.

🛠️ Research Methods:

– The study involves the creation of the VEFX-Dataset with 5,049 video editing examples across multiple categories, and the development of the VEFX-Reward model, which uses ordinal regression to predict quality scores based on multiple dimensions.

💬 Research Conclusions:

– The VEFX-Reward model aligns more closely with human judgments compared to generic vision-language models, and highlights areas of improvement in current video editing systems concerning visual plausibility, instruction adherence, and edit uniqueness.

👉 Paper link: https://huggingface.co/papers/2604.16272

16. Universal statistical signatures of evolution in artificial intelligence architectures

🔑 Keywords: AI architectural evolution, fitness effects distribution, Student’s t-distribution, logistic dynamics, adaptive radiation

💡 Category: Foundations of AI

🌟 Research Objective:

– The study aims to explore whether AI architectural evolution follows the same statistical laws as biological evolution.

🛠️ Research Methods:

– Analysis involves 935 ablation experiments from 161 publications to evaluate the distribution of fitness effects (DFE) of architectural modifications, comparing them to biological systems.

💬 Research Conclusions:

– AI architecture evolution mirrors biological evolution in its statistical patterns, evidenced by similar DFE and convergence dynamics. This process is substrate-independent, determined by fitness landscape topology.

👉 Paper link: https://huggingface.co/papers/2604.10571

17. DiPO: Disentangled Perplexity Policy Optimization for Fine-grained Exploration-Exploitation Trade-Off

🔑 Keywords: Large Language Models, Reinforcement Learning, Exploration-Exploitation Trade-off, Perplexity Space, Policy Optimization

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To address the exploration-exploitation trade-off in training Large Language Models by utilizing a novel approach with perplexity-based sample partitioning and bidirectional reward allocation.

🛠️ Research Methods:

– Introducing a perplexity space disentangling strategy to classify samples into exploration and exploitation subspaces.

– Implementing a bidirectional reward allocation mechanism to enhance policy optimization with minimal impact on verification rewards.

💬 Research Conclusions:

– The proposed method significantly improves LLM performance in tasks like mathematical reasoning and function calling through a fine-grained exploration-exploitation trade-off.

👉 Paper link: https://huggingface.co/papers/2604.13902

18. NTIRE 2026 Challenge on Video Saliency Prediction: Methods and Results

🔑 Keywords: Video Saliency Prediction, Saliency Map, Crowdsourced Mouse Tracking

💡 Category: Computer Vision

🌟 Research Objective:

– To develop automatic saliency map prediction methods for video sequences as part of the NTIRE 2026 Challenge.

🛠️ Research Methods:

– Utilization of a novel dataset comprising 2,000 diverse videos with an open license alongside crowdsourced mouse tracking to collect fixation and saliency map data from over 5,000 assessors.

💬 Research Conclusions:

– Over 20 teams participated with submissions, and 7 teams successfully passed the final phase involving code review. Public availability of all challenge data.

👉 Paper link: https://huggingface.co/papers/2604.14816

19. The Amazing Agent Race: Strong Tool Users, Weak Navigators

🔑 Keywords: DAG-based puzzles, Navigation errors, Tool-use capabilities, Verifiable answer, Finish-line accuracy

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To introduce The Amazing Agent Race (AAR) benchmark to evaluate LLM agents’ navigation and tool-use capabilities using DAG-based puzzles rather than traditional linear benchmarks.

🛠️ Research Methods:

– Developed 1,400 instances of DAG puzzles, categorized into sequential and compositional variants, using Wikipedia seeds with procedural generation and live-API validation, and evaluated agents using metrics such as finish-line accuracy, pit-stop visit rate, and roadblock completion rate.

💬 Research Conclusions:

– Navigation errors are the primary source of performance issues in LLM agents within AAR, revealing deficiencies not visible in linear benchmarks; agent framework and architecture significantly impact performance, underscoring the need for improved navigation capabilities.

👉 Paper link: https://huggingface.co/papers/2604.10261

20. EdgeDetect: Importance-Aware Gradient Compression with Homomorphic Aggregation for Federated Intrusion Detection

🔑 Keywords: 6G-IoT, Federated Learning, Intrusion Detection, Gradient Binarization, Homomorphic Encryption

💡 Category: Machine Learning

🌟 Research Objective:

– The study aims to develop an efficient and secure federated intrusion detection system (IDS) for 6G-IoT environments using gradient binarization and homomorphic encryption to achieve high accuracy, reduced communication overhead, and strong privacy protection.

🛠️ Research Methods:

– EdgeDetect employs gradient smartification, a median-based statistical binarization technique, to compress local updates and integrate Paillier homomorphic encryption for privacy preservation against servers.

💬 Research Conclusions:

– The experimental results indicate that EdgeDetect achieves 98.0% multi-class accuracy while significantly reducing communication overhead and maintaining strong privacy and feasibility on edge devices, even under challenging conditions like 5% poisoning attacks.

👉 Paper link: https://huggingface.co/papers/2604.14663

21. GTA-2: Benchmarking General Tool Agents from Atomic Tool-Use to Open-Ended Workflows

🔑 Keywords: General Tool Agents, AI-generated summary, execution frameworks, open-ended workflows, recursive checkpoint-based evaluation

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The study aims to enhance General Tool Agents’ ability to complete complex, real-world workflows by proposing an improved execution framework.

🛠️ Research Methods:

– Introduced a hierarchical benchmark named GTA-2 comprising GTA-Atomic for short-horizon tasks and GTA-Workflow for long-horizon tasks. Additionally, a recursive checkpoint-based evaluation mechanism was used to assess both model capabilities and execution frameworks.

💬 Research Conclusions:

– The research identifies a significant capability drop when moving from atomic tasks to workflows. The recursive checkpoint-guided feedback, and advanced frameworks like Manus and OpenClaw, significantly improve workflow completion, emphasizing the value of execution harness design over merely enhancing model capacity.

👉 Paper link: https://huggingface.co/papers/2604.15715

22. Can Large Language Models Reinvent Foundational Algorithms?

🔑 Keywords: LLMs, foundational innovation, generative verifier, reinforcement learning

💡 Category: Foundations of AI

🌟 Research Objective:

– To investigate whether large language models (LLMs) can reinvent foundational computer science algorithms after an unlearning process.

🛠️ Research Methods:

– Utilized an Unlearn-and-Reinvent pipeline with a GRPO-based, on-policy unlearning method across 10 target algorithms and varying hint levels, leveraging 3 strong open-weight models.

💬 Research Conclusions:

– The strongest model, Qwen3-4B-Thinking-2507, successfully reinvents up to 90% of algorithms at hint level 2.

– High-level hints improve reinvention success, although complex algorithms remain challenging despite detailed guidance.

– Test-time reinforcement learning aids in reinventing complex algorithms like Strassen at higher hint levels.

– Generative verifier plays a crucial role in maintaining reasoning capabilities during the reinvention phase.

👉 Paper link: https://huggingface.co/papers/2604.05716

23. QuantCode-Bench: A Benchmark for Evaluating the Ability of Large Language Models to Generate Executable Algorithmic Trading Strategies

🔑 Keywords: Large language models, algorithmic trading, financial logic, Backtrader framework, code generation

💡 Category: AI in Finance

🌟 Research Objective:

– To evaluate large language models’ ability to translate natural language descriptions into executable trading strategies.

🛠️ Research Methods:

– Developed QuantCode-Bench, a benchmark comprising 400 tasks from various sources.

– Utilized a multi-stage pipeline for evaluation focusing on syntactic correctness, backtest execution, trades presence, and semantic alignment.

💬 Research Conclusions:

– Current models face limitations not in syntax but in operationalizing trading logic, proper API usage, and task semantics adherence.

– Trading strategy generation emerges as a domain-specific code generation task requiring technical correctness and alignment with natural language and financial logic.

👉 Paper link: https://huggingface.co/papers/2604.15151

24. Where does output diversity collapse in post-training?

🔑 Keywords: Output diversity collapse, Post-trained language models, Training data composition, Generation format, Model weights

💡 Category: Natural Language Processing

🌟 Research Objective:

– To investigate the causes behind output diversity collapse in post-trained language models, focusing on the role of training data composition versus generation format.

🛠️ Research Methods:

– Analysis of output diversity across three post-training lineages (Olmo 3, Think, Instruct, RL-Zero) over 15 tasks using four text diversity metrics.

💬 Research Conclusions:

– Found that output diversity collapse is primarily determined by the training data composition rather than the generation format.

– Demonstrated that diversity loss is task-dependent and cannot be solely addressed at inference time; training data heavily influences model outputs.

👉 Paper link: https://huggingface.co/papers/2604.16027

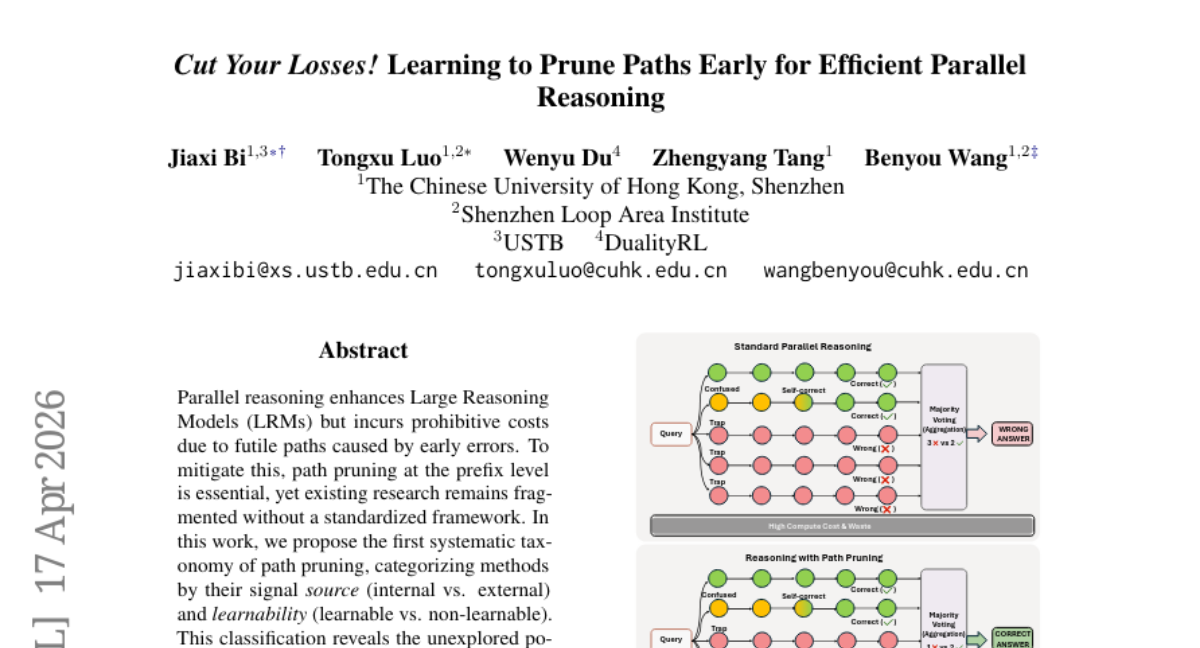

25. Cut Your Losses! Learning to Prune Paths Early for Efficient Parallel Reasoning

🔑 Keywords: path pruning, Large Reasoning Models, STOP, learnable token-level pruning, empirical guidelines

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The study introduces STOP, a systematic path pruning method to enhance efficiency and accuracy in Large Reasoning Models (LRMs) by leveraging learnable token-level pruning across various compute budgets.

🛠️ Research Methods:

– Proposes the first systematic taxonomy categorizing path pruning methods based on signal source and learnability, highlighting the potential of learnable internal methods.

💬 Research Conclusions:

– Extensive evaluations show that STOP outperforms existing baselines in effectiveness and efficiency across Large Reasoning Models ranging from 1.5B to 20B parameters, demonstrating superior scalability under fixed compute budgets.

👉 Paper link: https://huggingface.co/papers/2604.16029

26. Web Retrieval-Aware Chunking (W-RAC) for Efficient and Cost-Effective Retrieval-Augmented Generation Systems

🔑 Keywords: Web Retrieval-Aware Chunking, Retrieval-Augmented Generation, LLM token usage, hallucination risks, web-based documents

💡 Category: Natural Language Processing

🌟 Research Objective:

– The objective is to introduce Web Retrieval-Aware Chunking (W-RAC), aiming to create a cost-efficient framework for processing web documents while minimizing token usage and the risk of hallucination through structured content representation and retrieval-aware grouping.

🛠️ Research Methods:

– The methodology includes using W-RAC to decouple text extraction from semantic chunk planning, with a focus on structured, ID-addressable units and leveraging large language models (LLMs) for retrieval-aware grouping decisions instead of text generation.

💬 Research Conclusions:

– The proposed W-RAC significantly reduces token usage and eliminates hallucination risks, achieving comparable or superior retrieval performance compared to traditional chunking approaches, while also reducing chunking-related LLM costs significantly.

👉 Paper link: https://huggingface.co/papers/2604.04936

27. Maximal Brain Damage Without Data or Optimization: Disrupting Neural Networks via Sign-Bit Flips

🔑 Keywords: Deep Neural Lesion, parameter bits, sign bits, ImageNet, Mask R-CNN

💡 Category: Computer Vision

🌟 Research Objective:

– The research aims to investigate the catastrophic vulnerability of Deep Neural Networks to minimal parameter bit flips and propose strategies for identifying and mitigating these vulnerabilities without relying on data or optimization processes.

🛠️ Research Methods:

– Introduce Deep Neural Lesion (DNL) and a refined variant, 1P-DNL, which feature a data-free and optimization-free approach to locating critical parameters through single-pass forward and backward passes on random inputs.

💬 Research Conclusions:

– The study demonstrates the wide span of this vulnerability across multiple domains like image classification, object detection, and language modeling. The selective protection of a small fraction of vulnerable sign bits can effectively defend against such catastrophic disruptions.

👉 Paper link: https://huggingface.co/papers/2502.07408