AI Native Daily Paper Digest – 20260423

1. LLaDA2.0-Uni: Unifying Multimodal Understanding and Generation with Diffusion Large Language Model

🔑 Keywords: LLaDA2.0-Uni, Discrete Diffusion Language Model, Multimodal Understanding, MoE-based Backbone, High-Fidelity Image Generation

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Develop LLaDA2.0-Uni, a unified discrete diffusion large language model to enhance multimodal understanding and generation.

🛠️ Research Methods:

– Incorporate a semantic discrete tokenizer, an MoE-based backbone, and a diffusion decoder.

– Utilize SigLIP-VQ for discretizing continuous visual inputs and implement block-level masked diffusion.

💬 Research Conclusions:

– LLaDA2.0-Uni achieves performance similar to specialized vision-language models while providing efficient inference and high-quality image reconstructions.

– Establishes a scalable paradigm for next-generation unified foundation models with native support for interleaved generation and reasoning.

👉 Paper link: https://huggingface.co/papers/2604.20796

2. DR-Venus: Towards Frontier Edge-Scale Deep Research Agents with Only 10K Open Data

🔑 Keywords: DR-Venus-4B, Edge-scale deployment, Agentic supervised fine-tuning, Agentic reinforcement learning, Turn-level rewards

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The main objective is to train a powerful small deep research agent, DR-Venus-4B, using open data to improve performance on research benchmarks, particularly focusing on edge-scale deployment advantages.

🛠️ Research Methods:

– The training is divided into two stages: Firstly, agentic supervised fine-tuning (SFT) is applied to enhance basic agentic capabilities through data quality improvement and trajectory resampling. Secondly, agentic reinforcement learning is used to improve execution reliability, employing turn-level rewards and format-aware regularization.

💬 Research Conclusions:

– DR-Venus-4B outperforms prior agentic models under 9B parameters on multiple benchmarks and narrows the gap to 30B-class systems, demonstrating the potential of small models for deployment and the benefits of test-time scaling. The study provides models, code, and key recipes to facilitate further research.

👉 Paper link: https://huggingface.co/papers/2604.19859

3. DeVI: Physics-based Dexterous Human-Object Interaction via Synthetic Video Imitation

🔑 Keywords: DeVI, Dexterous robotic manipulation, Text-conditioned synthetic videos, Hybrid tracking reward, Zero-shot generalization

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– Introduce DeVI, a novel framework that utilizes text-conditioned synthetic videos to achieve physically plausible control in dexterous robot manipulation.

🛠️ Research Methods:

– Develop a hybrid tracking reward system that combines 3D human tracking with robust 2D object tracking to enhance hand-object interaction modeling.

💬 Research Conclusions:

– DeVI outperforms existing 3D human-object interaction demonstration methods, enabling effective zero-shot generalization in diverse scenarios and showcasing its utility as an HOI-aware motion planner.

👉 Paper link: https://huggingface.co/papers/2604.20841

4. Exploring Spatial Intelligence from a Generative Perspective

🔑 Keywords: Generative Spatial Intelligence, 3D Spatial Constraints, Image Generation, GSI-Bench, Spatial Reasoning

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The primary aim is to assess and enhance the ability of generative and multimodal models to handle 3D spatial constraints during image generation.

🛠️ Research Methods:

– Introduction of GSI-Bench, a benchmark featuring GSI-Real and GSI-Syn datasets, designed to evaluate spatial compliance and editing fidelity in image generation.

💬 Research Conclusions:

– Findings indicate that fine-tuning on the GSI-Syn dataset significantly improves the spatial reasoning abilities of multimodal models, demonstrating a new pathway to advance spatial intelligence.

👉 Paper link: https://huggingface.co/papers/2604.20570

5. WavAlign: Enhancing Intelligence and Expressiveness in Spoken Dialogue Models via Adaptive Hybrid Post-Training

🔑 Keywords: Spoken Dialogue Models, Modality-Aware, Reinforcement Learning, Semantic Channel, Explicit Anchoring

💡 Category: Natural Language Processing

🌟 Research Objective:

– To enhance the expressiveness and semantic quality of spoken dialogue models using a modality-aware adaptive post-training method.

🛠️ Research Methods:

– Analyses of challenges in spoken dialogue models, focusing on reward modeling, rollout sampling, and sparse preference supervision.

– Development of a post-training method with constrained preference updates and explicit anchoring.

💬 Research Conclusions:

– The proposed method improves the semantic quality and expressiveness of dialogue models across multiple benchmarks and architectures.

👉 Paper link: https://huggingface.co/papers/2604.14932

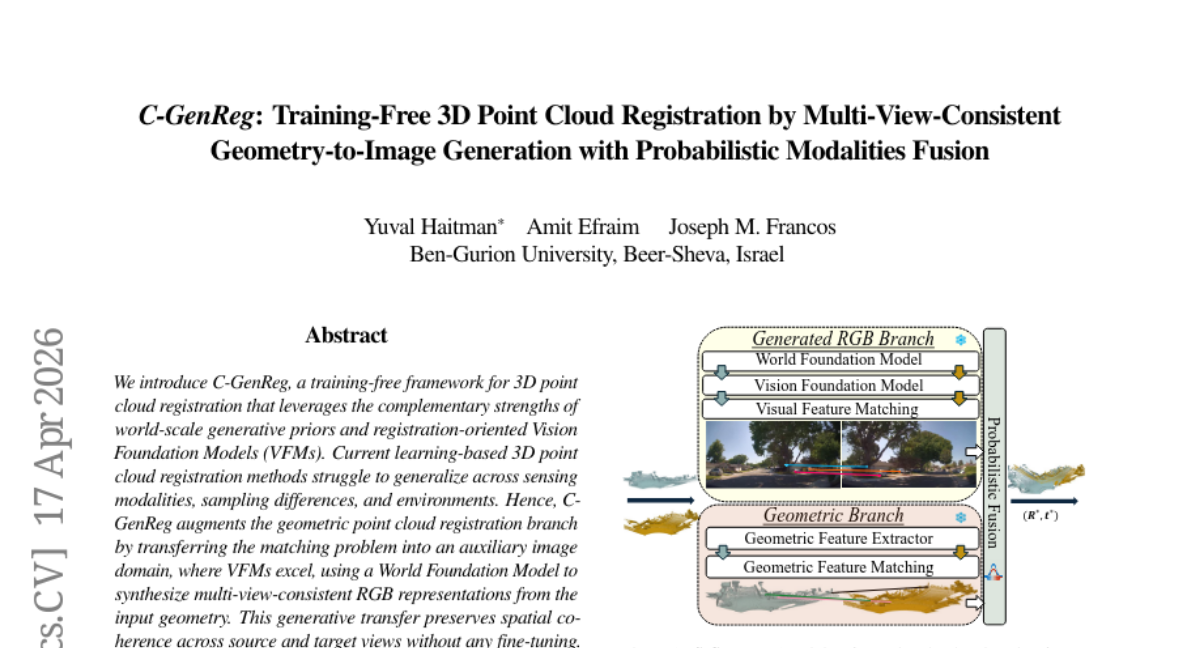

6. C-GenReg: Training-Free 3D Point Cloud Registration by Multi-View-Consistent Geometry-to-Image Generation with Probabilistic Modalities Fusion

🔑 Keywords: C-GenReg, 3D point cloud registration, generative priors, Vision Foundation Models, zero-shot performance

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce a training-free framework, C-GenReg, for 3D point cloud registration leveraging World Foundation Models to improve cross-domain generalization.

🛠️ Research Methods:

– The framework employs generative priors and Vision Foundation Models to transfer matching problems to an image domain, using multi-view-consistent RGB representations for extracting dense correspondences.

– Implements a “Match-then-Fuse” probabilistic scheme to enhance robustness without fine-tuning.

💬 Research Conclusions:

– C-GenReg demonstrates strong zero-shot performance and superior cross-domain generalization in indoor and outdoor benchmarks.

– Successfully operates on real outdoor LiDAR data without imagery, showcasing its versatility and adaptability.

👉 Paper link: https://huggingface.co/papers/2604.16680

7. Convergent Evolution: How Different Language Models Learn Similar Number Representations

🔑 Keywords: Transformers, Fourier domain, geometrically separable features, periodic features, convergent evolution

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to understand how language models such as Transformers, Linear RNNs, and LSTMs develop geometrically separable features for linear classification of numbers modulo T, despite the insufficiency of Fourier sparsity alone for this separability.

🛠️ Research Methods:

– The research investigates model training conditions, including data, architecture, optimizer, and tokenizer, and explores two different pathways for acquiring geometrically separable features: through complementary co-occurrence signals in general language data, and from multi-token addition problems.

💬 Research Conclusions:

– Various models demonstrate convergent evolution in feature learning, acquiring similar geometrically separable features from distinct training signals, highlighting the complexity and adaptability of language models in learning numerical representations.

👉 Paper link: https://huggingface.co/papers/2604.20817

8. Self-Evolving LLM Memory Extraction Across Heterogeneous Tasks

🔑 Keywords: LLM-based assistants, heterogeneous memory extraction, CluE, BEHEMOTH, AI-generated summary

💡 Category: Natural Language Processing

🌟 Research Objective:

– The objective is to develop a formalization of heterogeneous memory extraction for LLM-based assistants and evaluate it through the BEHEMOTH benchmark.

🛠️ Research Methods:

– The study proposes CluE, a cluster-based, self-evolving prompt optimization strategy that analyzes training examples by grouping them into clusters of extraction scenarios.

💬 Research Conclusions:

– CluE shows a significant performance improvement (+9.04% relative gain) over prior self-evolving frameworks when applied to heterogeneous tasks, indicating its effectiveness in optimizing memory extraction across diverse task categories.

👉 Paper link: https://huggingface.co/papers/2604.11610

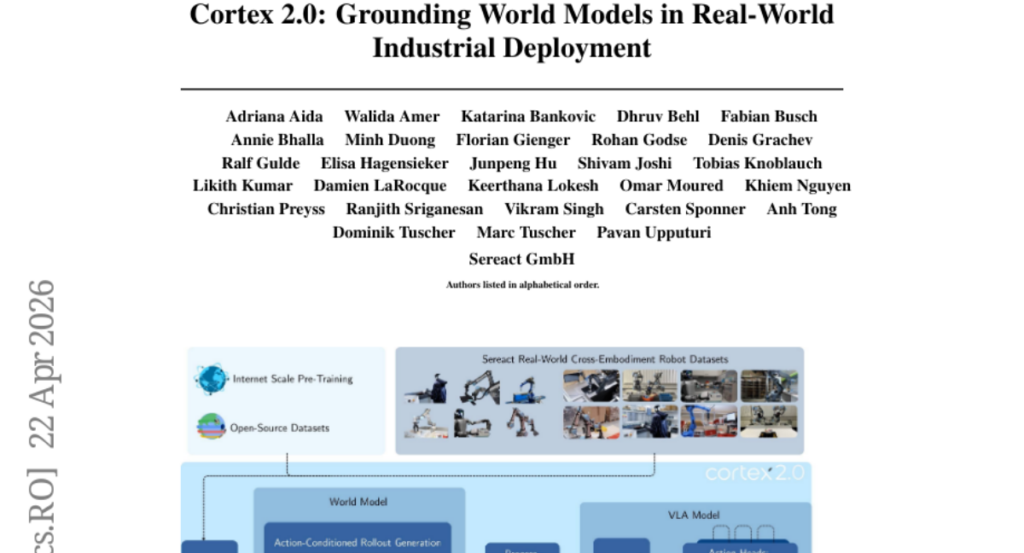

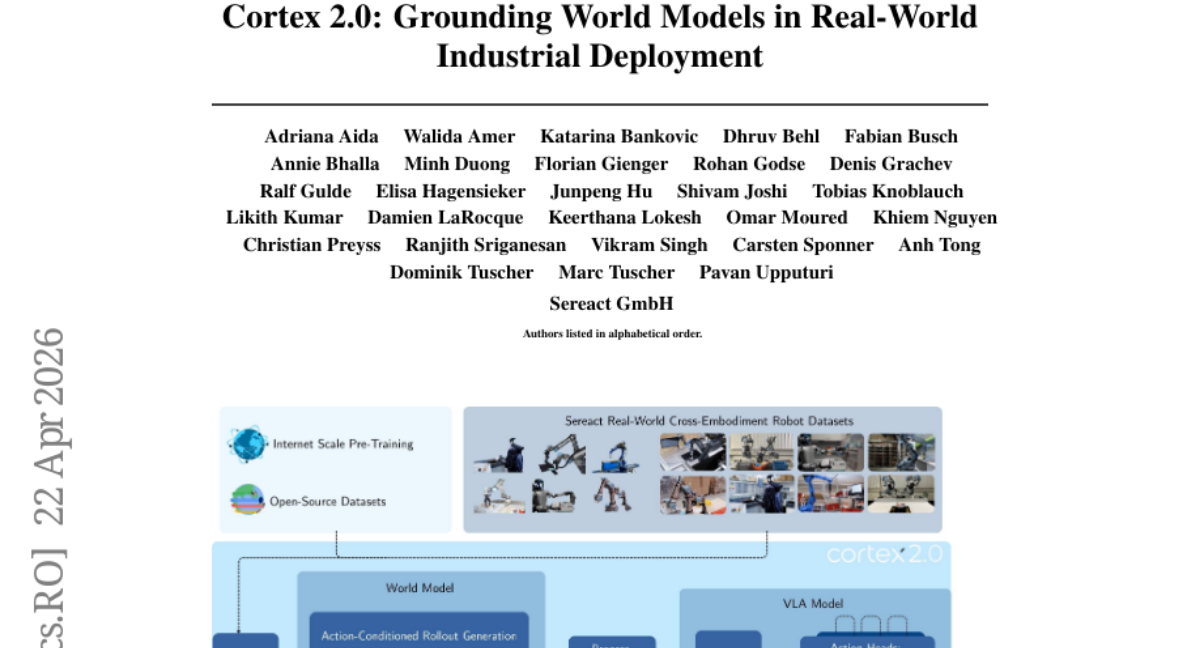

9. Cortex 2.0: Grounding World Models in Real-World Industrial Deployment

🔑 Keywords: Cortex 2.0, plan-and-act, visual latent space, industrial manipulation, Vision-Language-Action

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To enable reliable long-horizon robotic manipulation in complex industrial environments by using Cortex 2.0, which employs plan-and-act control in visual latent space.

🛠️ Research Methods:

– Evaluation of Cortex 2.0 on multiple manipulation platforms and tasks, including pick and place, item sorting, and shoebox unpacking, to compare its performance with state-of-the-art Vision-Language-Action models.

💬 Research Conclusions:

– Cortex 2.0 outperforms reactive Vision-Language-Action models in all tested tasks, demonstrating superior reliability in unstructured environments, validating the effectiveness of world-model-based planning.

👉 Paper link: https://huggingface.co/papers/2604.20246

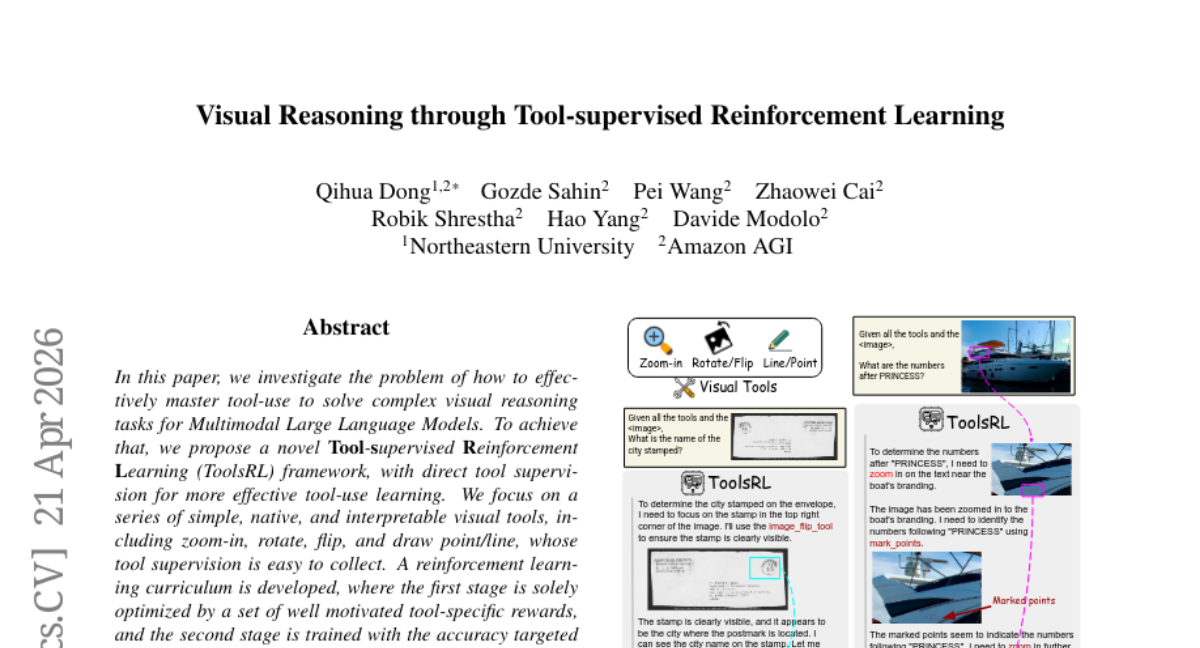

10. Visual Reasoning through Tool-supervised Reinforcement Learning

🔑 Keywords: Tool-supervised Reinforcement Learning, Multimodal Large Language Models, visual reasoning tasks, tool-use learning, reinforcement learning curriculum

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The study aims to address the effective mastery of tool-use in solving complex visual reasoning tasks using Multimodal Large Language Models by introducing a novel framework called Tool-supervised Reinforcement Learning (ToolsRL).

🛠️ Research Methods:

– The research employs a two-stage curriculum approach. The first stage optimizes tool-use through tool-specific rewards, while the second stage focuses on accuracy-targeted rewards, refining tool-use capabilities for visual reasoning tasks.

💬 Research Conclusions:

– The experiments demonstrate that the tool-supervised curriculum training is efficient. The ToolsRL framework successfully enhances tool-use capabilities, achieving strong performance in complex visual reasoning tasks.

👉 Paper link: https://huggingface.co/papers/2604.19945

11. Scaling Test-Time Compute for Agentic Coding

🔑 Keywords: Test-time scaling, Agentic coding, Rollout trajectories, Recursive Tournament Voting, Parallel-Distill-Refine

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Introduce a test-time scaling framework for agentic coding to enhance long-horizon task performance using compact trajectory representations.

🛠️ Research Methods:

– The framework employs Recursive Tournament Voting (RTV) for parallel scaling and adapts Parallel-Distill-Refine (PDR) for sequential scaling in the agentic setting.

💬 Research Conclusions:

– The method significantly improves performance metrics on benchmarks such as SWE-Bench Verified and Terminal-Bench v2.0, highlighting that test-time scaling for long-horizon agents involves effective representation, selection, and reuse.

👉 Paper link: https://huggingface.co/papers/2604.16529

12. AI scientists produce results without reasoning scientifically

🔑 Keywords: Large language model, Scientific agents, Epistemic norms, Hypothesis-driven inquiry, Scientific reasoning

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– To evaluate the capability of large language model-based scientific agents to adhere to the epistemic norms necessary for self-correcting scientific inquiry across various domains.

🛠️ Research Methods:

– Conducting over 25,000 agent runs in eight domains, analyzing performance and behavior through a systematic performance analysis of base models and scaffold contributions, as well as a behavioral analysis of the epistemological structure of agent reasoning.

💬 Research Conclusions:

– The base model predominantly influences both performance and behavior, leading to a lack of genuine scientific reasoning. Current agents neglect evidence in most traces and fail to reliably revise beliefs through refutation-driven processes or convergent multi-test evidence, highlighting their unreliability in epistemically demanding domains.

👉 Paper link: https://huggingface.co/papers/2604.18805

13. Benign Fine-Tuning Breaks Safety Alignment in Audio LLMs

🔑 Keywords: AI-generated summary, Audio LLMs, fine-tuning, harmful content, embedding space

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To systematically study the safety degradation of Audio LLMs due to benign fine-tuning and to evaluate the patterns of vulnerability based on model architecture and input modality.

🛠️ Research Methods:

– The study utilized a proximity-based filtering framework to select benign audio, analyzed semantic, acoustic, and mixed proximity axes using both external reference encoders and internal model encoders, and tested various defense mechanisms.

💬 Research Conclusions:

– Benign fine-tuning in Audio LLMs can elevate the Jailbreak Success Rate to 87.12%. The vulnerability is architecture-conditioned, affecting how models transform audio into input space. Proposed defenses can reduce the JSR to near-zero, highlighting that safety degradation from benign fine-tuning poses a distinct risk in Audio LLMs.

👉 Paper link: https://huggingface.co/papers/2604.16659

14. COMPASS: COntinual Multilingual PEFT with Adaptive Semantic Sampling

🔑 Keywords: COMPASS, parameter-efficient fine-tuning, multilingual embeddings, cross-lingual transfer, continual learning

💡 Category: Natural Language Processing

🌟 Research Objective:

– Improve multilingual language model adaptation to prevent negative cross-lingual interference.

🛠️ Research Methods:

– Use COMPASS, a data-centric framework with adaptive semantic sampling and parameter-efficient fine-tuning (PEFT).

– Incorporate multilingual embeddings and clustering to manage semantic gaps.

💬 Research Conclusions:

– COMPASS enhances performance across languages by maximizing positive cross-lingual transfer and minimizing interference.

– Demonstrates superior performance over baseline methods in various multilingual benchmarks and dynamic environments.

👉 Paper link: https://huggingface.co/papers/2604.20720

15. Streaming Structured Inference with Flash-SemiCRF

🔑 Keywords: Semi-Markov Conditional Random Fields, exact inference, streaming algorithms, prefix-sum array, Flash-SemiCRF

💡 Category: Machine Learning

🌟 Research Objective:

– The research aims to enhance Semi-Markov Conditional Random Fields by implementing efficient memory management techniques to allow for exact inference on long sequences and large label sets.

🛠️ Research Methods:

– Improvements include replacing a large edge potential tensor with a prefix-sum lookup, implementing a streaming forward-backward pass with checkpoint-boundary normalization, and using zero-centered cumulative scores to manage numerical drift.

💬 Research Conclusions:

– The integration of these techniques into Flash-SemiCRF makes exact semi-CRF inference feasible for previously intractable problem sizes, significantly reducing memory requirements and accommodating long sequences with large label sets.

👉 Paper link: https://huggingface.co/papers/2604.18780

16.

17. CreativeGame:Toward Mechanic-Aware Creative Game Generation

🔑 Keywords: Multi-agent system, HTML5 game generation, Mechanic-guided planning, Lineage memory, Runtime validation

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The primary aim is to develop CreativeGame, a multi-agent system designed for iterative HTML5 game generation, focusing on interpretable version-to-version evolution.

🛠️ Research Methods:

– The method involves using programmatic rewards, lineage memory, and runtime validation, along with mechanic-guided planning, to enhance creative improvement and experience accumulation across versions.

💬 Research Conclusions:

– CreativeGame demonstrates substantial capability, managing 71 stored lineages and a 774-entry global mechanic archive. This allows for architectural analysis and real lineage-level case studies, showing potential for mechanic-level innovation through explicit mechanic change.

👉 Paper link: https://huggingface.co/papers/2604.19926

18. Diverse Dictionary Learning

🔑 Keywords: Diverse Dictionary Learning, Observational Data, Latent Variables, Identifiability, Inductive Bias

💡 Category: Foundations of AI

🌟 Research Objective:

– The paper aims to explore latent variable recovery without strong assumptions through diverse dictionary learning, focusing on understanding set-theoretic relationships and structures from observational data.

🛠️ Research Methods:

– The research introduces a framework that identifies intersections, complements, and symmetric differences of latent variables to aid identifiability even without robust assumptions. It leverages set algebra and relies on an inductive bias during estimation.

💬 Research Conclusions:

– The study concludes that while full identifiability is not often attainable, meaningful recovery with guarantees is possible. This approach demonstrates applicability through both synthetic and real-world data, highlighting the utility of structural diversity in achieving identifiability.

👉 Paper link: https://huggingface.co/papers/2604.17568

19. MMCORE: MultiModal COnnection with Representation Aligned Latent Embeddings

🔑 Keywords: MMCORE, Vision-Language Model, semantic visual embeddings, diffusion model, high-fidelity synthesis

💡 Category: Generative Models

🌟 Research Objective:

– Introduce MMCORE, a framework for multimodal image generation and editing using a Vision-Language Model to enhance diffusion model conditioning.

🛠️ Research Methods:

– Utilize pre-trained Vision-Language Models with learnable query tokens to predict semantic visual embeddings serving as conditioning signals for a diffusion model, avoiding deep fusion or training from scratch.

💬 Research Conclusions:

– Demonstrates significant improvements over state-of-the-art baselines in text-to-image and image editing benchmarks, showcasing robust multimodal comprehension in complex scenarios.

👉 Paper link: https://huggingface.co/papers/2604.19902

20. ReImagine: Rethinking Controllable High-Quality Human Video Generation via Image-First Synthesis

🔑 Keywords: pose-controllable, SMPL-X, video diffusion models, human video generation, temporal consistency

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to tackle the complexities of generating human video by modeling appearance, motion, and viewpoint simultaneously, ensuring high-quality and temporally consistent videos.

🛠️ Research Methods:

– A novel pipeline is proposed that integrates pretrained image generation techniques with SMPL-X-based motion guidance and temporal refinement using video diffusion models.

💬 Research Conclusions:

– The method successfully generates high-quality videos with diverse poses and viewpoints and introduces a canonical human dataset and an auxiliary model for compositional image synthesis.

👉 Paper link: https://huggingface.co/papers/2604.19720

21. SAVOIR: Learning Social Savoir-Faire via Shapley-based Reward Attribution

🔑 Keywords: SAVOIR framework, social intelligence, cooperative game theory, credit assignment problem, Shapley values

💡 Category: Natural Language Processing

🌟 Research Objective:

– To enhance social intelligence in language agents by utilizing the SAVOIR framework, which incorporates principles from cooperative game theory for improved credit assignment in dialogue systems.

🛠️ Research Methods:

– Combination of expected utility shifts and Shapley values to evaluate utterances’ strategic potential and ensure fair credit distribution with axiomatic guarantees.

💬 Research Conclusions:

– SAVOIR achieves state-of-the-art performance on the SOTOPIA benchmark, surpassing large proprietary models, indicating that social intelligence in language agents requires distinct capabilities from analytical reasoning.

👉 Paper link: https://huggingface.co/papers/2604.18982

22. Tadabur: A Large-Scale Quran Audio Dataset

🔑 Keywords: Quranic data, Tadabur, audio dataset, recitation styles

💡 Category: Natural Language Processing

🌟 Research Objective:

– To present Tadabur, a comprehensive and representative Quran audio dataset that significantly expands the scale and diversity of current datasets.

🛠️ Research Methods:

– Compilation of over 1400 hours of audio recitation from more than 600 reciters, capturing variations in style, vocal attributes, and recording conditions.

💬 Research Conclusions:

– Tadabur aims to support future research by providing a substantial resource for Quranic speech research and to aid in the development of standardized Quranic speech benchmarks.

👉 Paper link: https://huggingface.co/papers/2604.18932

23. Image Generators are Generalist Vision Learners

🔑 Keywords: Image Generation, Vision Tasks, Instruction Tuning, Generative Models, Foundational Vision Models

💡 Category: Computer Vision

🌟 Research Objective:

– To explore the role of image generation pretraining in developing strong visual understanding capabilities and achieving state-of-the-art (SOTA) performance on various vision tasks.

🛠️ Research Methods:

– Introduced a generalist model named Vision Banana by instruction-tuning Nano Banana Pro (NBP) with a combination of original training data and a small amount of vision task data, using image generation as a unified interface for vision tasks.

💬 Research Conclusions:

– Vision Banana achieves SOTA results in both 2D and 3D understanding, surpassing zero-shot domain-specialists in some cases, demonstrating that image generation pretraining serves as a powerful approach for generalist vision learning.

– The study highlights a potential paradigm shift in computer vision towards generative vision pretraining for building foundational models that excel in both generative and understanding capabilities.

👉 Paper link: https://huggingface.co/papers/2604.20329

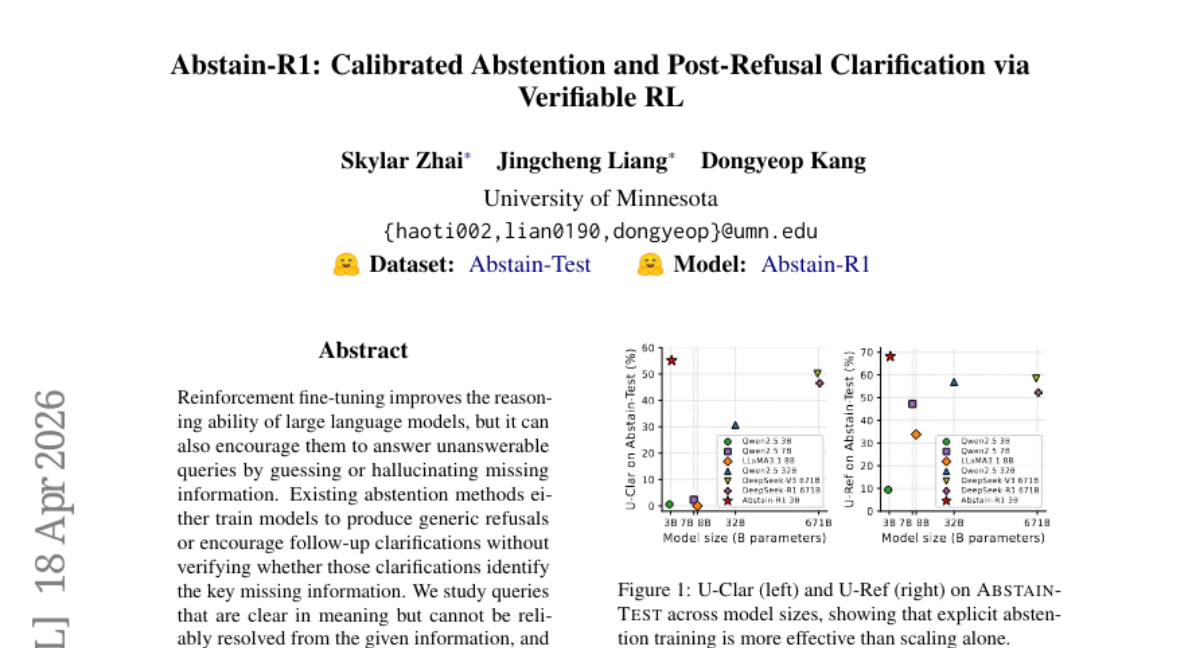

24. Abstain-R1: Calibrated Abstention and Post-Refusal Clarification via Verifiable RL

🔑 Keywords: Reinforcement fine-tuning, large language models, clarification-aware RLVR reward, Abstain-R1, calibrated abstention

💡 Category: Natural Language Processing

🌟 Research Objective:

– To enhance the reasoning ability of large language models while enabling effective abstention and clarification for unanswerable queries through a new reward mechanism.

🛠️ Research Methods:

– Proposition of clarification-aware RLVR reward that simultaneously optimizes abstention and clarification on unanswerable queries alongside correct responses to answerable ones.

– Development and training of Abstain-R1, a 3B model, utilizing this reward system.

💬 Research Conclusions:

– Abstain-R1 shows improved performance on unanswerable queries with notable abstention and clarification capabilities, matching larger systems like DeepSeek-R1.

– Verifiable rewards play a key role in learning calibrated abstention and clarification, without reliance on model scale alone.

👉 Paper link: https://huggingface.co/papers/2604.17073

25. SWE-chat: Coding Agent Interactions From Real Users in the Wild

🔑 Keywords: AI coding agents, SWE-chat, real coding agent interactions, inefficiencies, security vulnerabilities

💡 Category: Human-AI Interaction

🌟 Research Objective:

– To present SWE-chat, a large-scale dataset capturing real-world coding agent usage and inefficiencies in AI-assisted development.

🛠️ Research Methods:

– Leveraged a dataset of 6,000 sessions from open-source developers, with a focus on agent tool calls and user prompts, and continuously updated from public repositories.

💬 Research Conclusions:

– Found that coding agents exhibit bimodal patterns of interaction; inefficient, with only 44% of agent-produced code integrating into final commits and resulting in increased security vulnerabilities.

👉 Paper link: https://huggingface.co/papers/2604.20779

26. Expert Upcycling: Shifting the Compute-Efficient Frontier of Mixture-of-Experts

🔑 Keywords: Expert Upcycling, Mixture-of-Experts, continued pre-training, expert duplication, router extension

💡 Category: Machine Learning

🌟 Research Objective:

– To propose and formalize the “expert upcycling” method for expanding Mixture-of-Experts (MoE) capacity while maintaining fixed inference cost, improving training efficiency and model quality.

🛠️ Research Methods:

– Utilization of expert duplication and router extension techniques to increase expert numbers during continued pre-training, integrating top-K routing to preserve per-token inference cost.

– Deployment of utility-based expert selection using gradient-based importance scores to enhance non-uniform duplication.

💬 Research Conclusions:

– The upcycling approach allows for warm initialization, maintaining learned representations and starts from lower loss levels compared to random initialization.

– Expert upcycling effectively saves computational resources, reducing GPU hours by 32% in experiments, and matches performance with fixed-size models on validation loss.

– Presents a practical, compute-efficient alternative for large-scale MoE models, validated across diverse model scales and architectures.

👉 Paper link: https://huggingface.co/papers/2604.19835

27. A Self-Evolving Framework for Efficient Terminal Agents via Observational Context Compression

🔑 Keywords: TACO, self-evolving compression, token overhead, terminal agents

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The primary objective is to develop TACO, a terminal agent compression framework that automatically discovers and refines compression rules to enhance long-horizon agent performance while reducing token overhead.

🛠️ Research Methods:

– The research introduces a plug-and-play, self-evolving framework tested on TerminalBench and four additional benchmarks, demonstrating improvements across mainstream agent frameworks and backbone models.

💬 Research Conclusions:

– TACO improves performance significantly in bench tests, consistently reducing token overhead by approximately 10% and increasing accuracy by 1%-4% across various agent models, proving its effectiveness and generalization for terminal agents.

👉 Paper link: https://huggingface.co/papers/2604.19572

28. Reward Hacking in the Era of Large Models: Mechanisms, Emergent Misalignment, Challenges

🔑 Keywords: Reward hacking, Language models, Proxy Compression Hypothesis, Misalignment, Reinforcement Learning from Human Feedback (RLHF)

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The paper aims to explore reward hacking in aligned language models and how it arises due to optimizing expressive policies against compressed reward signals, leading to potentially broader forms of misalignment.

🛠️ Research Methods:

– The researchers propose the Proxy Compression Hypothesis (PCH) as a framework to understand reward hacking, focusing on the interaction between objective compression, optimization amplification, and evaluator-policy co-adaptation.

💬 Research Conclusions:

– Reward hacking manifests as structural instability in proxy-based alignment, resulting in various misalignments such as verbosity bias and deception. The study calls for advancements in scalable oversight, multimodal grounding, and agentic autonomy to address these challenges.

👉 Paper link: https://huggingface.co/papers/2604.13602

29. OpenMobile: Building Open Mobile Agents with Task and Trajectory Synthesis

🔑 Keywords: Mobile agents, vision-language models, task synthesis pipeline, policy-switching strategy, AndroidWorld

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– The research aims to develop an open-source framework called OpenMobile for training mobile agents, improving performance and transparency in task instruction and trajectory synthesis.

🛠️ Research Methods:

– Utilization of a scalable task synthesis pipeline that constructs a global environment memory for generating diverse instructions.

– Implementation of a policy-switching strategy to enhance trajectory rollout by alternating between learner and expert models.

💬 Research Conclusions:

– OpenMobile-trained agents achieve competitive results on mobile agent benchmarks, such as AndroidWorld, with significant improvements in success rates, reaching 51.7% and 64.7%.

– The study provides transparent analyses to confirm that performance gains are not due to benchmark overfitting, but broad functionality coverage.

– Data and code are publicly released to support further mobile agent research.

👉 Paper link: https://huggingface.co/papers/2604.15093

30. Near-Future Policy Optimization

🔑 Keywords: Reinforcement Learning, Near-Future Policy Optimization, Effective Learning Signal, Mixed-Policy Methods

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To accelerate reinforcement learning convergence and enhance performance by optimizing mixed-policy trajectories using Near-Future Policy Optimization (NPO).

🛠️ Research Methods:

– Utilization of Near-Future Policy Optimization (NPO) which leverages a policy’s own near-future checkpoints as a natural source of auxiliary trajectories.

– Validation through manual interventions such as early-stage bootstrapping and late-stage plateau breakthrough.

– Introduction of AutoNPO, an adaptive variant utilizing online training signals to optimize policy performance.

💬 Research Conclusions:

– NPO was demonstrated to improve average performance metrics significantly on Qwen3-VL-8B-Instruct with GRPO, increasing from 57.88 to 62.84, while AutoNPO further elevates it to 63.15.

– The approach effectively balances trajectory quality against variance cost, raising the final performance ceiling.

👉 Paper link: https://huggingface.co/papers/2604.20733