AI Native Daily Paper Digest – 20260428

1. From Skills to Talent: Organising Heterogeneous Agents as a Real-World Company

🔑 Keywords: OneManCompany, multi-agent systems, agent identities, Talent Market, hierarchical loop

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The research aims to introduce OneManCompany (OMC), an organizational framework designed for multi-agent systems that facilitates dynamic team assembly, governance, and improvement through portable agent identities and hierarchical decision-making processes.

🛠️ Research Methods:

– OMC encapsulates skills and runtime configurations into portable agent identities called Talents, coordinated through typed organizational interfaces. It utilizes an Explore-Execute-Review (E^2R) tree search for unified hierarchical decision-making, decomposing tasks into accountable units.

💬 Research Conclusions:

– The OMC framework transforms static multi-agent systems into self-organizing and self-improving AI organizations, achieving significant improvements in success rate as demonstrated by its 84.67% success rate on PRDBench, surpassing the state of the art by 15.48 percentage points.

👉 Paper link: https://huggingface.co/papers/2604.22446

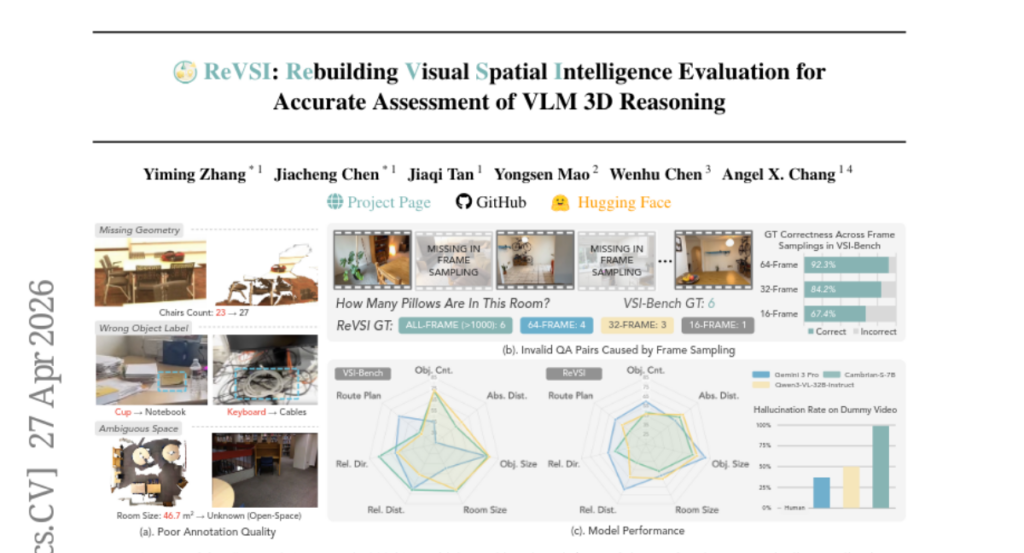

2. ReVSI: Rebuilding Visual Spatial Intelligence Evaluation for Accurate Assessment of VLM 3D Reasoning

🔑 Keywords: ReVSI, Spatial Intelligence, Benchmark, VLMs, 3D Annotations

💡 Category: Computer Vision

🌟 Research Objective:

– The paper aims to improve the validity of spatial intelligence evaluations by introducing a new benchmark, ReVSI, with enhanced annotations and controlled sampling conditions.

🛠️ Research Methods:

– The research involved re-annotating objects and geometry across 381 scenes from five datasets, using professional 3D annotation tools, ensuring that each QA pair is correctly answerable under model inputs.

💬 Research Conclusions:

– Evaluations using ReVSI reveal systematic failure modes in general and domain-specific VLMs that were hidden in previous benchmarks, leading to a more reliable assessment of spatial intelligence.

👉 Paper link: https://huggingface.co/papers/2604.24300

3. Vision-Language-Action Safety: Threats, Challenges, Evaluations, and Mechanisms

🔑 Keywords: Vision-Language-Action models, embodied intelligence, adversarial attacks, data poisoning, safety challenges

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To provide a unified and comprehensive overview of safety in Vision-Language-Action models, addressing the unique safety challenges they present due to their embodied nature.

🛠️ Research Methods:

– The survey organizes the safety aspects of VLA models along attack and defense timing axes, distinguishes VLA safety from other safety areas, and reviews threats, defenses, evaluations, and deployments in this domain.

💬 Research Conclusions:

– The research highlights the fragmented nature of current literature and emphasizes the need for a unified approach to address safety challenges. It underlines key open problems such as establishing certified robustness for embodied trajectories and developing standardized evaluation and safety-aware training methods.

👉 Paper link: https://huggingface.co/papers/2604.23775

4. Tuna-2: Pixel Embeddings Beat Vision Encoders for Multimodal Understanding and Generation

🔑 Keywords: Tuna-2, pixel embeddings, unified multimodal model, visual understanding, end-to-end optimization

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introduce Tuna-2, a unified multimodal model capable of performing visual understanding and generation directly from pixel embeddings, without relying on pretrained vision encoders.

🛠️ Research Methods:

– Implementation of simple patch embedding layers to encode visual input, completely foregoing traditional modular vision encoder designs such as VAEs.

💬 Research Conclusions:

– Tuna-2 achieves state-of-the-art performance in multimodal benchmarks.

– Demonstrates that end-to-end pixel-space learning offers scalable and stronger visual representations, particularly excelling in tasks requiring fine-grained visual perception.

– Highlights that pretrained vision encoders are unnecessary for effective multimodal modelling.

👉 Paper link: https://huggingface.co/papers/2604.24763

5. World-R1: Reinforcing 3D Constraints for Text-to-Video Generation

🔑 Keywords: 3D constraints, reinforcement learning, video generation, geometric consistency, world simulation

💡 Category: Generative Models

🌟 Research Objective:

– To enhance video generation by aligning it with 3D constraints using reinforcement learning and specialized text datasets.

🛠️ Research Methods:

– Implemented World-R1 framework leveraging Flow-GRPO for structural coherence.

– Utilized feedback from pre-trained 3D foundation models and vision-language models.

– Adopted periodic decoupled training strategy to balance geometric consistency with scene fluidity.

💬 Research Conclusions:

– The approach significantly improves 3D consistency while maintaining visual quality and scalability in video generation.

👉 Paper link: https://huggingface.co/papers/2604.24764