AI Native Daily Paper Digest – 20250818

1. SSRL: Self-Search Reinforcement Learning

🔑 Keywords: LLMs, Self-Search, Self-Search RL, Reinforcement Learning

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To explore the potential of large language models (LLMs) as efficient simulators for agentic search tasks in reinforcement learning (RL) to reduce dependence on external search engines.

🛠️ Research Methods:

– Quantifying intrinsic search capability through structured prompting and repeated sampling, referred to as Self-Search.

– Introducing Self-Search RL (SSRL), which enhances Self-Search capability using format-based and rule-based rewards.

💬 Research Conclusions:

– LLMs demonstrate substantial world knowledge, achieving high performance on question-answering benchmarks.

– SSRL reduces hallucination and integrates seamlessly with external search engines.

– SSRL-trained policy models provide a cost-effective and stable environment, facilitating robust sim-to-real transfer in RL.

👉 Paper link: https://huggingface.co/papers/2508.10874

2. Thyme: Think Beyond Images

🔑 Keywords: Thyme, MLLMs, image manipulations, reasoning tasks, GRPO-ATS

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To introduce Thyme, a novel paradigm that enables MLLMs to autonomously perform diverse image manipulations and computations, enhancing performance in perception and reasoning tasks.

🛠️ Research Methods:

– Employed a two-stage training strategy consisting of initial SFT on a curated dataset of 500K samples and an RL phase refined with GRPO-ATS algorithm.

💬 Research Conclusions:

– Thyme yields significant and consistent performance gains in nearly 20 benchmarks, particularly effective in challenging high-resolution perception and complex reasoning tasks.

👉 Paper link: https://huggingface.co/papers/2508.11630

3. DINOv3

🔑 Keywords: DINOv3, Self-supervised learning, Gram anchoring, Vision foundation model, Scalable solutions

💡 Category: Computer Vision

🌟 Research Objective:

– To introduce DINOv3, a self-supervised learning model designed to enhance performance across various vision tasks by scaling datasets and models and applying post-hoc strategies.

🛠️ Research Methods:

– Leveraging simple yet effective strategies such as scaling dataset and model sizes, introduction of Gram anchoring methods, and employing post-hoc techniques to improve model flexibility.

💬 Research Conclusions:

– DINOv3 presents a versatile vision foundation model that outperforms the specialized state of the art across a broad range of settings without fine-tuning, achieving outstanding performance on diverse vision tasks.

👉 Paper link: https://huggingface.co/papers/2508.10104

4. BeyondWeb: Lessons from Scaling Synthetic Data for Trillion-scale Pretraining

🔑 Keywords: synthetic data, pretraining, large language models, BeyondWeb

💡 Category: Natural Language Processing

🌟 Research Objective:

– Introduce BeyondWeb, a synthetic data generation framework, that aims to improve pretraining for large language models by optimizing multiple factors.

🛠️ Research Methods:

– Benchmark evaluations against state-of-the-art synthetic datasets like Cosmopedia and Nemotron-Synth, demonstrating performance improvements and faster training times.

💬 Research Conclusions:

– BeyondWeb achieves significantly better performance compared to existing datasets, facilitating more efficient training of models. Insights show that optimizing multiple factors is crucial for generating high-quality synthetic data for pretraining.

👉 Paper link: https://huggingface.co/papers/2508.10975

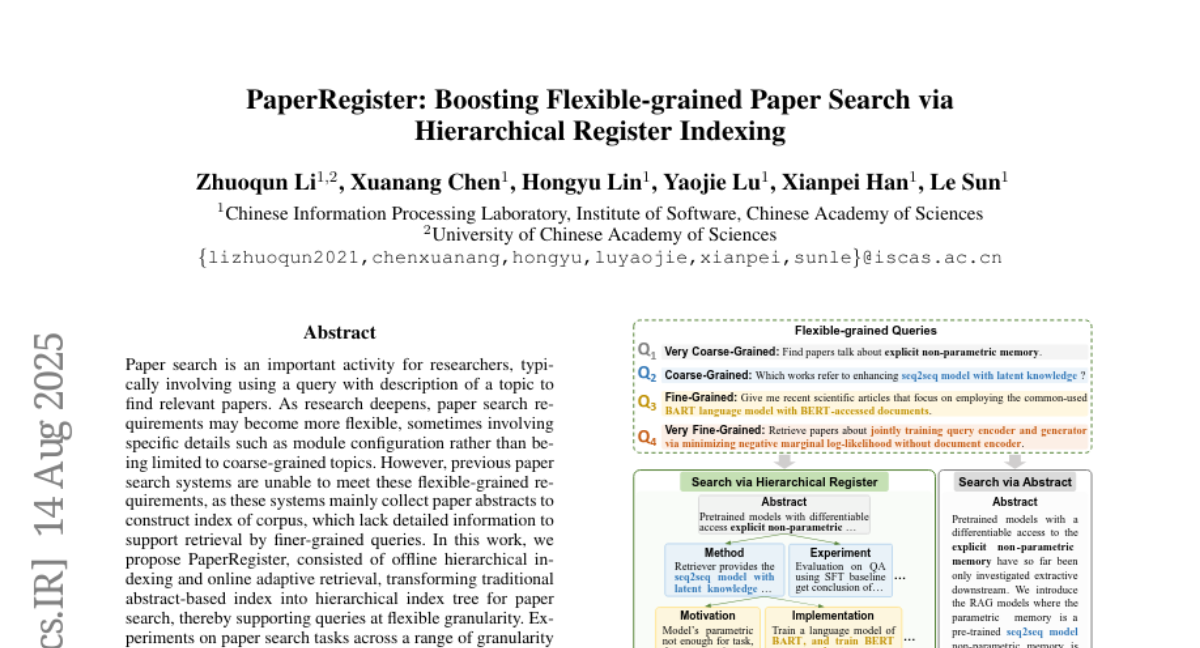

5. PaperRegister: Boosting Flexible-grained Paper Search via Hierarchical Register Indexing

🔑 Keywords: Hierarchical Indexing, Adaptive Retrieval, Fine-Grained Queries, AI-generated Summary, Paper Search

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The study aims to enhance paper search capabilities by using hierarchical indexing and adaptive retrieval, enabling more flexible and fine-grained paper queries beyond traditional abstract-based systems.

🛠️ Research Methods:

– The proposed system, named PaperRegister, transforms traditional abstract-based indexes into hierarchical index trees and utilizes online adaptive retrieval to support variable granularity in paper searches.

💬 Research Conclusions:

– Experiments across different granularity levels show that PaperRegister achieves state-of-the-art performance, particularly excelling in fine-grained search scenarios, indicating its potential utility in real-world applications.

👉 Paper link: https://huggingface.co/papers/2508.11116

6. XQuant: Breaking the Memory Wall for LLM Inference with KV Cache Rematerialization

🔑 Keywords: XQuant, XQuant-CL, low-bit quantization, memory savings, cross-layer similarity

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to reduce memory consumption in large language model (LLM) inference by exploiting low-bit quantization and cross-layer similarity.

🛠️ Research Methods:

– The research introduces XQuant, leveraging low-bit quantization and caching of layer input activations for memory reduction. It also presents XQuant-CL, which utilizes cross-layer similarity for further compression.

💬 Research Conclusions:

– XQuant achieves up to a 7.7 times reduction in memory usage with minimal accuracy loss, while XQuant-CL extends this to 10-12.5 times memory savings, maintaining near-FP16 accuracy with minimal perplexity degradation.

👉 Paper link: https://huggingface.co/papers/2508.10395

7. TexVerse: A Universe of 3D Objects with High-Resolution Textures

🔑 Keywords: TexVerse, high-resolution textures, 3D vision, PBR materials

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce TexVerse, a comprehensive 3D dataset with over 858K high-resolution models, aimed at enhancing research in texture synthesis and PBR material development.

🛠️ Research Methods:

– TexVerse aggregates 3D models, including 158K models with PBR materials, from Sketchfab, incorporating all high-resolution variants for a total of 1.6M 3D instances.

💬 Research Conclusions:

– TexVerse expands the landscape of 3D datasets with its extensive collection, offering significant potential for applications in texture synthesis, animation, and various 3D vision and graphics tasks.

👉 Paper link: https://huggingface.co/papers/2508.10868

8. StyleMM: Stylized 3D Morphable Face Model via Text-Driven Aligned Image Translation

🔑 Keywords: StyleMM, 3D Morphable Model (3DMM), stylization method, facial attributes, diffusion model

💡 Category: Generative Models

🌟 Research Objective:

– Introduce StyleMM, a framework for constructing stylized 3D Morphable Models based on user-defined text descriptions.

🛠️ Research Methods:

– Utilize a diffusion model for text-guided image-to-image translation while preserving facial attributes.

– Fine-tune pre-trained mesh deformation and texture generation networks with stylized facial images.

💬 Research Conclusions:

– StyleMM demonstrates enhanced identity-level facial diversity and stylization capability compared to state-of-the-art methods.

– Enables feed-forward generation of stylized face meshes with control over shape, expression, and texture.

👉 Paper link: https://huggingface.co/papers/2508.11203

9. FantasyTalking2: Timestep-Layer Adaptive Preference Optimization for Audio-Driven Portrait Animation

🔑 Keywords: multimodal reward model, adaptive preference optimization, AI-generated summary, lip-sync accuracy, visual quality

💡 Category: Generative Models

🌟 Research Objective:

– To enhance audio-driven portrait animation by aligning models with human preferences across multiple dimensions, including motion naturalness and lip-sync accuracy.

🛠️ Research Methods:

– Introduced Talking-Critic, a multimodal reward model to quantify satisfaction of videos with respect to multidimensional human preferences.

– Developed Talking-NSQ, a large-scale dataset with 410K human preference pairs.

– Proposed Timestep-Layer adaptive multi-expert Preference Optimization (TLPO) framework to enhance portrait animation models, decoupling preferences into expert modules fused across network layers.

💬 Research Conclusions:

– Talking-Critic surpasses existing methods in aligning with human preference ratings.

– TLPO framework shows significant improvements in lip-sync accuracy, motion naturalness, and visual quality, outperforming baseline models in both qualitative and quantitative evaluations.

👉 Paper link: https://huggingface.co/papers/2508.11255

10. X-Node: Self-Explanation is All We Need

🔑 Keywords: X-Node, self-explaining GNN, interpretability, AI in Healthcare

💡 Category: AI in Healthcare

🌟 Research Objective:

– Develop X-Node, a self-explaining GNN framework to enhance node-level interpretability while maintaining classification accuracy in high-stakes applications, such as healthcare.

🛠️ Research Methods:

– Encode local topology features (e.g., degree, centrality, clustering) into a context vector for each node.

– Utilize a lightweight Reasoner module to create a compact explanation vector.

– Incorporate explanations back into the GNN through a text-injection mechanism.

💬 Research Conclusions:

– X-Node provides faithful per-node explanations and maintains competitive accuracy on graph datasets from MedMNIST and MorphoMNIST.

👉 Paper link: https://huggingface.co/papers/2508.10461

11. Controlling Multimodal LLMs via Reward-guided Decoding

🔑 Keywords: Multimodal Large Language Models, Reward-guided decoding, Visual grounding, Object precision, Image captioning

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to enhance the adaptability of Multimodal Large Language Models (MLLMs) for diverse user needs through controlled decoding.

🛠️ Research Methods:

– Introduces a novel reward-guided decoding method for MLLMs to improve visual grounding by building separate reward models for controlling object precision and recall.

💬 Research Conclusions:

– The proposed method provides significant controllability over MLLM inference and consistently outperforms existing hallucination mitigation methods on standard benchmarks.

👉 Paper link: https://huggingface.co/papers/2508.11616

12. SPARSE Data, Rich Results: Few-Shot Semi-Supervised Learning via Class-Conditioned Image Translation

🔑 Keywords: GAN-based semi-supervised learning, neural networks, ensemble-based pseudo-labeling, MedMNIST datasets, 5-shot setting

💡 Category: AI in Healthcare

🌟 Research Objective:

– To develop a GAN-based semi-supervised learning framework aimed at improving medical image classification with minimal labeled data.

🛠️ Research Methods:

– The framework integrates three specialized neural networks within a three-phase training framework, alternating between supervised and unsupervised learning.

– It employs ensemble-based pseudo-labeling, combining confidence-weighted predictions with temporal consistency for reliable label estimation.

💬 Research Conclusions:

– The proposed framework shows statistically significant improvements over existing methods, particularly in scenarios with extremely limited labeled data (5-shot setting), offering a practical solution for medical imaging where annotation costs are high.

👉 Paper link: https://huggingface.co/papers/2508.06429

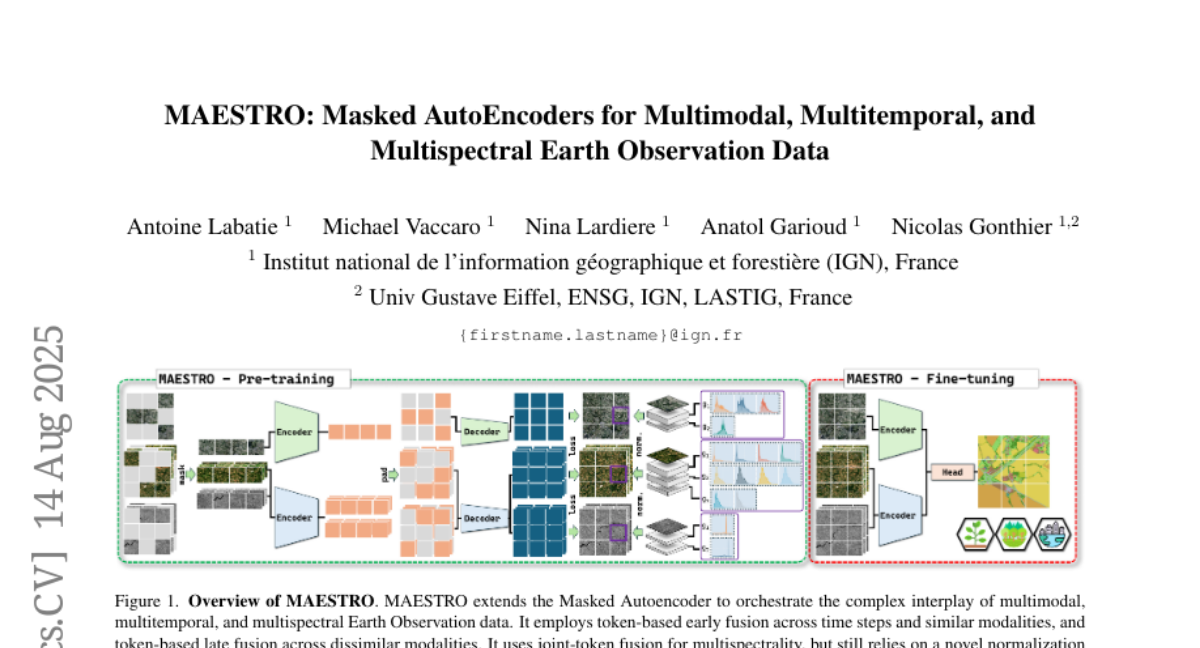

13. MAESTRO: Masked AutoEncoders for Multimodal, Multitemporal, and Multispectral Earth Observation Data

🔑 Keywords: Self-supervised learning, Earth observation, Masked Autoencoder, fusion strategies, spectral prior

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To adapt standard self-supervised learning methods for the unique characteristics of Earth observation data by proposing MAESTRO, an adapted Masked Autoencoder with optimized fusion strategies and spectral prior normalization.

🛠️ Research Methods:

– Conducted a comprehensive benchmark of fusion strategies and reconstruction target normalization schemes for multimodal, multitemporal, and multispectral Earth observation data.

💬 Research Conclusions:

– MAESTRO achieves state-of-the-art performance on multitemporal Earth observation tasks, demonstrating competitive results across different temporal modalities.

👉 Paper link: https://huggingface.co/papers/2508.10894

14.