AI Native Daily Paper Digest – 20250821

1. From Scores to Skills: A Cognitive Diagnosis Framework for Evaluating Financial Large Language Models

🔑 Keywords: FinCDM, AI Native, Large Language Models, financial LLMs, knowledge-skill level

💡 Category: AI in Finance

🌟 Research Objective:

– The paper introduces FinCDM, a cognitive diagnosis framework, aiming to accurately evaluate financial Large Language Models (LLMs) at the knowledge-skill level, revealing hidden knowledge gaps.

🛠️ Research Methods:

– A new financial evaluation dataset, CPA-QKA, is constructed from the Certified Public Accountant examination, enabling detailed assessment through skill-tagged tasks and fine-grained knowledge labels validated by domain experts.
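Cognitive diagnosis starts from a mapping of questions to the skills or knowledge points they test (often called a Q-matrix), not from one aggregate score. The sketch below is a simple stand-in, not FinCDM's actual diagnosis model. It assumes a hypothetical binary Q-matrix and estimates per-skill mastery as the fraction of correct answers among the questions tagged with each skill.

```python
import numpy as np

def skill_mastery(responses: np.ndarray, q_matrix: np.ndarray) -> np.ndarray:
    """Estimate per-skill mastery from question-level correctness.

    responses: shape (n_questions,), 1 if the model answered correctly, else 0.
    q_matrix:  shape (n_questions, n_skills), 1 if a question tests a skill.
    Returns a mastery score in [0, 1] per skill (NaN for skills with no questions).
    """
    correct_per_skill = responses @ q_matrix      # correct answers touching each skill
    questions_per_skill = q_matrix.sum(axis=0)    # number of questions testing each skill
    with np.errstate(invalid="ignore", divide="ignore"):
        return correct_per_skill / questions_per_skill

# Hypothetical example: 4 questions tagged with 3 skills (e.g. tax, audit, regulation).
q = np.array([[1, 0, 0],
              [1, 1, 0],
              [0, 1, 0],
              [0, 0, 1]])
r = np.array([1, 0, 1, 0])
print(skill_mastery(r, q))
```

Here the model answers half of the questions correctly overall, but its mastery vector shows a complete gap on the third skill. A skill-level diagnosis is meant to surface exactly this kind of gap, which an aggregate score hides.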

💬 Research Conclusions:

– FinCDM provides a novel paradigm for evaluating financial LLMs, uncovering knowledge gaps, under-tested areas like tax and regulatory reasoning, and behavioral clusters, promoting more targeted and trustworthy model development.

👉 Paper link: https://huggingface.co/papers/2508.13491

2. DuPO: Enabling Reliable LLM Self-Verification via Dual Preference Optimization

🔑 Keywords: Dual Learning, Annotation-Free, Reinforcement Learning, LLMs, Self-Supervised Reward

💡 Category: Natural Language Processing

🌟 Research Objective:

– The research introduces DuPO, a dual learning-based preference optimization framework that optimizes LLMs without costly labels by exploiting a generalized duality between a primal task and its dual.

🛠️ Research Methods:

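– DuPO decomposes the input of a primal task into known and unknown components and constructs a dual task that reconstructs the unknown component from the primal output and the known information. The reconstruction quality serves as a self-supervised reward, which extends dual learning to non-invertible tasks, and a single LLM can instantiate both the primal and the dual task.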
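The reward idea can be illustrated with a toy primal task. In this hypothetical sketch the primal task is addition, the input is split into a known part `a` and a hidden part `b`, and a hand-written dual step tries to recover `b` from the primal output. In DuPO both tasks are instantiated by the LLM and the reward reflects reconstruction quality; here the dual is plain subtraction and the reward is binary, purely for illustration.

```python
from typing import Callable

def dual_reward(a: int, b: int, primal: Callable[[int, int], int]) -> float:
    """Self-supervised reward for a toy primal task (addition).

    Known input component: a. Unknown (hidden) component: b.
    The dual task reconstructs b from the primal output and a;
    the reward is 1.0 if the reconstruction recovers b, else 0.0.
    No gold label for a + b is ever consulted.
    """
    c = primal(a, b)          # primal output, possibly wrong
    b_reconstructed = c - a   # dual task: recover the hidden input component
    return 1.0 if b_reconstructed == b else 0.0

correct_model = lambda a, b: a + b
buggy_model = lambda a, b: a + b + 1   # hypothetical off-by-one "model"

print(dual_reward(3, 4, correct_model))  # 1.0
print(dual_reward(3, 4, buggy_model))    # 0.0
```

The reward is computed only from the model's own output and the original input, which is what makes this style of optimization annotation-free.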

💬 Research Conclusions:

– DuPO achieves significant improvements: a 2.13-point COMET gain in translation quality across 756 translation directions, a 6.4-point gain in mathematical reasoning accuracy, and a 9.3-point gain when used as an inference-time reranker. These results position it as a scalable, annotation-free paradigm for LLM optimization.

👉 Paper link: https://huggingface.co/papers/2508.14460

3. FutureX: An Advanced Live Benchmark for LLM Agents in Future Prediction

🔑 Keywords: Future prediction, LLM agents, real-time updates, data contamination, reasoning

💡 Category: Natural Language Processing

🌟 Research Objective:

– Introduce FutureX, a dynamic, live benchmark for evaluating LLM agents on future prediction tasks that supports real-time updates and avoids data contamination.

🛠️ Research Methods:

– Evaluate 25 LLM/agent models, including models with reasoning capabilities and agents integrated with external tools, on adaptive reasoning and prediction performance.

💬 Research Conclusions:

– Establish a contamination-free evaluation standard and provide insights into agents’ failure modes, performance pitfalls, and vulnerability to fake web pages, with the aim of raising LLM agents’ complex reasoning to the level of professional human analysts.

👉 Paper link: https://huggingface.co/papers/2508.11987

4. MeshCoder: LLM-Powered Structured Mesh Code Generation from Point Clouds

🔑 Keywords: MeshCoder, Blender Python scripts, point clouds, 3D shape understanding, multimodal large language model

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Introduce MeshCoder, a framework that reconstructs complex 3D objects from point clouds as editable Blender Python scripts.

🛠️ Research Methods:

– Developed expressive Blender Python APIs and constructed a large-scale paired object-code dataset.

– Trained a multimodal large language model to translate 3D point clouds into executable scripts.

💬 Research Conclusions:

– MeshCoder achieves superior performance in shape-to-code reconstruction, enabling intuitive geometric and topological editing.

– The code-based representation also enhances the reasoning capabilities of LLMs in 3D shape understanding tasks.

👉 Paper link: https://huggingface.co/papers/2508.14879

5. Tinker: Diffusion’s Gift to 3D–Multi-View Consistent Editing From Sparse Inputs without Per-Scene Optimization

🔑 Keywords: Diffusion models, 3D editing, Multi-view consistency, Zero-shot 3D editing, Scene completion

💡 Category: Generative Models

🌟 Research Objective:

– Create Tinker, a framework for high-fidelity 3D editing that requires no per-scene optimization and achieves multi-view consistency from as few as one or two input images.

🛠️ Research Methods:

– Utilization of pretrained diffusion models to harness their latent 3D awareness.

– Development of a large-scale multi-view editing dataset and data pipeline.

– Integration of two novel components: a Referring multi-view editor, and an Any-view-to-video synthesizer.

💬 Research Conclusions:

– Tinker reduces the barrier to generalizable 3D content creation.

– It achieves state-of-the-art performance in editing, novel-view synthesis, and rendering enhancement tasks.

– Represents a step towards scalable, zero-shot 3D editing.

👉 Paper link: https://huggingface.co/papers/2508.14811

6. From AI for Science to Agentic Science: A Survey on Autonomous Scientific Discovery

🔑 Keywords: AI for Science, large language models, multimodal systems, Agentic Science, autonomous scientific discovery

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Position Agentic Science as a pivotal stage in AI-driven scientific discovery, unifying fragmented perspectives and enhancing scientific agency across multiple domains.

🛠️ Research Methods:

– Utilize a comprehensive framework to trace AI evolution in scientific discovery, model a dynamic workflow, and review domain-specific applications.

💬 Research Conclusions:

– Establishes Agentic Science as a structured paradigm for AI-driven research, highlighting core capabilities, dynamic workflows, and future opportunities for autonomous scientific discovery.

👉 Paper link: https://huggingface.co/papers/2508.14111

7. MCP-Universe: Benchmarking Large Language Models with Real-World Model Context Protocol Servers

🔑 Keywords: MCP-Universe, large language models, long-horizon reasoning, unknown-tools challenge

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study introduces MCP-Universe, a comprehensive benchmark that evaluates large language models on realistic, challenging tasks requiring interaction with real-world MCP servers.

🛠️ Research Methods:

– The benchmark spans six core domains across eleven MCP servers and uses execution-based evaluators of three kinds: format evaluators for agent format compliance, static evaluators for time-invariant content matching, and dynamic evaluators that retrieve real-time ground truth for time-sensitive tasks.
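The three evaluator kinds can be pictured as small checks on an agent's final answer. This is a minimal sketch under stated assumptions: the function names, the JSON answer format, and the `fetch_truth` callable are hypothetical and do not reflect MCP-Universe's actual interfaces. The callable stands in for the real-time data retrieval a dynamic evaluator would perform.

```python
import json
from typing import Callable, Optional

def _parse(output: str) -> Optional[dict]:
    """Return the parsed JSON object, or None if the output is not a JSON object."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None

def format_evaluator(output: str, required_keys: set) -> bool:
    """Format check: the final answer must be a JSON object containing the required keys."""
    parsed = _parse(output)
    return parsed is not None and required_keys.issubset(parsed.keys())

def static_evaluator(output: str, key: str, expected: object) -> bool:
    """Static check: compare one field against a fixed, time-invariant answer."""
    parsed = _parse(output)
    return parsed is not None and parsed.get(key) == expected

def dynamic_evaluator(output: str, key: str, fetch_truth: Callable[[], object]) -> bool:
    """Dynamic check: compare one field against ground truth fetched at evaluation time."""
    parsed = _parse(output)
    return parsed is not None and parsed.get(key) == fetch_truth()

# Hypothetical agent answer; the lambdas stand in for live data lookups.
answer = '{"city": "Paris", "temperature_c": 18}'
print(format_evaluator(answer, {"city", "temperature_c"}))    # True
print(static_evaluator(answer, "city", "Paris"))               # True
print(dynamic_evaluator(answer, "temperature_c", lambda: 21))  # False
```

The dynamic check fails here because the "live" value differs from the one the agent reported, which is why time-sensitive tasks cannot be graded against a frozen answer key.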

💬 Research Conclusions:

– Even leading models such as GPT-5 and Grok-4 show clear performance limitations. The benchmark highlights challenges in long-horizon reasoning, handling long-context inputs, and navigating unknown tool spaces.

– Enterprise-level agents perform no better than standard agent frameworks. An open-source, extensible evaluation framework is released to support further innovation in the MCP ecosystem.

👉 Paper link: https://huggingface.co/papers/2508.14704

8. Quantization Meets dLLMs: A Systematic Study of Post-training Quantization for Diffusion LLMs

🔑 Keywords: dLLMs, post-training quantization, activation outliers, low-bit quantization

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study systematically explores the challenges of quantizing diffusion large language models (dLLMs) and evaluates state-of-the-art methods for doing so, with the goal of enabling their deployment on edge devices.

🛠️ Research Methods:

– The authors implement state-of-the-art post-training quantization methods and evaluate them along four dimensions: bit-width, quantization method, task category, and model type.

💬 Research Conclusions:

– The study identifies activation outliers, i.e. a few abnormally large activation values that dominate the dynamic range, as a key obstacle to low-bit quantization because they make it hard to preserve precision for the remaining values. The multi-perspective evaluation offers a foundation for future research on efficient dLLM deployment.
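Why a single outlier hurts can be seen with generic per-tensor symmetric quantization. This is a minimal sketch on synthetic data, not any of the specific methods evaluated in the paper: the largest magnitude sets the quantization step, so one large value makes the step so coarse that most ordinary activations round to zero.

```python
import numpy as np

def quantize_symmetric(x: np.ndarray, bits: int) -> np.ndarray:
    """Per-tensor symmetric uniform quantization followed by dequantization."""
    qmax = 2 ** (bits - 1) - 1                 # e.g. 7 for 4-bit signed integers
    scale = np.abs(x).max() / qmax             # the largest magnitude sets the step size
    q = np.clip(np.round(x / scale), -qmax, qmax)
    return q * scale

rng = np.random.default_rng(0)
acts = rng.normal(0.0, 1.0, size=4096)        # synthetic, well-behaved activations
acts_outlier = acts.copy()
acts_outlier[0] = 100.0                       # inject a single large outlier

for name, a in [("no outlier", acts), ("with outlier", acts_outlier)]:
    err = np.mean((quantize_symmetric(a, bits=4) - a) ** 2)
    print(f"{name}: 4-bit MSE = {err:.4f}")
```

With the outlier present, the 4-bit error grows by more than an order of magnitude. Outlier-aware techniques such as per-channel scales, clipping, or rotations target exactly this effect.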

👉 Paper link: https://huggingface.co/papers/2508.14896

9. NVIDIA Nemotron Nano 2: An Accurate and Efficient Hybrid Mamba-Transformer Reasoning Model

🔑 Keywords: Nemotron-Nano-9B-v2, Hybrid Mamba-Transformer, self-attention layers, Minitron strategy, inference throughput

💡 Category: Natural Language Processing

🌟 Research Objective:

– Develop Nemotron-Nano-9B-v2, a hybrid Mamba-Transformer model designed to increase throughput on reasoning workloads while achieving state-of-the-art accuracy for its size.

🛠️ Research Methods:

– The model replaces the majority of the self-attention layers of a standard Transformer with Mamba-2 layers to speed up inference when generating long reasoning traces, and uses the Minitron strategy to compress and distill the model.
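Mamba-2 layers are state-space layers. Their recurrent form carries a fixed-size state from token to token, whereas self-attention keeps a key-value cache that grows with sequence length. The sketch below is a simplified single-channel, scalar-decay recurrence in the spirit of Mamba-2's formulation. It omits gating, convolutions, multi-head structure, and discretization, and is not NVIDIA's implementation. It also checks that the recurrence equals an explicit causal T x T mixing matrix, the attention-like view of the same computation.

```python
import numpy as np

def ssm_recurrent(x: np.ndarray, a: np.ndarray, B: np.ndarray, C: np.ndarray) -> np.ndarray:
    """Scalar-decay state-space recurrence (simplified, single channel).

    h_t = a_t * h_{t-1} + B_t * x_t   (state h_t has fixed size d_state)
    y_t = C_t . h_t
    x: (T,) inputs, a: (T,) decays in (0, 1), B, C: (T, d_state) input-dependent projections.
    """
    h = np.zeros(B.shape[1])
    ys = []
    for t in range(len(x)):
        h = a[t] * h + B[t] * x[t]   # memory stays O(d_state) no matter how large t gets
        ys.append(C[t] @ h)
    return np.array(ys)

def ssm_quadratic(x: np.ndarray, a: np.ndarray, B: np.ndarray, C: np.ndarray) -> np.ndarray:
    """Same outputs via an explicit causal T x T mixing matrix (attention-like view)."""
    T = len(x)
    M = np.zeros((T, T))
    for t in range(T):
        for s in range(t + 1):
            decay = np.prod(a[s + 1 : t + 1])   # product of decays between s and t (1 if s == t)
            M[t, s] = (C[t] @ B[s]) * decay
    return M @ x

rng = np.random.default_rng(0)
T, d_state = 6, 4
x = rng.normal(size=T)
a = rng.uniform(0.5, 1.0, size=T)
B, C = rng.normal(size=(T, d_state)), rng.normal(size=(T, d_state))
print(np.allclose(ssm_recurrent(x, a, B, C), ssm_quadratic(x, a, B, C)))  # True
```

During generation only the recurrent form is needed, so per-token cost and memory stay constant. This is the property that makes such layers attractive for long reasoning traces.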

💬 Research Conclusions:

– Nemotron-Nano-9B-v2 achieves accuracy on par with or better than similarly sized models such as Qwen3-8B on reasoning benchmarks, with up to 6x higher inference throughput. The checkpoints and datasets are publicly released on Hugging Face.

👉 Paper link: https://huggingface.co/papers/2508.14444

10. RynnEC: Bringing MLLMs into Embodied World

🔑 Keywords: RynnEC, Region-Centric, Embodied Cognition, Object Segmentation, Spatial Reasoning

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introduce RynnEC, a video multimodal large language model designed for enhancing embodied cognition through a region-centric approach.

🛠️ Research Methods:

– RynnEC combines a region encoder and a mask decoder to enable flexible region-level video interaction, and an egocentric video pipeline is used to generate embodied cognition data.
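The details of RynnEC's region encoder are not given here. As an assumption, one common baseline for turning a region mask into a single "region token" is masked average pooling over a frame's feature map. The sketch below shows that baseline on one frame with hypothetical shapes; it should not be read as RynnEC's architecture.

```python
import numpy as np

def mask_pool(features: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Average an (H, W, C) feature map over the pixels selected by an (H, W) binary mask.

    Returns a single C-dimensional region embedding (one "region token").
    """
    weights = mask.astype(features.dtype)
    area = weights.sum()
    if area == 0:
        raise ValueError("mask selects no pixels")
    return (features * weights[..., None]).sum(axis=(0, 1)) / area

# Hypothetical 2x2 feature map with 3 channels and a diagonal region mask.
features = np.arange(2 * 2 * 3, dtype=float).reshape(2, 2, 3)
mask = np.array([[1, 0],
                 [0, 1]])
print(mask_pool(features, mask))
```

For video, the same pooling could be applied per frame and the resulting tokens passed to the language model alongside the text prompt.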

💬 Research Conclusions:

– RynnEC demonstrates state-of-the-art performance in object property understanding, segmentation, and spatial reasoning, offering a new paradigm for precise interaction in embodied agents.

– RynnEC-Bench, a new region-centric benchmark, is introduced for evaluating embodied cognitive capabilities.

👉 Paper link: https://huggingface.co/papers/2508.14160

11. Virtuous Machines: Towards Artificial General Science

🔑 Keywords: Domain-Agnostic AI, Agentic AI, Hypothesis Generation, Methodological Rigour, Real-World Experiments

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Demonstration of a domain-agnostic AI system capable of autonomously designing, executing, and analyzing psychological studies with methodological rigor comparable to human researchers.

🛠️ Research Methods:

– The AI system independently executed three psychological studies, conducted online data collection with 288 participants, and developed analysis pipelines through extended continuous coding sessions.

💬 Research Conclusions:

– The AI system showcased its capability to conduct non-trivial research tasks comparable to experienced researchers, though it faces limitations in conceptual nuance and theoretical interpretation. This work represents progress towards AI systems that autonomously explore uncharted scientific areas, raising questions about scientific understanding and credit attribution.

👉 Paper link: https://huggingface.co/papers/2508.13421

12. On-Policy RL Meets Off-Policy Experts: Harmonizing Supervised Fine-Tuning and Reinforcement Learning via Dynamic Weighting

🔑 Keywords: CHORD, Supervised Fine-Tuning, Reinforcement Learning, Large Language Models, Dynamic Weighting

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The primary objective of this study is to integrate Supervised Fine-Tuning and Reinforcement Learning using a novel framework, CHORD, that dynamically weights off-policy and on-policy data to enhance model stability and performance in Large Language Models.

🛠️ Research Methods:

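– CHORD reframes SFT as a dynamically weighted auxiliary objective within the on-policy RL process. It uses a dual-control mechanism: a global coefficient that governs the overall transition from off-policy imitation to on-policy exploration, and a token-wise weighting function that controls how strongly each expert token is learned.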
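The dual-control idea can be sketched as a mixed loss. In this minimal sketch we assume the token-wise weight has the form p(1 - p), where p is the policy's probability of the expert token, and that the global coefficient `mu` linearly mixes the two objectives. The paper's exact weighting function, loss normalization, and `mu` schedule may differ. The RL loss is taken as a scalar computed elsewhere.

```python
import numpy as np

def token_weight(p: np.ndarray) -> np.ndarray:
    """Assumed token-wise weight phi(p) = p * (1 - p).

    Small for tokens the policy already predicts confidently (p near 1)
    and for tokens it finds very unlikely (p near 0); largest at p = 0.5.
    """
    return p * (1.0 - p)

def chord_style_loss(rl_loss: float, expert_token_probs: np.ndarray, mu: float) -> float:
    """Mix an on-policy RL loss with a token-weighted SFT loss on expert data.

    rl_loss: scalar on-policy objective (e.g. a policy-gradient loss), computed elsewhere.
    expert_token_probs: policy probabilities assigned to each expert-demonstration token.
    mu: global coefficient in [0, 1]; 1 = pure imitation, 0 = pure RL.
    """
    nll = -np.log(expert_token_probs)                            # per-token SFT loss
    sft_loss = np.mean(token_weight(expert_token_probs) * nll)   # down-weight extreme tokens
    return (1.0 - mu) * rl_loss + mu * sft_loss

probs = np.array([0.95, 0.5, 0.05])   # hypothetical expert-token probabilities
for mu in (0.9, 0.5, 0.1):            # hypothetical decaying global coefficient
    print(mu, chord_style_loss(rl_loss=0.3, expert_token_probs=probs, mu=mu))
```

In an autodiff framework the weight would typically be detached from the gradient. Decaying `mu` moves training from imitation toward exploration, while the token weight keeps very unlikely expert tokens from dominating the update.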

💬 Research Conclusions:

– Extensive experiments on widely used benchmarks demonstrate that CHORD achieves stable and efficient learning, effectively harmonizing off-policy expert data with on-policy exploration and leading to significant improvements over baselines.

👉 Paper link: https://huggingface.co/papers/2508.11408

13. FLARE: Fast Low-rank Attention Routing Engine

🔑 Keywords: FLARE, linear complexity, self-attention, neural PDE surrogates, additive manufacturing dataset

💡 Category: Machine Learning

🌟 Research Objective:

– Introduce FLARE, a linear-complexity self-attention mechanism that improves scalability and accuracy on large unstructured meshes and in neural PDE surrogates.

🛠️ Research Methods:

– FLARE routes attention through fixed-length latent sequences of M tokens, with M much smaller than the number of input tokens N. All tokens can therefore exchange information globally at O(NM) cost instead of the O(N²) cost of standard self-attention.
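Routing attention through a short latent sequence can be written as a gather step followed by a scatter step. This is a generic low-rank sketch consistent with that description. The assumptions are single-head attention, random stand-in "learnable" latent queries, and reuse of the token keys in the scatter step; FLARE's exact formulation may differ.

```python
import numpy as np

def softmax(z: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable softmax along one axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def latent_routed_attention(Q_lat: np.ndarray, K: np.ndarray, V: np.ndarray) -> np.ndarray:
    """Global token mixing routed through M latent tokens, with M << N.

    Q_lat: (M, d) latent queries; K, V: (N, d) token keys and values.
    Gather: each latent token attends over all N tokens, giving an (M, d) summary.
    Scatter: each input token attends over the M latent tokens to read the summary back.
    Both steps cost O(N * M * d); no N x N matrix is formed.
    """
    scale = 1.0 / np.sqrt(Q_lat.shape[1])
    gather = softmax(Q_lat @ K.T * scale, axis=1)   # (M, N), each row sums to 1
    Z = gather @ V                                  # (M, d) latent summary
    scatter = softmax(K @ Q_lat.T * scale, axis=1)  # (N, M), each row sums to 1
    return scatter @ Z                              # (N, d)

def implied_mixing_matrix(Q_lat: np.ndarray, K: np.ndarray) -> np.ndarray:
    """The equivalent N x N token-mixing matrix, materialized for illustration only."""
    scale = 1.0 / np.sqrt(Q_lat.shape[1])
    gather = softmax(Q_lat @ K.T * scale, axis=1)
    scatter = softmax(K @ Q_lat.T * scale, axis=1)
    return scatter @ gather                         # rank at most M

rng = np.random.default_rng(0)
N, M, d = 512, 16, 32
K, V = rng.normal(size=(N, d)), rng.normal(size=(N, d))
Q_lat = rng.normal(size=(M, d))
Y = latent_routed_attention(Q_lat, K, V)
print(Y.shape)  # (512, 32)
```

The implied N x N matrix has rank at most M, which is the sense in which this kind of attention is low-rank. Every output token still depends on every input token through the latent bottleneck.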

💬 Research Conclusions:

– FLARE not only scales to larger problem sizes but also achieves superior accuracy compared to state-of-the-art neural PDE surrogates. Additionally, a new additive manufacturing dataset is released to support further research.

👉 Paper link: https://huggingface.co/papers/2508.12594

14. ViExam: Are Vision Language Models Better than Humans on Vietnamese Multimodal Exam Questions?

🔑 Keywords: Vision Language Models, Vietnamese educational assessments, Cross-lingual Multimodal Reasoning, ViExam

💡 Category: AI in Education

🌟 Research Objective:

– The study aims to evaluate the performance of Vision Language Models (VLMs) on Vietnamese educational assessments, focusing on their ability to handle cross-lingual multimodal reasoning.

🛠️ Research Methods:

– A new benchmark named ViExam, consisting of 2,548 multimodal questions across seven academic domains, was used to test VLMs predominantly trained on English data.

💬 Research Conclusions:

– VLMs performed poorly compared to human test-takers on Vietnamese assessments; only the thinking VLM o3 surpassed average human performance. Cross-lingual prompting with English instructions did not improve performance, while human-in-the-loop collaboration yielded a modest improvement.

👉 Paper link: https://huggingface.co/papers/2508.13680

15. mSCoRe: a Multilingual and Scalable Benchmark for Skill-based Commonsense Reasoning

🔑 Keywords: Multilingual Commonsense Reasoning, Large Language Models, Reasoning Skills, Data Synthesis Pipeline

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– To evaluate how large language models apply reasoning skills across languages and cultures, with a focus on nuanced commonsense understanding that existing benchmarks capture poorly.

🛠️ Research Methods:

– The authors introduce mSCoRe, a Multilingual and Scalable Benchmark for Skill-based Commonsense Reasoning built on a taxonomy of reasoning skills, a data synthesis pipeline, and a complexity scaling framework.

💬 Research Conclusions:

– Current models, including reasoning-reinforced ones, struggle significantly as task complexity increases, revealing limitations in multilingual general and cultural commonsense.

👉 Paper link: https://huggingface.co/papers/2508.10137

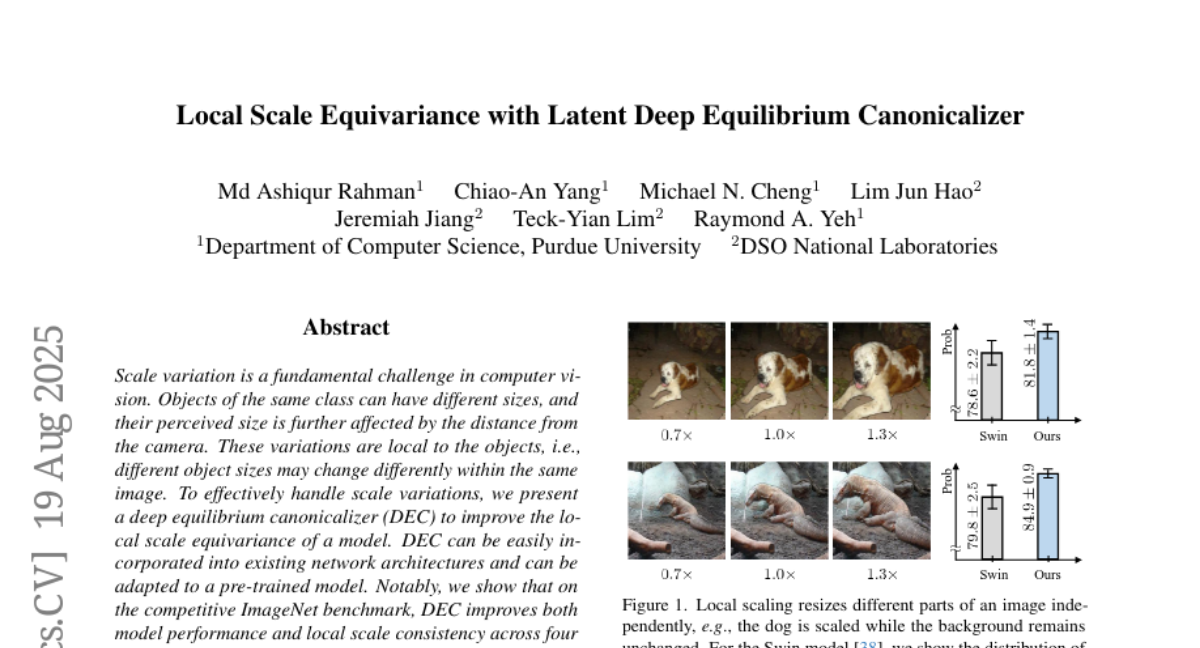

16. Local Scale Equivariance with Latent Deep Equilibrium Canonicalizer

🔑 Keywords: Deep equilibrium canonicalizer, local scale equivariance, ImageNet, ViT, DeiT

💡 Category: Computer Vision

🌟 Research Objective:

– To address scale variation in computer vision, which arises because objects differ in size and in their distance from the camera, by introducing a deep equilibrium canonicalizer (DEC) that improves a model's local scale equivariance.

🛠️ Research Methods:

– DEC can be incorporated into existing network architectures and adapted to pre-trained models. It is evaluated on several deep networks, including ViT, DeiT, Swin, and BEiT.

💬 Research Conclusions:

– DEC improves both model performance and local scale consistency on the ImageNet benchmark.

👉 Paper link: https://huggingface.co/papers/2508.14187

17. Leuvenshtein: Efficient FHE-based Edit Distance Computation with Single Bootstrap per Cell

🔑 Keywords: Fully Homomorphic Encryption, Levenshtein Distance, TFHE, Programmable Bootstraps, Wagner-Fischer Algorithm

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The paper introduces a novel algorithm designed to optimize the computation of edit distance within the framework of Fully Homomorphic Encryption, focusing on third-generation schemes like TFHE.

🛠️ Research Methods:

– The method reduces the number of programmable bootstraps required per cell of the edit-distance matrix to a single one and introduces an efficient way to test characters for equality, significantly lowering computational cost.
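For reference, the plaintext Wagner-Fischer dynamic program below shows the per-cell recurrence that an FHE implementation must evaluate homomorphically: one character-equality test and a three-way minimum for every cell. The sketch does not model TFHE, bootstrapping, or Leuvenshtein's single-bootstrap construction. It only shows the work that has to happen inside each cell.

```python
def levenshtein(a: str, b: str) -> int:
    """Plaintext Wagner-Fischer dynamic program for edit distance.

    Each cell D[i][j] depends on three neighbours and on one character
    equality test a[i-1] == b[j-1]; under FHE both the comparison and
    the minimum would be computed on encrypted values for every cell.
    """
    prev = list(range(len(b) + 1))          # row 0: distance from "" to b[:j]
    for i in range(1, len(a) + 1):
        curr = [i] + [0] * len(b)           # column 0: distance from a[:i] to ""
        for j in range(1, len(b) + 1):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            curr[j] = min(prev[j] + 1,          # deletion
                          curr[j - 1] + 1,      # insertion
                          prev[j - 1] + cost)   # substitution or match
        prev = curr
    return prev[-1]

print(levenshtein("kitten", "sitting"))  # 3
```

Because the matrix has len(a) x len(b) cells, the cost per cell dominates the total. Cutting the number of bootstraps per cell therefore translates almost directly into overall speedups.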

💬 Research Conclusions:

– The optimized algorithm is up to 278 times faster than the best available TFHE implementation and substantially faster than an FHE implementation of the Wagner-Fischer algorithm, with offline preprocessing providing further speedups.

👉 Paper link: https://huggingface.co/papers/2508.14568

18. Refining Contrastive Learning and Homography Relations for Multi-Modal Recommendation

🔑 Keywords: Multi-modal recommender system, Contrastive learning, Homography, Graph neural networks, Meta-network

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The objective of the study is to enhance multi-modal recommender systems by refining contrastive learning and homography relations to improve feature representation and user-item interaction mining.

🛠️ Research Methods:

– The study employs meta-network and orthogonal constraint strategies to filter out noise in modal-shared features and retain relevant information in modal-unique features. Additionally, it integrates user interest and item co-occurrence graphs with existing graphs for effective graph learning.
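An item co-occurrence graph is commonly built from the user-item interaction matrix. This is a minimal sketch under the assumption of a simple top-k rule on raw co-interaction counts. REARM's actual construction (normalization, thresholds, and how it is combined with the user interest graph) may differ.

```python
import numpy as np

def item_cooccurrence_graph(R: np.ndarray, k: int) -> np.ndarray:
    """Build a sparse item-item graph from a binary user-item interaction matrix.

    R: (n_users, n_items), R[u, i] = 1 if user u interacted with item i.
    Returns an (n_items, n_items) 0/1 adjacency that keeps, for each item,
    edges to its k most frequently co-interacted items.
    """
    C = R.T @ R                      # C[i, j] = number of users who interacted with both i and j
    np.fill_diagonal(C, 0)           # ignore self co-occurrence
    A = np.zeros_like(C)
    for i in range(C.shape[0]):
        top = np.argsort(-C[i], kind="stable")[:k]   # k strongest co-occurrences
        top = top[C[i, top] > 0]                      # drop items never co-interacted with
        A[i, top] = 1
    return A

# Hypothetical interactions: 3 users, 4 items.
R = np.array([[1, 1, 0, 0],
              [1, 1, 1, 0],
              [0, 0, 1, 1]])
print(item_cooccurrence_graph(R, k=1))
```

The resulting graph is directed because each item keeps its own top-k neighbours. It can be symmetrized before being merged with an existing user-item graph for message passing.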

💬 Research Conclusions:

– Extensive experiments on three real-world datasets show that the proposed framework, REARM, outperforms various state-of-the-art baselines. Visualizations indicate that REARM better distinguishes modal-shared from modal-unique features.

👉 Paper link: https://huggingface.co/papers/2508.13745

19.