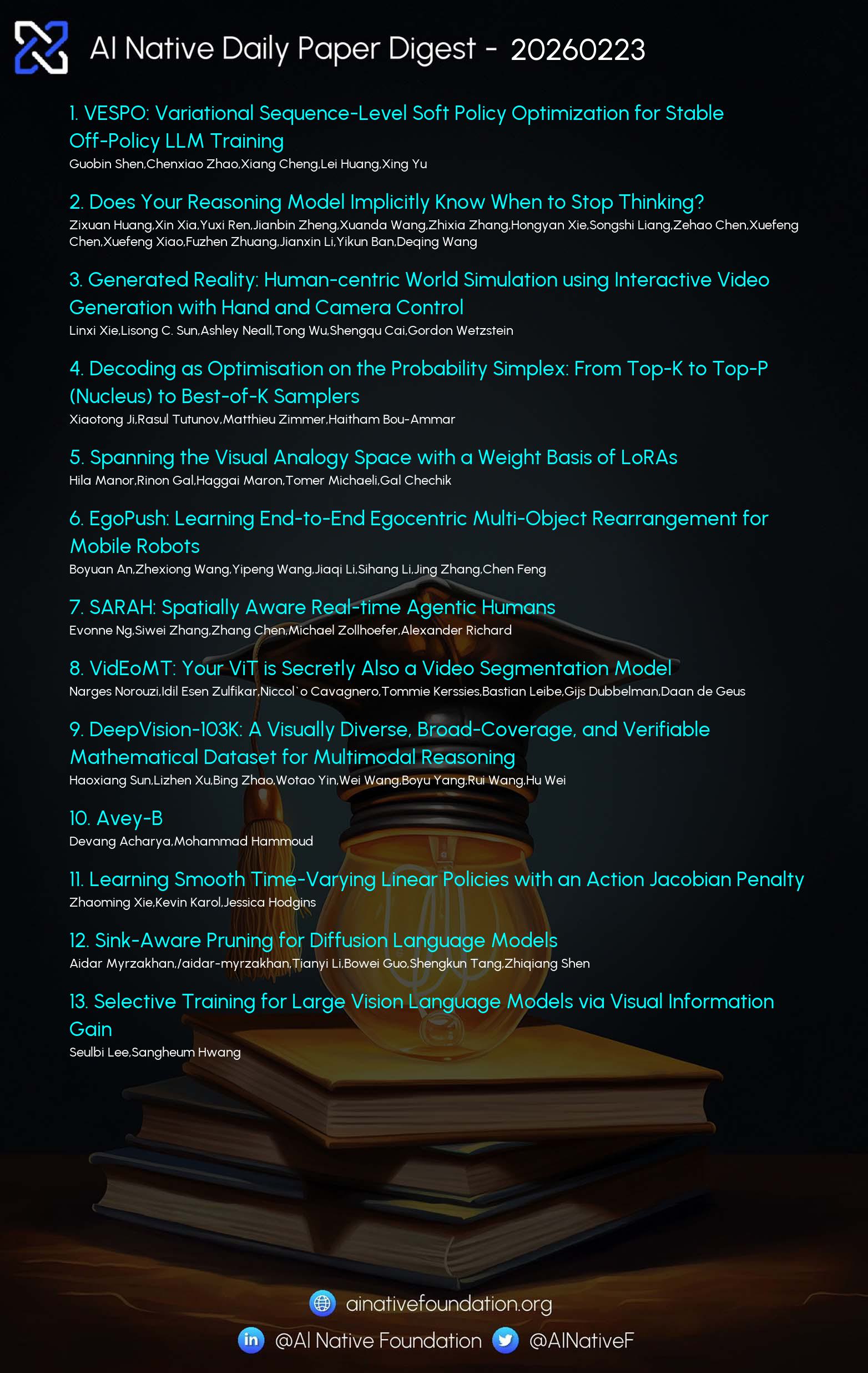

AI Native Daily Paper Digest – 20260223

1. VESPO: Variational Sequence-Level Soft Policy Optimization for Stable Off-Policy LLM Training

🔑 Keywords: Variational sEquence-level Soft Policy Optimization (VESPO), Variance reduction, Reinforcement learning, Large language models, Asynchronous training

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The paper aims to address training instability in reinforcement learning for large language models by correcting policy divergence without length normalization through the application of VESPO.

🛠️ Research Methods:

– The authors employ a variational formulation with variance reduction to derive a reshaping kernel that directly operates on sequence-level importance weights. This method provides a principled correction for distribution shifts during training.

💬 Research Conclusions:

– VESPO is demonstrated to maintain stable training under staleness ratios up to 64x and fully asynchronous execution. It also yields consistent performance gains across different model architectures, such as dense and Mixture-of-Experts models.

👉 Paper link: https://huggingface.co/papers/2602.10693

2. Generated Reality: Human-centric World Simulation using Interactive Video Generation with Hand and Camera Control

🔑 Keywords: human-centric video world model, head and hand poses, bidirectional video diffusion model, egocentric virtual environments

💡 Category: Human-AI Interaction

🌟 Research Objective:

– The primary goal was to develop a human-centric video world model that enhances dexterous interactions by leveraging tracked head and hand poses to generate egocentric virtual environments.

🛠️ Research Methods:

– Evaluation of existing diffusion transformer conditioning strategies to propose an effective mechanism for 3D control, followed by training a bidirectional video diffusion model to aid in interaction within virtual environments.

💬 Research Conclusions:

– The study demonstrated improved task performance and a higher level of perceived control over actions compared to existing baselines, verified through evaluations with human subjects.

👉 Paper link: https://huggingface.co/papers/2602.18422

3. Spanning the Visual Analogy Space with a Weight Basis of LoRAs

🔑 Keywords: Visual analogy learning, LoRA, dynamic composition, transformation primitives, semantic spaces

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce a novel approach called LoRWeB for enhancing image manipulation through visual analogy learning.

🛠️ Research Methods:

– Implement a learnable basis of LoRA modules to cover diverse visual transformations.

– Use a lightweight encoder to dynamically select and weigh these LoRA modules based on input analogy pair.

💬 Research Conclusions:

– Achieves state-of-the-art performance in visual transformation tasks.

– Demonstrates improved generalization to previously unseen visual transformations.

– Suggests that LoRA basis decompositions provide a promising avenue for flexible visual manipulation.

👉 Paper link: https://huggingface.co/papers/2602.15727

4. DeepVision-103K: A Visually Diverse, Broad-Coverage, and Verifiable Mathematical Dataset for Multimodal Reasoning

🔑 Keywords: DeepVision-103K, Multimodal Models, Reinforcement Learning, Visual Elements, Mathematical Content

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The research introduces the DeepVision-103K dataset to enhance the reasoning abilities of large multimodal models by incorporating diverse mathematical content and visual elements.

🛠️ Research Methods:

– Development of a large-scale, diverse dataset to train models in Reinforcement Learning with Verifiable Rewards, focusing on multimodal reasoning across various educational topics.

💬 Research Conclusions:

– Models trained on DeepVision-103K display enhanced visual perception and reasoning capabilities, demonstrating improved performance on multimodal mathematical benchmarks and effective generalization to broader reasoning tasks.

👉 Paper link: https://huggingface.co/papers/2602.16742

5. SARAH: Spatially Aware Real-time Agentic Humans

🔑 Keywords: causal transformer, spatially-aware, conversational motion, virtual reality, flow matching

💡 Category: Human-AI Interaction

🌟 Research Objective:

– To develop a real-time, spatially-aware conversational motion method for embodied agents in virtual reality applications.

🛠️ Research Methods:

– The approach combines a causal transformer-based variational autoencoder with interleaved latent tokens for streaming inference and a flow matching model conditioned on user trajectory and audio.

💬 Research Conclusions:

– The method achieves state-of-the-art motion quality at over 300 FPS, significantly faster than non-causal baselines, and is validated for real-time deployment in VR systems.

👉 Paper link: https://huggingface.co/papers/2602.18432

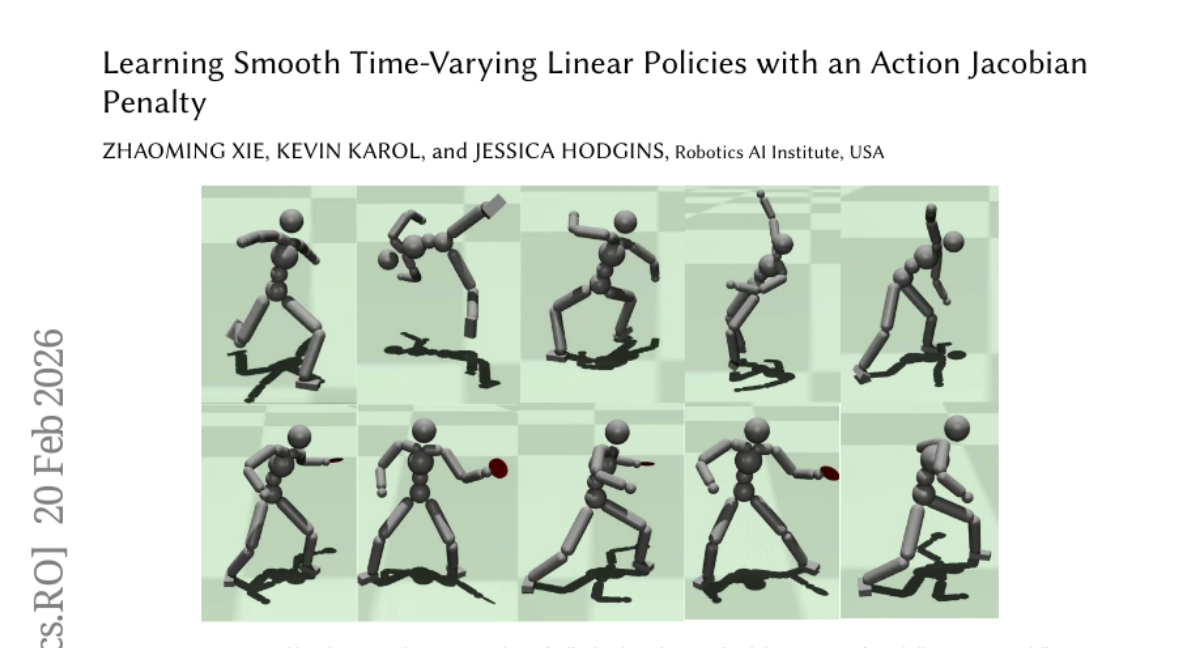

6. Learning Smooth Time-Varying Linear Policies with an Action Jacobian Penalty

🔑 Keywords: Reinforcement Learning, action Jacobian penalty, Linear Policy Net, motion imitation, quadrupedal robot

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Improve reinforcement learning policies for motion imitation by eliminating unrealistic high-frequency signals using an action Jacobian penalty and introduce a new Linear Policy Net architecture.

🛠️ Research Methods:

– Utilize auto differentiation to directly apply action Jacobian penalty without task-specific tuning and develop a Linear Policy Net architecture to reduce computational burden.

💬 Research Conclusions:

– The proposed approach effectively generates smooth control signals, enhances learning convergence, and successfully applies to dynamic motion tasks on simulated characters and physical robots, including backflips and parkour skills.

👉 Paper link: https://huggingface.co/papers/2602.18312

7. Selective Training for Large Vision Language Models via Visual Information Gain

🔑 Keywords: Visual Information Gain, language bias, selective training, visual grounding, perplexity-based metric

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To introduce Visual Information Gain (VIG) as a metric for measuring the contribution of visual input to prediction uncertainty, aiming to improve visual grounding and reduce language bias in large vision-language models.

🛠️ Research Methods:

– Development of a perplexity-based metric (VIG) for fine-grained analysis at sample and token levels.

– Implementation of a VIG-guided selective training scheme focusing on high-VIG samples and tokens.

💬 Research Conclusions:

– The proposed VIG-guided selective training enhances visual grounding and mitigates language bias, leading to superior performance with reduced supervision by concentrating on visually informative data.

👉 Paper link: https://huggingface.co/papers/2602.17186

8. Rubrics as an Attack Surface: Stealthy Preference Drift in LLM Judges

🔑 Keywords: LLM-based judges, Rubric-Induced Preference Drift, alignment pipelines, preference attacks, model behavior

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to identify and analyze the vulnerability called Rubric-Induced Preference Drift (RIPD) in evaluation and alignment pipelines for large language models, highlighting the manipulation potential of natural-language rubrics.

🛠️ Research Methods:

– The research investigates the effects of rubric modifications on LLM-based judges by validating them on benchmarks, identifying shifts in preferences, and examining the propagation of bias through alignment pipelines.

💬 Research Conclusions:

– The findings reveal that natural-language rubrics serve as a manipulable control interface, with edits leading to significant preference drifts and reduced accuracy. This manipulation risk highlights a critical system-level alignment issue extending beyond evaluator reliability.

👉 Paper link: https://huggingface.co/papers/2602.13576

9. ReIn: Conversational Error Recovery with Reasoning Inception

🔑 Keywords: Conversational agents, large language models, tool integration, error recovery, Reasoning Inception

💡 Category: Natural Language Processing

🌟 Research Objective:

– This study aims to improve error recovery in conversational agents without modifying model parameters or prompts by using a method called Reasoning Inception (ReIn).

🛠️ Research Methods:

– The research explores test-time intervention via an external inception module to identify and address predefined errors within the dialogue context, integrating recovery plans into the agent’s reasoning process.

💬 Research Conclusions:

– ReIn demonstrates substantial improvements in task success across various scenarios and agent models, outperforming prompt-modification approaches, thus enhancing the resilience of conversational agents to user-induced errors.

👉 Paper link: https://huggingface.co/papers/2602.17022

10.

11. 4RC: 4D Reconstruction via Conditional Querying Anytime and Anywhere

🔑 Keywords: 4D reconstruction, monocular videos, motion dynamics, transformer backbone, spatio-temporal latent space

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce 4RC, a feed-forward framework designed for 4D reconstruction from monocular videos that effectively integrates scene geometry and motion dynamics.

🛠️ Research Methods:

– Utilize a transformer-based encoder-decoder architecture with conditional querying, enabling an encode-once, query-anytime paradigm, and decomposing 4D attributes into base geometry and time-dependent relative motion.

💬 Research Conclusions:

– 4RC surpasses previous and concurrent methods in various 4D reconstruction tasks, proving its effectiveness in learning holistic 4D representations.

👉 Paper link: https://huggingface.co/papers/2602.10094

12. Whom to Query for What: Adaptive Group Elicitation via Multi-Turn LLM Interactions

🔑 Keywords: Adaptive group elicitation, Large language models, Graph neural networks, Respondent selection, Information gain

💡 Category: Natural Language Processing

🌟 Research Objective:

– To improve population-level predictions under budget constraints by combining LLM-based information gain scoring with graph neural networks.

🛠️ Research Methods:

– Developed a theoretical framework that uses LLM for expected information gain to score questions and a heterogeneous graph neural network for propagating responses, allowing adaptation of both questions and respondents in a multi-round setting.

💬 Research Conclusions:

– The proposed method enhances population-level response prediction with limited budget resources, demonstrating over 12% improvement on CES data with a 10% respondent budget.

👉 Paper link: https://huggingface.co/papers/2602.14279

13. Adam Improves Muon: Adaptive Moment Estimation with Orthogonalized Momentum

🔑 Keywords: orthogonalized momentum, norm-based noise adaptation, NAMO, convergence rates, GPT-2

💡 Category: Machine Learning

🌟 Research Objective:

– Introduce a new class of optimizers, NAMO and NAMO-D, that improves convergence rates and performance in training large language models by integrating orthogonalized momentum with norm-based Adam-type noise adaptation.

🛠️ Research Methods:

– Employing a single adaptive stepsize to scale orthogonalized momentum while preserving orthogonality and introducing a diagonal extension, NAMO-D, to enable neuron-wise noise adaptation.

💬 Research Conclusions:

– Both NAMO and NAMO-D demonstrated improved performance over AdamW and Muon in pretraining GPT-2 models, with NAMO-D achieving further gains through an additional clamping hyperparameter.

👉 Paper link: https://huggingface.co/papers/2602.17080

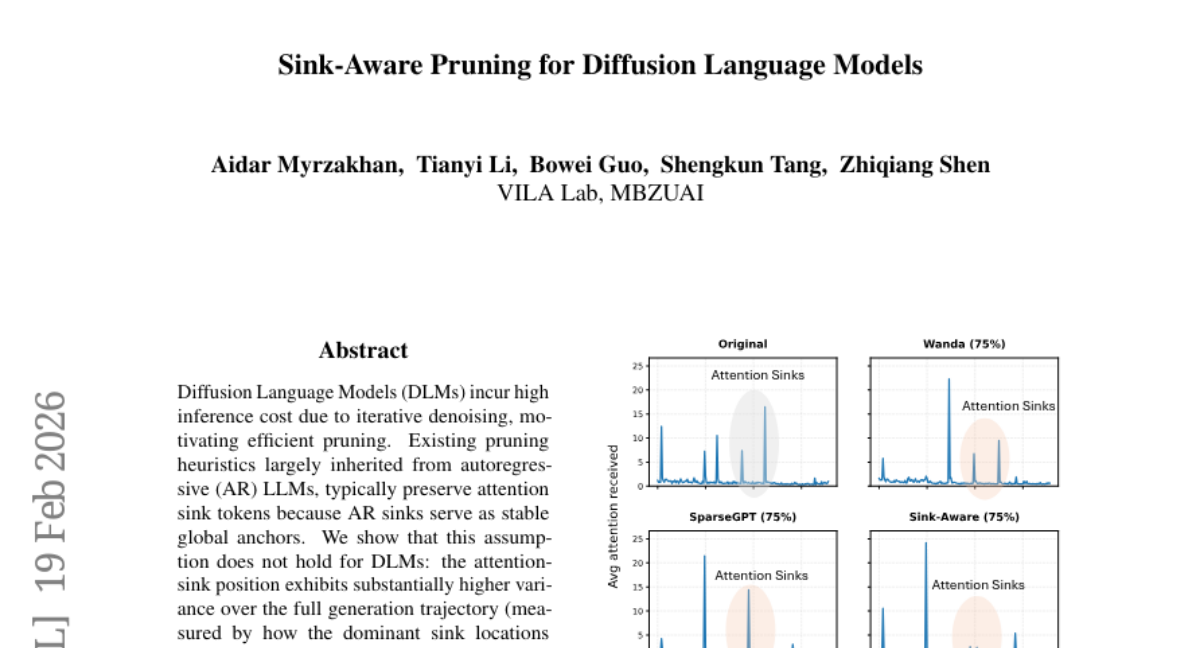

14. Sink-Aware Pruning for Diffusion Language Models

🔑 Keywords: Diffusion Language Models, Iterative Denoising, Pruning, Sink-Aware Pruning, Quality-Efficiency Trade-off

💡 Category: Generative Models

🌟 Research Objective:

– Address the high inference costs in Diffusion Language Models by developing Sink-Aware Pruning that identifies and removes unstable attention sinks.

🛠️ Research Methods:

– Analyze the variance and transient nature of attention-sink positions in Diffusion Language Models to propose an automatic pruning method without retraining.

💬 Research Conclusions:

– Sink-Aware Pruning achieves a superior quality-efficiency trade-off and outperforms existing pruning strategies for Diffusion Language Models without the need for retraining.

👉 Paper link: https://huggingface.co/papers/2602.17664

15. Avey-B

🔑 Keywords: Compact pretrained bidirectional encoders, Avey architecture, Encoder-only adaptation, Neural compression

💡 Category: Natural Language Processing

🌟 Research Objective:

– Reformulate Avey architecture for an encoder-only paradigm and introduce innovations for better performance compared to Transformer-based models.

🛠️ Research Methods:

– Implement decoupled static and dynamic parameterizations, stability-oriented normalization, and neural compression to enhance the Avey architecture.

💬 Research Conclusions:

– The reformulated Avey architecture outperforms Transformer-based models in token classification and information retrieval tasks, while also scaling more efficiently to long contexts.

👉 Paper link: https://huggingface.co/papers/2602.15814

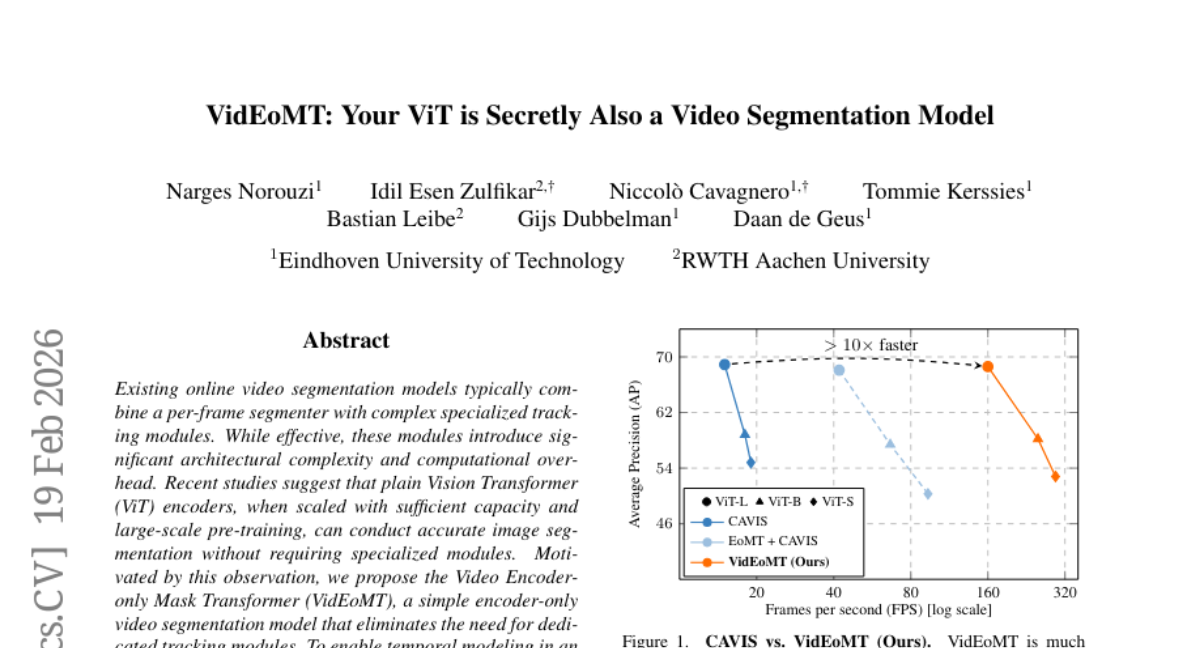

16. VidEoMT: Your ViT is Secretly Also a Video Segmentation Model

🔑 Keywords: Vision Transformer, Encoder-only, Query Propagation, Video Segmentation, Mask Transformer

💡 Category: Computer Vision

🌟 Research Objective:

– Propose a video segmentation model that eliminates the need for specialized tracking modules using a Vision Transformer encoder with query propagation and fusion mechanisms.

🛠️ Research Methods:

– Utilize a Vision Transformer encoder-only approach with lightweight query propagation to carry information across frames and a query fusion strategy to combine propagated queries with temporally-agnostic learned queries.

💬 Research Conclusions:

– VidEoMT achieves competitive accuracy without added complexity, running 5x–10x faster than existing models and reaching up to 160 FPS with a ViT-L backbone.

👉 Paper link: https://huggingface.co/papers/2602.17807

17. EgoPush: Learning End-to-End Egocentric Multi-Object Rearrangement for Mobile Robots

🔑 Keywords: EgoPush, perception-driven policy learning, object-centric latent spaces, reinforcement-learning, zero-shot sim-to-real transfer

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To enable robot manipulation in cluttered environments using egocentric perception and policy learning without relying on explicit global state estimation.

🛠️ Research Methods:

– Development of the EgoPush framework which designs object-centric latent spaces and uses stage-decomposed rewards.

– Utilization of a privileged reinforcement-learning teacher to learn latent states and actions, distilled into a visual student policy.

💬 Research Conclusions:

– EgoPush significantly outperforms existing RL baselines in simulated environments and demonstrates effective zero-shot sim-to-real transfer in real-world applications.

👉 Paper link: https://huggingface.co/papers/2602.18071

18. Decoding as Optimisation on the Probability Simplex: From Top-K to Top-P (Nucleus) to Best-of-K Samplers

🔑 Keywords: Decoding, Optimization Layer, Best-of-K, Mathematical Reasoning

💡 Category: Natural Language Processing

🌟 Research Objective:

– Reinterpret decoding as an optimization layer balancing model scores with structural preferences to improve mathematical reasoning accuracy.

🛠️ Research Methods:

– An optimization framework covering known methods like greedy decoding, Softmax sampling, Top-K, and introducing the new Best-of-K decoder to enhance accuracy.

💬 Research Conclusions:

– The Best-of-K decoder improves performance significantly in multi-sample scenarios, evidenced by a +18.6% increase in accuracy for Qwen2.5-Math-7B on MATH500 at high sampling temperatures.

👉 Paper link: https://huggingface.co/papers/2602.18292

19. Does Your Reasoning Model Implicitly Know When to Stop Thinking?

🔑 Keywords: Large Reasoning Models, Long Chains of Thought, SAGE-RL, Efficient Reasoning, Sampling Paradigms

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– To enhance the reasoning accuracy and efficiency of Large Reasoning Models by reducing redundancy and leveraging implicit stopping points through a novel sampling paradigm, SAGE.

🛠️ Research Methods:

– Utilizing the SAGE paradigm to identify efficient reasoning patterns and integrating it into group-based reinforcement learning (SAGE-RL) for improved inference processes.

💬 Research Conclusions:

– The integration of SAGE into reinforcement learning significantly improves the performance of Large Reasoning Models on complex tasks, ensuring higher accuracy and efficiency across challenging mathematical benchmarks.

👉 Paper link: https://huggingface.co/papers/2602.08354