AI Native Daily Paper Digest – 20260224

1. A Very Big Video Reasoning Suite

🔑 Keywords: Video Reasoning, Large-scale Dataset, Spatiotemporal Reasoning, VBVR, Emergent Generalization

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The main objective is to study video intelligence capabilities beyond just visual quality by enabling systematic analysis of spatiotemporal reasoning and generalization across diverse tasks.

🛠️ Research Methods:

– Introduction of the Very Big Video Reasoning (VBVR) Dataset, a large-scale dataset spanning 200 curated reasoning tasks and over one million video clips.

– Development of VBVR-Bench, an evaluation framework that incorporates rule-based, human-aligned scorers for reproducible and interpretable diagnostics.

💬 Research Conclusions:

– The study observes early signs of emergent generalization to unseen reasoning tasks, indicating a promising foundation for the next stage of research in generalizable video reasoning.

👉 Paper link: https://huggingface.co/papers/2602.20159

2. SkillOrchestra: Learning to Route Agents via Skill Transfer

🔑 Keywords: SkillOrchestra, Compound AI systems, orchestration, reinforcement learning, performance-cost trade-off

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Introduce SkillOrchestra, a skill-aware orchestration framework to enhance Compound AI system performance by focusing on fine-grained skill modeling and efficient agent selection.

🛠️ Research Methods:

– SkillOrchestra learns fine-grained skills from execution experience and models agent-specific competence and cost. It uses this information to infer the skill demands of interactions and selects agents to satisfy these demands while maintaining a performance-cost trade-off.

💬 Research Conclusions:

– SkillOrchestra outperforms state-of-the-art RL-based orchestrators, achieving up to 22.5% better performance with significantly reduced learning costs (700x and 300x compared to Router-R1 and ToolOrchestra, respectively). This demonstrates that explicit skill modeling facilitates scalable, interpretable, and sample-efficient orchestration, providing a viable alternative to data-intensive methods.

👉 Paper link: https://huggingface.co/papers/2602.19672

3. Mobile-O: Unified Multimodal Understanding and Generation on Mobile Device

🔑 Keywords: Mobile-O, vision-language-diffusion model, edge devices, unified multimodal intelligence, visual understanding

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The objective is to develop a compact vision-language-diffusion model, Mobile-O, capable of efficient multimodal understanding and generation on mobile devices.

🛠️ Research Methods:

– Mobile-O utilizes the Mobile Conditioning Projector (MCP) to integrate vision-language features with diffusion generators through depthwise-separable convolutions and layerwise alignment, alongside training on a few million samples with a novel quadruplet format.

💬 Research Conclusions:

– Mobile-O achieves competitive or superior performance compared to other unified models, proving efficient for real-time unified multimodal understanding and generation on edge devices. It provides a practical framework that operates on-device without cloud dependency, strengthening research in real-time unified multimodal intelligence.

👉 Paper link: https://huggingface.co/papers/2602.20161

4. Agents of Chaos

🔑 Keywords: Autonomous language-model-powered agents, Security vulnerabilities, Unauthorized actions, Information disclosure, System takeovers

💡 Category: AI Ethics and Fairness

🌟 Research Objective:

– The study aims to explore the security and governance vulnerabilities of autonomous language-model-powered agents in a live laboratory environment.

🛠️ Research Methods:

– A two-week exploratory red-teaming study involving twenty AI researchers interacting with the agents under benign and adversarial conditions, documenting failures related to autonomy and communication.

💬 Research Conclusions:

– The study identifies significant security- and governance-relevant vulnerabilities, such as unauthorized actions, sensitive information disclosure, and potential system takeovers. These findings emphasize the need for urgent attention from legal scholars, policymakers, and researchers regarding accountability and responsibility.

👉 Paper link: https://huggingface.co/papers/2602.20021

5. RoboCurate: Harnessing Diversity with Action-Verified Neural Trajectory for Robot Learning

🔑 Keywords: RoboCurate, synthetic robot learning data, simulator replay consistency, observation diversity, image editing

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To enhance synthetic robot learning data by evaluating action quality through simulator replay and augmenting observation diversity with advanced techniques.

🛠️ Research Methods:

– Introduction of RoboCurate framework to evaluate and filter action quality using simulation replay; use of image-to-image editing and video-to-video transfer for augmenting data diversity.

💬 Research Conclusions:

– RoboCurate’s synthetic data significantly improves performance, with notable success rate increases in various robotic scenarios such as GR-1 Tabletop, DexMimicGen, and ALLEX humanoid dexterous manipulation.

👉 Paper link: https://huggingface.co/papers/2602.18742

6. SimToolReal: An Object-Centric Policy for Zero-Shot Dexterous Tool Manipulation

🔑 Keywords: Sim-to-Real Reinforcement Learning, Tool Manipulation, Dexterous Tool Manipulation, Zero-Shot Performance

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The study aims to enable generalizable robot manipulation of diverse tools through procedural simulation and universal reinforcement learning policies without the need for task-specific training.

🛠️ Research Methods:

– The researchers propose SimToolReal, which uses a procedural generation of tool-like object primitives in simulation. A single RL policy is trained with the universal goal of manipulating each object to random goal poses, allowing for general dexterous tool manipulation at test-time.

💬 Research Conclusions:

– The SimToolReal approach outperformed previous methods by 37% and matched the performance of specialist RL policies. It exhibited strong zero-shot performance across a diverse set of everyday tools, achieving impressive results over 120 real-world rollouts.

👉 Paper link: https://huggingface.co/papers/2602.16863

7. Anatomy of Agentic Memory: Taxonomy and Empirical Analysis of Evaluation and System Limitations

🔑 Keywords: Agentic Memory Systems, Long-Horizon Reasoning, Personalization, Empirical Challenges

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To present a structured analysis of agentic memory systems for LLM agents focusing on their architectural and system perspectives.

🛠️ Research Methods:

– Introduction of a concise taxonomy of MAG systems based on four memory structures, along with an analysis of key limitations such as benchmark saturation, metric validity, backbone model dependency, and costs associated with maintaining memory.

💬 Research Conclusions:

– The survey explains why current agentic memory systems often fail to meet their theoretical potential and provides directions for more reliable evaluations and scalable system designs.

👉 Paper link: https://huggingface.co/papers/2602.19320

8. Nacrith: Neural Lossless Compression via Ensemble Context Modeling and High-Precision CDF Coding

🔑 Keywords: Lossless Compression, Transformer Language Model, CDF Precision, Token-level N-gram Model, Hybrid Binary Format

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The research aims to develop Nacrith, a lossless compression system that enhances compression efficiency by integrating a transformer language model with various advanced techniques.

🛠️ Research Methods:

– Nacrith utilizes a 135M-parameter transformer language model combined with lightweight online predictors and a 32-bit arithmetic coder.

– Innovations include enhanced CDF precision, token-level n-gram modeling, adaptive bias correction, and introduction of a hybrid binary format.

💬 Research Conclusions:

– Nacrith achieves superior compression metrics compared to existing methods, outperforming gzip, bzip2, CMIX v21, and ts_zip on standard datasets, demonstrating its effectiveness and efficiency.

👉 Paper link: https://huggingface.co/papers/2602.19626

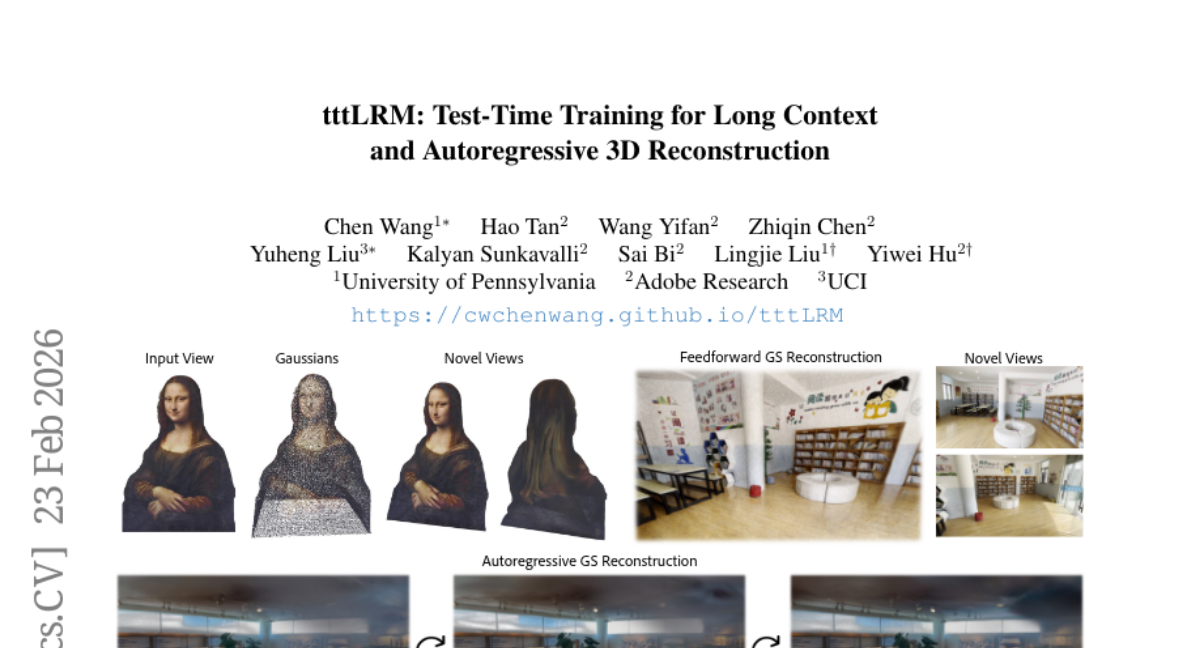

9. tttLRM: Test-Time Training for Long Context and Autoregressive 3D Reconstruction

🔑 Keywords: tttLRM, Test-Time Training, autoregressive 3D reconstruction, Gaussian Splats, latent space

💡 Category: Computer Vision

🌟 Research Objective:

– The primary objective is to develop tttLRM, a novel large 3D reconstruction model that facilitates long-context, autoregressive 3D reconstruction with linear computational complexity.

🛠️ Research Methods:

– tttLRM uses a Test-Time Training layer to compress multiple image observations into fast weights, forming an implicit 3D representation in the latent space that can be decoded into formats like Gaussian Splats.

– The model includes an online learning variant to support progressive 3D reconstruction from streaming observations and utilizes pretraining on novel view synthesis tasks for improved explicit 3D modeling.

💬 Research Conclusions:

– The proposed tttLRM model achieves superior performance in feedforward 3D Gaussian reconstruction compared to state-of-the-art methods, demonstrating enhanced reconstruction quality and faster convergence.

👉 Paper link: https://huggingface.co/papers/2602.20160

10. Decoding ML Decision: An Agentic Reasoning Framework for Large-Scale Ranking System

🔑 Keywords: ranking optimization, specialized agent skills, validation hooks, AI-generated summary, statistical robustness

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The main objective of GEARS is to transform ranking optimization into an autonomous discovery process, focusing on balancing algorithmic signals with ranking context and ensuring production reliability.

🛠️ Research Methods:

– GEARS utilizes specialized agent skills to encapsulate ranking expert knowledge and includes validation hooks to enforce statistical robustness and prevent overfitting to short-term signals.

💬 Research Conclusions:

– Experimental validation across various product surfaces shows that GEARS consistently identifies superior, near-Pareto-efficient policies by combining algorithmic signals with deep ranking context while maintaining deployment stability.

👉 Paper link: https://huggingface.co/papers/2602.18640

11. AAVGen: Precision Engineering of Adeno-associated Viral Capsids for Renal Selective Targeting

🔑 Keywords: AAVGen, generative AI, protein language model, reinforcement learning, multi-objective optimization

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to design AAV capsids with enhanced traits to address limitations in gene therapy, particularly for overcoming kidney anatomical barriers and optimizing multiple functional properties.

🛠️ Research Methods:

– The AAVGen framework integrates a protein language model with supervised fine-tuning and reinforcement learning techniques, specifically Group Sequence Policy Optimization, guided by a composite reward signal from ESM-2-based regression predictors.

💬 Research Conclusions:

– AAVGen successfully produces a diverse library of novel VP1 protein sequences with superior performance in production fitness, kidney tropism, and thermostability, confirmed by in silico validation and structural analysis via AlphaFold3. This framework accelerates the development of next-generation AAV vectors with tailored functional characteristics.

👉 Paper link: https://huggingface.co/papers/2602.18915

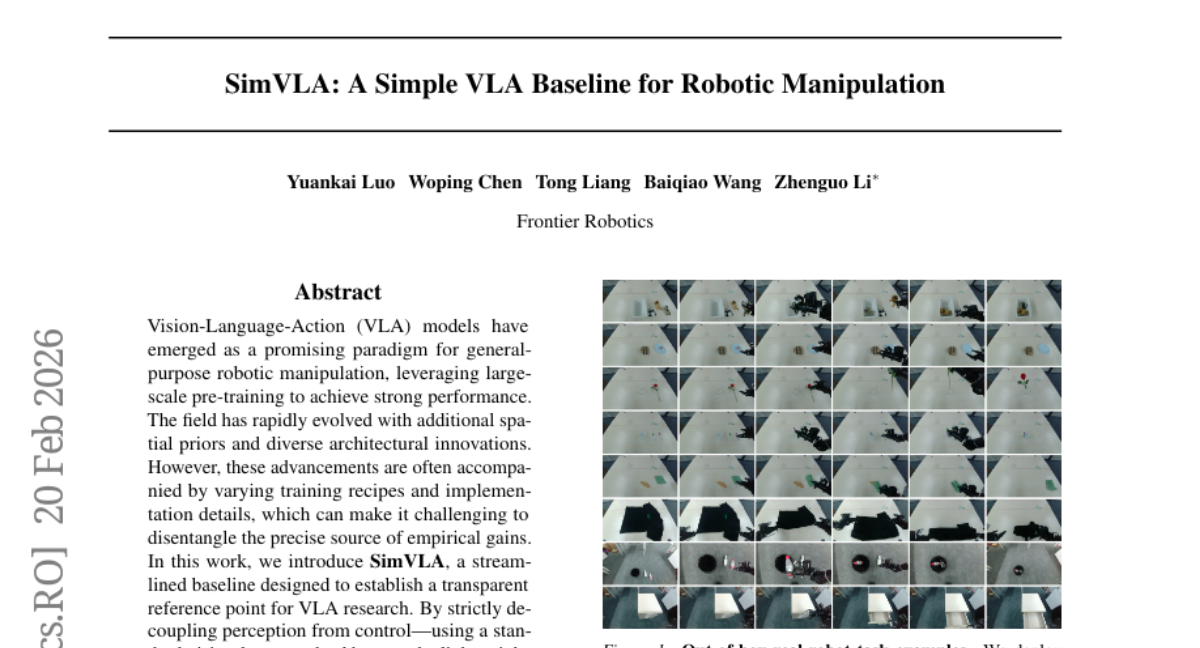

12. SimVLA: A Simple VLA Baseline for Robotic Manipulation

🔑 Keywords: Vision-Language-Action models, state-of-the-art performance, perception, control, training dynamics

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– The study introduces SimVLA as a simplified baseline for Vision-Language-Action models to achieve high performance with fewer parameters and allow for clearer evaluation of architectural improvements.

🛠️ Research Methods:

– Utilizes a decoupled approach by separating perception from control using a standard vision-language backbone and a lightweight action head, along with standardizing training dynamics.

💬 Research Conclusions:

– SimVLA achieves state-of-the-art performance despite having only 0.5B parameters and outperforms larger models on standard simulation benchmarks without robot pretraining. It demonstrates capability comparable to larger models in real-robot performance.

👉 Paper link: https://huggingface.co/papers/2602.18224

13. K-Search: LLM Kernel Generation via Co-Evolving Intrinsic World Model

🔑 Keywords: GPU kernels, AI-generated summary, Large Language Models, World Model, non-monotonic optimization

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The research aims to optimize GPU kernels by separating high-level planning from low-level implementation, using a co-evolving world model to enhance performance significantly over existing evolutionary methods.

🛠️ Research Methods:

– The methodology involves replacing static search heuristics with a co-evolving world model, leveraging Large Language Models’ domain knowledge to guide the optimization search. This approach allows for navigation of non-monotonic optimization paths.

💬 Research Conclusions:

– K-Search demonstrates significant performance improvements over state-of-the-art evolutionary search methods, achieving up to a 14.3x gain on complex kernels and surpassing previous human-designed solutions.

👉 Paper link: https://huggingface.co/papers/2602.19128

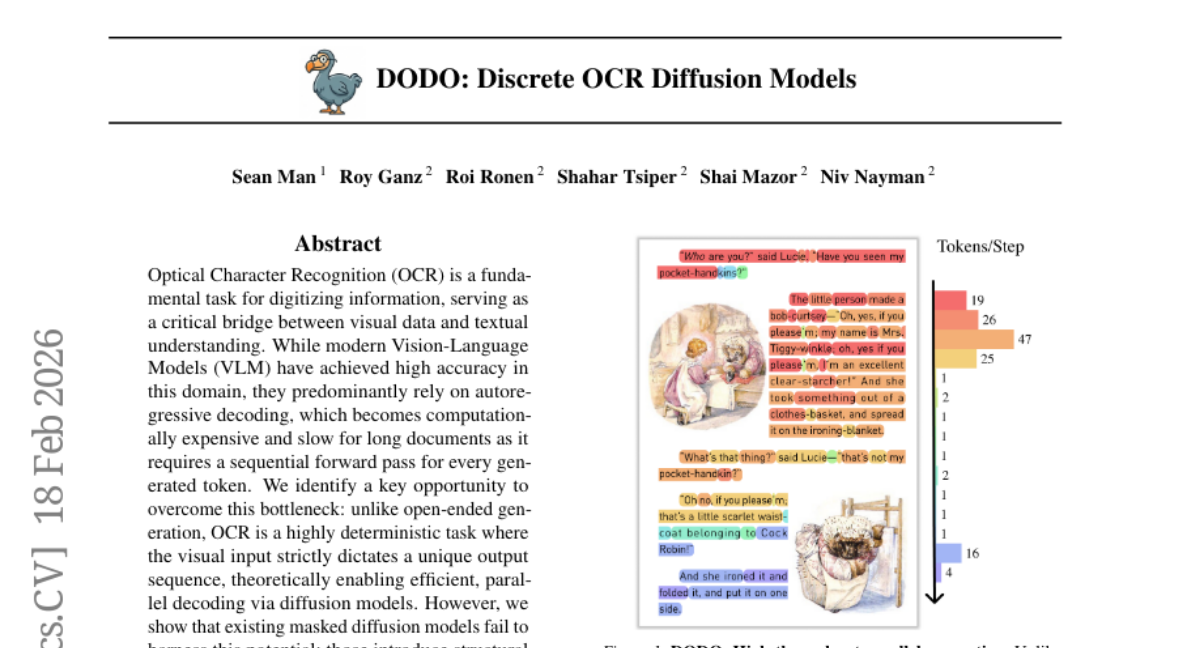

14. DODO: Discrete OCR Diffusion Models

🔑 Keywords: Optical Character Recognition, Vision-Language Models, autoregressive decoding, diffusion models, block discrete diffusion

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to enhance Optical Character Recognition (OCR) by adapting diffusion models to facilitate faster and parallel processing while maintaining high accuracy.

🛠️ Research Methods:

– The research introduces “DODO”, utilizing block discrete diffusion to decompose generation into blocks, overcoming synchronization errors in global diffusion.

💬 Research Conclusions:

– The proposed method achieves near state-of-the-art accuracy and can be up to 3x faster in inference than autoregressive baselines.

👉 Paper link: https://huggingface.co/papers/2602.16872

15. DSDR: Dual-Scale Diversity Regularization for Exploration in LLM Reasoning

🔑 Keywords: Reinforcement Learning, Diversity Regularization, Large Language Models, Reasoning

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The paper presents DSDR, a reinforcement learning framework aimed at enhancing the reasoning capabilities of large language models by promoting diversity at both the global and local levels.

🛠️ Research Methods:

– The approach involves a dual-scale regularization technique that decomposes diversity in reasoning into global and local components, ensuring both depth of exploration and correctness.

💬 Research Conclusions:

– The DSDR framework consistently improves accuracy and pass@k benchmarks, emphasizing the significance of dual-scale diversity for deep exploration in Reinforcement Learning with Verifiers (RLVR).

👉 Paper link: https://huggingface.co/papers/2602.19895

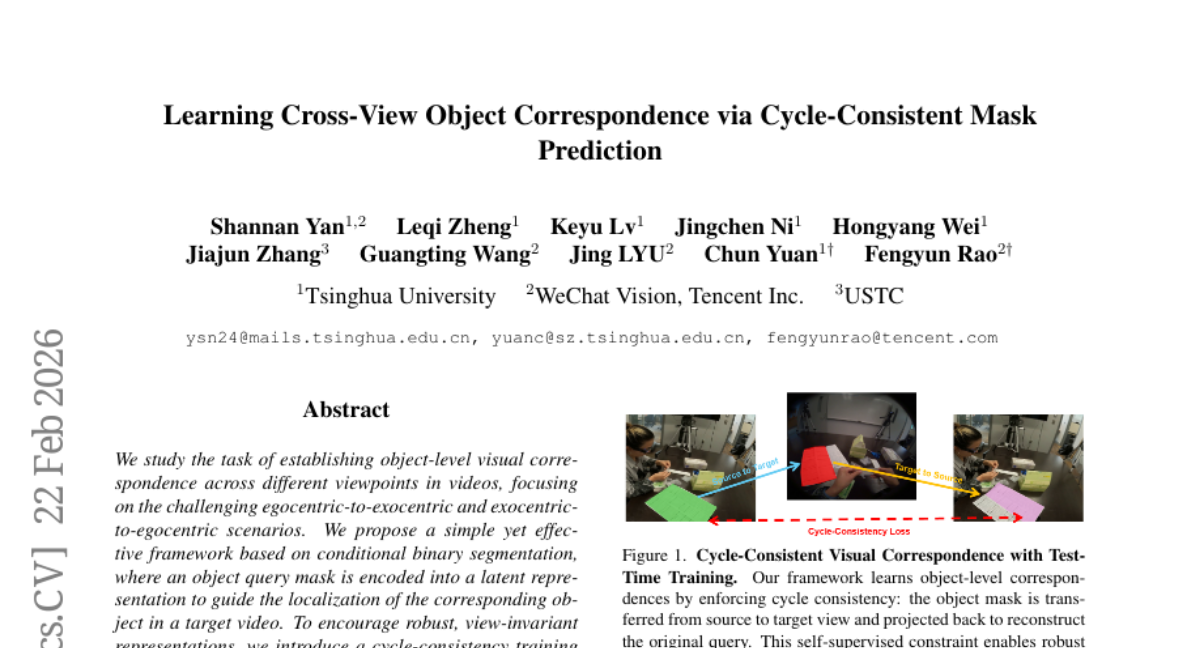

16. Learning Cross-View Object Correspondence via Cycle-Consistent Mask Prediction

🔑 Keywords: Conditional Binary Segmentation, Cycle-Consistency Training, View-Invariant Representations, Test-Time Training

💡 Category: Computer Vision

🌟 Research Objective:

– The study aims to establish object-level visual correspondence across different viewpoints, specifically targeting egocentric-to-exocentric scenarios using a conditional binary segmentation framework.

🛠️ Research Methods:

– The researchers employed a framework where object query masks are encoded into latent representations to guide object localization in target videos. They introduced cycle-consistency training to enhance view-invariant representations, which includes projecting predicted masks back to the source view for original query mask reconstruction.

💬 Research Conclusions:

– The proposed approach, validated on the Ego-Exo4D and HANDAL-X benchmarks, demonstrates state-of-the-art performance, showing the strength of their optimization objective and test-time training strategy.

👉 Paper link: https://huggingface.co/papers/2602.18996

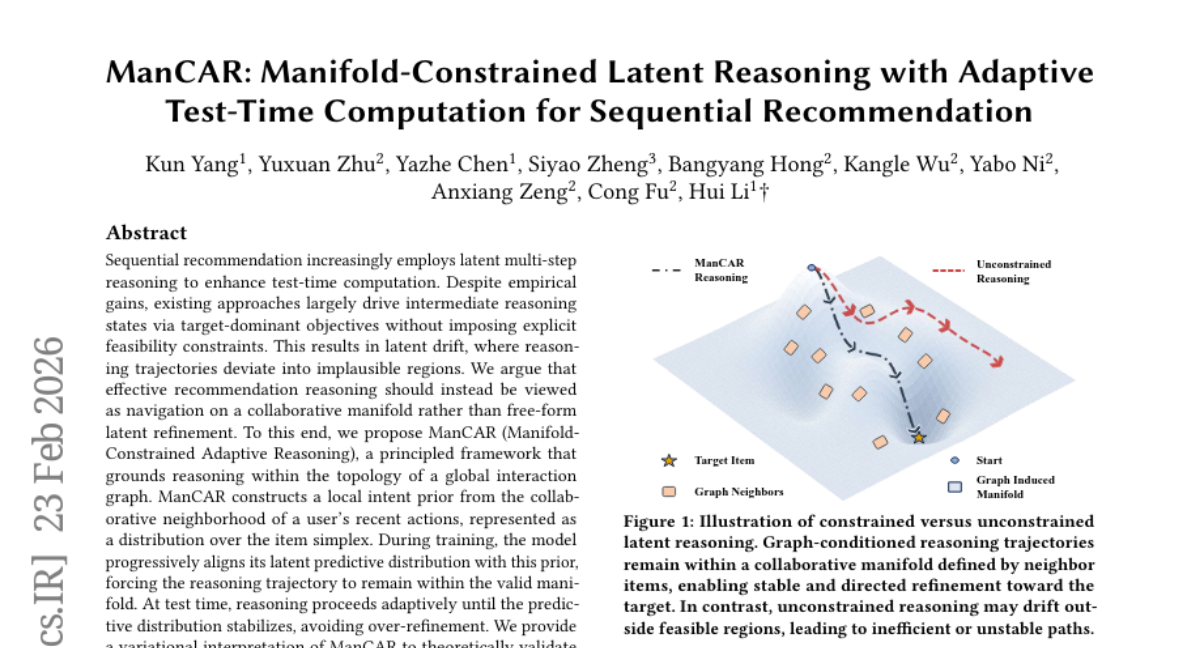

17. ManCAR: Manifold-Constrained Latent Reasoning with Adaptive Test-Time Computation for Sequential Recommendation

🔑 Keywords: ManCAR, collaborative manifold, Adaptive Reasoning, latent drift, global interaction graph

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The objective is to develop a recommendation framework that improves accuracy by constraining reasoning within a collaborative manifold, aiming to prevent implausible reasoning trajectories.

🛠️ Research Methods:

– ManCAR employs a principled framework that incorporates reasoning within the topology of a global interaction graph, using a local intent prior from user’s recent actions as a distribution over the item simplex for alignment during training.

💬 Research Conclusions:

– ManCAR demonstrates significant improvement in recommendation accuracy, outperforming state-of-the-art baselines by up to 46.88% in NDCG@10 on seven benchmarks, confirming its efficacy in drift prevention and adaptive test-time reasoning.

👉 Paper link: https://huggingface.co/papers/2602.20093

18. TOPReward: Token Probabilities as Hidden Zero-Shot Rewards for Robotics

🔑 Keywords: TOPReward, Vision-Language Models, Reinforcement Learning, zero-shot evaluations, robotic task progress

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To introduce TOPReward, a novel probabilistically grounded temporal value function that estimates robotic task progress using pretrained video Vision-Language Models.

🛠️ Research Methods:

– Leveraging internal token logits of Vision-Language Models for task progress estimation as opposed to direct progress value outputs, conducting zero-shot evaluations across diverse tasks and robot platforms.

💬 Research Conclusions:

– TOPReward achieves superior zero-shot performance with a 0.947 mean Value-Order Correlation, outperforming state-of-the-art baselines and demonstrating versatility in downstream applications like success detection and reward-aligned behavior cloning.

👉 Paper link: https://huggingface.co/papers/2602.19313

19. VLANeXt: Recipes for Building Strong VLA Models

🔑 Keywords: Vision-Language-Action models, VLANeXt, policy learning, VLA design space, perception essentials

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To analyze and optimize Vision-Language-Action models (VLAs) through a unified framework to achieve superior performance and generalization.

🛠️ Research Methods:

– Systematic analysis of design choices along foundational components, perception essentials, and action modelling perspectives.

💬 Research Conclusions:

– Developed a model named VLANeXt, which surpasses previous state-of-the-art methods on major benchmarks and shows strong real-world application potential. The research aims to provide a unified codebase for further exploration and development by the community.

👉 Paper link: https://huggingface.co/papers/2602.18532