AI Native Daily Paper Digest – 20260317

1. AI Can Learn Scientific Taste

🔑 Keywords: Scientific Taste, Reinforcement Learning, Community Feedback, AI Scientists

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To enhance an AI scientist’s capacity to judge and propose impactful research ideas by developing its scientific taste.

🛠️ Research Methods:

– Introduced Reinforcement Learning from Community Feedback (RLCF) using a scientific taste learning framework through preference modeling and alignment.

– Developed Scientific Judge to evaluate ideas using 700K pairs of high- vs. low-citation papers.

– Trained Scientific Thinker to propose impactful research ideas, leveraging Scientific Judge as a reward model.

💬 Research Conclusions:

– Scientific Judge outperformed state-of-the-art language models in idea judgment tasks and generalized across various contexts.

– Scientific Thinker generated research ideas with greater potential impact than existing baselines, signifying a step towards human-level AI scientists.

👉 Paper link: https://huggingface.co/papers/2603.14473

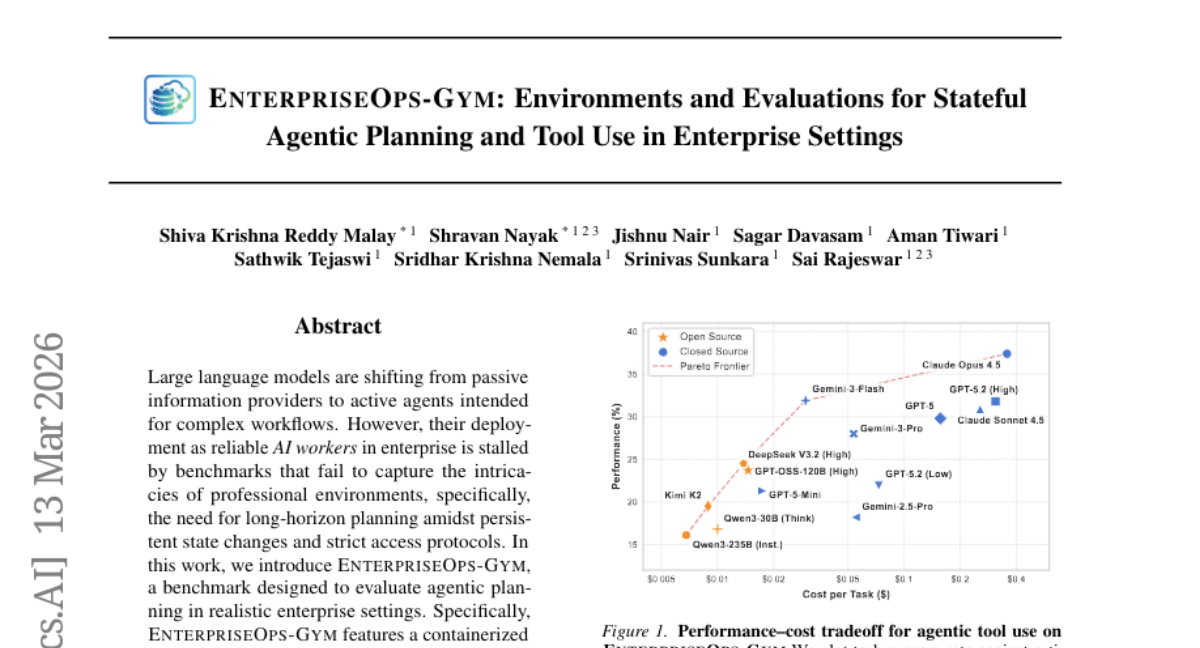

2. EnterpriseOps-Gym: Environments and Evaluations for Stateful Agentic Planning and Tool Use in Enterprise Settings

🔑 Keywords: EnterpriseOps-Gym, agentic planning, strategic reasoning, AI workers, professional workflows

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The study introduces EnterpriseOps-Gym, a benchmark designed to evaluate the effectiveness of agentic planning in realistic enterprise settings, addressing the current benchmarks’ inadequacies.

🛠️ Research Methods:

– A containerized sandbox with 164 database tables and 512 functional tools is utilized to mimic real-world search friction, where agents are evaluated on 1,150 expert-curated tasks across eight critical enterprise verticals.

💬 Research Conclusions:

– Current state-of-the-art models exhibit significant limitations, with the top-performing model achieving only a 37.4% success rate. The introduction of oracle human plans significantly increases performance, emphasizing strategic reasoning as a key bottleneck. Moreover, agents often fail to refuse infeasible tasks, indicating the current inadequacy of AI workers for autonomous deployment in enterprises.

👉 Paper link: https://huggingface.co/papers/2603.13594

3. Grounding World Simulation Models in a Real-World Metropolis

🔑 Keywords: Seoul World Model, autoregressive video generation, synthetic dataset, Virtual Lookahead Sink

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to develop a city-scale world model, Seoul World Model (SWM), that is grounded in the real city of Seoul and capable of generating spatially and temporally coherent videos of urban environments.

🛠️ Research Methods:

– SWM utilizes retrieval-augmented conditioning with nearby street-view images, cross-temporal pairing for enhancing trajectory diversity, and a view interpolation pipeline for synthesizing coherent training videos.

💬 Research Conclusions:

– SWM outperforms existing video world models by generating more accurate and consistent long-horizon videos grounded in actual urban environments, with diverse camera movements and versatility in scenarios.

👉 Paper link: https://huggingface.co/papers/2603.15583

4. Anatomy of a Lie: A Multi-Stage Diagnostic Framework for Tracing Hallucinations in Vision-Language Models

🔑 Keywords: Vision-Language Models, Hallucination, Computational Rationality, Cognitive State Space, Geometric-Information Duality

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to diagnose hallucinations in Vision-Language Models by redefining them as dynamic pathologies of cognitive processing.

🛠️ Research Methods:

– The researchers designed information-theoretic probes that map cognitive trajectories of VLMs onto a low-dimensional Cognitive State Space to identify geometric abnormalities.

💬 Research Conclusions:

– The framework developed can detect hallucinations as geometric anomalies and shows state-of-the-art performance across various settings, with robust efficiency under weak supervision.

– It facilitates causal attribution of VLMs’ failures to specific pathological states, enhancing the transparency and auditability of AI systems.

👉 Paper link: https://huggingface.co/papers/2603.15557

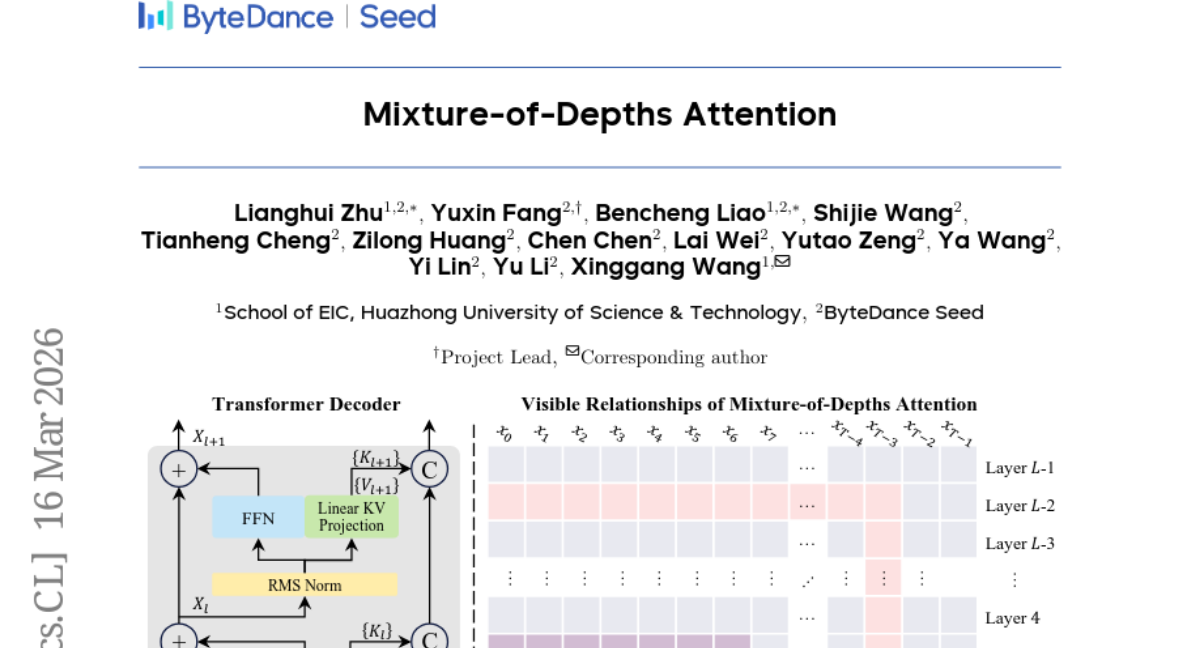

5. Mixture-of-Depths Attention

🔑 Keywords: Scaling depth, MoDA, residual updates, performance improvement, post-norm

💡 Category: Natural Language Processing

🌟 Research Objective:

– To address signal degradation in large language models as they scale in depth through the introduction of mixture-of-depths attention (MoDA).

🛠️ Research Methods:

– Integration of MoDA allowing attention to both current and preceding layer depths.

– Development of a hardware-efficient algorithm for MoDA with efficient memory access patterns.

💬 Research Conclusions:

– MoDA enhances performance compared to strong baselines, improving average perplexity and downstream task performance with minimal computational overhead.

– Post-norm combination with MoDA improves performance over pre-norm.

👉 Paper link: https://huggingface.co/papers/2603.15619

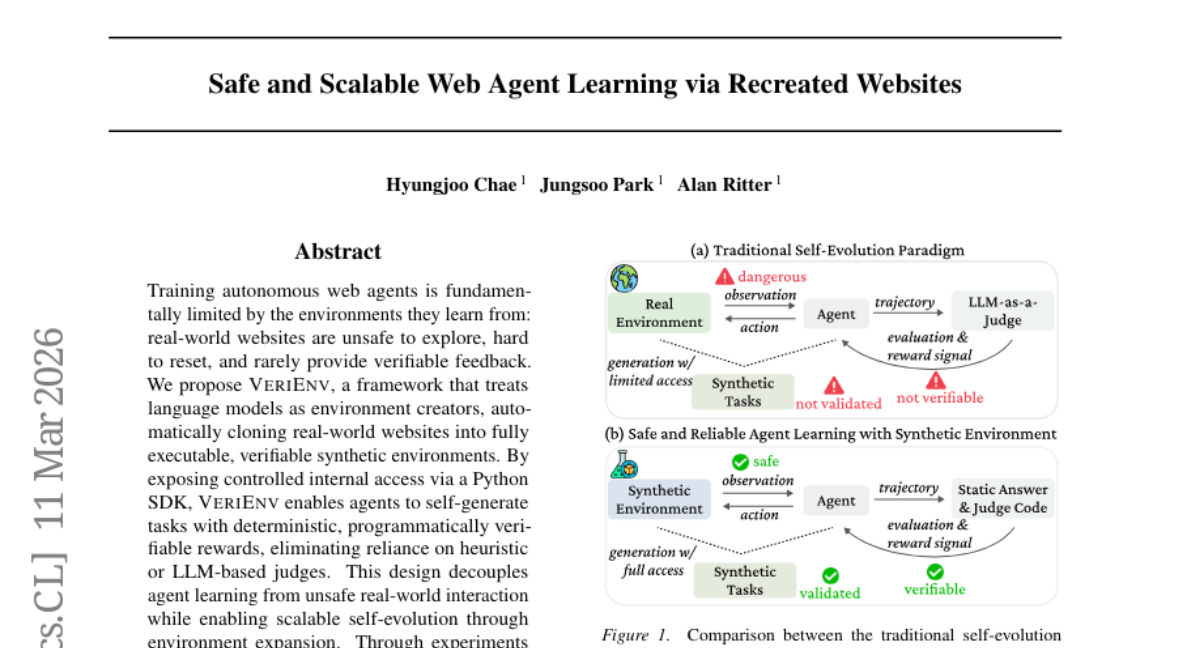

6. Safe and Scalable Web Agent Learning via Recreated Websites

🔑 Keywords: VeriEnv, Language Models, Synthetic Environments, Web Agents, Self-Evolving Training

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To develop VeriEnv, a framework for training autonomous web agents safely and scalably by cloning real-world websites into verifiable synthetic environments.

🛠️ Research Methods:

– Utilization of language models to create synthetic, executable environments that can be accessed and controlled programmatically, allowing agents to self-generate tasks with verifiable rewards.

💬 Research Conclusions:

– VeriEnv enables agents to generalize learning to unseen websites, achieve mastery through self-evolving training, and benefit from scalable training environments, all while avoiding unsafe real-world interactions.

👉 Paper link: https://huggingface.co/papers/2603.10505

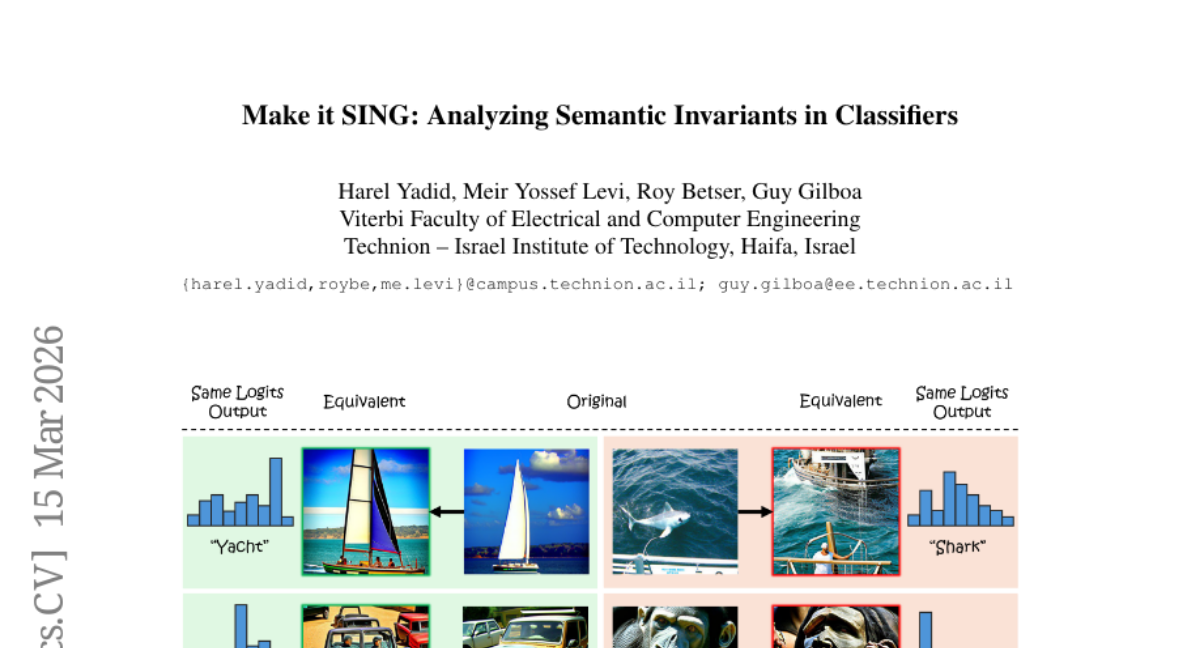

7. Make it SING: Analyzing Semantic Invariants in Classifiers

🔑 Keywords: Null-space Geometry, Semantic Interpretations, Multi-modal Vision Language Models

💡 Category: Computer Vision

🌟 Research Objective:

– The research presents Semantic Interpretation of the Null-space Geometry (SING) to address the lack of human-interpretable semantic content in classifier invariants.

🛠️ Research Methods:

– Utilizes a mapping from network features to multi-modal vision language models to provide natural language descriptions and visual examples of semantic shifts.

💬 Research Conclusions:

– Reveals that different models, such as ResNet50 and DinoViT, handle semantic attributes in null spaces differently; ResNet50 leaks these attributes while DinoViT maintains class semantics more effectively.

👉 Paper link: https://huggingface.co/papers/2603.14610

8. WebVR: Benchmarking Multimodal LLMs for WebPage Recreation from Videos via Human-Aligned Visual Rubrics

🔑 Keywords: video-conditioned webpage generation, WebVR, Generative Models

💡 Category: Generative Models

🌟 Research Objective:

– The paper introduces WebVR, a benchmark designed to evaluate the ability of MLLMs to recreate webpages from demonstration videos, filling the gap in video-conditioned webpage generation research.

🛠️ Research Methods:

– WebVR comprises 175 webpages created through a controlled synthesis pipeline, ensuring realistic demonstrations. It includes a visual rubric for evaluating generated webpages across various dimensions.

💬 Research Conclusions:

– Experiments on 19 models show significant gaps in fine-grained style and motion quality recreation, with a rubric-based evaluation that aligns 96% with human preferences. The dataset, evaluation toolkit, and baseline results are released for further research in the field.

👉 Paper link: https://huggingface.co/papers/2603.13391

9. Understanding Reasoning in LLMs through Strategic Information Allocation under Uncertainty

🔑 Keywords: LLMs, information-theoretic framework, reasoning performance, uncertainty externalization, Aha moments

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The study aims to understand the underlying mechanisms causing Aha moments in LLMs’ reasoning and how externalization of uncertainty can improve reasoning performance.

🛠️ Research Methods:

– The authors introduce an information-theoretic framework that separates procedural information from epistemic verbalization, which supports necessary control actions based on externalized uncertainty.

💬 Research Conclusions:

– The research concludes that effective reasoning is not driven by specific tokens but by the externalization of uncertainty, which is key to enhancing information acquisition and achieving information sufficiency.

👉 Paper link: https://huggingface.co/papers/2603.15500

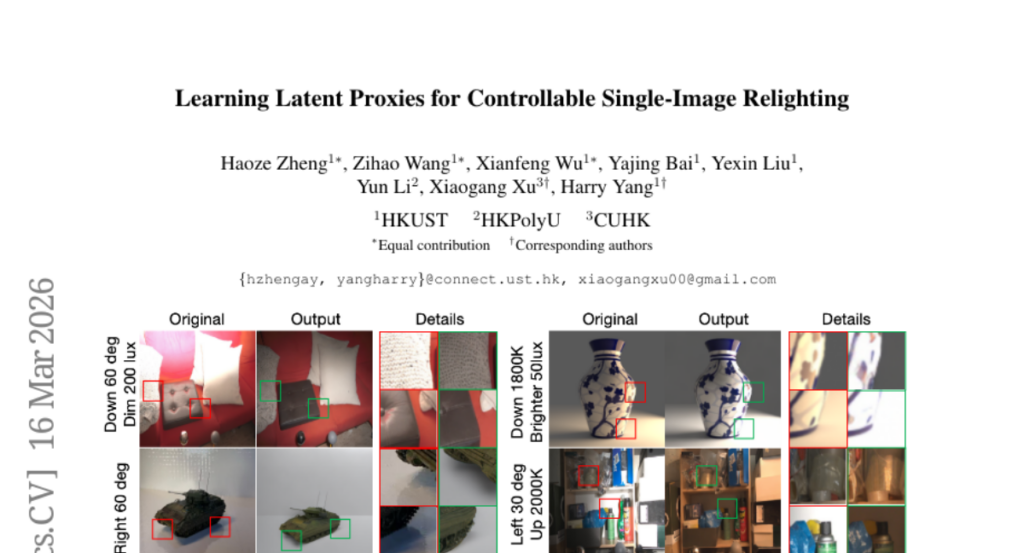

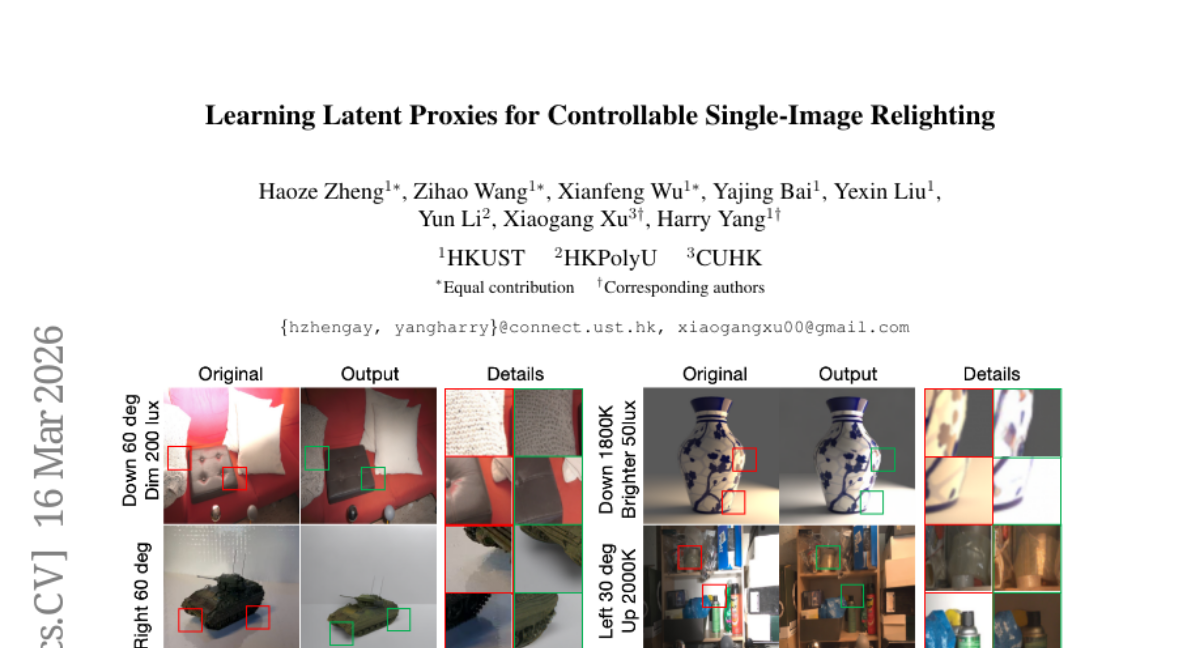

10. Learning Latent Proxies for Controllable Single-Image Relighting

🔑 Keywords: Single-image relighting, diffusion model, lighting-aware mask, PBR supervision, ScaLight dataset

💡 Category: Computer Vision

🌟 Research Objective:

– To develop a method for single-image relighting that is both photometrically faithful and allows for accurate, continuous control of illumination changes without the need for full intrinsic decomposition.

🛠️ Research Methods:

– Introduced LightCtrl, a model integrating physical priors through a few-shot latent proxy encoder and a lighting-aware mask to guide illumination adjustments.

– Developed the ScaLight dataset to enable physically consistent training with large-scale object-level illumination variation.

💬 Research Conclusions:

– LightCtrl surpasses previous diffusion and intrinsic-based methods in achieving photometric fidelity and controlled relighting, demonstrating up to +2.4 dB PSNR improvement and 35% lower RMSE under controlled lighting shifts.

👉 Paper link: https://huggingface.co/papers/2603.15555

11. Code-A1: Adversarial Evolving of Code LLM and Test LLM via Reinforcement Learning

🔑 Keywords: Reinforcement learning, Code Generation, Adversarial Co-evolution, Test LLM, Code LLM

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To address the limitations in code generation by introducing an adversarial co-evolution framework called Code-A1.

🛠️ Research Methods:

– Code-A1 utilizes a framework where a Code LLM and a Test LLM are jointly optimized with opposing objectives to improve code and test generation.

💬 Research Conclusions:

– Code-A1 achieves an effective balance by eliminating self-collusion and improves both code generation and test generation capabilities, surpassing models trained on human-annotated tests.

👉 Paper link: https://huggingface.co/papers/2603.15611

12. The PokeAgent Challenge: Competitive and Long-Context Learning at Scale

🔑 Keywords: AI Native, Partial Observability, Long-Horizon Planning, Game-Theoretic Reasoning, Multi-Agent System

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Establish a large-scale benchmark for decision-making research using Pokemon’s multi-agent battle system and RPG environment, focusing on strategic reasoning, partial observability, and long-horizon planning.

🛠️ Research Methods:

– Develop two tracks: the Battling Track for strategic reasoning in competitive battles and the Speedrunning Track for long-horizon planning in RPGs, providing datasets and evaluation frameworks.

💬 Research Conclusions:

– The PokeAgent Challenge reveals significant gaps between AI models and human performance, showing its effectiveness as a benchmark to advance Reinforcement Learning and Language Models, and supports active research with its live leaderboard.

👉 Paper link: https://huggingface.co/papers/2603.15563

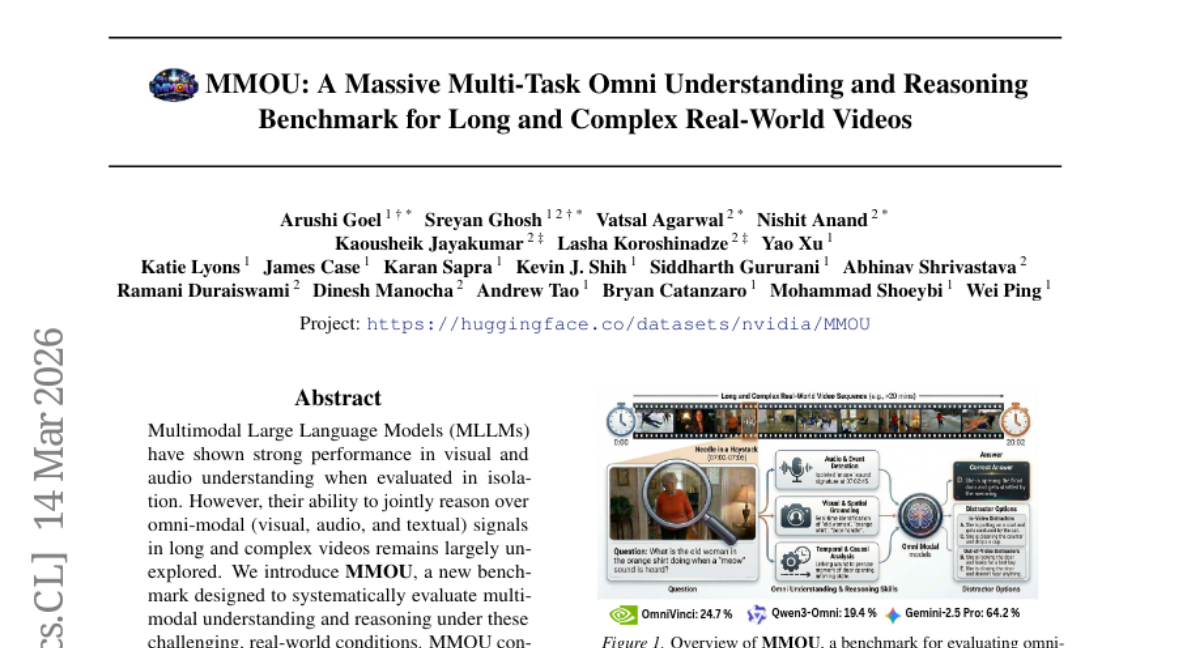

13. MMOU: A Massive Multi-Task Omni Understanding and Reasoning Benchmark for Long and Complex Real-World Videos

🔑 Keywords: Multimodal Large Language Models, MMOU, Omni-Modal Understanding, Visual, Audio

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introducing MMOU, a new benchmark to evaluate multimodal understanding and reasoning under real-world conditions in long and complex videos.

🛠️ Research Methods:

– MMOU consists of 15,000 curated questions paired with 9038 videos, covering 13 fundamental skill categories that require integrating multi-modal evidence over time.

💬 Research Conclusions:

– The evaluation of over 20 multimodal models on MMOU reveals a significant performance gap, with the best closed-source model achieving 64.2% accuracy and the strongest open-source model reaching only 46.8%, highlighting challenges in long-form omni-modal understanding.

👉 Paper link: https://huggingface.co/papers/2603.14145

14. VisionCoach: Reinforcing Grounded Video Reasoning via Visual-Perception Prompting

🔑 Keywords: Video reasoning, Spatio-temporal grounding, Reinforcement Learning, Visual prompting, Self-distillation

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The primary objective is to enhance spatio-temporal grounding in video reasoning using an input-adaptive reinforcement learning framework with visual prompting.

🛠️ Research Methods:

– An input-adaptive RL framework, VisonCoach, composed of a Visual Prompt Selector and Spatio-Temporal Reasoner, is utilized. The framework employs visual prompts during training to amplify question-relevant evidence and self-distillation to retain improvements.

💬 Research Conclusions:

– VisonCoach achieves state-of-the-art performance in video reasoning benchmarks, enhancing grounded reasoning capabilities during training and directly enabling reasoning on raw videos without visual prompts at inference.

👉 Paper link: https://huggingface.co/papers/2603.14659

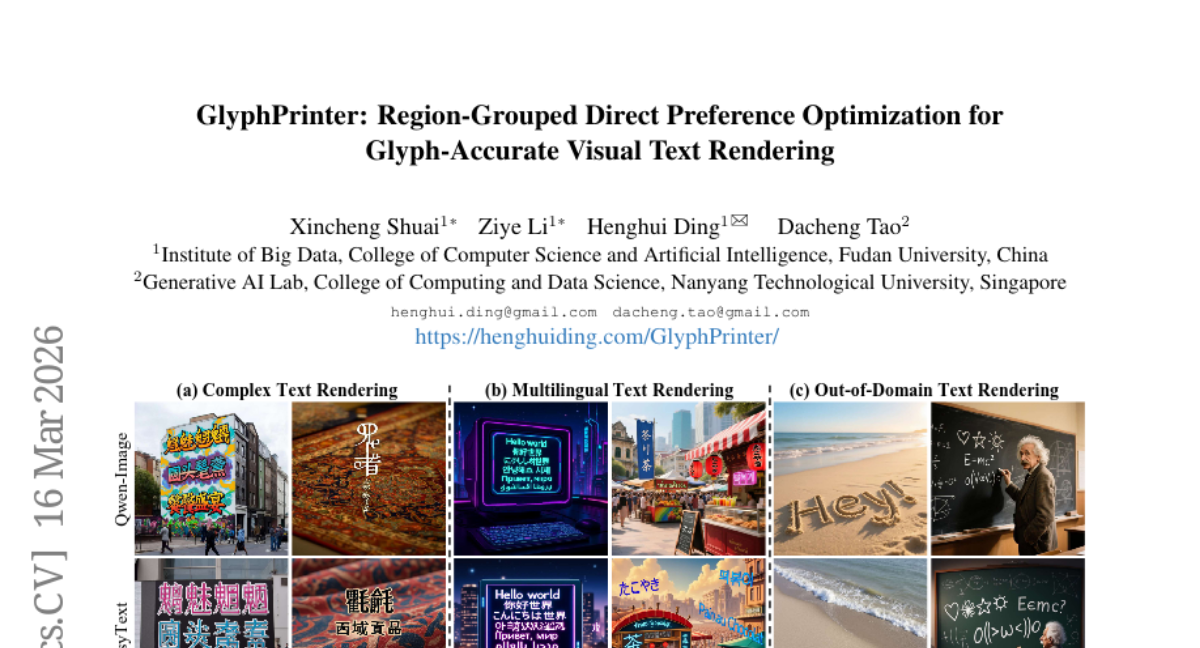

15. GlyphPrinter: Region-Grouped Direct Preference Optimization for Glyph-Accurate Visual Text Rendering

🔑 Keywords: GlyphPrinter, Region-Grouped DPO, Direct Preference Optimization, glyph accuracy, stylization

💡 Category: Computer Vision

🌟 Research Objective:

– The study aims to enhance glyph accuracy in visual text rendering by addressing limitations in existing methods that rely heavily on large datasets and are prone to stylization issues.

🛠️ Research Methods:

– The authors introduce GlyphPrinter, a preference-based text rendering method inspired by Direct Preference Optimization (DPO), and develop a new dataset, GlyphCorrector, with region-level glyph preference annotations.

– They propose Region-Grouped DPO (R-GDPO) to optimize preferences at a regional level within and between samples.

💬 Research Conclusions:

– GlyphPrinter demonstrates superior glyph accuracy compared to existing text rendering methods, maintaining a balance between stylization and precision due to its innovative region-based optimization strategy.

👉 Paper link: https://huggingface.co/papers/2603.15616

16. Motivation in Large Language Models

🔑 Keywords: Large Language Models, Motivation, Human Psychology

💡 Category: Natural Language Processing

🌟 Research Objective:

– Investigate whether large language models exhibit something akin to human motivation and how it relates to their behavior.

🛠️ Research Methods:

– Conduct experiments that examine self-reported motivation of LLMs, their behavioral correlations, and influence by external factors.

💬 Research Conclusions:

– LLMs exhibit structured motivational patterns similar to human psychology, connecting self-reports, choices, and performance.

– Motivation acts as a coherent organizing construct for LLM behavior, revealing dynamics akin to human motivation.

👉 Paper link: https://huggingface.co/papers/2603.14347

17. Efficient Document Parsing via Parallel Token Prediction

🔑 Keywords: Document parsing, Vision-Language Models (VLMs), Parallel-Token Prediction, decoding speed, model generalization

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To enhance the efficiency and speed of document parsing using vision-language models by overcoming the limitations of autoregressive decoding.

🛠️ Research Methods:

– Introduction of Parallel-Token Prediction (PTP), a plugable and model-agnostic method for parallel token generation.

– Integration of learnable tokens into input sequences for improved parallel decoding.

– Development of a data generation pipeline for producing large-scale, high-quality training data.

💬 Research Conclusions:

– The proposed method significantly improves decoding speed (1.6x-2.2x).

– Reduces model hallucinations and enhances generalization abilities, as evidenced by experiments on OmniDocBench and olmOCR-bench.

👉 Paper link: https://huggingface.co/papers/2603.15206

18. Towards Generalizable Robotic Manipulation in Dynamic Environments

🔑 Keywords: Vision-Language-Action models, dynamic manipulation, DOMINO, PUMA, spatiotemporal reasoning

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– The study introduces DOMINO, a large-scale dataset and benchmark designed to enhance the performance of Vision-Language-Action (VLA) models in dynamic environments with moving targets.

🛠️ Research Methods:

– Comprehensive experiments are performed to evaluate existing VLAs on dynamic tasks, with the proposal of PUMA, a dynamics-aware VLA architecture leveraging historical optical flow and specialized queries for improved predictions.

💬 Research Conclusions:

– PUMA achieves state-of-the-art performance, improving the success rate by 6.3% over baselines and demonstrates robust spatiotemporal representations that can be transferred to static tasks.

👉 Paper link: https://huggingface.co/papers/2603.15620

19. EvoClaw: Evaluating AI Agents on Continuous Software Evolution

🔑 Keywords: AI agents, software evolution, EvoClaw, DeepCommit

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To autonomously construct and evolve customized software for AI agents to interact in dynamic environments, addressing the gap left by existing benchmarks that overlook long-term software evolution.

🛠️ Research Methods:

– Introduction of DeepCommit, which reconstructs verifiable Milestone DAGs from commit logs and develops the EvoClaw benchmark focusing on maintaining system integrity over time.

💬 Research Conclusions:

– Evaluation of 12 frontier models across 4 agent frameworks showed a significant drop in performance from over 80% for isolated tasks to a maximum of 38% in continuous settings, highlighting issues in long-term maintenance and error propagation of AI agents.

👉 Paper link: https://huggingface.co/papers/2603.13428

20. Autonomous Agents Coordinating Distributed Discovery Through Emergent Artifact Exchange

🔑 Keywords: Autonomous agents, Artifact DAG, Provenance-aware governance, Emergent convergence, Traceable reasoning

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The framework aims to enable autonomous scientific investigation by independent agents without central coordination.

🛠️ Research Methods:

– Utilization of a registry with over 300 scientific skills, a DAG-based artifact layer, and agent-based scientific discourse to facilitate research.

– Plannerless coordination through a pressure-based scoring system and schema-overlap matching.

💬 Research Conclusions:

– Demonstrated success across diverse investigations, showcasing heterogeneous tool chaining and emergent convergence with traceable reasoning from computation to findings.

👉 Paper link: https://huggingface.co/papers/2603.14312

21. SNCE: Geometry-Aware Supervision for Scalable Discrete Image Generation

🔑 Keywords: VQ codebook, Stochastic Neighbor Cross Entropy Minimization, discrete image generation, convergence speed

💡 Category: Generative Models

🌟 Research Objective:

– To address the optimization challenges of large-codebook discrete image generators by proposing a novel training objective, Stochastic Neighbor Cross Entropy Minimization (SNCE).

🛠️ Research Methods:

– Implemented SNCE which uses a soft categorical distribution based on the proximity of embeddings, fostering a semantically meaningful geometric structure.

– Conducted extensive experiments on ImageNet-256, text-to-image synthesis, and image editing tasks.

💬 Research Conclusions:

– SNCE improves the convergence speed and overall generation quality over standard cross-entropy objectives.

👉 Paper link: https://huggingface.co/papers/2603.15150

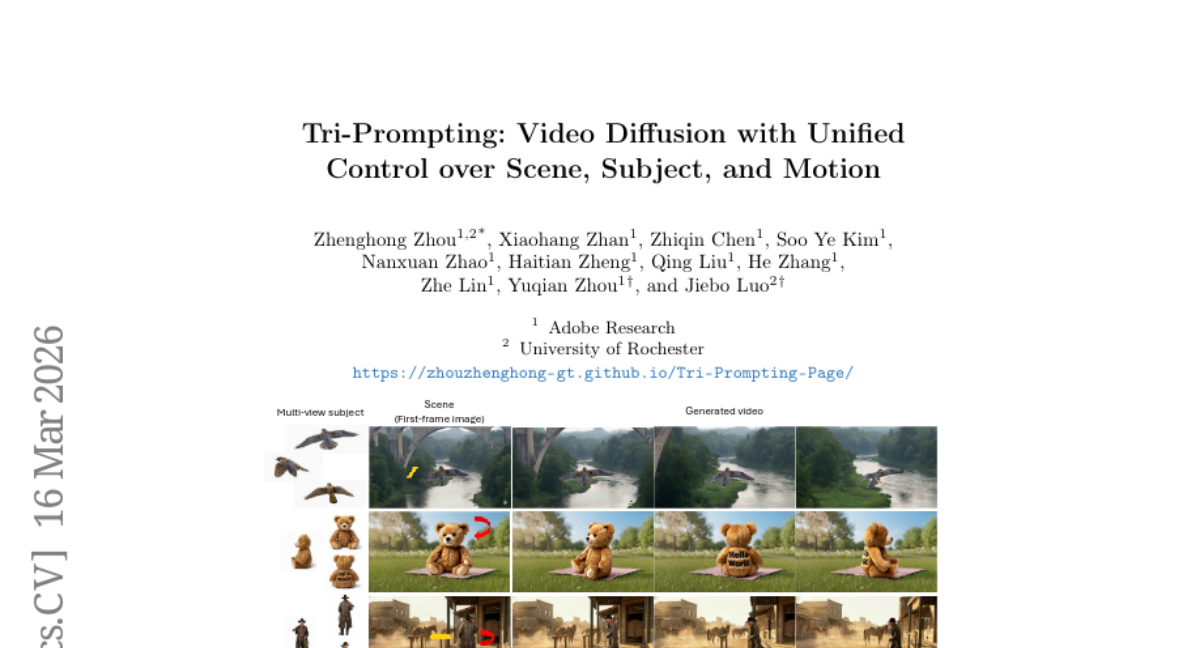

22. Tri-Prompting: Video Diffusion with Unified Control over Scene, Subject, and Motion

🔑 Keywords: Tri-Prompting, video diffusion, scene composition, multi-view subject consistency, motion control

💡 Category: Generative Models

🌟 Research Objective:

– The objective of the research is to develop a unified framework called Tri-Prompting for video diffusion that offers joint control over scene composition, multi-view subject consistency, and motion.

🛠️ Research Methods:

– The study introduces Tri-Prompting, a framework and two-stage training paradigm, utilizing a dual-condition motion module with 3D tracking points for background scenes and downsampled RGB cues for foreground subjects. It also proposes an inference ControlNet scale schedule to balance controllability and visual realism.

💬 Research Conclusions:

– Tri-Prompting significantly outperforms existing methods like Phantom and DaS in multi-view subject identity, 3D consistency, and motion accuracy, paving the way for more versatile and controllable AI-generated video content.

👉 Paper link: https://huggingface.co/papers/2603.15614

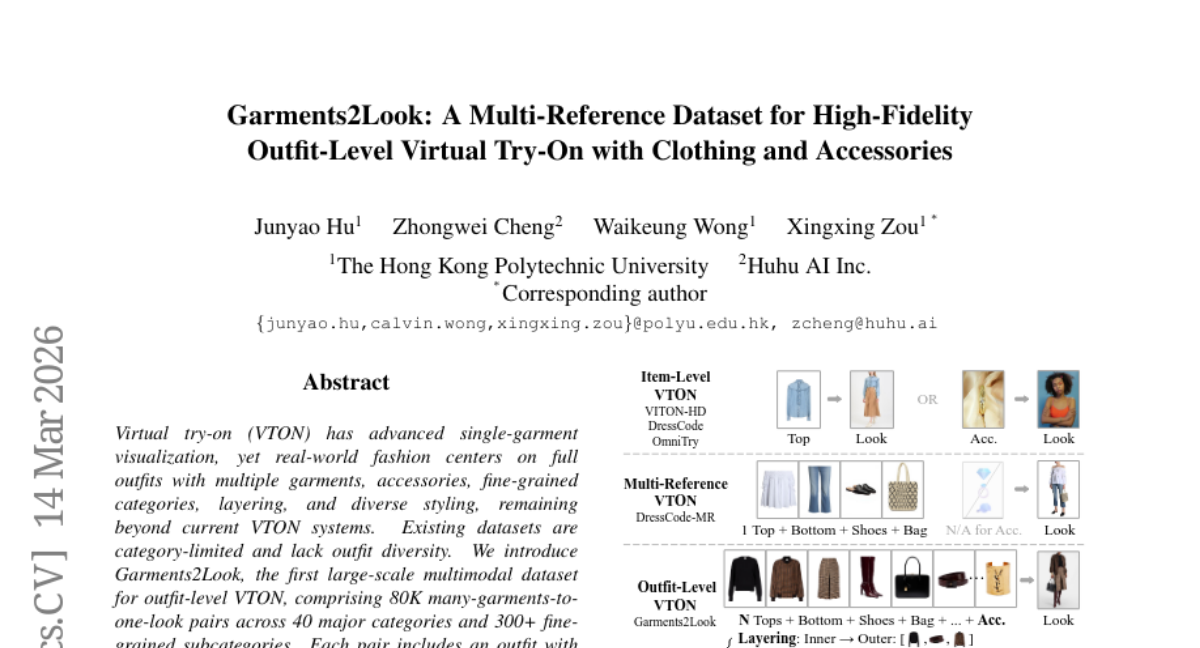

23. Garments2Look: A Multi-Reference Dataset for High-Fidelity Outfit-Level Virtual Try-On with Clothing and Accessories

🔑 Keywords: Virtual try-on, VTON, Garments2Look, Multimodal Dataset, AI Native

💡 Category: Computer Vision

🌟 Research Objective:

– To introduce a large-scale multimodal dataset called Garments2Look for outfit-level virtual try-on, with comprehensive garment and outfit diversity.

🛠️ Research Methods:

– Developed a synthesis pipeline for constructing outfit lists and generating try-on results with heuristic and automated filtering followed by human validation.

💬 Research Conclusions:

– Current state-of-the-art VTON methods and image editing models struggle with full outfit try-ons, resulting in issues with layering, styling, and artifact generation.

👉 Paper link: https://huggingface.co/papers/2603.14153

24.

25. sebis at ArchEHR-QA 2026: How Much Can You Do Locally? Evaluating Grounded EHR QA on a Single Notebook

🔑 Keywords: EHR QA, Privacy-Preserving, Local Systems

💡 Category: AI in Healthcare

🌟 Research Objective:

– The research aims to explore the feasibility of conducting EHR-based clinical question answering using systems running fully on local hardware without relying on cloud infrastructures.

🛠️ Research Methods:

– The study evaluates various approaches in the ArchEHR-QA 2026 shared task, utilizing commodity hardware and conducting experiments locally.

💬 Research Conclusions:

– The findings demonstrate that competitive performance in EHR QA can be achieved with smaller, locally-configured models, offering a viable option for privacy-preserving applications.

👉 Paper link: https://huggingface.co/papers/2603.13962

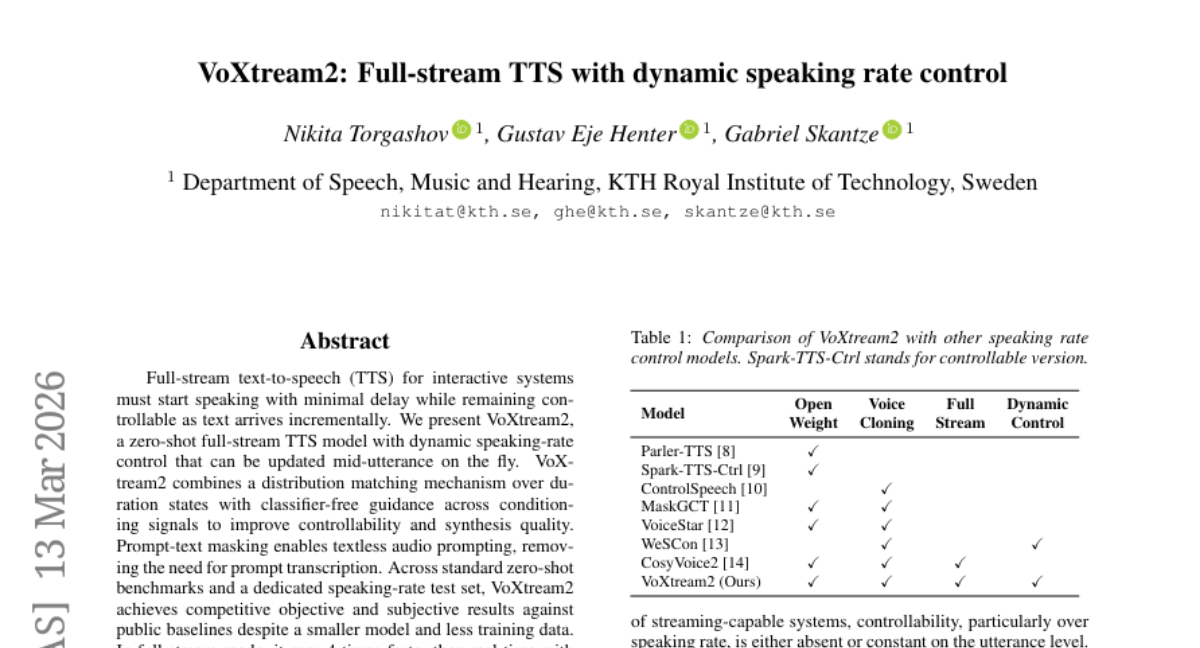

26. VoXtream2: Full-stream TTS with dynamic speaking rate control

🔑 Keywords: VoXtream2, full-stream TTS, dynamic speaking-rate control, zero-shot

💡 Category: Natural Language Processing

🌟 Research Objective:

– Investigate a zero-shot full-stream text-to-speech model that provides minimal latency, allowing interactive systems to initiate speech with dynamic speaking-rate control as text arrives incrementally.

🛠️ Research Methods:

– Use distribution matching over duration states and classifier-free guidance to enhance controllability and synthesis quality. Implement prompt-text masking to enable textless audio prompting without the need for transcription.

💬 Research Conclusions:

– VoXtream2 demonstrates competitive performance in both objective and subjective tests against existing baselines with a smaller model and less training data. It operates four times faster than real time with first-packet latency of 74 ms on a consumer GPU, making it effective for real-time applications.

👉 Paper link: https://huggingface.co/papers/2603.13518

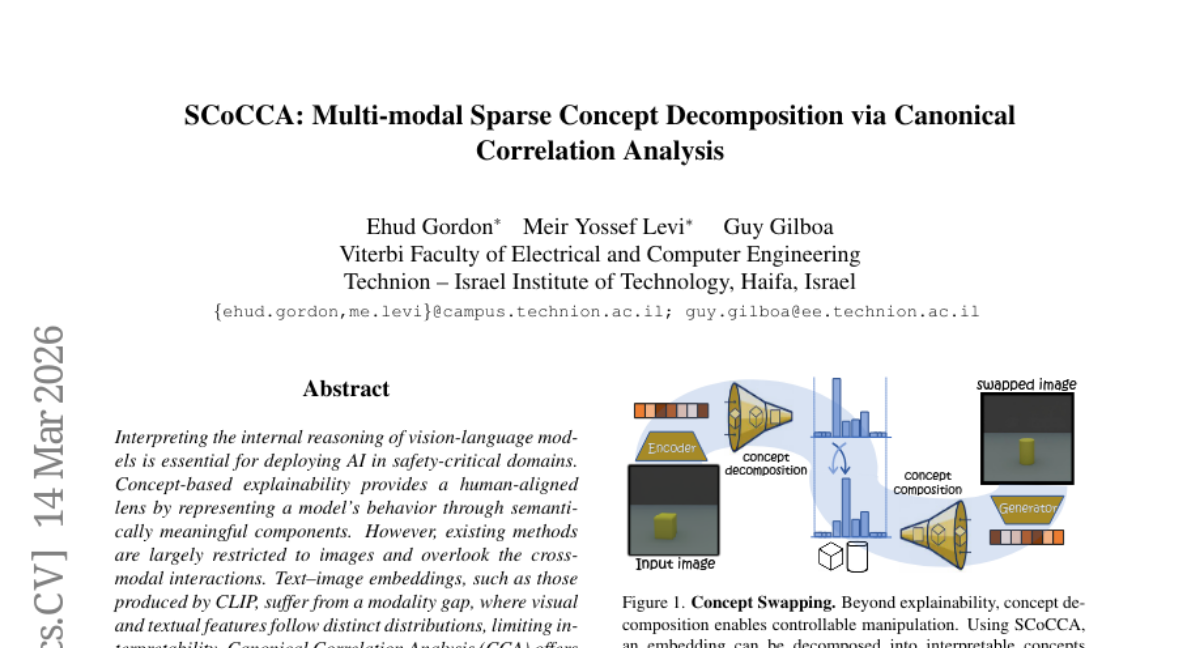

27. SCoCCA: Multi-modal Sparse Concept Decomposition via Canonical Correlation Analysis

🔑 Keywords: concept-based explainability, cross-modal embeddings, Canonical Correlation Analysis (CCA), Sparse Concept CCA (SCoCCA), AI Native

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Explore how concept-based explainability can be extended to multi-modal embeddings, specifically in vision-language models, to enhance interpretability.

🛠️ Research Methods:

– Introduce the use of Canonical Correlation Analysis (CCA) to align features from distinct distributions and optimize cross-modal alignments. Develop the Concept CCA (CoCCA) framework and propose Sparse Concept CCA (SCoCCA) for more discriminative concepts.

💬 Research Conclusions:

– The proposed framework, CoCCA, and its extension, SCoCCA, allow for better alignment and semantic manipulation in multi-modal embeddings, achieving state-of-the-art performance in concept discovery tasks.

👉 Paper link: https://huggingface.co/papers/2603.13884

28. Spectrum Matching: a Unified Perspective for Superior Diffusability in Latent Diffusion

🔑 Keywords: Variational Autoencoders, Latent Diffusion, Spectrum Matching, CelebA, ImageNet

💡 Category: Generative Models

🌟 Research Objective:

– To study the learnability of Variational Autoencoders (VAE) in latent diffusion and propose the Spectrum Matching Hypothesis to enhance it.

🛠️ Research Methods:

– Introduced Spectrum Matching techniques such as Encoding Spectrum Matching and Decoding Spectrum Matching to align spectral densities and maintain frequency semantics.

– Conducted experiments on CelebA and ImageNet datasets to validate the effectiveness of the approach.

💬 Research Conclusions:

– Spectrum Matching provides a unified understanding of why some latents are over-noisy or over-smoothed, outperforming prior methods like VA-VAE and EQ-VAE.

– Extended the spectral framework to representation alignment, employing a DoG-based method for further performance improvement in REPA.

👉 Paper link: https://huggingface.co/papers/2603.14645

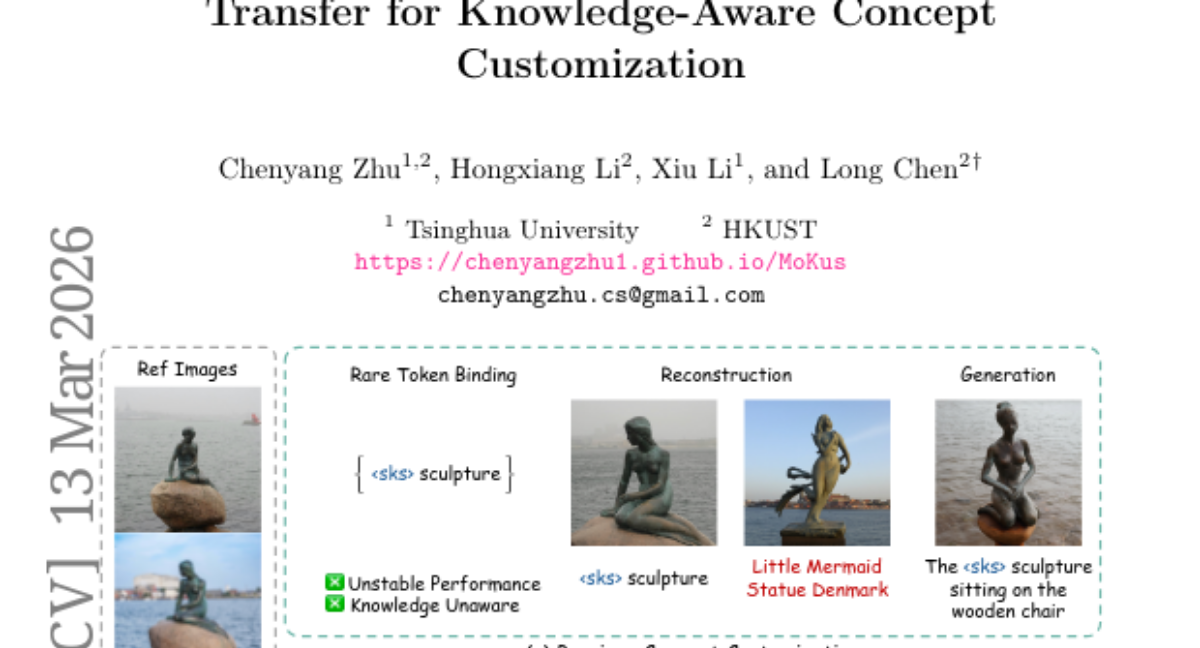

29. MoKus: Leveraging Cross-Modal Knowledge Transfer for Knowledge-Aware Concept Customization

🔑 Keywords: Knowledge-aware Concept Customization, Visual Concepts, Textual Knowledge, Cross-modal Knowledge Transfer, MoKus

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introduce Knowledge-aware Concept Customization task to bind textual knowledge to visual concepts.

🛠️ Research Methods:

– Proposes MoKus framework, a two-stage approach involving visual anchor learning and textual knowledge updating.

💬 Research Conclusions:

– MoKus outperforms state-of-the-art methods, with potential extensions to other applications such as virtual concept creation and concept erasure.

👉 Paper link: https://huggingface.co/papers/2603.12743

30. Mind the Shift: Decoding Monetary Policy Stance from FOMC Statements with Large Language Models

🔑 Keywords: Delta-Consistent Scoring, Large Language Model, monetary-policy signals, stance detection, self-supervision

💡 Category: AI in Finance

🌟 Research Objective:

– To develop a framework, Delta-Consistent Scoring (DCS), that accurately measures the hawkish-dovish stance in Federal Open Market Committee (FOMC) statements by considering relative shifts in meetings rather than isolated labels.

🛠️ Research Methods:

– Introduction of DCS, which maps frozen large language model representations to stance scores, leveraging self-supervision from consecutive meetings. It jointly models absolute stance and relative shifts, achieving coherence without manual annotations.

💬 Research Conclusions:

– DCS outperforms traditional supervised classification methods, achieving up to 71.1% accuracy in sentence-level classification. The framework successfully correlates stance scores with economic indicators like inflation and Treasury yields, revealing the encoded monetary-policy signals in LLM representations.

👉 Paper link: https://huggingface.co/papers/2603.14313

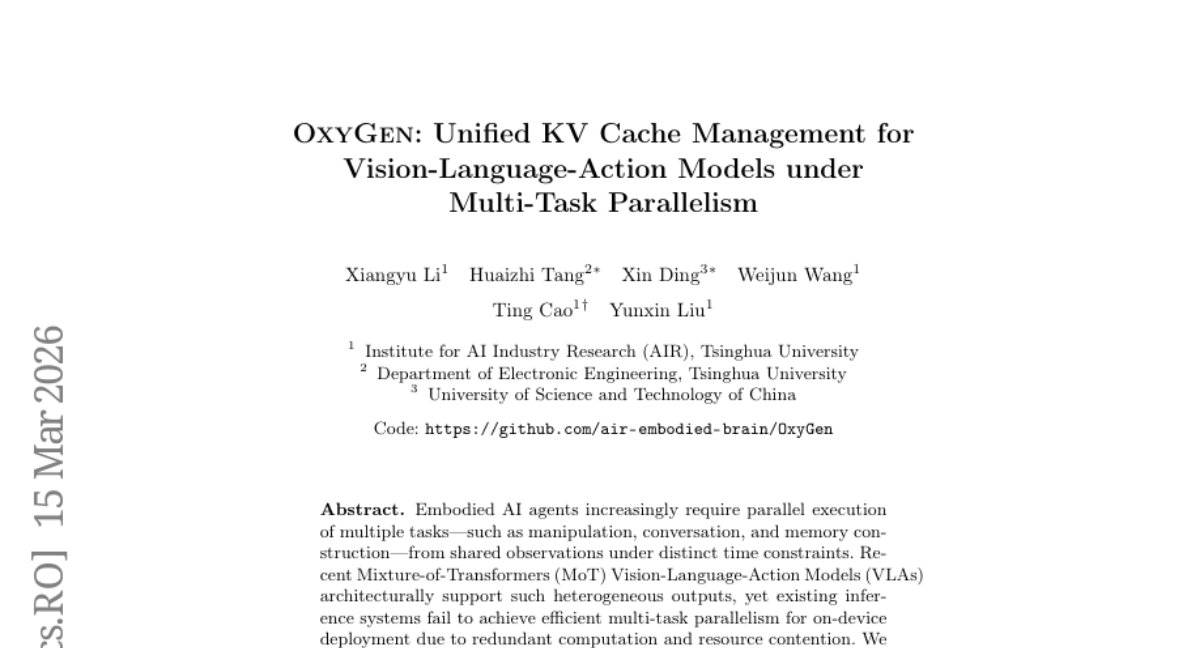

31. OxyGen: Unified KV Cache Management for Vision-Language-Action Models under Multi-Task Parallelism

🔑 Keywords: Embodied AI, Mixture-of-Transformers, KV Cache Management, Multi-task Parallelism, Language Throughput

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To optimize the inference of Mixture-of-Transformers Vision-Language-Action Models for efficient multi-task execution in Embodied AI agents through unified KV cache management.

🛠️ Research Methods:

– Introduction of a unified KV cache management paradigm enabling cross-task KV sharing and cross-frame continuous batching to eliminate redundant computation and decouple language decoding from action generation.

💬 Research Conclusions:

– Implementation of the unified KV cache management paradigm in π_{0.5} achieves up to 3.7 times speedup in performance with high language throughput and action frequency, maintaining action quality.

👉 Paper link: https://huggingface.co/papers/2603.14371

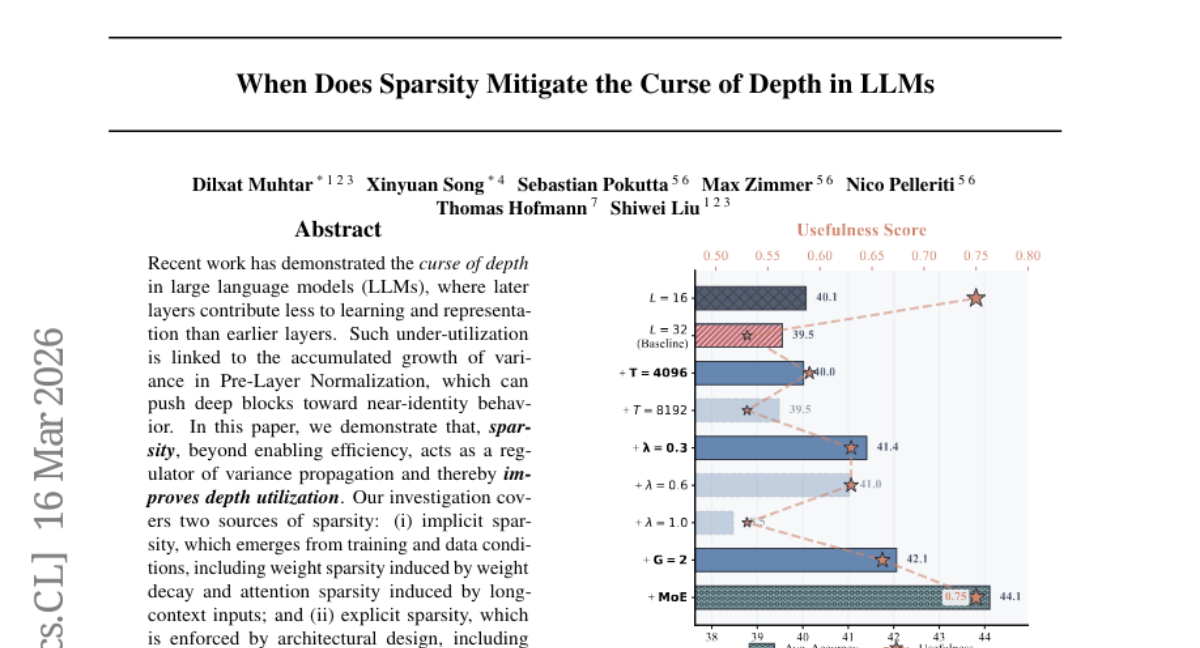

32. When Does Sparsity Mitigate the Curse of Depth in LLMs

🔑 Keywords: Sparsity, Depth Utilization, Large Language Models, Pre-Layer Normalization

💡 Category: Natural Language Processing

🌟 Research Objective:

– Investigate how sparsity influences layer utilization in large language models to enhance efficiency and depth scaling.

🛠️ Research Methods:

– Analysis of implicit and explicit sparsity sources, including weight and attention sparsity, through controlled depth-scaling experiments and layer effectiveness interventions.

💬 Research Conclusions:

– Sparsity aids in reducing output variance, improving layer utilization, and promoting functional differentiation, resulting in a 4.6% accuracy improvement on LLMs in downstream tasks.

👉 Paper link: https://huggingface.co/papers/2603.15389

33. FlashMotion: Few-Step Controllable Video Generation with Trajectory Guidance

🔑 Keywords: Video Generation, Trajectory-Controllable, FlashMotion, Video Quality, Multi-Step

💡 Category: Generative Models

🌟 Research Objective:

– To develop a novel training framework, called FlashMotion, that enhances trajectory-controllable video generation efficiency and quality.

🛠️ Research Methods:

– Utilization of an adapter-based architecture for precise trajectory control.

– Distillation of a multi-step video generator into a few-step version, coupled with a hybrid strategy of diffusion and adversarial objectives for finetuning.

💬 Research Conclusions:

– FlashMotion outperforms existing video distillation methods and prior multi-step models in producing high-quality and trajectory-accurate videos, as demonstrated by experiments using the benchmark FlashBench.

👉 Paper link: https://huggingface.co/papers/2603.12146

34. HorizonMath: Measuring AI Progress Toward Mathematical Discovery with Automatic Verification

🔑 Keywords: AI Native, HorizonMath, mathematical reasoning, computational verification

💡 Category: Natural Language Processing

🌟 Research Objective:

– The objective is to assess if AI can make progress on unsolved mathematical problems through the introduction of HorizonMath, a benchmark specifically designed for this purpose.

🛠️ Research Methods:

– Developing a benchmark with over 100 unsolved problems across eight domains in mathematics, paired with an open-source evaluation framework for automated verification.

💬 Research Conclusions:

– State-of-the-art large language models currently score near 0%, indicating the difficulty and novelty of the problems. However, GPT 5.4 Pro proposed solutions to two problems, potentially contributing novel mathematical insights.

👉 Paper link: https://huggingface.co/papers/2603.15617

35. RS-WorldModel: a Unified Model for Remote Sensing Understanding and Future Sense Forecasting

🔑 Keywords: Remote Sensing, World Model, Spatiotemporal Change, Text-Guided Forecasting, Reinforcement Optimization

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To create a unified world model (RS-WorldModel) for remote sensing that simultaneously addresses spatiotemporal change understanding and text-guided future scene forecasting.

🛠️ Research Methods:

– Developed a new model trained through three stages: Geo-Aware Generative Pre-training (GAGP); synergistic instruction tuning (SIT); and verifiable reinforcement optimization (VRO).

💬 Research Conclusions:

– RS-WorldModel, with only 2B parameters, surpasses models up to 120 times larger on change question-answering metrics and achieves superior FID on text-guided forecasts compared to all current baselines, including the closed-source Gemini-2.5-Flash Image.

👉 Paper link: https://huggingface.co/papers/2603.14941

36. Supervised Fine-Tuning versus Reinforcement Learning: A Study of Post-Training Methods for Large Language Models

🔑 Keywords: Pre-trained Large Language Model, Supervised Fine-Tuning, Reinforcement Learning, Hybrid Training Pipelines, Large Language Model post-training

💡 Category: Natural Language Processing

🌟 Research Objective:

– To provide a comprehensive and unified perspective on post-training of Pre-trained Large Language Models using Supervised Fine-Tuning and Reinforcement Learning.

🛠️ Research Methods:

– In-depth overview of SFT and RL, examining objectives, algorithmic structures, and data requirements.

– Analysis of the interplay between SFT and RL, highlighting frameworks and hybrid training pipelines.

💬 Research Conclusions:

– Identification of emerging trends towards hybrid post-training paradigms.

– Clarification of when and why each method is most effective within a unified framework.

– Outlines promising directions for future research in scalable, efficient, and generalizable LLM post-training.

👉 Paper link: https://huggingface.co/papers/2603.13985

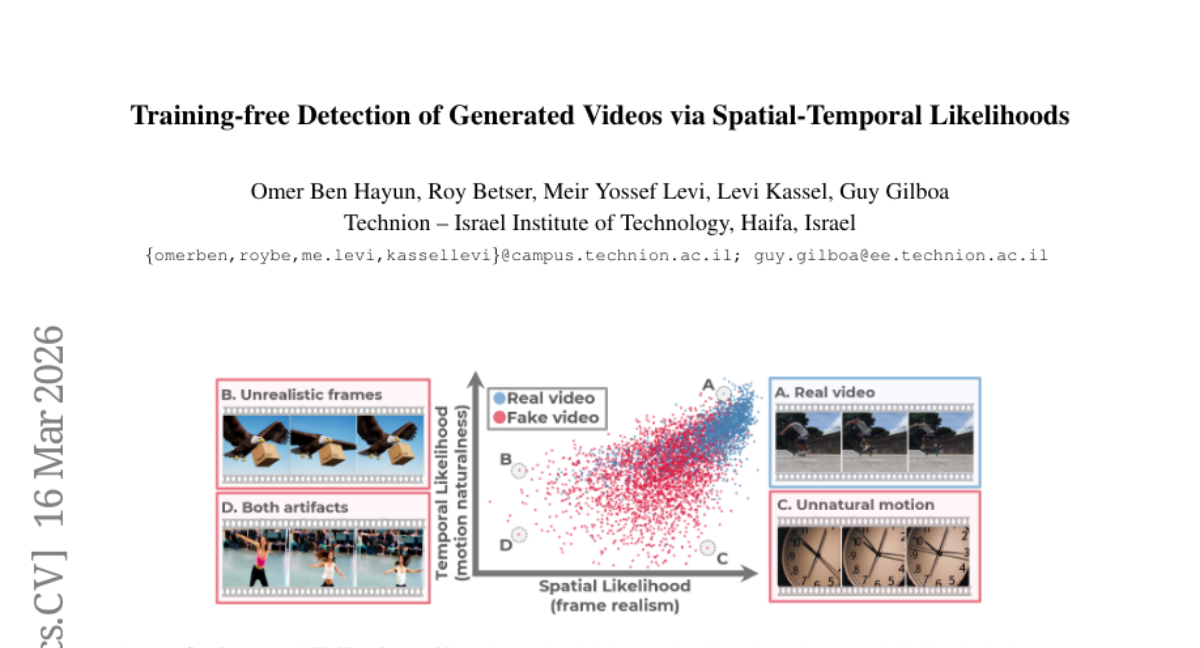

37. Training-free Detection of Generated Videos via Spatial-Temporal Likelihoods

🔑 Keywords: video generation, misinformation detection, zero-shot approach, probabilistic framework, STALL

💡 Category: Computer Vision

🌟 Research Objective:

– Address the challenges in detecting synthetic videos due to the limitations of current detectors and the rapid evolution of generative models.

🛠️ Research Methods:

– Introduce STALL, a training-free, probabilistic detector that evaluates both spatial and temporal aspects for zero-shot detection of synthetic videos.

💬 Research Conclusions:

– STALL outperforms existing image- and video-based detectors on established public benchmarks and a new benchmark called ComGenVid.

👉 Paper link: https://huggingface.co/papers/2603.15026

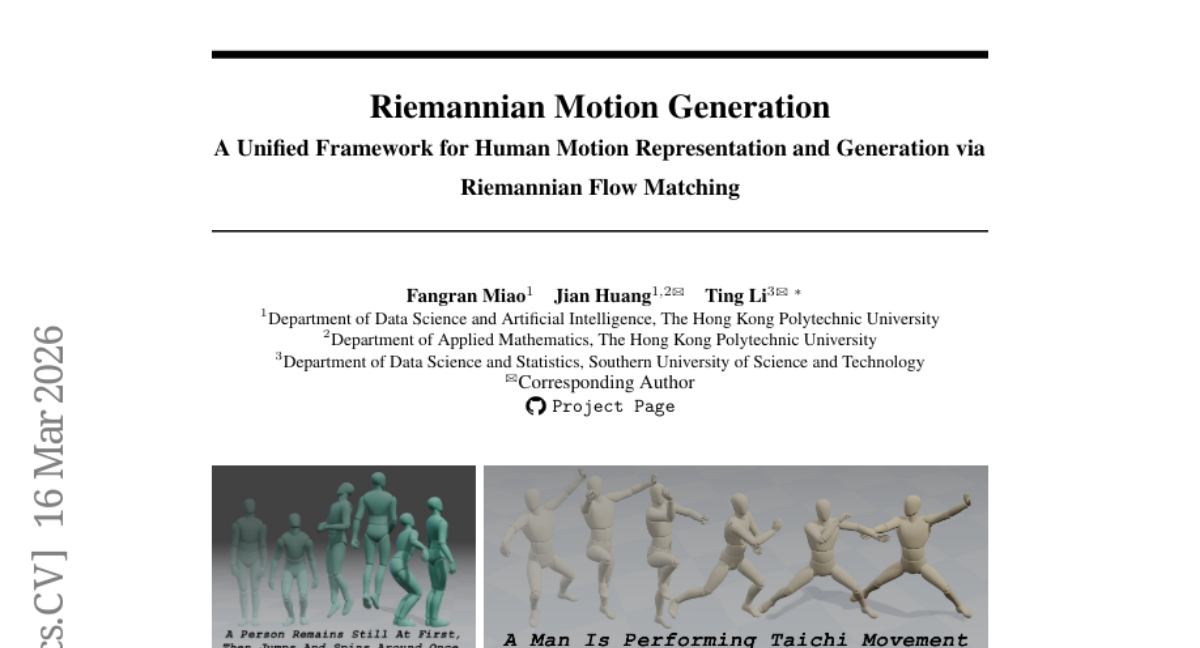

38. Riemannian Motion Generation: A Unified Framework for Human Motion Representation and Generation via Riemannian Flow Matching

🔑 Keywords: Riemannian Motion Generation, motion generation, Riemannian flow matching, geometry-aware modeling

💡 Category: Generative Models

🌟 Research Objective:

– Introduce a framework called Riemannian Motion Generation (RMG) for generating human motion on a product manifold, leveraging non-Euclidean geometry structures.

🛠️ Research Methods:

– Factorization of motion into manifold factors, use of geodesic interpolation, tangent-space supervision, and manifold-preserving ODE integration for learning and sampling dynamics.

💬 Research Conclusions:

– RMG achieves state-of-the-art performance on HumanML3D and surpasses baselines on MotionMillion, proving effective and stable with a T+R (translation + rotations) representation, emphasizing the practicality of geometry-aware modeling for high-fidelity motion generation.

👉 Paper link: https://huggingface.co/papers/2603.15016

39. FineRMoE: Dimension Expansion for Finer-Grained Expert with Its Upcycling Approach

🔑 Keywords: FineRMoE, fine-grained expert design, parameter efficiency, decoding throughput, scaling law

💡 Category: Machine Learning

🌟 Research Objective:

– The study aims to improve the model performance beyond the single-dimension limit by proposing a FineR-Grained MoE (FineRMoE) architecture that expands expert design to both intermediate and output dimensions.

🛠️ Research Methods:

– Introduces a bi-level sparse forward computation paradigm and a specialized routing mechanism to enhance activation control.

– Devises a generalized upcycling method for cost-effective construction of FineRMoE, reducing training costs.

💬 Research Conclusions:

– FineRMoE demonstrates superior performance across ten benchmarks, achieving 6 times higher parameter efficiency, significantly lower prefill latency, and much higher decoding throughput during inference compared to the strongest baseline.

👉 Paper link: https://huggingface.co/papers/2603.13364

40. Panoramic Affordance Prediction

🔑 Keywords: Panoramic Affordance Prediction, Embodied AI, PAP-12K, 360-degree imagery, Coarse-to-fine pipeline

💡 Category: Computer Vision

🌟 Research Objective:

– To explore Panoramic Affordance Prediction using 360-degree imagery for improved scene understanding in embodied AI.

🛠️ Research Methods:

– Introduction of PAP-12K, a benchmark dataset with ultra-high-resolution panoramic images and annotated QA pairs and affordance masks.

– Development of PAP framework, inspired by the human foveal system, utilizing recursive visual routing and adaptive gaze mechanisms to address challenges in panoramic vision.

💬 Research Conclusions:

– Traditional affordance prediction methods suffer performance degradation with panoramic images.

– The PAP framework outperforms state-of-the-art baselines, demonstrating the potential of panoramic perception for enhanced embodied intelligence.

👉 Paper link: https://huggingface.co/papers/2603.15558

41. TERMINATOR: Learning Optimal Exit Points for Early Stopping in Chain-of-Thought Reasoning

🔑 Keywords: TERMINATOR, Large Reasoning Models, Chain-of-Thought, overthinking, early-exit strategy

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The study introduces TERMINATOR, an early-exit method designed to identify optimal reasoning lengths in large reasoning models to enhance computational efficiency.

🛠️ Research Methods:

– The method leverages the predictability of the first arrival of an LRM’s final answer to create a dataset of optimal reasoning lengths, which is then used to train TERMINATOR for practical applications.

💬 Research Conclusions:

– TERMINATOR significantly reduces Chain-of-Thought lengths by 14%-55% on average across four practical datasets, outperforming current state-of-the-art methods in the process.

👉 Paper link: https://huggingface.co/papers/2603.12529

42. POLCA: Stochastic Generative Optimization with LLM

🔑 Keywords: Stochastic Generative Optimization, Generative Language Model, POLCA, Exploration-Exploitation Tradeoff, Meta-Learning

💡 Category: Generative Models

🌟 Research Objective:

– Develop a framework utilizing a generative language model for optimizing complex systems through stochastic generative optimization.

🛠️ Research Methods:

– Introduced POLCA, a framework with Prioritized Optimization and Local Contextual Aggregation to handle stochasticity and manage solution space expansion.

– Implemented a priority queue and an epsilon-Net mechanism to balance exploration and exploitation and maintain parameter diversity.

– Utilized an LLM Summarizer for meta-learning across historical trials.

💬 Research Conclusions:

– POLCA consistently outperformed state-of-the-art algorithms, achieving robust, sample, and time-efficient performance in both deterministic and stochastic environments across diverse benchmarks.

👉 Paper link: https://huggingface.co/papers/2603.14769

43. ViFeEdit: A Video-Free Tuner of Your Video Diffusion Transformer

🔑 Keywords: Diffusion Transformers, video control, video editing, ViFeEdit, video-free tuning

💡 Category: Generative Models

🌟 Research Objective:

– Extend the capabilities of Diffusion Transformers from image generation to controllable video generation and editing without the use of video data.

🛠️ Research Methods:

– Introduce ViFeEdit, a video-free tuning framework that utilizes 2D images for video diffusion transformers through architectural reparameterization and a dual-path pipeline with separate timestep embeddings.

💬 Research Conclusions:

– ViFeEdit allows for versatile video generation and editing with minimal training on 2D image data, maintaining temporal consistency and delivering promising results in controllable video tasks.

👉 Paper link: https://huggingface.co/papers/2603.15478

44. Effective Distillation to Hybrid xLSTM Architectures

🔑 Keywords: lossless distillation, xLSTM, quadratic attention, transformer-based LLMs

💡 Category: Natural Language Processing

🌟 Research Objective:

– To achieve lossless distillation by matching or exceeding the performance of teacher LLMs with xLSTM-based students using tolerance-corrected Win-and-Tie rates.

🛠️ Research Methods:

– Introduction of a distillation pipeline for xLSTM-based students, including a merging stage to combine linearized experts into a single model.

💬 Research Conclusions:

– Effective distillation pipeline allows xLSTM-based students to recover and, in some cases, exceed the performance of teacher models from Llama, Qwen, and Olmo families, highlighting a path towards more energy-efficient and cost-effective LLMs.

👉 Paper link: https://huggingface.co/papers/2603.15590

45. Attention Residuals

🔑 Keywords: Attention Residuals, PreNorm, Block AttnRes, Large Language Models, AI Systems and Tools

💡 Category: Natural Language Processing

🌟 Research Objective:

– To address the uncontrolled hidden-state growth in large language models due to the uniform aggregation in residual connections with PreNorm.

🛠️ Research Methods:

– Introducing Attention Residuals (AttnRes) using softmax attention over preceding layer outputs.

– Developing Block AttnRes to reduce memory and communication overhead by partitioning layers into blocks.

– Employing a cache-based pipeline communication and a two-phase computation strategy to integrate Block AttnRes efficiently.

💬 Research Conclusions:

– AttnRes achieves more uniform output magnitudes and gradient distribution, enhancing downstream performance across tasks.

– Block AttnRes maintains performance while reducing memory usage, confirmed by scaling law experiments across model sizes.

👉 Paper link: https://huggingface.co/papers/2603.15031

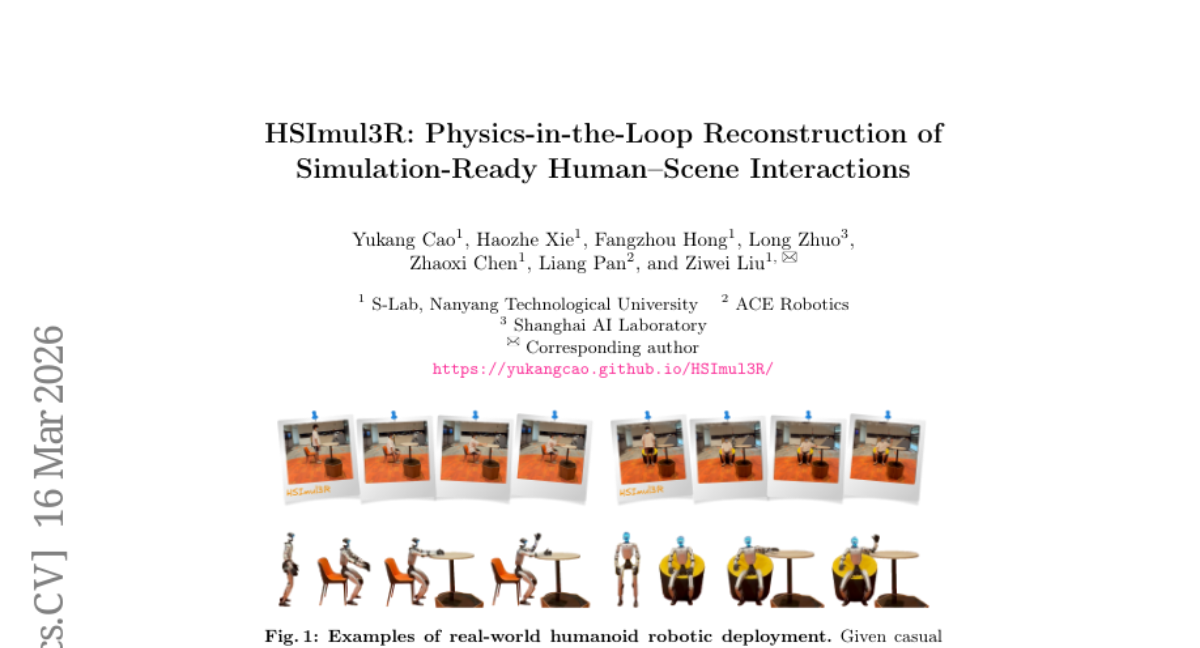

46. HSImul3R: Physics-in-the-Loop Reconstruction of Simulation-Ready Human-Scene Interactions

🔑 Keywords: 3D reconstruction, human-scene interactions, reinforcement learning, physics engine, simulation-ready

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To develop HSImul3R, a unified framework for simulation-ready 3D reconstruction of human-scene interactions from casual captures.

🛠️ Research Methods:

– Utilizes a physics-grounded bi-directional optimization pipeline involving Scene-targeted Reinforcement Learning to optimize human motion and Direct Simulation Reward Optimization to refine scene geometry.

💬 Research Conclusions:

– HSImul3R successfully bridges the perception-simulation gap by incorporating physics into its process, producing stable and ready-to-use HSI reconstructions for real-world humanoid robots.

👉 Paper link: https://huggingface.co/papers/2603.15612

47. OpenSeeker: Democratizing Frontier Search Agents by Fully Open-Sourcing Training Data

🔑 Keywords: OpenSeeker, Large Language Model, search agent, high-quality training data, collaborative ecosystem

💡 Category: Natural Language Processing

🌟 Research Objective:

– To introduce OpenSeeker, the first fully open-source search agent, aiming to advance frontier-level performance in search capabilities for Large Language Model agents by overcoming the scarcity of high-quality training data.

🛠️ Research Methods:

– Implemented two core technical innovations: Fact-grounded scalable controllable QA synthesis and denoised trajectory synthesis to enhance complex reasoning tasks and high-quality action generation.

💬 Research Conclusions:

– OpenSeeker, even with a limited training dataset of 11.7k synthesized samples, achieves state-of-the-art performance across multiple benchmarks, outperforming existing open-source agents and even some industrial competitors.

– The open-sourcing of the model and data aims to democratize search agent research and foster a transparent, collaborative ecosystem.

👉 Paper link: https://huggingface.co/papers/2603.15594