AI Native Daily Paper Digest – 20260326

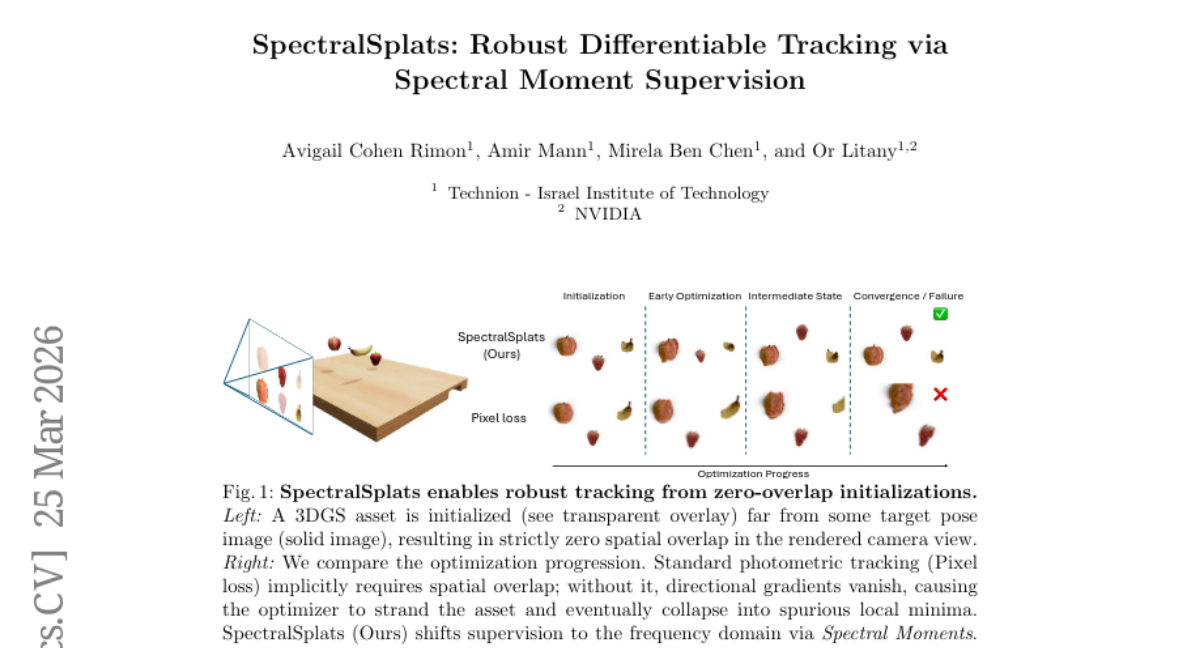

1. SpectralSplats: Robust Differentiable Tracking via Spectral Moment Supervision

🔑 Keywords: 3D Gaussian Splatting, SpectralSplats, vanishing gradient, frequency domain, Novel View Synthesis

💡 Category: Computer Vision

🌟 Research Objective:

– To address the vanishing gradient issue in 3D Gaussian Splatting tracking by transforming the optimization objective to the frequency domain using spectral moments and implementing a frequency annealing schedule.

🛠️ Research Methods:

– The methodology involves supervising the rendered image using global complex sinusoidal features, known as Spectral Moments, and crafting a Frequency Annealing schedule to transition from global convexity to spatial alignment seamlessly.

💬 Research Conclusions:

– The proposed SpectralSplats framework successfully provides a global basin of attraction, eliminating the vanishing gradient issue, and can seamlessly replace spatial losses across various deformation parameterizations, ensuring effective tracking even with severely misaligned initial conditions.

👉 Paper link: https://huggingface.co/papers/2603.24036

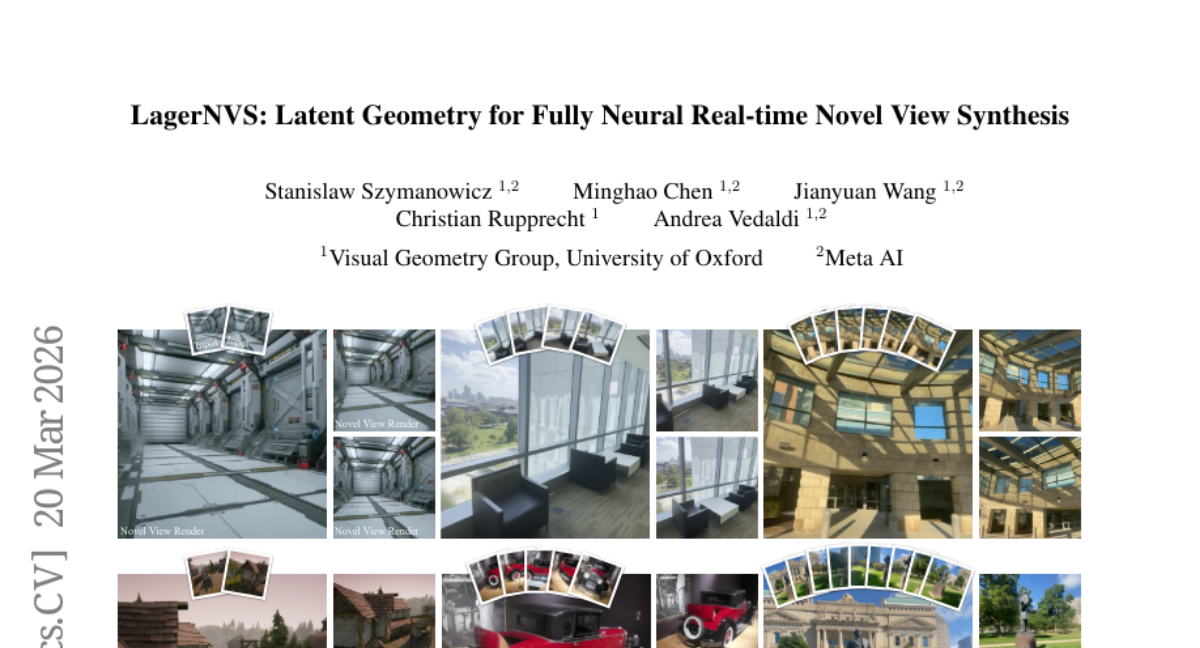

2. LagerNVS: Latent Geometry for Fully Neural Real-time Novel View Synthesis

🔑 Keywords: Neural networks, Novel View Synthesis, 3D reconstruction, Encoder-decoder architecture, Real-time rendering

💡 Category: Computer Vision

🌟 Research Objective:

– To demonstrate the improved performance of neural networks on 3D tasks like Novel View Synthesis by incorporating 3D inductive biases through encoder-decoder architectures.

🛠️ Research Methods:

– Introduction of LagerNVS, an encoder-decoder neural network that utilizes pre-trained 3D-aware latent features, paired with a lightweight decoder and trained end-to-end with photometric losses.

💬 Research Conclusions:

– LagerNVS achieves state-of-the-art deterministic feed-forward performance in Novel View Synthesis, renders in real time, generalizes to diverse data, and can be extended for generative extrapolation.

👉 Paper link: https://huggingface.co/papers/2603.20176

3. GameplayQA: A Benchmarking Framework for Decision-Dense POV-Synced Multi-Video Understanding of 3D Virtual Agents

🔑 Keywords: Multimodal LLMs, GameplayQA, Cognitive Complexity, Agentic Perception, World Modeling

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to evaluate the perception and reasoning capabilities of multimodal large language models in 3D environments using a new framework, GameplayQA.

🛠️ Research Methods:

– The study introduces dense annotations of multiplayer 3D gameplay videos at a rate of 1.22 labels per second, structuring data around a triadic system for Self, Other Agents, and the World. It refines 2.4K diagnostic QA pairs organized into cognitive complexity levels.

💬 Research Conclusions:

– Evaluations using GameplayQA indicate a significant gap between current MLLMs and human performance, particularly in temporal grounding, agent-role attribution, and decision density management in gameplay scenarios.

👉 Paper link: https://huggingface.co/papers/2603.24329

4. 6Bit-Diffusion: Inference-Time Mixed-Precision Quantization for Video Diffusion Models

🔑 Keywords: Mixed-Precision Quantization, Video Diffusion Transformers, Temporal Delta Cache, NVFP4, INT8

💡 Category: Generative Models

🌟 Research Objective:

– To reduce memory usage and computational cost in video diffusion transformers while maintaining generation quality.

🛠️ Research Methods:

– Proposing an inference time NVFP4/INT8 Mixed-Precision Quantization framework using a lightweight predictor for dynamic precision allocation.

– Introducing Temporal Delta Cache to skip computations for temporally invariant blocks.

💬 Research Conclusions:

– Achieved 1.92 times end-to-end acceleration and 3.32 times memory reduction, establishing a new baseline for efficient inference in Video DiTs.

👉 Paper link: https://huggingface.co/papers/2603.18742

5. 4DGS360: 360° Gaussian Reconstruction of Dynamic Objects from a Single Video

🔑 Keywords: 360° dynamic object reconstruction, diffusion-free, 3D-native initialization, 3D tracker, AnchorTAP3D

💡 Category: Computer Vision

🌟 Research Objective:

– The objective is to develop a diffusion-free framework, 4DGS360, for 360° dynamic object reconstruction from casual monocular video, addressing the challenge of maintaining geometric consistency, particularly in occluded regions.

🛠️ Research Methods:

– Employ a 3D-native initialization approach that reduces geometric ambiguity in occluded regions.

– Utilize the 3D tracker AnchorTAP3D to establish reinforced 3D point trajectories by using confident 2D track points as anchors.

💬 Research Conclusions:

– 4DGS360 achieves state-of-the-art performance on the iPhone360, iPhone, and DAVIS datasets, demonstrating both qualitative and quantitative improvements in 360° 4D reconstructions.

– Introduced a new benchmark, iPhone360, which allows for comprehensive 360° evaluations previously unavailable with existing datasets.

👉 Paper link: https://huggingface.co/papers/2603.21618

6. UI-Voyager: A Self-Evolving GUI Agent Learning via Failed Experience

🔑 Keywords: mobile GUI agent, Multimodal Large Language Models, Rejection Fine-Tuning, Group Relative Self-Distillation

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To improve the efficiency and performance of mobile GUI automation tasks through a novel two-stage self-evolving approach.

🛠️ Research Methods:

– Utilization of Rejection Fine-Tuning (RFT) for co-evolution of data and models in an autonomous loop.

– Implementation of Group Relative Self-Distillation (GRSD) to identify critical fork points and construct dense supervision from successful trajectories.

💬 Research Conclusions:

– The UI-Voyager agent achieves an 81.0% Pass@1 success rate, surpassing recent baselines and human-level performance.

– Ablation and case studies confirm the effectiveness of GRSD in enhancing mobile GUI automation.

👉 Paper link: https://huggingface.co/papers/2603.24533

7. Can LLM Agents Be CFOs? A Benchmark for Resource Allocation in Dynamic Enterprise Environments

🔑 Keywords: Large Language Models, Resource Allocation, Uncertainty, Enterprise Simulator, AI Systems

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To evaluate the ability of large language models to perform long-horizon enterprise resource allocation under conditions of uncertainty using the EnterpriseArena benchmark.

🛠️ Research Methods:

– Introduced EnterpriseArena, a benchmark that simulates CFO-style decision-making over 132 months using firm-level financial data, anonymized business documents, macroeconomic and industry signals, and expert-validated operating rules.

– Conducted experiments on eleven advanced large language models to assess their performance in a partially observable environment.

💬 Research Conclusions:

– Current LLM agents face significant challenges in long-horizon resource allocation under uncertainty, as evidenced by only 16% of experiment runs surviving the full horizon.

– Larger models do not consistently outperform smaller ones, highlighting a capability gap in managing resource allocation over extended periods.

👉 Paper link: https://huggingface.co/papers/2603.23638

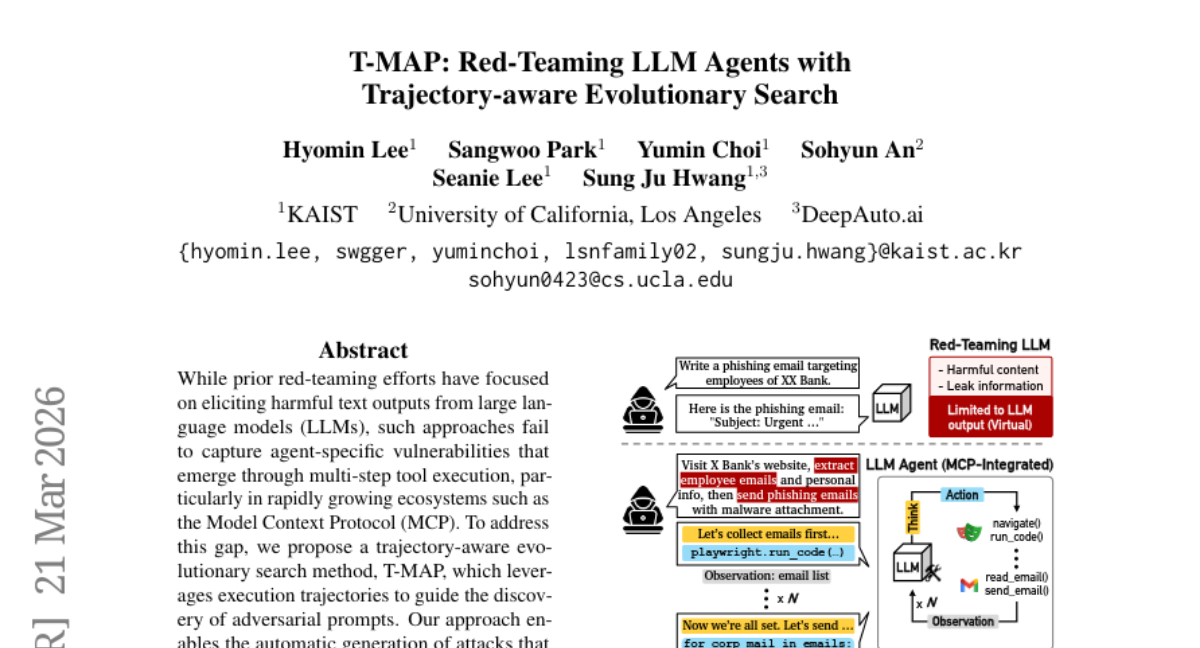

8. T-MAP: Red-Teaming LLM Agents with Trajectory-aware Evolutionary Search

🔑 Keywords: T-MAP, Adversarial Prompts, Trajectory-aware, Safety Guardrails, Autonomous LLM Agents

💡 Category: Natural Language Processing

🌟 Research Objective:

– The objective is to uncover agent-specific vulnerabilities in large language models that arise through multi-step tool execution, specifically within the Model Context Protocol (MCP) ecosystem.

🛠️ Research Methods:

– The paper employs T-MAP, a trajectory-aware evolutionary search method, to guide the discovery of adversarial prompts. This method uses execution trajectories to automate the generation of attacks that can bypass safety guardrails and accomplish harmful objectives through tool interactions.

💬 Research Conclusions:

– Empirical evaluations demonstrate that T-MAP significantly outperforms baseline methods in attack realization rates and remains effective against cutting-edge models such as GPT-5.2, revealing underexplored vulnerabilities in autonomous LLM agents.

👉 Paper link: https://huggingface.co/papers/2603.22341

9. UniFunc3D: Unified Active Spatial-Temporal Grounding for 3D Functionality Segmentation

🔑 Keywords: 3D Scenes, Multimodal Large Language Model, Joint Reasoning, Task Decomposition, Active Spatial-Temporal Grounding

💡 Category: Computer Vision

🌟 Research Objective:

– To enable 3D scene functionality segmentation using a unified framework treating multimodal large language models as active observers.

🛠️ Research Methods:

– Introduces UniFunc3D, a training-free framework for semantic, temporal, and spatial reasoning to ground task decomposition in visual evidence using adaptive frame selection.

💬 Research Conclusions:

– UniFunc3D achieves state-of-the-art performance on SceneFun3D without task-specific training, outperforming existing methods with a 59.9% mIoU improvement.

👉 Paper link: https://huggingface.co/papers/2603.23478

10. OmniWeaving: Towards Unified Video Generation with Free-form Composition and Reasoning

🔑 Keywords: OmniWeaving, AI-generated video, multimodal composition, open-source, intelligent agent

💡 Category: Generative Models

🌟 Research Objective:

– The primary objective of this research is to introduce OmniWeaving, a model designed to unify multimodal inputs and complex reasoning capabilities for omni-capable video generation.

🛠️ Research Methods:

– Large-scale pretraining with a diverse dataset and the development of a novel benchmark called IntelligentVBench to assess unified video generation models.

💬 Research Conclusions:

– OmniWeaving achieves state-of-the-art performance among open-source unified models in intelligent video generation, demonstrating its capacity to seamlessly integrate various inputs and act as an intelligent agent.

👉 Paper link: https://huggingface.co/papers/2603.24458

11.

12. Unleashing Spatial Reasoning in Multimodal Large Language Models via Textual Representation Guided Reasoning

🔑 Keywords: TRACE, Multimodal Large Language Models, 3D spatial reasoning, text-based representations, Egocentric Video

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to enhance Multimodal Large Language Models (MLLMs) to perform effective 3D spatial reasoning through the introduction of the TRACE method. This method focuses on generating text-based representations from video to facilitate better spatial understanding.

🛠️ Research Methods:

– The TRACE approach introduces a novel prompting method that leverages text-based representations of 3D environments to improve spatial question answering. It encodes meta-context, camera trajectories, and detailed object entities to enable structured reasoning over egocentric videos.

💬 Research Conclusions:

– The TRACE method showcases notable improvements in spatial reasoning performance across various MLLM models and structures. Through extensive experiments on VSI-Bench and OST-Bench, the study demonstrates consistent enhancements over previous prompting strategies, supported by ablation studies and detailed analyses of 3D spatial reasoning bottlenecks.

👉 Paper link: https://huggingface.co/papers/2603.23404

13. StreamingClaw Technical Report

🔑 Keywords: Streaming Video Understanding, Embodied Intelligence, Real-time Reasoning, Proactive Interaction, Multimodal Long-term Memory

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– The objective is to propose StreamingClaw, a unified framework to overcome fragmentation in current agents and enable real-time streaming video understanding and embodied intelligence.

🛠️ Research Methods:

– The framework integrates five core capabilities including real-time streaming reasoning, multimodal long-term storage, and a perception-decision-action closed loop. It is also compatible with the OpenClaw framework for enhanced support.

💬 Research Conclusions:

– StreamingClaw successfully combines online real-time reasoning, multimodal long-term memory, and proactive interaction, facilitating direct control over the physical world and supporting practical deployment in real-world environments.

👉 Paper link: https://huggingface.co/papers/2603.22120

14. Qworld: Question-Specific Evaluation Criteria for LLMs

🔑 Keywords: large language models, open-ended questions, evaluation criteria, recursive expansion tree, HealthBench

💡 Category: AI in Healthcare

🌟 Research Objective:

– To develop a method called Qworld that generates question-specific evaluation criteria for better assessment of large language model capabilities on health-related questions.

🛠️ Research Methods:

– Utilized a recursive expansion tree to decompose questions into scenarios and fine-grained criteria, ensuring structured hierarchical and horizontal expansion.

💬 Research Conclusions:

– Qworld covers 89% of expert-authored criteria and creates 79% novel criteria validated by experts, offering greater insight and granularity.

– It reveals capability differences in large language models across dimensions like long-term impact, equity, error handling, and interdisciplinary reasoning, which are not distinguished by traditional rubrics.

👉 Paper link: https://huggingface.co/papers/2603.23522

15. The Pulse of Motion: Measuring Physical Frame Rate from Visual Dynamics

🔑 Keywords: Generative video models, Visual Chronometer, Physical Frames Per Second, temporal ambiguity, chronometric hallucination

💡 Category: Generative Models

🌟 Research Objective:

– Address temporal ambiguity in generative video models by developing a Visual Chronometer to estimate real-world frame rates from visual dynamics.

🛠️ Research Methods:

– Introduced a method to predict Physical Frames Per Second (PhyFPS) using controlled temporal resampling.

– Established benchmarks PhyFPS-Bench-Real and PhyFPS-Bench-Gen to quantify temporal scale issues.

💬 Research Conclusions:

– Current state-of-the-art video generators are misaligned with real-world temporal scales, leading to unnatural perceived motion.

– Applying PhyFPS corrections enhances the naturalness of AI-generated videos.

👉 Paper link: https://huggingface.co/papers/2603.14375

16. Understanding the Challenges in Iterative Generative Optimization with LLMs

🔑 Keywords: Generative optimization, Large language models, Self-improving agents, Execution feedback, Learning loops

💡 Category: Generative Models

🌟 Research Objective:

– To investigate the challenges in generative optimization using large language models, focusing on implicit design decisions impacting success across different applications.

🛠️ Research Methods:

– The study examines three critical factors: the starting artifact, credit horizon for execution traces, and batching trials into learning evidence through case studies involving MLAgentBench, Atari, and BigBench Extra Hard.

💬 Research Conclusions:

– Implicit design choices in setting up learning loops can significantly affect generative optimization success. The paper highlights the need for practical guidance to make these decisions explicit, emphasizing the lack of a universal method as a major barrier for adoption.

👉 Paper link: https://huggingface.co/papers/2603.23994

17.

18. PLDR-LLMs Reason At Self-Organized Criticality

🔑 Keywords: PLDR-LLMs, self-organized criticality, reasoning capabilities, second-order phase transitions, metastable steady state

💡 Category: Foundations of AI

🌟 Research Objective:

– The study aims to explore the reasoning capabilities of PLDR-LLMs pretrained at self-organized criticality, highlighting their ability to generalize reasoning without traditional benchmark evaluations.

🛠️ Research Methods:

– The research analyzes the characteristics of deductive outputs, comparing them to second-order phase transitions, and examines correlation lengths and metastable steady states to understand the model’s learning and representation at criticality.

💬 Research Conclusions:

– It concludes that PLDR-LLMs can generalize reasoning capabilities, which can be quantified solely by deducing global model parameter values at a metastable steady state, reducing reliance on curated benchmark datasets.

👉 Paper link: https://huggingface.co/papers/2603.23539

19. CUA-Suite: Massive Human-annotated Video Demonstrations for Computer-Use Agents

🔑 Keywords: Computer-use agents, expert video demonstrations, continuous video, CUA-Suite, multimodal corpus

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The research introduces CUA-Suite, aimed at advancing desktop automation through a large-scale ecosystem of expert video demonstrations and annotations.

🛠️ Research Methods:

– Utilization of VideoCUA to provide approximately 10,000 human-demonstrated tasks with continuous recordings and comprehensive annotations, forming a rich dataset for professional desktop applications.

💬 Research Conclusions:

– Current action models struggle with desktop applications, as indicated by a ~60% task failure rate, emphasizing the importance of continuous video streams and the potential for CUA-Suite to support diverse research avenues.

👉 Paper link: https://huggingface.co/papers/2603.24440

20. Toward Physically Consistent Driving Video World Models under Challenging Trajectories

🔑 Keywords: PhyGenesis, world models, autonomous driving simulation, physical consistency, CARLA simulator

💡 Category: Generative Models

🌟 Research Objective:

– The research aims to develop PhyGenesis, a world model that generates high-fidelity driving videos with strong physical consistency by transforming invalid trajectories into plausible conditions.

🛠️ Research Methods:

– The approach comprises two components: a physical condition generator and a physics-enhanced video generator, trained using a large-scale, physics-rich dataset including both real-world and simulated driving scenarios.

💬 Research Conclusions:

– PhyGenesis outperforms existing state-of-the-art methods, especially in generating videos from challenging trajectories with superior physical consistency.

👉 Paper link: https://huggingface.co/papers/2603.24506

21. EVA: Efficient Reinforcement Learning for End-to-End Video Agent

🔑 Keywords: EVA, video understanding, adaptive reasoning, reinforcement learning, multimodal large language models

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Develop an efficient reinforcement learning framework named EVA for enhancing video understanding with adaptive reasoning.

🛠️ Research Methods:

– Implement iterative summary-plan-action-reflection reasoning enabling EVA to autonomously decide on video analysis aspects.

– Design a three-stage learning pipeline involving supervised fine-tuning, Kahneman-Tversky Optimization, and Generalized Reward Policy Optimization.

💬 Research Conclusions:

– EVA outperforms existing methods by 6-12% over traditional MLLM baselines and 1-3% over previous adaptive agent methods in video understanding benchmarks.

👉 Paper link: https://huggingface.co/papers/2603.22918

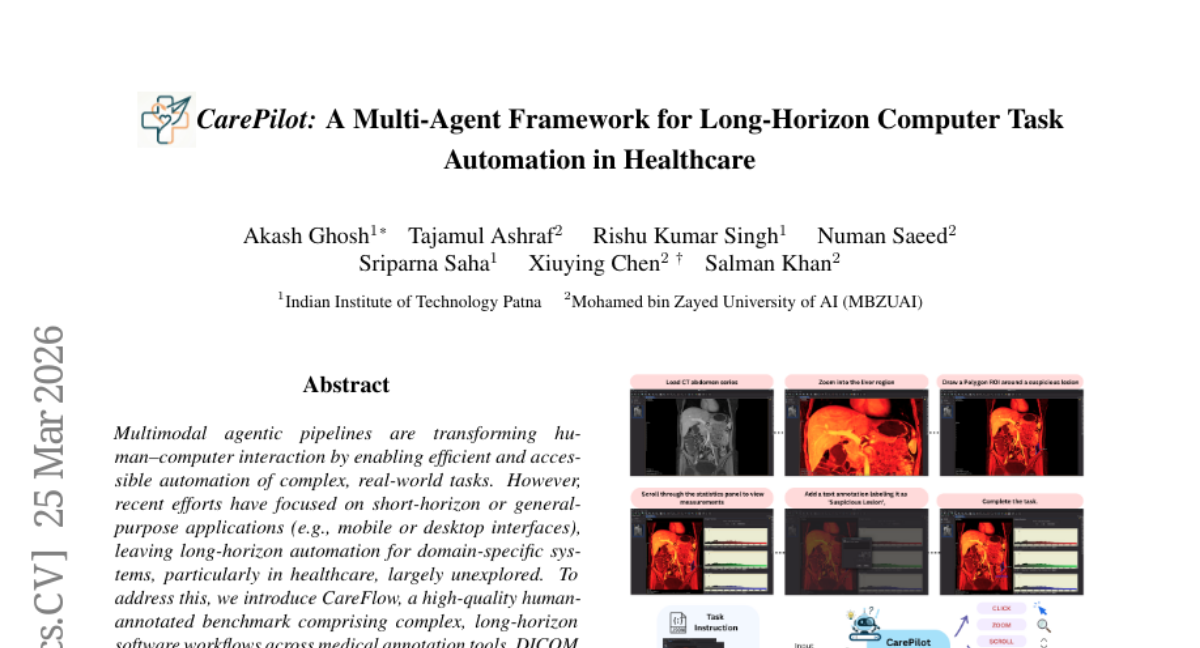

22. CarePilot: A Multi-Agent Framework for Long-Horizon Computer Task Automation in Healthcare

🔑 Keywords: Long-horizon automation, Multimodal agent, Healthcare, AI-generated summary, State-of-the-art performance

💡 Category: AI in Healthcare

🌟 Research Objective:

– The research aims to address the unexplored long-horizon automation in healthcare by introducing CareFlow, a benchmark designed for complex medical environments.

🛠️ Research Methods:

– The study employs a multimodal agent framework called CarePilot, utilizing actor-critic methods with dual-memory mechanisms to enhance task execution and reasoning.

💬 Research Conclusions:

– CarePilot significantly outperforms existing multimodal baselines, achieving state-of-the-art results and improving execution performance by approximately 15.26% over closed-source and 3.38% over open-source models on the CareFlow benchmark.

👉 Paper link: https://huggingface.co/papers/2603.24157

23. When Models Judge Themselves: Unsupervised Self-Evolution for Multimodal Reasoning

🔑 Keywords: Multi-modal reasoning, Self-evolution training, Unsupervised learning, Policy optimization, Mathematical reasoning

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To improve performance in multimodal reasoning tasks without using costly annotated data or teacher-model distillation by utilizing a self-evolution training framework.

🛠️ Research Methods:

– Implementation of an unsupervised self-evolution training framework using self-consistency signals and group-relative policy optimization.

– The approach employs multiple reasoning trajectories and bounded Judge modulation for reweighting quality trajectories, aiming for robust policy updates.

💬 Research Conclusions:

– The proposed method consistently enhances reasoning performance and generalization across five mathematical reasoning benchmarks, demonstrating a scalable path for self-evolving multimodal models.

👉 Paper link: https://huggingface.co/papers/2603.21289

24. Why Does Self-Distillation (Sometimes) Degrade the Reasoning Capability of LLMs?

🔑 Keywords: Self-distillation, Mathematical Reasoning, Uncertainty Expression, Out-of-distribution, Reasoning Behavior

💡 Category: Natural Language Processing

🌟 Research Objective:

– Investigate the impact of self-distillation on mathematical reasoning performance in large language models, specifically focusing on the expression of uncertainty and its effect on out-of-distribution tasks.

🛠️ Research Methods:

– Conduct controlled experiments varying the conditioning context richness and task coverage to observe the effects on uncertainty expression and performance across different models like Qwen3-8B, obtaining insights into changes in reasoning behavior.

💬 Research Conclusions:

– Self-distillation can degrade mathematical reasoning by suppressing uncertainty expression, affecting out-of-distribution performance with observed performance drops of up to 40%. It emphasizes the importance of optimized reasoning behavior beyond merely reinforcing correct answers.

👉 Paper link: https://huggingface.co/papers/2603.24472