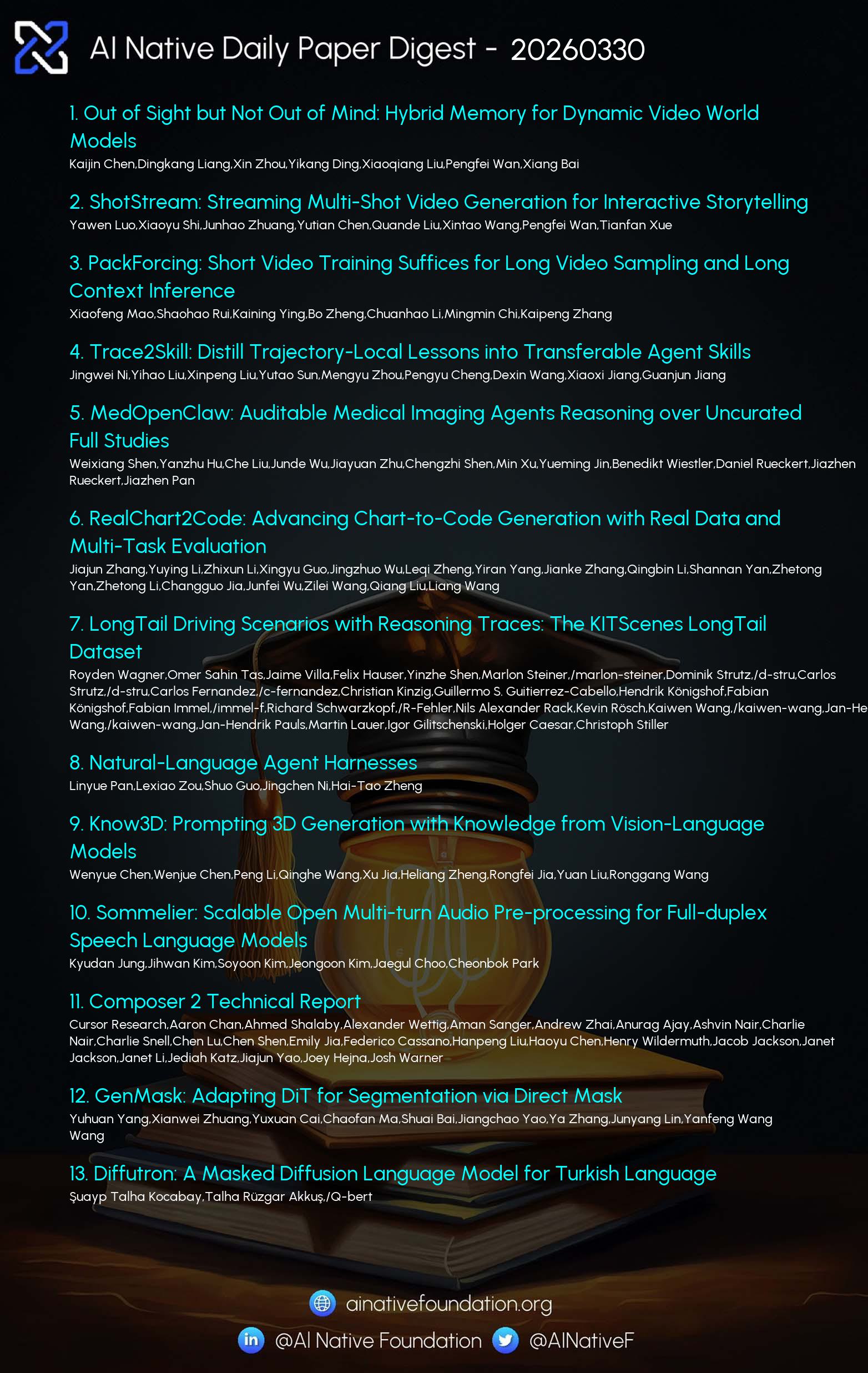

AI Native Daily Paper Digest – 20260330

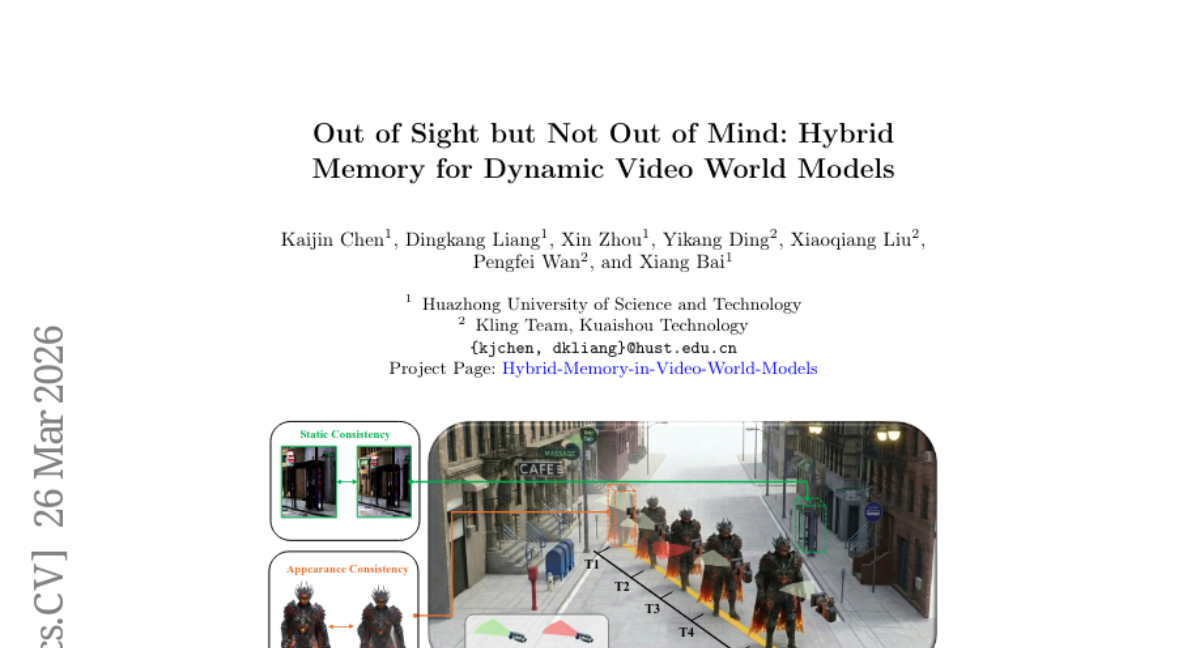

1. Out of Sight but Not Out of Mind: Hybrid Memory for Dynamic Video World Models

🔑 Keywords: Hybrid Memory, Video World Models, Dynamic Subjects, Memory Architecture, Motion Continuity

💡 Category: Computer Vision

🌟 Research Objective:

– To address video model limitations by introducing Hybrid Memory that ensures consistent tracking of dynamic subjects during occlusions.

🛠️ Research Methods:

– Developed HyDRA, a memory architecture using tokenization and spatiotemporal retrieval to manage memory effectively.

– Constructed HM-World, a large-scale video dataset designed to evaluate the hybrid coherence with high-fidelity clips.

💬 Research Conclusions:

– Hybrid Memory significantly outperforms existing methods in maintaining dynamic subject consistency and improving overall video generation quality.

👉 Paper link: https://huggingface.co/papers/2603.25716

2. PackForcing: Short Video Training Suffices for Long Video Sampling and Long Context Inference

🔑 Keywords: PackForcing, KV-cache, temporal consistency, hierarchical context compression, video diffusion models

💡 Category: Generative Models

🌟 Research Objective:

– The paper introduces PackForcing, a framework aimed at efficient long-video generation by managing KV-cache growth, ensuring temporal consistency, and reducing memory usage.

🛠️ Research Methods:

– Utilizes a novel three-partition KV-cache strategy categorizing historical context into Sink tokens, Mid tokens, and Recent tokens for memory optimization.

– Implements a dynamic top-k context selection mechanism and Temporal RoPE Adjustment to address memory footprint without quality loss.

💬 Research Conclusions:

– PackForcing can successfully generate consistent long video segments using minimal initial training data, achieving significant improvements in temporal extrapolation and memory usage with state-of-the-art results in temporal consistency and dynamic degree.

👉 Paper link: https://huggingface.co/papers/2603.25730

3. MedOpenClaw: Auditable Medical Imaging Agents Reasoning over Uncurated Full Studies

🔑 Keywords: MEDOPENCLAW, MEDFLOWBENCH, vision-language models, 3D medical volumes, spatial reasoning

💡 Category: AI in Healthcare

🌟 Research Objective:

– The primary goal is to enhance the evaluation of vision-language models (VLMs) in medical imaging by enabling dynamic interaction with 3D medical volumes using standard medical viewers.

🛠️ Research Methods:

– Introduction of MEDOPENCLAW, an auditable runtime for VLMs to operate within standard medical tools, and MEDFLOWBENCH, a comprehensive benchmark for evaluating medical imaging capabilities across different tracks.

💬 Research Conclusions:

– Initial results indicate that while state-of-the-art models can navigate basic tasks, their performance declines with professional tools due to insufficient spatial grounding. This work provides a foundation for developing interactive medical imaging agents.

👉 Paper link: https://huggingface.co/papers/2603.24649

4. LongTail Driving Scenarios with Reasoning Traces: The KITScenes LongTail Dataset

🔑 Keywords: long-tail driving events, few-shot generalization, multimodal models, instruction following, multilingual reasoning traces

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The primary goal is to enhance few-shot generalization and assess multimodal models’ abilities to follow instructions within the context of long-tail driving scenarios.

🛠️ Research Methods:

– The study introduces a novel driving dataset featuring multi-view video, trajectories, high-level instructions, and reasoning traces in multiple languages to support in-context learning.

💬 Research Conclusions:

– The dataset creates a benchmark for multimodal models by evaluating instruction following and semantic coherence, offering valuable insights into how reasoning affects driving capability across diverse linguistic and cultural backgrounds.

👉 Paper link: https://huggingface.co/papers/2603.23607

5. Know3D: Prompting 3D Generation with Knowledge from Vision-Language Models

🔑 Keywords: Know3D, AI-generated summary, multimodal large language models, latent hidden-state injection, 3D generation

💡 Category: Generative Models

🌟 Research Objective:

– The paper introduces Know3D, a framework that combines multimodal large language models with 3D generation through latent hidden-state injection to achieve language-controlled 3D asset synthesis.

🛠️ Research Methods:

– Utilizes a VLM-diffusion-based model where the VLM provides semantic understanding and guidance to bridge abstract textual instructions with geometric reconstruction of unobserved regions.

💬 Research Conclusions:

– Know3D successfully transforms traditionally stochastic back-view generation into a semantically controllable process, presenting a promising direction for the future of 3D generation models.

👉 Paper link: https://huggingface.co/papers/2603.22782

6. Composer 2 Technical Report

🔑 Keywords: Composer 2, agentic software engineering, continued pretraining, large-scale reinforcement learning, CursorBench

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Develop a specialized coding model, Composer 2, for real-world software engineering tasks using a phased learning approach.

🛠️ Research Methods:

– Trained in two phases: continued pretraining to enhance knowledge and latent coding skills, followed by large-scale reinforcement learning for end-to-end coding improvements.

– Used infrastructure and environments closely matching real coding problems in the same Cursor harness.

💬 Research Conclusions:

– Composer 2 shows significant performance improvements, achieving high scores on benchmarks like Terminal-Bench and SWE-bench Multilingual, comparable to state-of-the-art systems.

👉 Paper link: https://huggingface.co/papers/2603.24477

7. Diffutron: A Masked Diffusion Language Model for Turkish Language

🔑 Keywords: Masked Diffusion Language Models, Turkish, LoRA-based pre-training, non-autoregressive text generation, progressive instruction tuning

💡 Category: Natural Language Processing

🌟 Research Objective:

– To introduce Diffutron, a Masked Diffusion Language Model (MDLM) designed for Turkish, aiming for efficient non-autoregressive text generation.

🛠️ Research Methods:

– Utilized LoRA-based continual pre-training with a multilingual encoder on a large-scale corpus.

– Employed progressive instruction tuning to adapt the model for general and task-specific instructions.

💬 Research Conclusions:

– Diffutron achieves competitive performance with existing multi-billion-parameter models despite its compact size, validating the efficiency of masked diffusion modeling and multi-stage tuning for morphologically rich languages like Turkish.

👉 Paper link: https://huggingface.co/papers/2603.20466

8. Lie to Me: How Faithful Is Chain-of-Thought Reasoning in Reasoning Models?

🔑 Keywords: Chain-of-thought, Faithfulness, Model architecture, Training methodology, Reasoning hints

💡 Category: Natural Language Processing

🌟 Research Objective:

– To evaluate the faithfulness of Chain-of-thought (CoT) reasoning across a variety of open-weight models and understand how system design and reasoning hints influence this faithfulness.

🛠️ Research Methods:

– Testing 12 open-weight reasoning models from 9 architectural families using 498 multiple-choice questions from MMLU and GPQA Diamond, injecting six categories of reasoning hints, and measuring acknowledgment rates across over 41,832 inference runs.

💬 Research Conclusions:

– Faithfulness in CoT reasoning is not a fixed property but varies with architecture, training method, and hint type. The research highlights a significant gap between internal model acknowledgment and output acknowledgment, suggesting systematic suppression in outputs. This variability impacts the effectiveness of CoT monitoring as a safety mechanism.

👉 Paper link: https://huggingface.co/papers/2603.22582

9.

10. Learning to Commit: Generating Organic Pull Requests via Online Repository Memory

🔑 Keywords: LLM coding agents, Learning to Commit, Online Repository Memory, coding style, API reuse

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Enhance LLM-based coding agents by improving code organicity and adherence to project-specific conventions through the Learning to Commit framework.

🛠️ Research Methods:

– Utilize Online Repository Memory to perform supervised contrastive reflection on chronological commit histories, capturing coding style, internal API usage, and architectural invariants.

💬 Research Conclusions:

– The framework effectively improves the organicity of code on held-out future tasks, demonstrating better code-style consistency and internal API reuse in comparison to traditional methods.

👉 Paper link: https://huggingface.co/papers/2603.26664

11. GenMask: Adapting DiT for Segmentation via Direct Mask

🔑 Keywords: Generative Models, Segmentation, Timestep Sampling Strategy, VAE Latents, GenMask

💡 Category: Generative Models

🌟 Research Objective:

– To directly train generative models for segmentation tasks to overcome the limitations of indirect feature extraction methods.

🛠️ Research Methods:

– Introduced a timestep sampling strategy to balance noise levels for binary masks and image generation, enabling effective joint training.

💬 Research Conclusions:

– The proposed GenMask achieves state-of-the-art performance in segmentation benchmarks, eliminating the need for complex feature extraction pipelines.

👉 Paper link: https://huggingface.co/papers/2603.23906

12. Sommelier: Scalable Open Multi-turn Audio Pre-processing for Full-duplex Speech Language Models

🔑 Keywords: Speech Language Models, full-duplex systems, multi-speaker conversational data, open-source data processing pipeline

💡 Category: Natural Language Processing

🌟 Research Objective:

– Address the scarcity of high-quality multi-speaker conversational data needed for effective development of full-duplex Speech Language Models.

🛠️ Research Methods:

– Development of a robust and scalable open-source data processing pipeline to tackle challenges like overlapping dialogue and system errors.

💬 Research Conclusions:

– The proposed pipeline effectively addresses the limitations of standard processing pipelines, mitigating issues such as diarization errors and ASR hallucinations, thus supporting the shift towards more natural human-computer interactions.

👉 Paper link: https://huggingface.co/papers/2603.25750

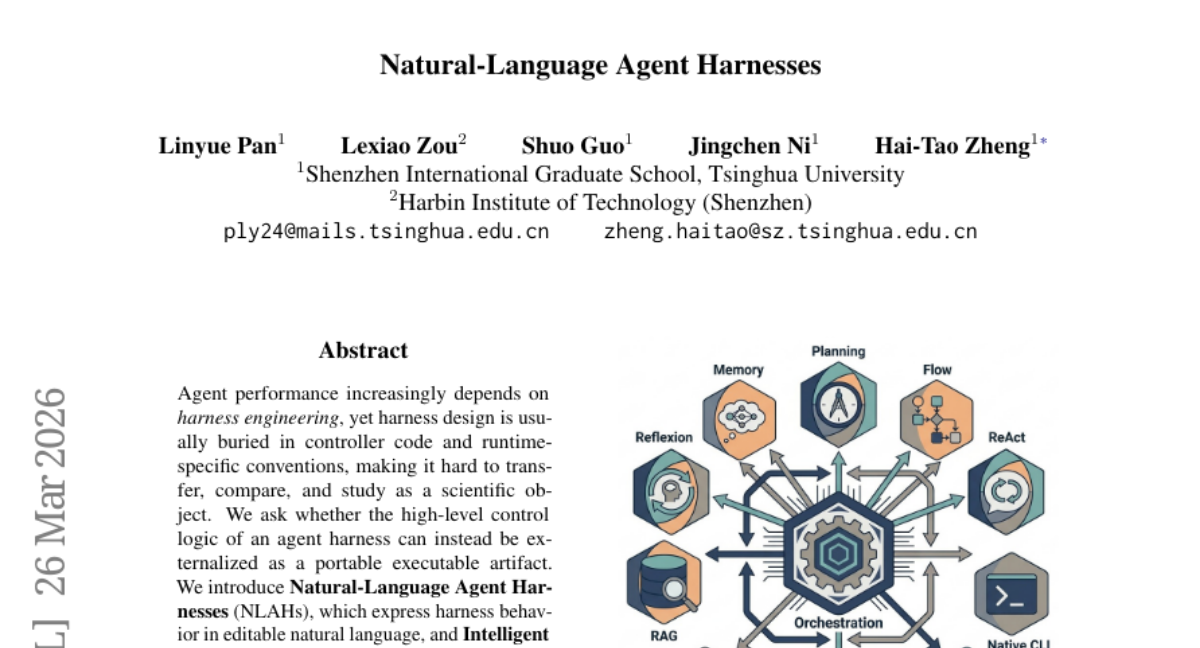

13. Natural-Language Agent Harnesses

🔑 Keywords: Natural-Language Agent Harness, Intelligent Harness Runtime, portable executable artifact, natural language, operational viability

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Investigate whether the high-level control logic of an agent harness can be externalized as a portable executable artifact.

🛠️ Research Methods:

– Introduction of Natural-Language Agent Harnesses (NLAHs) and Intelligent Harness Runtime (IHR) to enable execution through explicit contracts, durable artifacts, and lightweight adapters.

– Controlled evaluations across coding and computer-use benchmarks to assess operational viability, module ablation, and code-to-text harness migration.

💬 Research Conclusions:

– Demonstrates the potential of NLAHs and IHR in making agent harness design more accessible and scientifically viable by externalizing control logic.

👉 Paper link: https://huggingface.co/papers/2603.25723

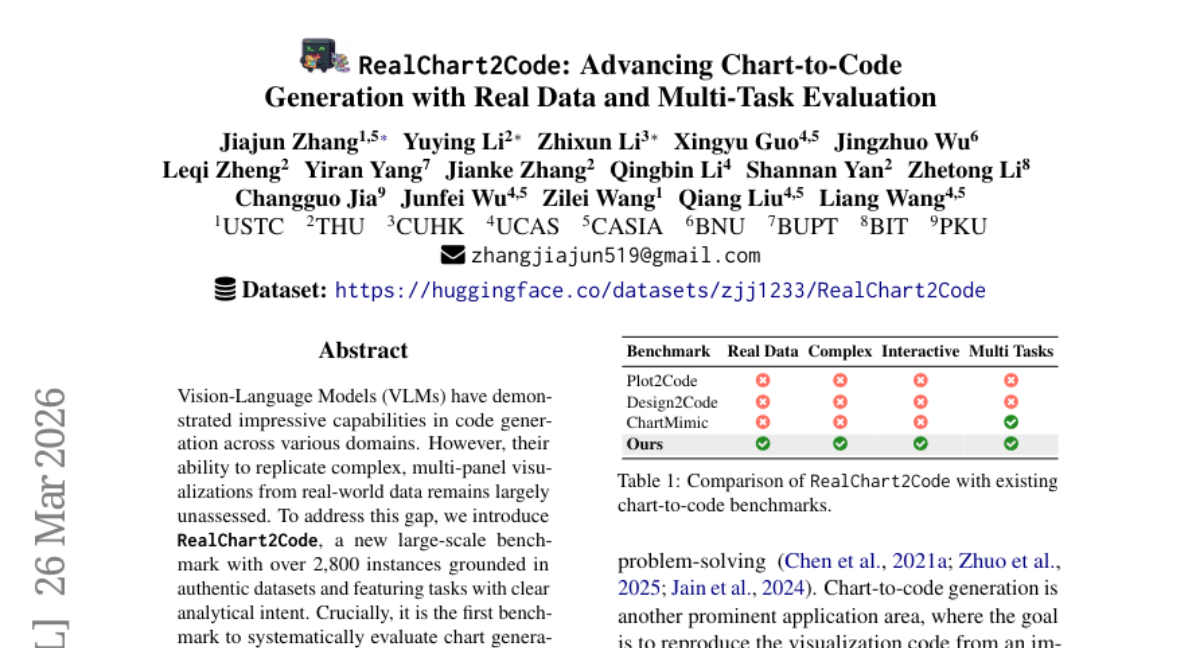

14. RealChart2Code: Advancing Chart-to-Code Generation with Real Data and Multi-Task Evaluation

🔑 Keywords: Vision-Language Models, multi-panel visualization, real-world data, large-scale benchmark, chart generation

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The primary objective is to introduce RealChart2Code, a large-scale benchmark designed to assess Vision-Language Models’ capability in generating complex, multi-panel charts from real-world data.

🛠️ Research Methods:

– The paper employs RealChart2Code to evaluate 14 leading VLMs, focusing on their performance with complex plot structures and authentic datasets within a multi-turn conversational setting.

💬 Research Conclusions:

– The study uncovers significant performance degradation of VLMs on RealChart2Code compared to simpler benchmarks and a noticeable performance gap between proprietary and open-weight models, indicating limitations in replicating intricate, multi-panel charts.

👉 Paper link: https://huggingface.co/papers/2603.25804

15. Trace2Skill: Distill Trajectory-Local Lessons into Transferable Agent Skills

🔑 Keywords: Trace2Skill, skill generation, Large Language Model, inductive reasoning, declarative skills

💡 Category: Natural Language Processing

🌟 Research Objective:

– To develop a scalable framework, Trace2Skill, for generating and improving skills for Large Language Model (LLM) agents without the need for parameter updates or external modules.

🛠️ Research Methods:

– Trace2Skill employs a parallel analysis of diverse execution traces, utilizing sub-agents to generate consolidated skills through inductive reasoning instead of relying on sequential overfitting or shallow knowledge.

💬 Research Conclusions:

– The Trace2Skill framework notably improves skill transfer and generalization across LLM scales and out-of-distribution (OOD) settings, as evidenced by performance improvements in complex domains like spreadsheets, VisionQA, and math reasoning.

👉 Paper link: https://huggingface.co/papers/2603.25158

16. ShotStream: Streaming Multi-Shot Video Generation for Interactive Storytelling

🔑 Keywords: Multi-shot video generation, ShotStream, interactive storytelling, dual-cache memory mechanism, two-stage distillation

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to develop a novel causal architecture, ShotStream, to enable real-time interactive multi-shot video generation, facilitating dynamic storytelling.

🛠️ Research Methods:

– Utilization of a dual-cache memory mechanism to ensure visual consistency across frames and a two-stage distillation process to minimize latency and error accumulation.

💬 Research Conclusions:

– ShotStream effectively generates coherent multi-shot videos with high efficiency, achieving 16 FPS on a single GPU, and matches or surpasses the quality of current slower models. This advancement supports real-time interactive storytelling.

👉 Paper link: https://huggingface.co/papers/2603.25746