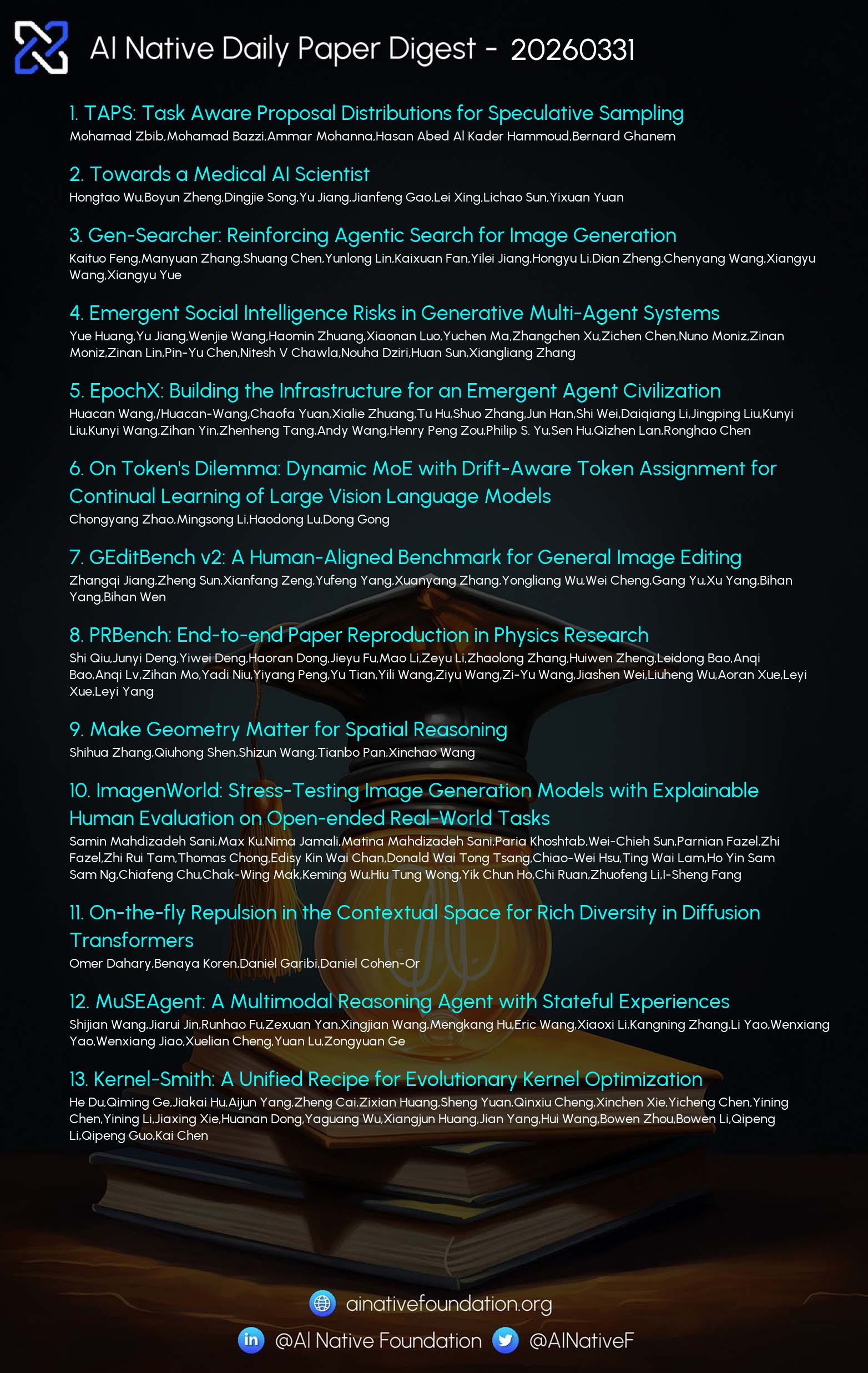

AI Native Daily Paper Digest – 20260331

1. TAPS: Task Aware Proposal Distributions for Speculative Sampling

🔑 Keywords: Speculative decoding, draft model, confidence-based routing, acceptance length

💡 Category: Generative Models

🌟 Research Objective:

– Examine the effectiveness of speculative decoding based on the alignment of draft model training data with downstream tasks.

🛠️ Research Methods:

– Investigate using lightweight HASS and EAGLE-2 drafters trained on datasets like MathInstruct and ShareGPT, evaluated on benchmarks such as MT-Bench, GSM8K, and others.

💬 Research Conclusions:

– Specialized drafters show improved performance when combined through confidence-based routing rather than simple averaging.

– Effective speculative decoding is influenced by draft training data and its match with the task.

– Confidence-based routing outperforms entropy for routing decisions, enhancing specialized drafter combination.

👉 Paper link: https://huggingface.co/papers/2603.27027

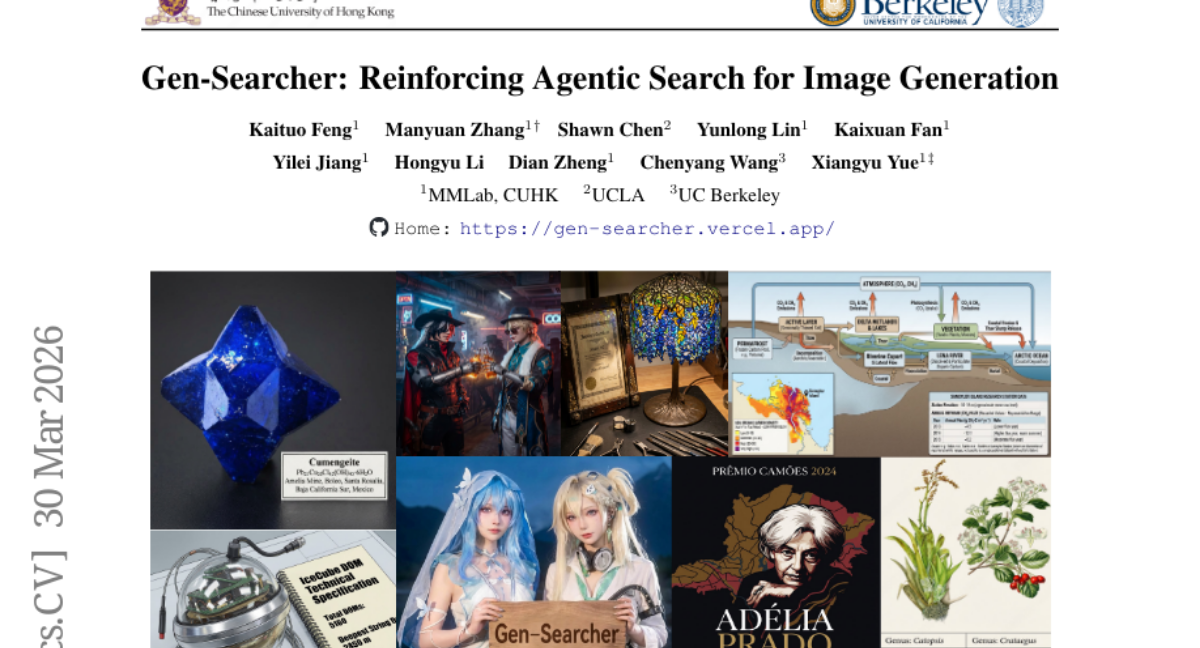

2. Gen-Searcher: Reinforcing Agentic Search for Image Generation

🔑 Keywords: Search-Augmented Image Generation, Multi-Hop Reasoning, Agentic Reinforcement Learning, Dual Reward Feedback, KnowGen

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to address the limitations of image generation models with static internal knowledge by introducing Gen-Searcher, an innovative search-augmented image generation agent.

🛠️ Research Methods:

– Gen-Searcher employs multi-hop reasoning and a search mechanism to gather textual knowledge and reference images. It is trained using a combination of supervised fine-tuning and agentic reinforcement learning with dual reward feedback, using datasets Gen-Searcher-SFT-10k and Gen-Searcher-RL-6k.

💬 Research Conclusions:

– Gen-Searcher significantly improves performance, enhancing Qwen-Image scores by approximately 16 points on KnowGen and 15 points on WISE, showcasing its effectiveness in image generation tasks that require external knowledge. The research also contributes to the field by open-sourcing data, models, and code as a foundation for further developments in search-augmented image generation.

👉 Paper link: https://huggingface.co/papers/2603.28767

3. EpochX: Building the Infrastructure for an Emergent Agent Civilization

🔑 Keywords: AI agents, foundation models, EpochX, human-agent production, credit mechanism

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The research aims to introduce EpochX, a credits-native marketplace infrastructure designed for fostering collaboration between humans and AI agents in production networks.

🛠️ Research Methods:

– The authors developed a system where tasks are posted and claimed by humans and agents as peer participants, using an explicit delivery workflow with verification and acceptance to decompose and execute subtasks.

💬 Research Conclusions:

– EpochX reframes AI as an organizational design issue by creating infrastructures that ensure verifiable work leads to reusable artifacts and support sustainable human-agent collaboration through a native credit mechanism for economic viability.

👉 Paper link: https://huggingface.co/papers/2603.27304

4. GEditBench v2: A Human-Aligned Benchmark for General Image Editing

🔑 Keywords: image editing, visual consistency, GEditBench v2, PVC-Judge, human alignment

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce a new benchmark, GEditBench v2, and evaluation model, PVC-Judge, to assess visual consistency and human alignment in complex image editing tasks.

🛠️ Research Methods:

– GEditBench v2 includes 1,200 user queries across 23 tasks, with an open-set category for complex edits outside predefined tasks.

– PVC-Judge uses region-decoupled preference data synthesis pipelines for pairwise assessment of visual consistency.

💬 Research Conclusions:

– PVC-Judge achieves state-of-the-art evaluation performance among open-source models and surpasses GPT-5.1.

– GEditBench v2 enables more human-aligned evaluation, revealing limitations of current models and fostering advancements in precise image editing.

👉 Paper link: https://huggingface.co/papers/2603.28547

5. Make Geometry Matter for Spatial Reasoning

🔑 Keywords: GeoSR, spatial reasoning, vision-language models, geometry tokens, Geometry-Unleashing Masking

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To enhance the spatial reasoning capability of vision-language models (VLMs) by integrating geometry tokens using the proposed GeoSR framework.

🛠️ Research Methods:

– Implementation of Geometry-Unleashing Masking to strategically mask 2D vision tokens and encourage reliance on geometry tokens.

– Utilization of Geometry-Guided Fusion, a gated routing mechanism, to amplify the contribution of geometry tokens in critical areas.

💬 Research Conclusions:

– GeoSR significantly outperforms prior methods in both static and dynamic spatial reasoning tasks, establishing new state-of-the-art performance by leveraging geometric information effectively.

👉 Paper link: https://huggingface.co/papers/2603.26639

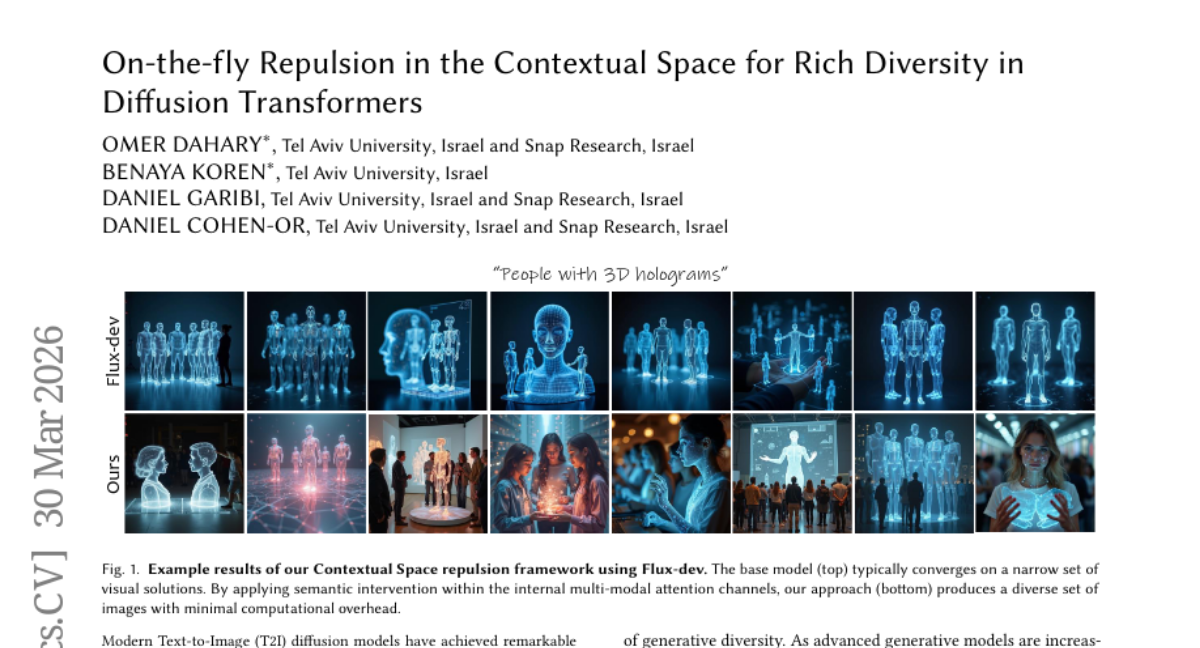

6. On-the-fly Repulsion in the Contextual Space for Rich Diversity in Diffusion Transformers

🔑 Keywords: Diffusion Transformers, Contextual Space, Repulsion, Text-to-Image, Semantic Adherence

💡 Category: Generative Models

🌟 Research Objective:

– To enhance the diversity of visual outputs in Diffusion Transformers while maintaining visual quality and semantic accuracy.

🛠️ Research Methods:

– Implement repulsion in the Contextual Space during the transformer’s forward pass to intervene in multimodal attention channels for richer diversity.

💬 Research Conclusions:

– The application of repulsion in Contextual Space significantly increases diversity without compromising visual fidelity and proves efficient in modern models.

👉 Paper link: https://huggingface.co/papers/2603.28762

7. Kernel-Smith: A Unified Recipe for Evolutionary Kernel Optimization

🔑 Keywords: Kernel-Smith, GPU kernel generation, evolutionary algorithms, reinforcement learning, performance optimization

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To develop Kernel-Smith, a framework for generating high-performance GPU kernels and operators, optimizing performance across different hardware backends.

🛠️ Research Methods:

– Combines evolutionary algorithms with post-training reinforcement learning.

– Maintains a population of executable candidates, iteratively improved using high-performance program archives and execution feedback.

– Converts evolution trajectories into step-centric supervision to enhance optimization.

💬 Research Conclusions:

– Kernel-Smith-235B-RL achieved state-of-the-art performance on KernelBench with Nvidia Triton backend.

– Demonstrated superior adaptability across heterogeneous platforms such as MetaX MACA.

– Extended utility beyond benchmarks to contributions in production systems like SGLang and LMDeploy.

👉 Paper link: https://huggingface.co/papers/2603.28342

8. ChartNet: A Million-Scale, High-Quality Multimodal Dataset for Robust Chart Understanding

🔑 Keywords: ChartNet, multimodal dataset, chart interpretation, code-guided synthesis, cross-modal alignment

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introduce ChartNet, designed to advance interpretation and reasoning abilities in multimodal models.

🛠️ Research Methods:

– Utilizes a novel code-guided synthesis pipeline to generate a diverse set of 1.5 million chart samples across multiple chart types and libraries.

💬 Research Conclusions:

– Demonstrates improved benchmarks when fine-tuned on ChartNet, highlighting its effectiveness for large-scale multimodal model supervision.

👉 Paper link: https://huggingface.co/papers/2603.27064

9. HandX: Scaling Bimanual Motion and Interaction Generation

🔑 Keywords: HandX, bimanual interaction, finger dynamics, large language models, autoregressive models

💡 Category: Generative Models

🌟 Research Objective:

– HandX aims to provide a comprehensive foundation for bimanual hand motion synthesis by developing a new dataset, annotation method, and evaluation metrics.

🛠️ Research Methods:

– Employed a decoupled strategy for scalable annotation, utilizing large language models to extract motion features and produce detailed descriptions.

– Benchmarked diffusion and autoregressive models using various conditioning modes.

💬 Research Conclusions:

– Experiments showcased high-quality dexterous motion generation, revealing that larger models with high-fidelity datasets produce more coherent bimanual motions.

– The HandX dataset is released to facilitate future research in this area.

👉 Paper link: https://huggingface.co/papers/2603.28766

10. Story2Proposal: A Scaffold for Structured Scientific Paper Writing

🔑 Keywords: AI-generated summary, multi-agent framework, structured scientific manuscripts, visual contract

💡 Category: Natural Language Processing

🌟 Research Objective:

– The primary aim is to develop a contract-governed multi-agent framework called Story2Proposal that generates structured scientific manuscripts with enhanced consistency and visual alignment.

🛠️ Research Methods:

– Implements coordinated agents including architect, writer, refiner, and renderer operating under a shared visual contract. The system uses a generate-evaluate-adapt loop to maintain manuscript alignment through feedback and contract updates.

💬 Research Conclusions:

– Story2Proposal shows improved structural consistency and visual alignment with an expert evaluation score of 6.145, outperforming other methods such as DirectChat and the structured generation baseline Fars.

👉 Paper link: https://huggingface.co/papers/2603.27065

11. Think over Trajectories: Leveraging Video Generation to Reconstruct GPS Trajectories from Cellular Signaling

🔑 Keywords: GPS trajectories, cellular signaling, map-visual domain, video generation model, reinforcement learning

💡 Category: Generative Models

🌟 Research Objective:

– Transform cellular signaling records into high-precision GPS trajectories using map-visual video generation.

🛠️ Research Methods:

– Formulate the Sig2GPS problem as an image-to-video generation task.

– Develop a paired signaling-to-trajectory video dataset to fine-tune a video generation model.

– Implement a trajectory-aware reinforcement learning-based optimization method to enhance generation fidelity.

💬 Research Conclusions:

– The map-visual video generation approach significantly outperforms traditional methods in generating accurate GPS trajectories.

– Demonstrates scalability and cross-city applicability, suggesting practicality for trajectory data mining.

👉 Paper link: https://huggingface.co/papers/2603.26610

12. Density-aware Soft Context Compression with Semi-Dynamic Compression Ratio

🔑 Keywords: Dynamic Compression Ratio, Information Density, Context Compression, Discrete Ratio Selector, AI-generated summary

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper aims to develop a density-aware dynamic compression framework for large language models that adaptively compresses contexts, outperforming static methods in this task.

🛠️ Research Methods:

– A Semi-Dynamic Context Compression framework is introduced featuring a Discrete Ratio Selector, which predicts compression targets based on information density and quantizes them into predefined discrete ratios.

– The framework is trained jointly with the compressor on synthetic data, using summary lengths to create labels for compression ratio prediction.

💬 Research Conclusions:

– The density-aware framework, using mean pooling as the backbone, consistently outperforms static baselines and establishes a robust Pareto frontier for context compression techniques.

👉 Paper link: https://huggingface.co/papers/2603.25926

13. HISA: Efficient Hierarchical Indexing for Fine-Grained Sparse Attention

🔑 Keywords: HISA, Sparse Attention, Context Length, Indexer, Efficiency

💡 Category: Foundations of AI

🌟 Research Objective:

– To improve sparse attention efficiency by introducing HISA, which reduces computational complexity while maintaining exact token-level selection fidelity.

🛠️ Research Methods:

– Employ a hierarchical approach for the indexer, featuring a block-level coarse filter followed by token-level refinement, transforming the computational process from a flat token scan to a two-stage hierarchical procedure.

💬 Research Conclusions:

– HISA achieves significant efficiency improvements, reflected by 2x speedup at 32K context length and 4x at 128K, while maintaining selection fidelity with a mean IoU greater than 99%.

– HISA, when integrated into existing systems like DeepSeek-V3.2, closely matches the quality of original methods while outperforming block-sparse baselines without requiring additional training or fine-tuning.

👉 Paper link: https://huggingface.co/papers/2603.28458

14. A Comparative Study in Surgical AI: Datasets, Foundation Models, and Barriers to Med-AGI

🔑 Keywords: Surgical tool detection, Vision Language Models, multi-billion parameter models, neurosurgery, AI in Healthcare

💡 Category: AI in Healthcare

🌟 Research Objective:

– To explore the limitations and potential of current Vision Language Models in detecting surgical tools, particularly in neurosurgery, using state-of-the-art methods available in 2026.

🛠️ Research Methods:

– Conducted a case study involving scaling experiments with multi-billion parameter Vision Language Models to assess their performance in surgical tool detection tasks.

💬 Research Conclusions:

– The study found that even large-scale models struggle with seemingly simple tasks such as tool detection in neurosurgery. Increasing the model size and training time led to diminishing returns in performance, indicating fundamental limitations in current models that cannot be easily overcome with more data or compute resources. The study highlights ongoing challenges and suggests that current AI models face significant obstacles in practical surgical applications.

👉 Paper link: https://huggingface.co/papers/2603.27341

15. Text Data Integration

🔑 Keywords: Data Integration, Structured Data, Unstructured Data, Textual Data

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The paper aims to explore the integration of textual data into data integration systems, highlighting the challenges and potential open problems in effectively unifying diverse data formats.

🛠️ Research Methods:

– The study discusses current methodologies, state-of-the-art techniques, and existing systems for data integration focusing on both structured and unstructured data types.

💬 Research Conclusions:

– The paper concludes that while current data integration systems excel in handling structured data, there is a wealth of untapped knowledge in unstructured data, necessitating advancements in integrating textual data to unlock its full potential.

👉 Paper link: https://huggingface.co/papers/2603.27055

16. INSID3: Training-Free In-Context Segmentation with DINOv3

🔑 Keywords: INSID3, DINOv3, In-context Segmentation, Self-supervised Backbone, Training-free Approach

💡 Category: Computer Vision

🌟 Research Objective:

– To explore whether a single self-supervised backbone can effectively support both semantic matching and segmentation tasks without relying on supervision or auxiliary models.

🛠️ Research Methods:

– Utilization of scaled-up dense self-supervised features from DINOv3 for versatile segmentation tasks through a training-free approach, named INSID3.

💬 Research Conclusions:

– INSID3 demonstrated superior performance in one-shot semantic, part, and personalized segmentation, outperforming existing methods by +7.5% mIoU while using significantly fewer parameters and without any mask or category-level supervision.

👉 Paper link: https://huggingface.co/papers/2603.28480

17. STRIDE: When to Speak Meets Sequence Denoising for Streaming Video Understanding

🔑 Keywords: STRIDE, streaming video, proactive activation, iterative denoising, sliding temporal window

💡 Category: Computer Vision

🌟 Research Objective:

– The objective of this research is to improve timing decisions in AI systems for streaming scenarios by developing STRIDE, which models temporal activation patterns through iterative denoising within sliding windows.

🛠️ Research Methods:

– The study introduces STRIDE, employing a masked diffusion module to predict and progressively refine activation signals across sliding temporal windows in streaming video environments.

💬 Research Conclusions:

– The research shows that STRIDE leads to more reliable and temporally coherent proactive responses, enhancing the quality of “when-to-speak” decisions in online streaming scenarios.

👉 Paper link: https://huggingface.co/papers/2603.27593

18.

19. KAT-Coder-V2 Technical Report

🔑 Keywords: KAT-Coder-V2, Specialized-Agentic Approach, Reinforcement Learning, Code Generation, Modular Infrastructure

💡 Category: Generative Models

🌟 Research Objective:

– The primary objective of KAT-Coder-V2 is to enhance code generation performance through a specialized-agentic approach with domain-specific fine-tuning and reinforcement learning.

🛠️ Research Methods:

– The model employs a “Specialize-then-Unify” paradigm, decomposing coding tasks into five expert domains with independent supervised fine-tuning and reinforcement learning, later unified through on-policy distillation.

– Additionally, it introduces KwaiEnv, a modular infrastructure to support extensive concurrent sandbox instances and scales RL training using MCLA and Tree Training for optimization.

💬 Research Conclusions:

– KAT-Coder-V2 demonstrates significant achievements with high scores on SWE-bench Verified and PinchBench, ranking first in frontend aesthetics scenarios, and showing strong generalist capabilities on various benchmarks.

👉 Paper link: https://huggingface.co/papers/2603.27703

20. MOOZY: A Patient-First Foundation Model for Computational Pathology

🔑 Keywords: patient-first, foundation model, case transformer, clinical semantics, histopathology

💡 Category: AI in Healthcare

🌟 Research Objective:

– The study aims to develop and validate MOOZY, a patient-first pathology foundation model that uses a case transformer to model dependencies across multiple slides from a single patient.

🛠️ Research Methods:

– MOOZY employs a two-stage pretraining process: in Stage 1, a vision-only slide encoder is pretrained on thousands of public slide feature grids using masked self-distillation. In Stage 2, the representations are aligned with clinical semantics through a case transformer and multi-task supervision across numerous tasks from public datasets.

💬 Research Conclusions:

– MOOZY excels in achieving superior performance over TITAN and PRISM in various evaluation metrics and tasks, highlighting its efficiency and scalability as a patient-first histopathology foundation model.

👉 Paper link: https://huggingface.co/papers/2603.27048

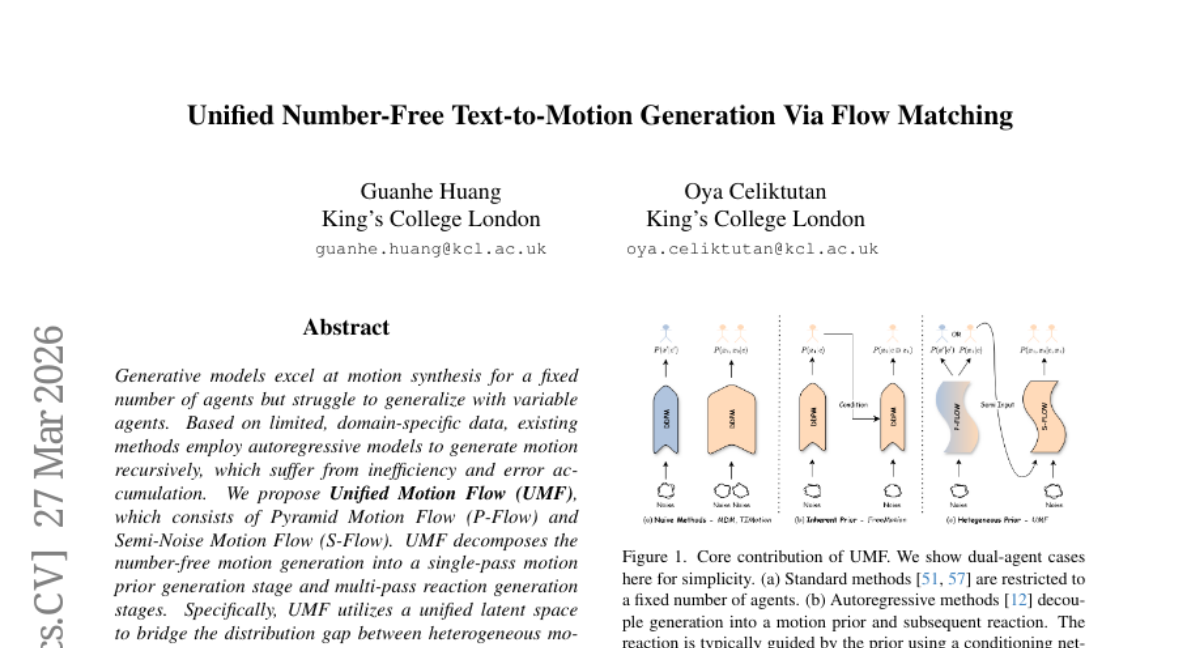

21. Unified Number-Free Text-to-Motion Generation Via Flow Matching

🔑 Keywords: Unified Motion Flow, Motion Synthesis, Autoregressive Models, Error Accumulation, Hierarchical Resolutions

💡 Category: Generative Models

🌟 Research Objective:

– To address the challenges of generalizing motion synthesis with variable agent counts by introducing a new framework, Unified Motion Flow (UMF).

🛠️ Research Methods:

– Decomposition of motion generation into a motion prior generation stage and reaction generation stages using Pyramid Motion Flow (P-Flow) and Semi-Noise Motion Flow (S-Flow).

– Utilization of a unified latent space to enable effective unified training across heterogeneous motion datasets.

💬 Research Conclusions:

– Unified Motion Flow (UMF) effectively handles motion generation for multiple agents, reducing computational inefficiencies and error accumulation.

– Extensive results and user studies highlight UMF’s effectiveness as a generalist model for multi-person motion generation from text inputs.

👉 Paper link: https://huggingface.co/papers/2603.27040

22. MolmoPoint: Better Pointing for VLMs with Grounding Tokens

🔑 Keywords: Vision-Language Models, Visual Tokens, Pointing Tokens, Fine-Grained Subpatch, State-of-the-Art

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Develop a vision-language model that directly selects visual tokens containing target concepts for improved grounding performance.

🛠️ Research Methods:

– Utilize specialized pointing tokens to directly select visual tokens, followed by a fine-grained subpatch selection and a location specification within that subpatch.

– Implement a sequential generation of points and a special no-more-points class to enhance selection accuracy and efficiency.

💬 Research Conclusions:

– The proposed model sets new state-of-the-art performances in image and GUI pointing tasks, improves video pointing and tracking capabilities, and achieves higher sample efficiency compared to traditional text coordinate baseline methods.

👉 Paper link: https://huggingface.co/papers/2603.28069

23. Superintelligence and Law

🔑 Keywords: Artificial Superintelligence, Legal System, AI Agents, Human Oversight, Legal Theory

💡 Category: AI Ethics and Fairness

🌟 Research Objective:

– To explore how the emergence of artificial superintelligence will transform legal frameworks and challenge current legal theories.

🛠️ Research Methods:

– Theoretical analysis of the potential roles and impacts of AI agents in the legal system.

💬 Research Conclusions:

– AI agents will potentially assume roles as subjects, consumers, and producers of law.

– Fundamental legal assumptions will be challenged as AI agents integrate deeper into legal systems.

– Both challenges and opportunities will arise in aligning AI agents with human laws, necessitating a joint human-AI legal framework in the future.

👉 Paper link: https://huggingface.co/papers/2603.28669

24. AdaptToken: Entropy-based Adaptive Token Selection for MLLM Long Video Understanding

🔑 Keywords: AdaptToken, long-video understanding, Multi-modal Large Language Models, token selection, early stopping

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The objective is to enhance the efficiency and accuracy of long-video understanding using AdaptToken by leveraging model uncertainty to dynamically select relevant tokens across video segments.

🛠️ Research Methods:

– AdaptToken is a training-free framework that uses self-uncertainty as a global control signal for long-video token selection. It involves splitting the video into groups, using cross-modal attention to rank tokens, and estimating group relevance through response entropy, enabling global token budget allocation and early stopping.

💬 Research Conclusions:

– AdaptToken improves accuracy consistently across various benchmarks and enhances performance even with extremely long inputs. The introduction of AdaptToken-Lite significantly reduces inference time by about half while maintaining comparable performance.

👉 Paper link: https://huggingface.co/papers/2603.28696

25. SEAR: Schema-Based Evaluation and Routing for LLM Gateways

🔑 Keywords: SEAR, LLM reasoning, operational metrics, routing decisions, structured outputs

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To develop SEAR, a schema-based system for evaluating and routing LLM responses that improves accuracy and interpretable routing decisions across multiple providers.

🛠️ Research Methods:

– Utilized an extensible relational schema to cover LLM evaluation signals and gateway operational metrics, employing self-contained signal instructions, in-schema reasoning, and multi-stage generation for database-ready structured outputs.

💬 Research Conclusions:

– SEAR achieved strong signal accuracy on human-labeled data, supported practical routing decisions, and resulted in large cost reductions while maintaining comparable quality across thousands of production sessions.

👉 Paper link: https://huggingface.co/papers/2603.26728

26. DreamLite: A Lightweight On-Device Unified Model for Image Generation and Editing

🔑 Keywords: Diffusion models, Text-to-Image generation, Text-guided image editing, On-device models, Mobile U-Net

💡 Category: Generative Models

🌟 Research Objective:

– The research introduces DreamLite, a compact on-device diffusion model designed to support both text-to-image generation and text-guided image editing efficiently within a single network.

🛠️ Research Methods:

– DreamLite utilizes a pruned mobile U-Net backbone and in-context spatial concatenation in the latent space for task efficiency.

– A task-progressive joint pretraining strategy and step distillation are employed to enhance model performance and reduce processing steps.

💬 Research Conclusions:

– DreamLite achieves high performance in image generation and editing, outperforming existing on-device models, while being competitive with server-side models. It can process a 1024 x 1024 image in under 1 second on a Xiaomi 14 smartphone.

👉 Paper link: https://huggingface.co/papers/2603.28713

27. Marco DeepResearch: Unlocking Efficient Deep Research Agents via Verification-Centric Design

🔑 Keywords: Deep Research Agents, Verification Mechanisms, QA Data Synthesis, Trajectory Construction, Test-time Scaling

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The paper aims to enhance the performance of deep research agents by integrating a verification-centric framework to address issues in QA data synthesis, trajectory construction, and test-time scaling.

🛠️ Research Methods:

– Marco DeepResearch introduces verification mechanisms to ensure correctness in QA synthesis.

– Implements a verification-driven approach in trajectory construction by embedding verification patterns.

– Utilizes the deep research agent itself for test-time verification to improve inferential accuracy.

💬 Research Conclusions:

– Marco DeepResearch significantly outperforms other 8B-scale deep research agents on complex benchmarks such as BrowseComp and BrowseComp-ZH.

– Under limited resources, it can match or exceed the capabilities of 30B-scale agents.

👉 Paper link: https://huggingface.co/papers/2603.28376

28. ResAdapt: Adaptive Resolution for Efficient Multimodal Reasoning

🔑 Keywords: ResAdapt, Multimodal Large Language Models, visual budget, Input-side adaptation, Cost-Aware Policy Optimization

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To improve efficiency in video tasks for multimodal large language models by dynamically allocating visual resources through the proposed ResAdapt framework.

🛠️ Research Methods:

– Utilizes a lightweight Allocator coupled with an unchanged MLLM backbone and formulates allocation as a contextual bandit trained with Cost-Aware Policy Optimization.

💬 Research Conclusions:

– ResAdapt supports up to 16x more frames under the same visual budget, achieving over 15% performance gain with clear advantages in efficiency-accuracy trade-offs, especially in reasoning-intensive benchmarks under aggressive compression.

👉 Paper link: https://huggingface.co/papers/2603.28610

29. MuSEAgent: A Multimodal Reasoning Agent with Stateful Experiences

🔑 Keywords: MuSEAgent, Multimodal Reasoning, Stateful Experience Learning, Policy-driven Experience Retrieval

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The paper introduces MuSEAgent, aiming to enhance decision-making in multimodal reasoning by leveraging stateful experiences in research agents.

🛠️ Research Methods:

– The approach involves a stateful experience learning paradigm that abstracts interaction data into atomic decision experiences using hindsight reasoning. These experiences are organized into a quality-filtered experience bank that supports policy-driven experience retrieval through wide- and deep-search strategies.

💬 Research Conclusions:

– MuSEAgent consistently outperforms strong trajectory-level experience retrieval baselines in fine-grained visual perception and complex multimodal reasoning tasks, validating the effectiveness of stateful experience modeling.

👉 Paper link: https://huggingface.co/papers/2603.27813

30. ImagenWorld: Stress-Testing Image Generation Models with Explainable Human Evaluation on Open-ended Real-World Tasks

🔑 Keywords: ImagenWorld, AI-generated, image synthesis, explainable evaluation, VLM-based metrics

💡 Category: Generative Models

🌟 Research Objective:

– To introduce ImagenWorld, a comprehensive benchmark for evaluating image generation and editing across multiple tasks and domains with human annotations and explainable evaluation.

🛠️ Research Methods:

– Employ a benchmark consisting of 3.6K condition sets and 20K human annotations to assess model performance in six core tasks across six domains, utilizing automated VLM-based metrics for evaluation.

💬 Research Conclusions:

– Models generally perform better in image generation than editing, struggle with local edits, and excel in artistic and photorealistic images but not in symbolic or text-heavy domains.

– Closed-source systems dominate, but data curation reduces discrepancies in text-heavy scenarios.

– VLM-based metrics achieve high accuracy yet lack fine-grained explainable error attribution, highlighting the need for advanced benchmarking tools.

👉 Paper link: https://huggingface.co/papers/2603.27862

31. PRBench: End-to-end Paper Reproduction in Physics Research

🔑 Keywords: AI agents, PRBench, end-to-end reproduction, scientific reasoning, large language models

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To evaluate AI agents’ ability to reproduce scientific research by implementing algorithms from published papers and matching original results.

🛠️ Research Methods:

– Introduction of PRBench, a benchmark consisting of 30 tasks across 11 subfields of physics, requiring agents to comprehend and implement methodologies from real published papers in a sandboxed environment.

💬 Research Conclusions:

– All evaluated AI agents, including OpenAI Codex powered by GPT-5.3-Codex, showed significant challenges in formula implementation, debugging, and data accuracy, with zero end-to-end callback success rate, highlighting systematic failure modes in reproducing scientific research.

👉 Paper link: https://huggingface.co/papers/2603.27646

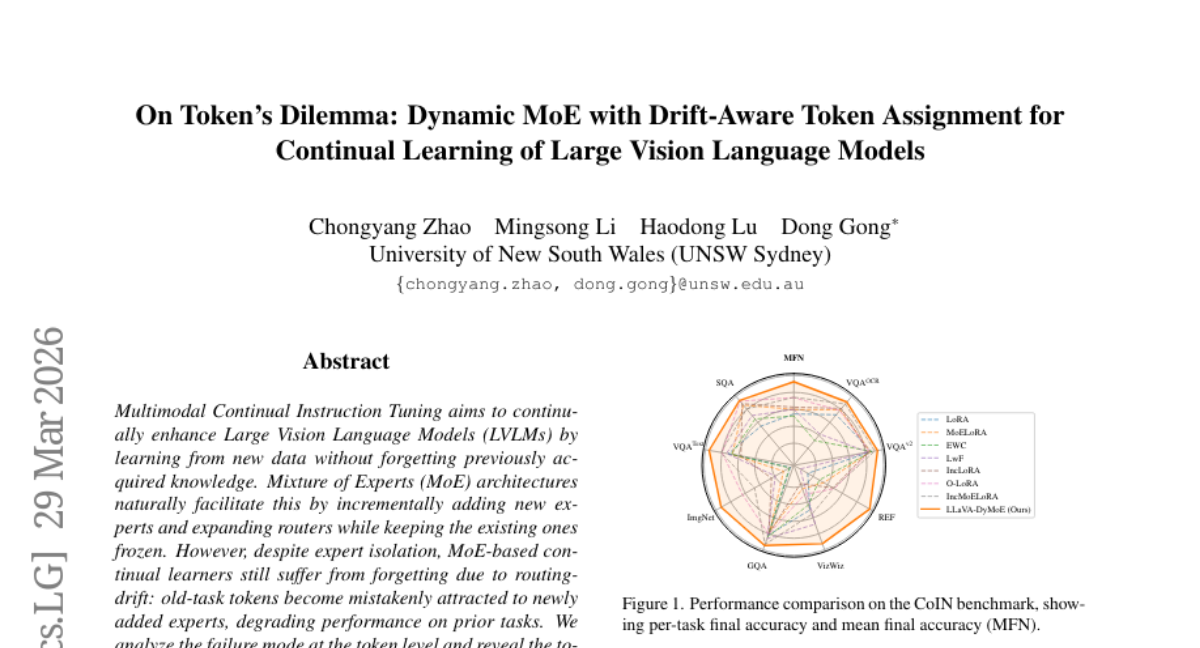

32. On Token’s Dilemma: Dynamic MoE with Drift-Aware Token Assignment for Continual Learning of Large Vision Language Models

🔑 Keywords: LLaVA-DyMoE, routing-drift, Mixture of Experts (MoE), token-level assignment guidance, large vision language models

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The primary goal is to improve multimodal continual instruction tuning by addressing routing-drift-induced forgetting within large vision language models through an innovative dynamic MoE framework.

🛠️ Research Methods:

– The study employs a dynamic expansion of Mixture of Experts (MoE) framework, utilizing token-level assignment guidance and routing score regularizations to manage token assignment and minimize forgetting.

💬 Research Conclusions:

– LLaVA-DyMoE effectively reduces forgetting caused by routing-drift, with over a 7% improvement in mean final accuracy and a 12% reduction in forgetting when compared to baseline models.

👉 Paper link: https://huggingface.co/papers/2603.27481

33. Emergent Social Intelligence Risks in Generative Multi-Agent Systems

🔑 Keywords: Multi-agent systems, Large generative models, Emergent risks, Social intelligence risk

💡 Category: AI Ethics and Fairness

🌟 Research Objective:

– To investigate the emergent risks associated with multi-agent systems composed of large generative models, especially in contexts involving shared resources and collaborative decision-making.

🛠️ Research Methods:

– Analyzing multi-agent systems’ behaviors during tasks involving competition, sequential collaboration, and decision aggregation under various conditions and constraints.

💬 Research Conclusions:

– Multi-agent systems frequently exhibit complex collective behaviors like collusion and conformity without explicit instructions, mirroring human societal issues and revealing limitations of current agent-level safeguards.

👉 Paper link: https://huggingface.co/papers/2603.27771

34. Towards a Medical AI Scientist

🔑 Keywords: Medical AI Scientist, Autonomous research framework, Clinical applications, AI-generated hypothesis, Ethical policies

💡 Category: AI in Healthcare

🌟 Research Objective:

– The paper introduces the Medical AI Scientist, the first autonomous research framework specifically designed for clinical applications that transform literature into actionable evidence and enable evidence-based hypothesis generation and manuscript drafting.

🛠️ Research Methods:

– The framework operates under three research modes: paper-based reproduction, literature-inspired innovation, and task-driven exploration, each offering progressively increasing autonomy in scientific inquiry.

– Evaluations were conducted using both large language models and human experts to assess the quality of generated ideas and their alignment with executable experiments.

💬 Research Conclusions:

– The Medical AI Scientist system produced ideas of higher quality than commercial LLMs in various clinical tasks and data modalities.

– It demonstrated significantly higher success rates in experiments and the generated manuscripts were comparable to high-quality scientific standards like MICCAI, surpassing those from ISBI and BIBM.

👉 Paper link: https://huggingface.co/papers/2603.28589