AI Native Daily Paper Digest – 20260410

1. Rethinking Generalization in Reasoning SFT: A Conditional Analysis on Optimization, Data, and Model Capability

🔑 Keywords: supervised finetuning, reinforcement learning, cross-domain generalization, optimization dynamics, model capability

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– To investigate the conditional cross-domain generalization in reasoning tasks influenced by factors like optimization dynamics, data quality, and model capability.

🛠️ Research Methods:

– Analysis of reasoning SFT with long chain-of-thought supervision to evaluate the effects of training data quality and model strength on generalization.

💬 Research Conclusions:

– Cross-domain generalization is conditional and asymmetric, where reasoning improvements might lead to safety degradation, with generalization influenced by extended training and data quality.

👉 Paper link: https://huggingface.co/papers/2604.06628

2. HY-Embodied-0.5: Embodied Foundation Models for Real-World Agents

🔑 Keywords: HY-Embodied-0.5, Mixture-of-Transformers, embodied agents, VLA model, on-policy distillation

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To develop HY-Embodied-0.5, a family of foundation models tailored for embodied agents to enhance spatial and temporal visual perception and embodied reasoning capabilities.

🛠️ Research Methods:

– Utilization of a Mixture-of-Transformers architecture to enable modality-specific computing.

– Implementation of an iterative, self-evolving post-training paradigm.

– Application of on-policy distillation to transfer capabilities from a large model to a smaller variant.

💬 Research Conclusions:

– The HY-Embodied-0.5 suite demonstrated enhanced performance on 22 benchmarks, outperforming state-of-the-art models, and achieving compelling results in robot control experiments through the VLA model.

👉 Paper link: https://huggingface.co/papers/2604.07430

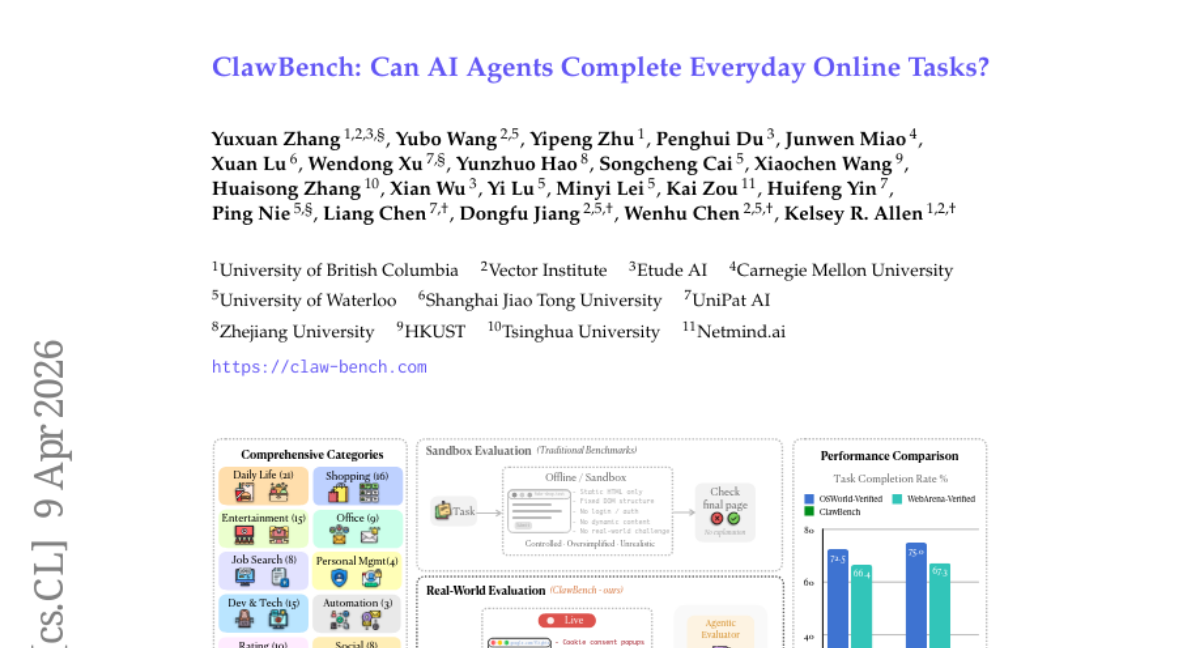

3. ClawBench: Can AI Agents Complete Everyday Online Tasks?

🔑 Keywords: AI agents, online tasks, evaluation framework, real-world web interaction, multi-step workflows

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Develop a comprehensive evaluation framework, ClawBench, for testing AI agents’ ability to automate everyday online tasks through complex workflows and document processing.

🛠️ Research Methods:

– Utilize ClawBench to evaluate AI agents across 153 real-world tasks on 144 platforms, involving dynamic web interactions and navigation through multi-step workflows.

💬 Research Conclusions:

– Both proprietary and open-source AI models show limited success in completing these complex tasks, with leading models like Claude Sonnet 4.6 achieving a success rate of only 33.3%. ClawBench highlights the challenges in creating reliable, general-purpose AI assistants for everyday tasks.

👉 Paper link: https://huggingface.co/papers/2604.08523

4. LPM 1.0: Video-based Character Performance Model

🔑 Keywords: AI-generated summary, real-time inference, identity-consistent performance, Diffusion Transformer, multimodal conditioning

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to develop a large-scale multimodal model, LPM 1.0, that achieves real-time conversational character performance generation with identity consistency.

🛠️ Research Methods:

– Creation of a multimodal dataset through audio-video pairing and identity-aware extraction.

– Development of a 17B-parameter Diffusion Transformer for controlled performance using multimodal conditioning.

– Implementation of a causal streaming generator for low-latency, infinite-length interaction.

💬 Research Conclusions:

– LPM 1.0 generates high-quality, identity-stable, infinite-length character videos in real-time.

– The proposed LPM-Bench benchmark demonstrates LPM 1.0’s state-of-the-art performance across all evaluated dimensions.

👉 Paper link: https://huggingface.co/papers/2604.07823

5. Externalization in LLM Agents: A Unified Review of Memory, Skills, Protocols and Harness Engineering

🔑 Keywords: Large Language Model Agents, Externalization, Memory Stores, Reusable Skills, Interaction Protocols

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The objective is to explore how large language model agents are transitioning from weight-based modifications to incorporating externalized components like memory, skills, and protocols to enhance their reliability and coordination.

🛠️ Research Methods:

– The study involves reviewing the shift from internal model dependency to externalization through memory, skills, and protocols, and analyzing how these components interact within a larger agent system.

💬 Research Conclusions:

– The conclusion is that improved cognitive infrastructure, rather than just stronger models, is crucial for the advancement of practical agents. This includes understanding the trade-offs between parametric and externalized capability and recognizing the challenges in evaluation, governance, and the co-evolution of models with external infrastructure.

👉 Paper link: https://huggingface.co/papers/2604.08224

6. DMax: Aggressive Parallel Decoding for dLLMs

🔑 Keywords: DMax, diffusion language models, error accumulation, self-refinement, parallel decoding

💡 Category: Natural Language Processing

🌟 Research Objective:

– The objective of the research is to introduce DMax, a paradigm for improving diffusion language models by reducing error accumulation during parallel decoding through self-refinement and unified training strategies.

🛠️ Research Methods:

– The researchers employ On-Policy Uniform Training and propose Soft Parallel Decoding, enabling iterative self-revising in embedding space by interpolating between predicted token embeddings and mask embeddings.

💬 Research Conclusions:

– The study demonstrates that DMax significantly enhances TPF on benchmarks like GSM8K and MBPP while preserving accuracy, achieving high TPS on two H200 GPUs, highlighting its efficiency and effectiveness.

👉 Paper link: https://huggingface.co/papers/2604.08302

7. OpenSpatial: A Principled Data Engine for Empowering Spatial Intelligence

🔑 Keywords: Open-source data engine, 3D bounding boxes, Spatial reasoning, Large-scale dataset, State-of-the-art performance

💡 Category: Computer Vision

🌟 Research Objective:

– The paper introduces OpenSpatial, an open-source data engine designed to enhance spatial reasoning tasks using 3D bounding boxes and to create a large-scale, high-fidelity dataset.

🛠️ Research Methods:

– The research elucidates the design principles of OpenSpatial, employing 3D bounding boxes to construct a comprehensive data hierarchy across five foundational tasks: Spatial Measurement, Spatial Relationship, Camera Perception, Multi-view Consistency, and Scene-Aware Reasoning.

💬 Research Conclusions:

– Models trained on the OpenSpatial-3M dataset achieve state-of-the-art performance in spatial reasoning benchmarks, showing an average performance improvement of 19 percent. The open-sourcing of both the engine and dataset accelerates future research in spatial intelligence.

👉 Paper link: https://huggingface.co/papers/2604.07296

8. Structured Distillation of Web Agent Capabilities Enables Generalization

🔑 Keywords: Frontier LLMs, synthetic trajectory generation, web agents, AI-generated summary, supervised learning

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to enable locally deployable open-weight web agents with superior performance and cross-environment capabilities by using a frontier LLM as a teacher.

🛠️ Research Methods:

– The research introduces the Agent-as-Annotators framework, which leverages modular LLM components for synthetic trajectory generation. It utilizes Gemini 3 Pro to create and refine trajectories with supervised learning.

💬 Research Conclusions:

– The developed model outperforms closed-source models in WebArena, nearly doubling the previous best open-weight results. It demonstrates significant transferability to unseen environments, emphasizing the effectiveness of structured trajectory synthesis from a single frontier teacher.

👉 Paper link: https://huggingface.co/papers/2604.07776

9. Graph of Skills: Dependency-Aware Structural Retrieval for Massive Agent Skills

🔑 Keywords: Graph of Skills, Skill Libraries, AI-generated Summary, Hybrid Retrieval, Token Efficiency

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To enhance the efficiency of large skill libraries by developing the Graph of Skills (GoS) for improved inference and reduced token usage.

🛠️ Research Methods:

– Utilized a unique inference-time structural retrieval layer, constructing executable skill graphs, and employed hybrid semantic-lexical seeding along with reverse-weighted Personalized PageRank and context-budgeted hydration.

💬 Research Conclusions:

– GoS significantly increases average reward by 43.6% and reduces input tokens by 37.8% compared to traditional methods, with successful generalization across multiple model families.

👉 Paper link: https://huggingface.co/papers/2604.05333

10. Automating Database-Native Function Code Synthesis with LLMs

🔑 Keywords: Database Native Functions, LLM-based Code Generation, Function Synthesis, DBCooker, Database Systems

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To develop an LLM-based system, called DBCooker, for synthesizing database native functions automatically and efficiently.

🛠️ Research Methods:

– Combines three components: function characterization, a pseudo-code-based coding plan generator, and a hybrid fill-in-the-blank model.

– Utilizes operations to manage synthesis challenges and an adaptive orchestration strategy for dynamic sequencing.

💬 Research Conclusions:

– DBCooker significantly improves synthesis accuracy (34.55% higher on average) on systems like SQLite, PostgreSQL, and DuckDB compared to existing methods.

– It also successfully synthesizes new functions not present in the latest SQLite version.

👉 Paper link: https://huggingface.co/papers/2604.06231

11. SIM1: Physics-Aligned Simulator as Zero-Shot Data Scaler in Deformable Worlds

🔑 Keywords: Deformable objects, sim-to-real, metric-consistent twins, elastic modeling, physics-aligned simulation

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To develop a physics-aligned simulation framework that aligns synthetic data with real-world performance for effective robotic manipulation of deformable objects.

🛠️ Research Methods:

– Introduced SIM1, a real-to-sim-to-real data engine that digitizes scenes into metric-consistent twins, calibrates deformable dynamics via elastic modeling, and expands behaviors using diffusion-based trajectory generation with quality filtering.

💬 Research Conclusions:

– Achieved significant performance in robotic manipulation tasks using purely synthetic data, demonstrating 90% zero-shot success and 50% generalization gains compared to real-world baselines, validating the scale and efficiency of the physics-aligned simulation.

👉 Paper link: https://huggingface.co/papers/2604.08544

12. GameWorld: Towards Standardized and Verifiable Evaluation of Multimodal Game Agents

🔑 Keywords: GameWorld, Multimodal Large Language Model, Video games, Semantic Action Parsing, State-verifiable metrics

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To establish GameWorld as a benchmark for evaluating multimodal large language model agents in video games, with a focus on diverse games and verified metrics.

🛠️ Research Methods:

– Development of GameWorld for standardized and verifiable assessment of MLLMs as game agents, using two interfaces: computer-use agents and generalist multimodal agents.

💬 Research Conclusions:

– The current best-performing agents remain inferior to human capabilities in video games; however, GameWorld demonstrates robustness and provides a foundation for future research on multimodal game agents.

👉 Paper link: https://huggingface.co/papers/2604.07429

13. Towards Real-world Human Behavior Simulation: Benchmarking Large Language Models on Long-horizon, Cross-scenario, Heterogeneous Behavior Traces

🔑 Keywords: OmniBehavior, Large Language Models, real-world data, user simulation, structural bias

💡 Category: Natural Language Processing

🌟 Research Objective:

– To introduce OmniBehavior, a user simulation benchmark created from real-world data that integrates complex behavioral patterns into a unified framework.

🛠️ Research Methods:

– Empirical analysis to compare isolated scenarios against real-world decision-making using long-term, cross-scenario causal chains.

💬 Research Conclusions:

– Current state-of-the-art LLMs struggle to simulate complex human behaviors accurately, exhibiting structural bias and resulting in the loss of individual differences and long-tail behaviors.

👉 Paper link: https://huggingface.co/papers/2604.08362

14. Faithful GRPO: Improving Visual Spatial Reasoning in Multimodal Language Models via Constrained Policy Optimization

🔑 Keywords: Reinforcement Learning, Verifiable Rewards, Multimodal Reasoning Models, Visual Grounding, Faithful GRPO

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The research aims to improve visual reasoning accuracy and logical consistency in multimodal reasoning models using reinforcement learning with verifiable rewards.

🛠️ Research Methods:

– A constrained optimization method called Faithful GRPO is proposed, which incorporates batch-level consistency and grounding constraints via Lagrangian dual ascent to enhance reasoning quality.

💬 Research Conclusions:

– The proposed Faithful GRPO significantly reduces inconsistency rates and improves visual grounding scores in spatial reasoning benchmarks, demonstrating enhanced reasoning quality and better final answer accuracy compared to standard GRPO.

👉 Paper link: https://huggingface.co/papers/2604.08476

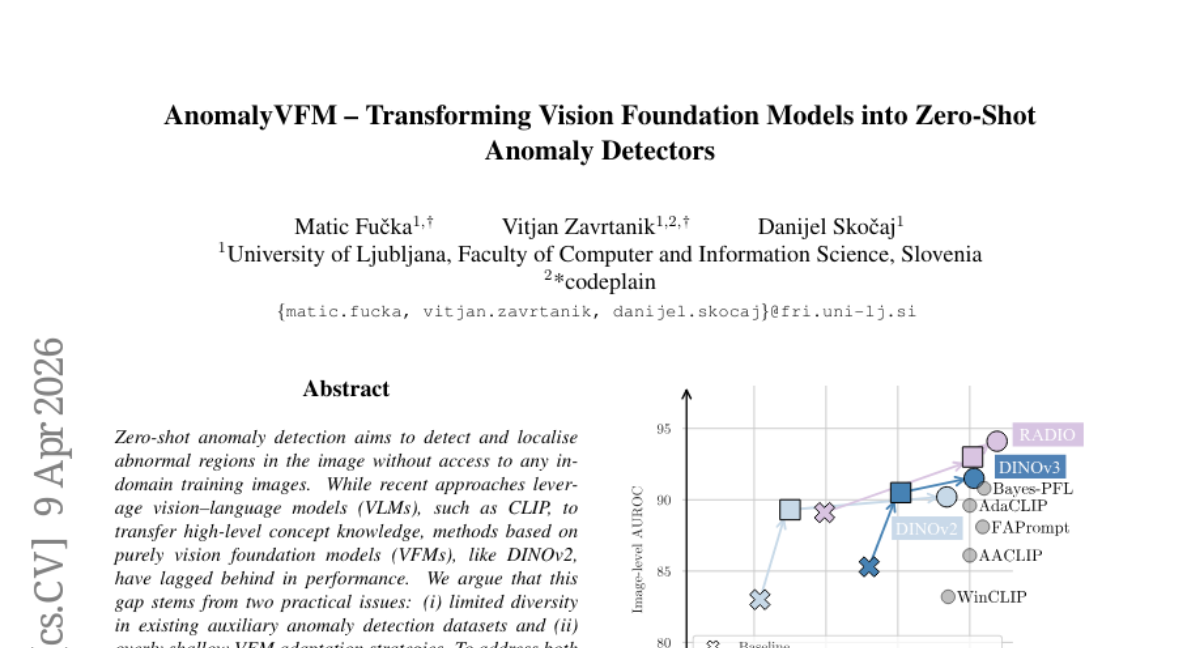

15. AnomalyVFM — Transforming Vision Foundation Models into Zero-Shot Anomaly Detectors

🔑 Keywords: AnomalyVFM, Zero-shot anomaly detection, Vision foundation models, Synthetic dataset generation, Parameter-efficient adaptation

💡 Category: Computer Vision

🌟 Research Objective:

– The study introduces AnomalyVFM, a framework to enhance vision foundation models for zero-shot anomaly detection by addressing limited diversity in datasets and shallow adaptation strategies.

🛠️ Research Methods:

– The approach combines synthetic dataset generation with parameter-efficient adaptation using low-rank feature adapters and confidence-weighted pixel loss.

💬 Research Conclusions:

– AnomalyVFM significantly outperforms existing methods, achieving an average image-level AUROC of 94.1% across diverse datasets, with a notable improvement of 3.3 percentage points over state-of-the-art methods.

👉 Paper link: https://huggingface.co/papers/2601.20524

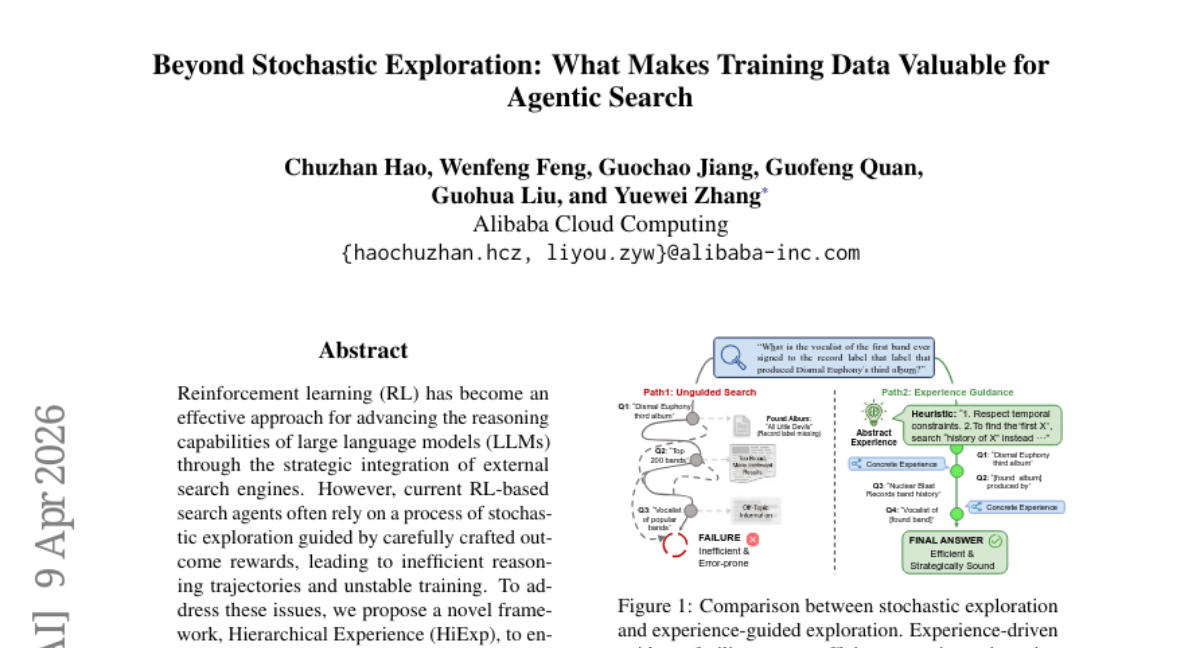

16. Beyond Stochastic Exploration: What Makes Training Data Valuable for Agentic Search

🔑 Keywords: Reinforcement Learning, Hierarchical Experience, Large Language Models, Stochastic Exploration, Experience-Aligned Training

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To enhance the performance and training stability of reinforcement learning-based search agents by introducing a novel Hierarchical Experience framework.

🛠️ Research Methods:

– Utilization of contrastive analysis and multi-level clustering to transform reasoning trajectories into structured knowledge, along with experience-aligned training to improve exploration processes.

💬 Research Conclusions:

– The proposed approach demonstrates substantial performance gains, as well as strong cross-task and cross-algorithm generalization in complex agentic search and mathematical reasoning benchmarks.

👉 Paper link: https://huggingface.co/papers/2604.08124

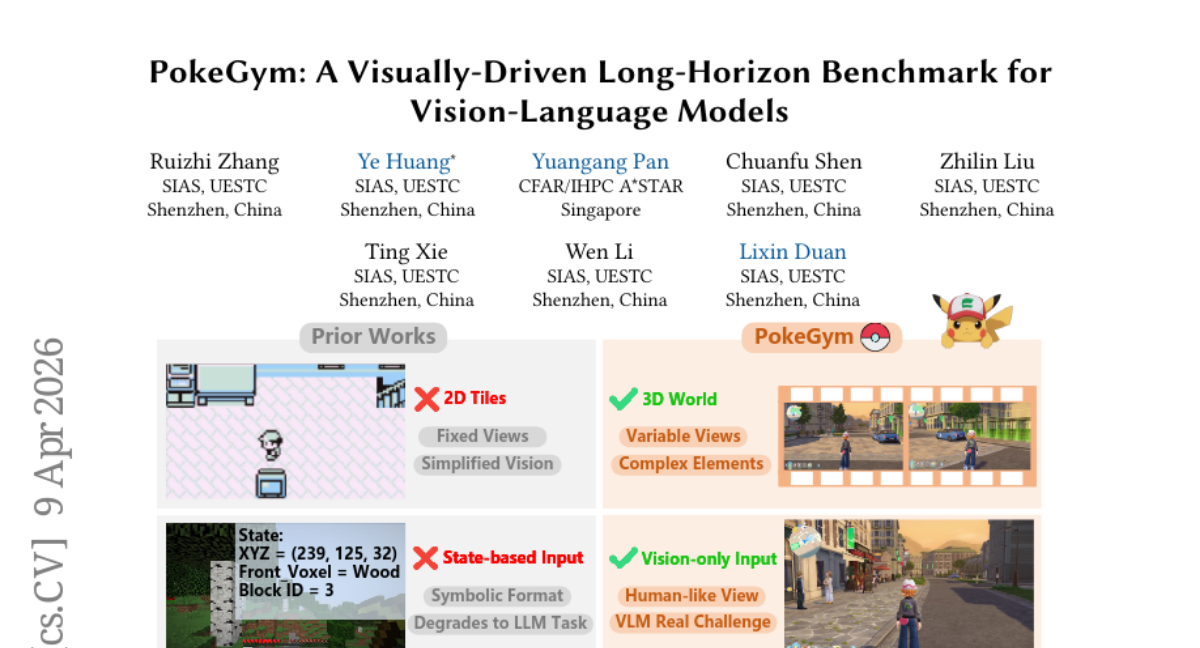

17. PokeGym: A Visually-Driven Long-Horizon Benchmark for Vision-Language Models

🔑 Keywords: Vision-Language Models, 3D Embodied Environments, PokeGym, Deadlock Recovery, Spatial Intuition

💡 Category: Computer Vision

🌟 Research Objective:

– To address the limitations of Vision-Language Models in 3D embodied environments by introducing a new benchmark called PokeGym.

🛠️ Research Methods:

– Development and implementation of the PokeGym benchmark within a visually complex 3D open-world Role-Playing Game, enforcing code-level isolation and evaluating 30 tasks across various scenarios.

💬 Research Conclusions:

– Current Vision-Language Models are limited by issues with physical deadlock recovery, which is a critical bottleneck affecting task success.

– Distinctions were observed between weaker models experiencing Unaware Deadlocks and advanced models encountering Aware Deadlocks, stressing the importance of incorporating explicit spatial intuition into VLM architectures.

👉 Paper link: https://huggingface.co/papers/2604.08340

18. QEIL v2: Heterogeneous Computing for Edge Intelligence via Roofline-Derived Pareto-Optimal Energy Modeling and Multi-Objective Orchestration

🔑 Keywords: QEIL v2, Edge Devices, Energy Efficiency, Runtime-Adaptive Models, Physics-Based Optimization

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Improve energy efficiency and performance of large language model inference on edge devices using physics-based adaptive optimization and workload-aware resource allocation.

🛠️ Research Methods:

– Replacement of static heuristics with runtime-adaptive models grounded in semiconductor physics.

– Introduction of device-workload metrics: DASI, CPQ, and Phi.

– PGSAM used for multi-objective optimization.

– EAC/ARDE selection with CSVET early stopping for progressive verification.

💬 Research Conclusions:

– QEIL v2 achieved significant energy savings and performance improvements, notably surpassing the IPW=1.0 mark.

– Demonstrated substantial latency reduction and fault recovery improvements across multiple model families and benchmarks.

👉 Paper link: https://huggingface.co/papers/2602.06057

19. Personalizing Text-to-Image Generation to Individual Taste

🔑 Keywords: PAMELA, Personalized Reward Model, Aesthetic Assessment, User Preferences, T2I Models

💡 Category: Generative Models

🌟 Research Objective:

– Introduce PAMELA, a novel dataset and predictive framework focused on personalizing image evaluations by utilizing user-specific ratings across diverse image domains.

🛠️ Research Methods:

– A dataset comprising 70,000 ratings by 15 unique users on images generated by models like Flux 2 and Nano Banana, covering domains such as art and fashion.

– Development of a personalized reward model trained on these annotations and existing aesthetic assessment subsets.

💬 Research Conclusions:

– The model improves prediction accuracy for individual aesthetic preferences over current state-of-the-art population-level models.

– Demonstrates the use of personalized predictors in optimizing prompts to align generations with individual preferences, highlighting the role of data quality and personalization in aesthetic judgments.

👉 Paper link: https://huggingface.co/papers/2604.07427

20. Structural Graph Probing of Vision-Language Models

🔑 Keywords: Vision-language models, Multimodal performance, Neural topology, Correlation graph, Recurrent hub neurons

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Investigate the structured neural topology within vision-language models to understand their multimodal performance and identify behaviorally significant patterns.

🛠️ Research Methods:

– Analyzed vision-language models through the lens of neural topology by representing each layer as a within-layer correlation graph derived from neuron-neuron co-activations.

💬 Research Conclusions:

– Identified that correlation topology holds recoverable behavioral signals and pinpointed recurrent hub neurons as crucial components whose perturbation alters model output significantly, establishing neural topology as a meaningful intermediate scale for model interpretability.

👉 Paper link: https://huggingface.co/papers/2603.27070

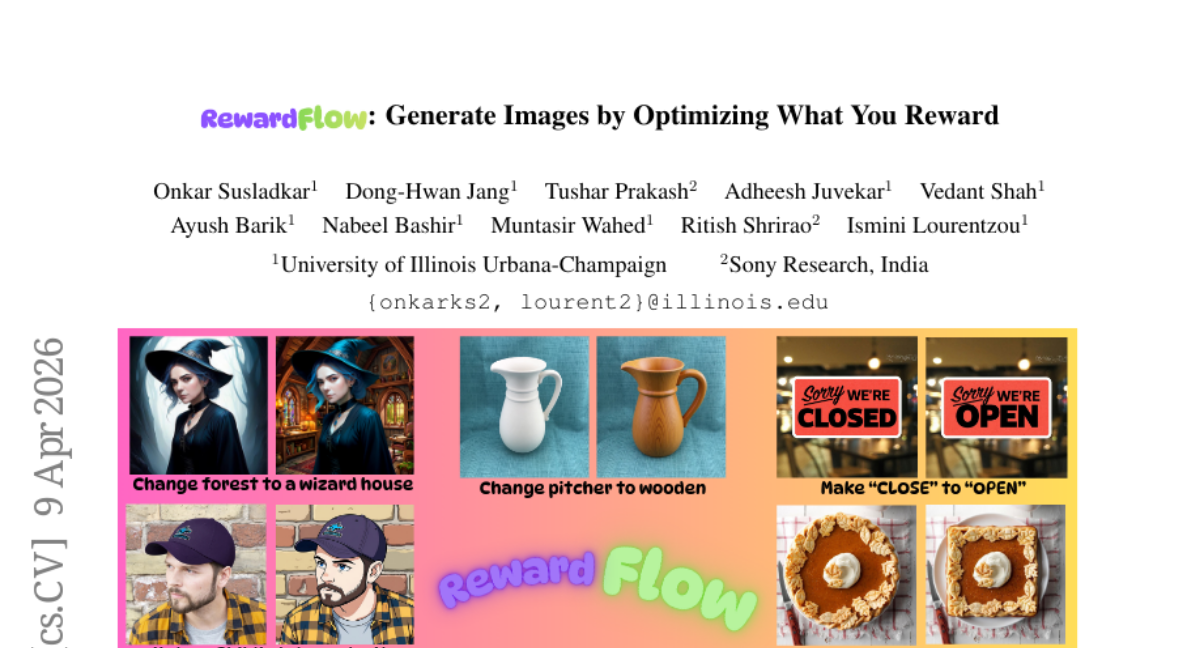

21. RewardFlow: Generate Images by Optimizing What You Reward

🔑 Keywords: RewardFlow, diffusion models, flow-matching models, Langevin dynamics, image editing

💡 Category: Generative Models

🌟 Research Objective:

– The paper introduces RewardFlow, an inversion-free framework designed to guide pretrained diffusion and flow-matching models using multi-reward Langevin dynamics to enhance performance in image editing and compositional generation.

🛠️ Research Methods:

– RewardFlow integrates various differentiable rewards including semantic alignment, perceptual fidelity, and human preference, combined with a new differentiable VQA-based reward for semantic supervision. It employs a prompt-aware adaptive policy to dynamically modulate reward weights and step sizes, tailoring the approach to different instructions and editing intents.

💬 Research Conclusions:

– RewardFlow achieves state-of-the-art results in terms of edit fidelity and compositional alignment on several benchmarks, showcasing its effectiveness in coordinating multiple objectives for enhanced image editing and generation.

👉 Paper link: https://huggingface.co/papers/2604.08536

22.

23. Training a Student Expert via Semi-Supervised Foundation Model Distillation

🔑 Keywords: Semi-supervised Knowledge Distillation, Vision Foundation Models, Instance Segmentation, Contrastive Calibration, Pseudo-label Bias

💡 Category: Computer Vision

🌟 Research Objective:

– To compress vision foundation models into compact experts for instance segmentation using a semi-supervised knowledge distillation framework.

🛠️ Research Methods:

– Implemented a three-stage process: domain adaptation with self-training and contrastive calibration, knowledge transfer via multi-objective loss, and student refinement to address pseudo-label bias.

💬 Research Conclusions:

– The proposed framework significantly improves model performance on Cityscapes and ADE20K, achieving substantial gains over zero-shot vision foundation model teachers and surpassing adapted state-of-the-art semi-supervised knowledge distillation methods.

👉 Paper link: https://huggingface.co/papers/2604.03841

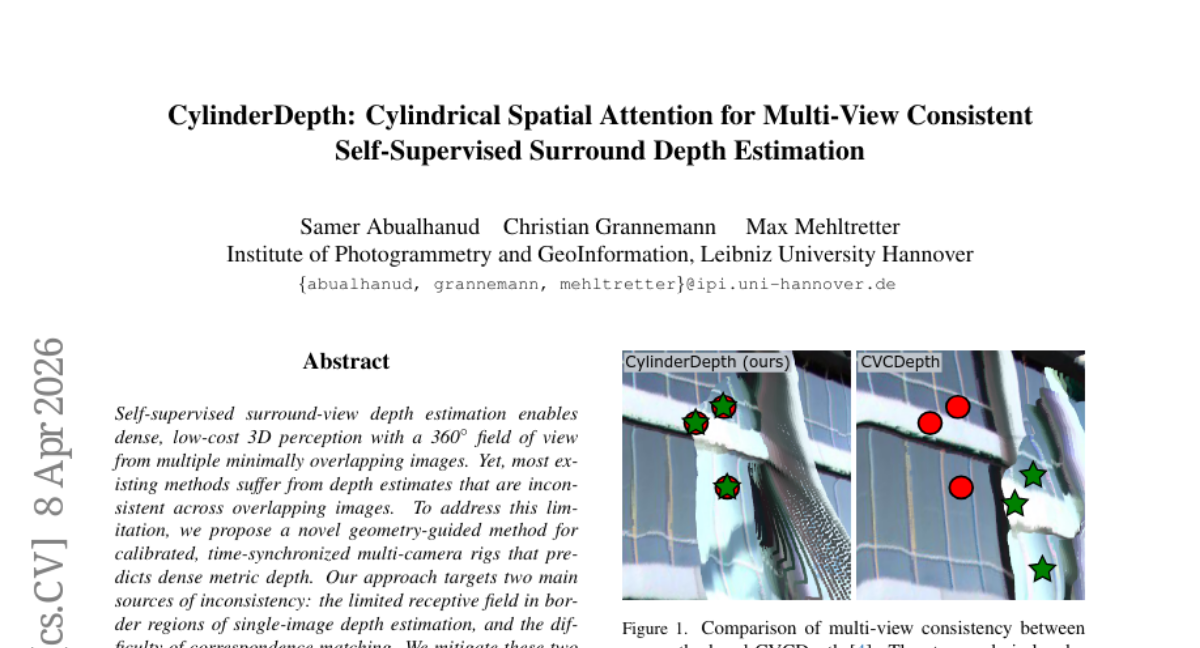

24. CylinderDepth: Cylindrical Spatial Attention for Multi-View Consistent Self-Supervised Surround Depth Estimation

🔑 Keywords: AI-generated summary, self-supervised surround-view depth estimation, calibrated multi-camera rigs, cylindrical spatial attention, cross-view attention

💡 Category: Computer Vision

🌟 Research Objective:

– To address depth inconsistency across overlapping images in self-supervised surround-view depth estimation by proposing a novel geometry-guided method.

🛠️ Research Methods:

– Implemented a strategy using geometry-guided methods with calibrated, time-synchronized multi-camera rigs to expand the receptive field and apply cylindrical spatial attention mechanisms for improved feature aggregation.

💬 Research Conclusions:

– The proposed method enhances cross-view depth consistency and overall depth accuracy compared to state-of-the-art approaches, with evaluations demonstrating improvements on the DDAD and nuScenes datasets.

👉 Paper link: https://huggingface.co/papers/2511.16428

25. Appear2Meaning: A Cross-Cultural Benchmark for Structured Cultural Metadata Inference from Images

🔑 Keywords: Vision-language models, Structured cultural metadata, Cultural inference, LLM-as-Judge framework, Semantic alignment

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To explore and improve the inference of structured cultural metadata from visual input using Vision-language models (VLMs).

🛠️ Research Methods:

– Introduced a multi-category, cross-cultural benchmark for assessing VLMs.

– Utilized an LLM-as-Judge framework to measure semantic alignment with reference annotations.

💬 Research Conclusions:

– VLMs show fragmented signal capture and significant performance variation across different cultures and metadata types, yielding inconsistent and weakly grounded predictions.

👉 Paper link: https://huggingface.co/papers/2604.07338

26. ImplicitMemBench: Measuring Unconscious Behavioral Adaptation in Large Language Models

🔑 Keywords: Implicit Memory, LLM Agents, Procedural Memory, Priming, Classical Conditioning

💡 Category: Natural Language Processing

🌟 Research Objective:

– To introduce ImplicitMemBench as the first benchmark for evaluating implicit memory in LLM agents through procedures like procedural memory, priming, and classical conditioning.

🛠️ Research Methods:

– Developed a 300-item suite using a Learning/Priming-Interfere-Test protocol to assess LLM agents on one-shot skill acquisition, theme-driven bias, and stimulus associations.

💬 Research Conclusions:

– Found severe limitations in current models with no model exceeding 66% performance, highlighting a need for architectural innovations beyond parameter scaling to address implicit memory evaluation.

👉 Paper link: https://huggingface.co/papers/2604.08064

27. POS-ISP: Pipeline Optimization at the Sequence Level for Task-aware ISP

🔑 Keywords: Image Signal Processing, Module Sequences, Reinforcement Learning, Computational Cost

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The study aims to optimize image signal processing (ISP) pipelines by predicting complete module sequences and their parameters in a single forward pass to improve task performance while reducing computational costs.

🛠️ Research Methods:

– The paper introduces POS-ISP, a sequence-level reinforcement learning framework that formulates ISP optimization as a global sequence prediction problem, avoiding the issues of neural architecture search and step-wise reinforcement learning.

💬 Research Conclusions:

– The proposed POS-ISP framework enhances task performance and reduces computational costs across various downstream tasks, demonstrating the stability and efficiency of sequence-level optimization for task-aware ISP.

👉 Paper link: https://huggingface.co/papers/2604.06938

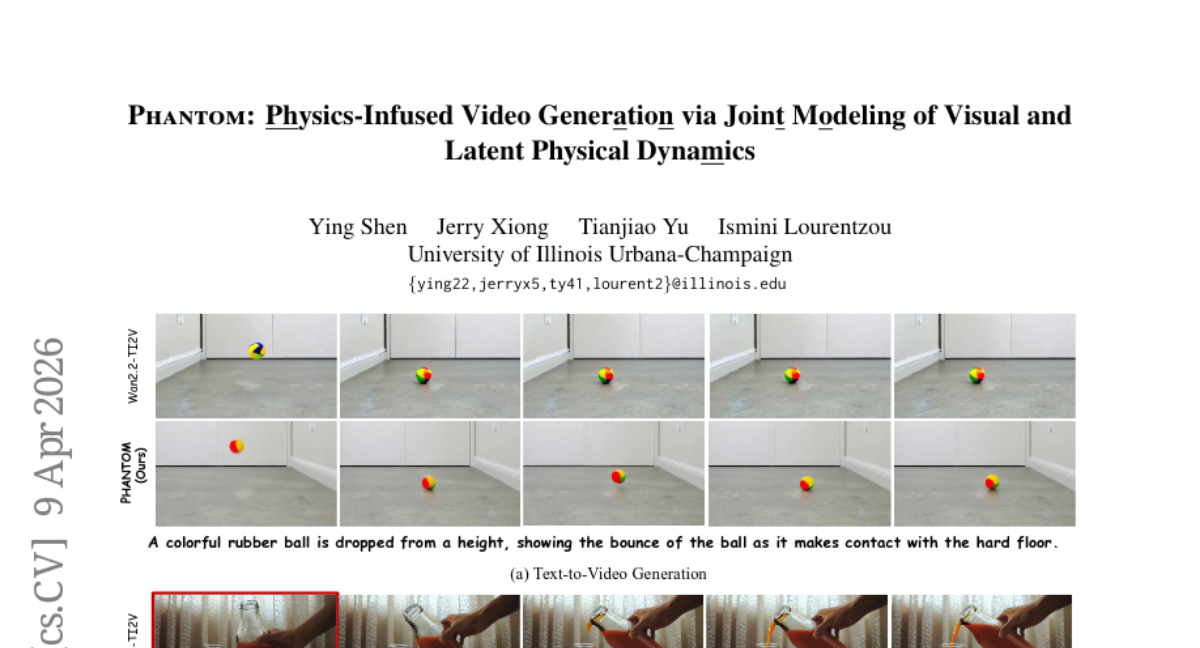

28. Phantom: Physics-Infused Video Generation via Joint Modeling of Visual and Latent Physical Dynamics

🔑 Keywords: Phantom, Video Generation, Physical Dynamics, Physics-Infused, Visual Realism

💡 Category: Generative Models

🌟 Research Objective:

– To investigate the integration of latent physical properties inference into the video generation process to achieve physically plausible videos.

🛠️ Research Methods:

– The proposed model, Phantom, jointly models visual content and latent physical dynamics using a physics-aware video representation.

💬 Research Conclusions:

– Phantom outperforms existing methods in adhering to physical dynamics while maintaining competitive perceptual fidelity, as demonstrated by quantitative and qualitative evaluations on standard and physics-aware benchmarks.

👉 Paper link: https://huggingface.co/papers/2604.08503

29. On the Global Photometric Alignment for Low-Level Vision

🔑 Keywords: Photometric Alignment Loss, pixel-wise losses, photometric transfer, affine color alignment, gradient energy

💡 Category: Computer Vision

🌟 Research Objective:

– The research aims to address optimization pathologies in low-level vision by resolving photometric discrepancies through affine color alignment while preserving content restoration.

🛠️ Research Methods:

– The study analyzes least-squares decomposition to prove the orthogonality of photometric and structural components of prediction-target residuals and proposes Photometric Alignment Loss (PAL) as a solution.

💬 Research Conclusions:

– Photometric Alignment Loss significantly improves metrics and generalization across 6 tasks, 16 datasets, and 16 architectures by resolving nuisance photometric discrepancies with minimal overhead.

👉 Paper link: https://huggingface.co/papers/2604.08172

30. The Master Key Hypothesis: Unlocking Cross-Model Capability Transfer via Linear Subspace Alignment

🔑 Keywords: Post-trained model, Master Key Hypothesis, latent subspace, linear alignment, UNLOCK

💡 Category: Machine Learning

🌟 Research Objective:

– The research investigates if post-trained capabilities can be transferred across different model scales without retraining, focusing on the transfer across these scales.

🛠️ Research Methods:

– The researchers propose the Master Key Hypothesis, identifying that capabilities correspond to directions in a latent subspace. The study introduces UNLOCK, a training-free and label-free framework using linear alignment to apply these capabilities at inference time.

💬 Research Conclusions:

– The study demonstrates significant improvements in reasoning behaviors, specifically Chain-of-Thought and mathematical reasoning, across model scales without additional training. The successful transfer relies on pre-trained capabilities, enhancing them through a sharpening of the output distribution towards effective reasoning trajectories.

👉 Paper link: https://huggingface.co/papers/2604.06377

31. Small Vision-Language Models are Smart Compressors for Long Video Understanding

🔑 Keywords: Tempo, Small Vision-Language Model, Adaptive Token Allocation, intent-aligned representations, long-form video understanding

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The research aims to efficiently compress long videos for multimodal understanding within strict token budgets by using a small vision-language model for temporal compression.

🛠️ Research Methods:

– Tempo leverages a Small Vision-Language Model as a local temporal compressor and introduces a method called Adaptive Token Allocation for maintaining intent-aligned representations without breaking causality.

💬 Research Conclusions:

– Tempo achieves state-of-the-art performance in compressing long videos, significantly outperforming existing models while utilizing a smaller token budget, demonstrating the potential for true long-form video understanding through intent-driven efficiency.

👉 Paper link: https://huggingface.co/papers/2604.08120

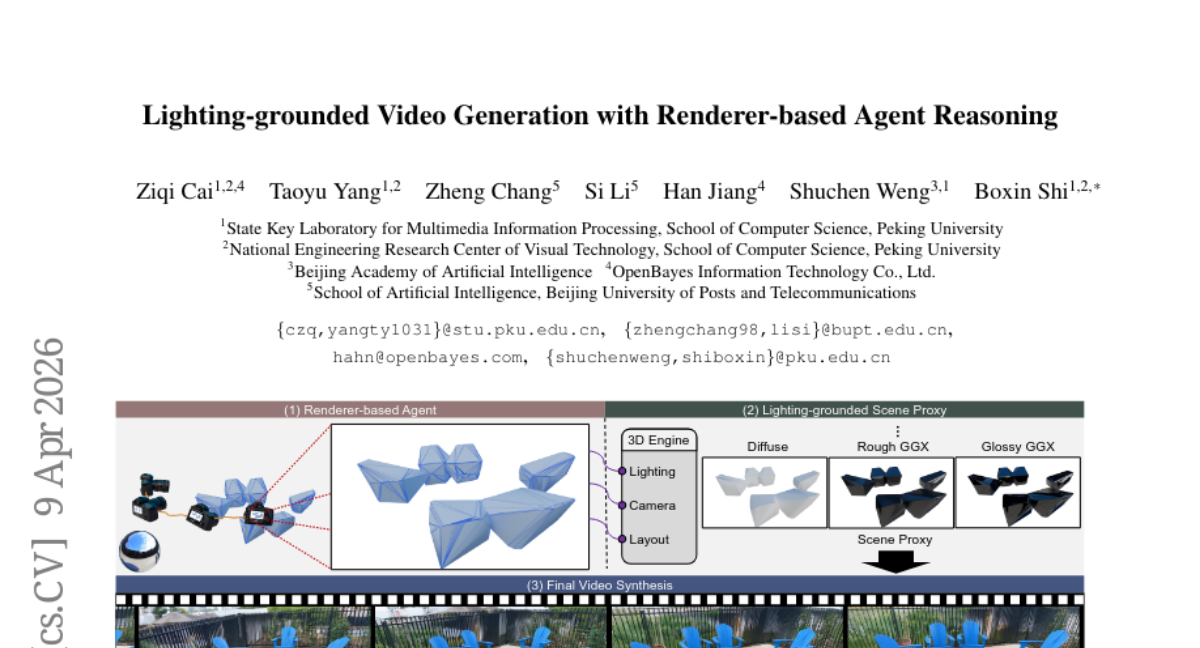

32. Lighting-grounded Video Generation with Renderer-based Agent Reasoning

🔑 Keywords: LiVER, Diffusion models, Scene-controllable, 3D scene properties, Video generation

💡 Category: Generative Models

🌟 Research Objective:

– Develop LiVER, a diffusion-based framework for scene-controllable video generation that disentangles 3D scene properties.

🛠️ Research Methods:

– Introduce a novel framework with explicit conditioning on 3D scene properties, supported by a large-scale dataset with dense annotations.

– Implement a lightweight conditioning module and a progressive training strategy to integrate control signals into a foundational video diffusion model.

– Develop a scene agent to translate high-level user instructions into 3D control signals.

💬 Research Conclusions:

– LiVER achieves state-of-the-art photorealism and temporal consistency, providing precise, disentangled control over scene factors and setting a new standard for controllable video generation.

👉 Paper link: https://huggingface.co/papers/2604.07966

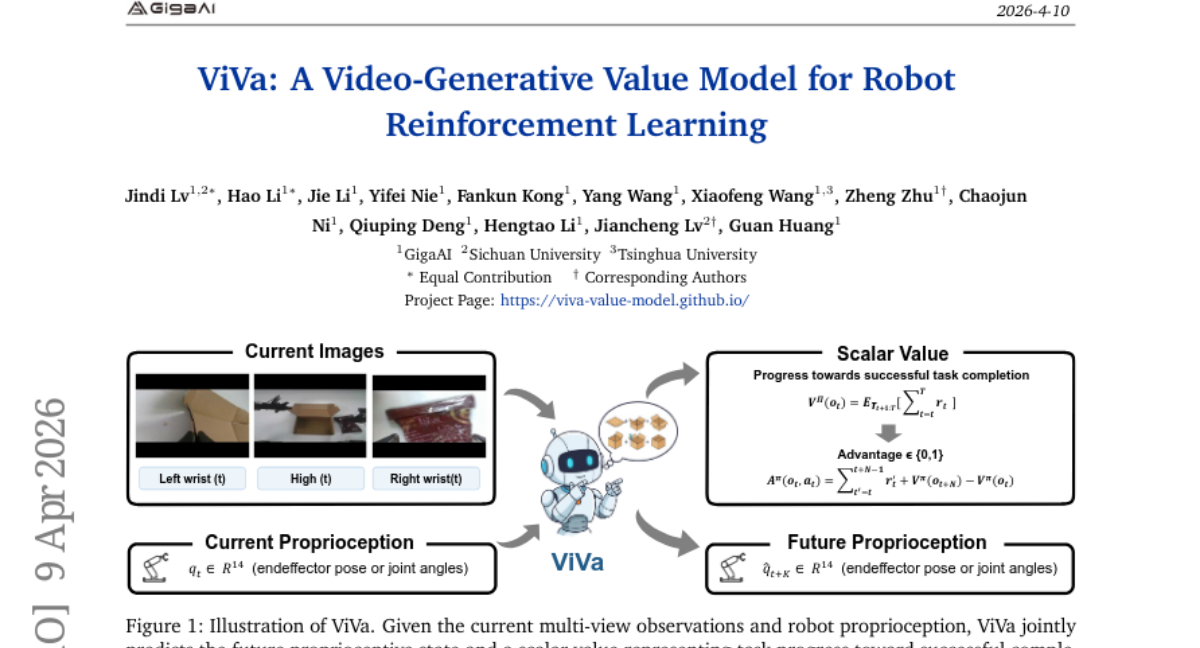

33. ViVa: A Video-Generative Value Model for Robot Reinforcement Learning

🔑 Keywords: ViVa, Reinforcement Learning, Spatiotemporal Priors, Video-generative Models, Embodiment Dynamics

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To improve robot manipulation by utilizing a video-generative value model, ViVa, which exploits pretrained video generators to estimate values based on anticipated embodiment dynamics.

🛠️ Research Methods:

– ViVa takes current observations and robot proprioception as input to predict future proprioception and a scalar value for the current state, grounding value estimation in expectation dynamics.

💬 Research Conclusions:

– ViVa, integrated into RECAP, demonstrates substantial improvements in real-world tasks, such as box assembly, by providing more reliable value signals and generalizing to novel objects.

👉 Paper link: https://huggingface.co/papers/2604.08168

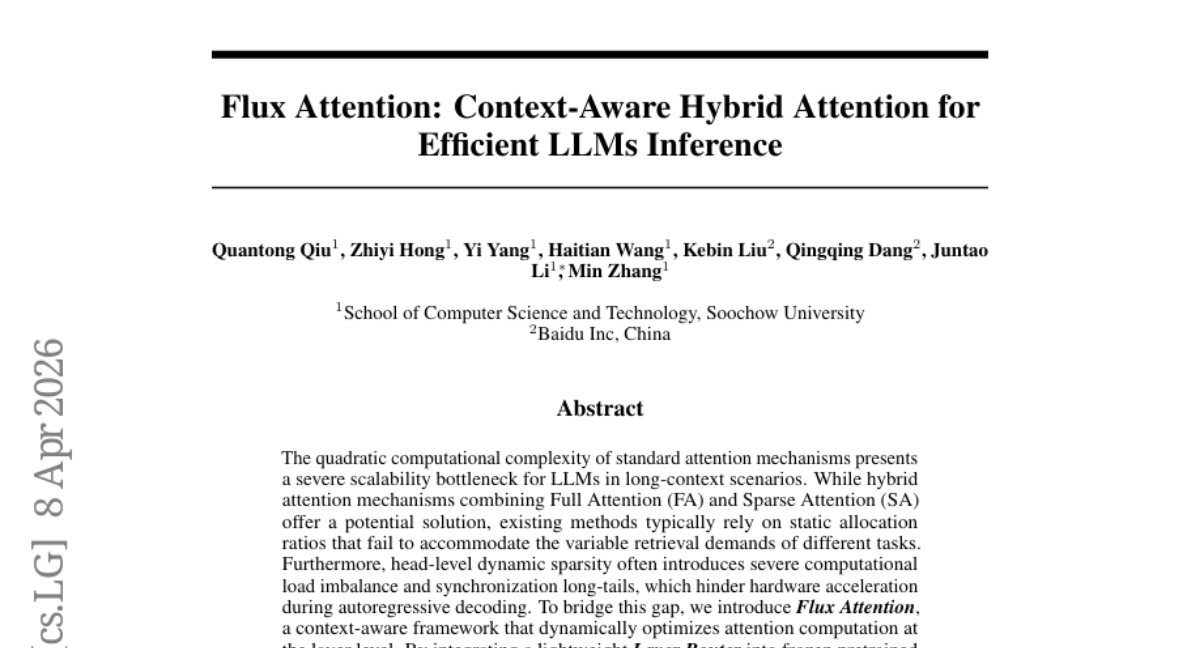

34. Flux Attention: Context-Aware Hybrid Attention for Efficient LLMs Inference

🔑 Keywords: Flux Attention, LLMs, Sparse Attention, Layer Router, computational complexity

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper aims to introduce Flux Attention, a context-aware framework that dynamically optimizes attention computation in large language models (LLMs) to improve inference speed while maintaining high information retrieval fidelity.

🛠️ Research Methods:

– The method involves incorporating a lightweight Layer Router into frozen pretrained LLMs, allowing for adaptive routing of layers to Full Attention (FA) or Sparse Attention (SA) based on the input context, requiring minimal training overhead of only 12 hours on 8 A800 GPUs.

💬 Research Conclusions:

– Flux Attention achieves significant speed improvements, up to 2.8x in prefill and 2.0x in decode stages, and a superior trade-off between performance and inference speed compared to baseline models, as demonstrated in extensive experiments across various long-context and mathematical reasoning benchmarks.

👉 Paper link: https://huggingface.co/papers/2604.07394

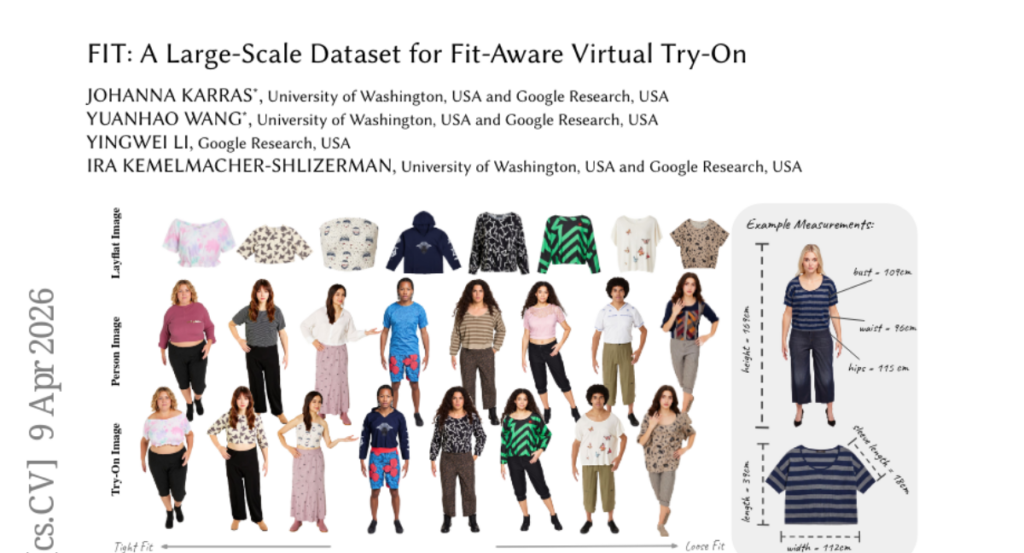

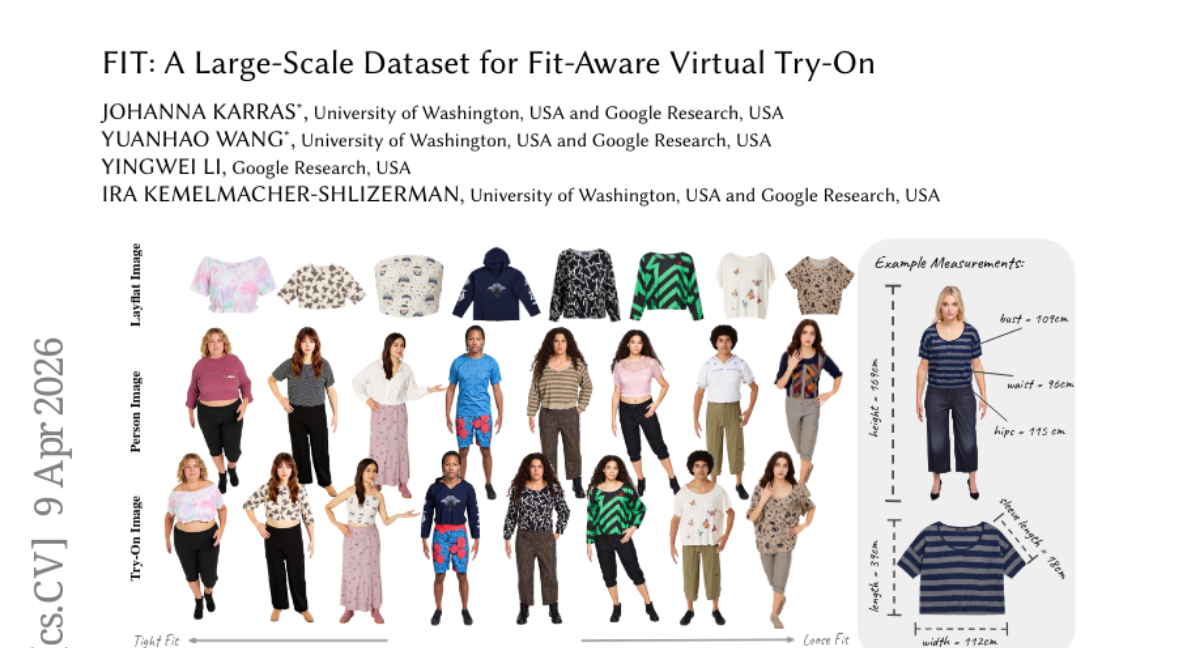

35. FIT: A Large-Scale Dataset for Fit-Aware Virtual Try-On

🔑 Keywords: Virtual Try-on, Garment Fit, Garment Measurements, Physics Simulation, Photorealistic Images

💡 Category: Computer Vision

🌟 Research Objective:

– Introduce the FIT dataset to improve garment fit accuracy in virtual try-on experiences, providing precise body and garment measurements.

🛠️ Research Methods:

– Use synthetic 3D garment generation and physics simulation for realistic garment fitting.

– Employ a novel re-texturing framework to convert synthetic renderings into photorealistic images, maintaining geometry.

💬 Research Conclusions:

– The FIT dataset establishes a new state-of-the-art for fit-aware virtual try-on and provides a robust benchmark for future research.

👉 Paper link: https://huggingface.co/papers/2604.08526

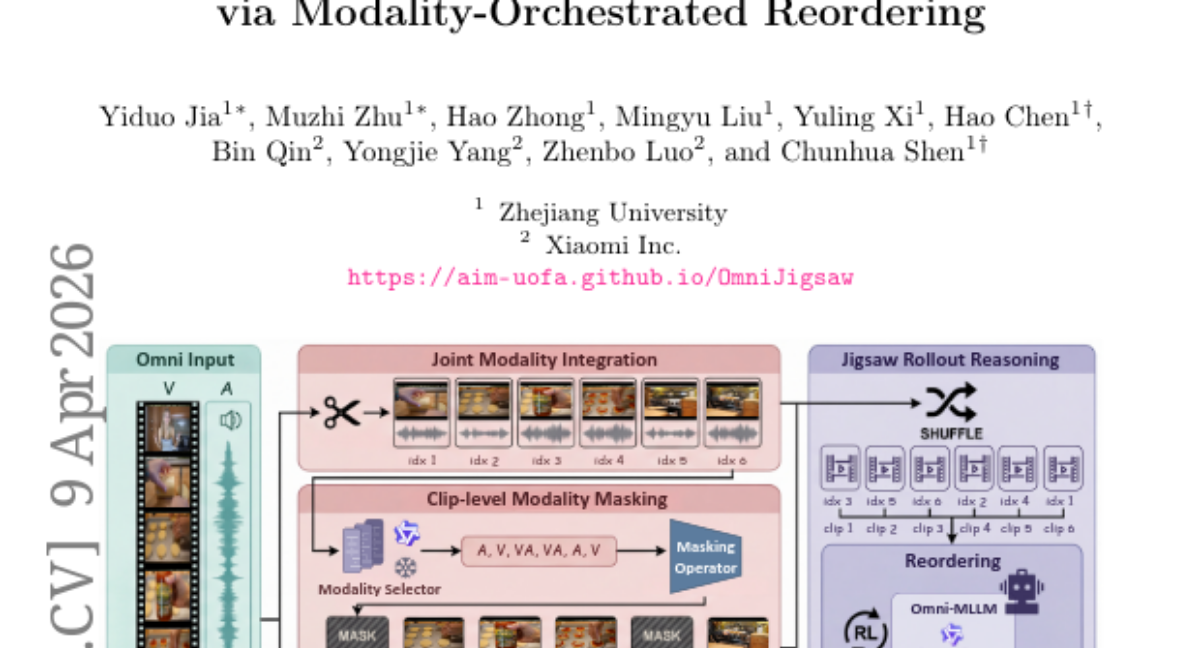

36. OmniJigsaw: Enhancing Omni-Modal Reasoning via Modality-Orchestrated Reordering

🔑 Keywords: OmniJigsaw, self-supervised framework, temporal reordering, cross-modal integration, joint modality integration

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to develop OmniJigsaw, a self-supervised framework designed to enhance video-audio understanding and collaborative reasoning through temporal reordering and cross-modal integration strategies.

🛠️ Research Methods:

– The framework leverages a temporal reordering proxy task, employing strategies such as Joint Modality Integration, Sample-level Modality Selection, and Clip-level Modality Masking. A two-stage coarse-to-fine data filtering pipeline is introduced to adapt the method to large-scale unannotated omni-modal data efficiently.

💬 Research Conclusions:

– The researchers identify and address a “bi-modal shortcut phenomenon” in joint modality integration, showing that clip-level modality masking outperforms sample-level modality selection. Evaluations on 15 benchmarks display significant improvements in video, audio, and collaborative reasoning, establishing OmniJigsaw as an effective approach for self-supervised omni-modal learning.

👉 Paper link: https://huggingface.co/papers/2604.08209

37. OpenVLThinkerV2: A Generalist Multimodal Reasoning Model for Multi-domain Visual Tasks

🔑 Keywords: Gaussian GRPO, Reinforcement Learning, Multimodal Generalist Models, Gradient Equity, Advantage Distribution

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The objective of this research is to address the challenges in multimodal model training by enhancing reinforcement learning approaches to improve the balance between perception and reasoning within generalist models.

🛠️ Research Methods:

– A novel RL training objective called Gaussian GRPO (G^2RPO) is introduced, which employs distributional matching to ensure gradient equity and stable learning by converging task advantage distributions to a standard normal distribution.

– Two task-level shaping mechanisms, response length shaping and entropy shaping, are developed to enhance reasoning and maintain exploration stability.

💬 Research Conclusions:

– The integration of Gaussian GRPO methodologies results in the creation of OpenVLThinkerV2, a robust multimodal model that demonstrates superior performance across 18 diverse benchmarks compared to both open-source and leading proprietary models.

👉 Paper link: https://huggingface.co/papers/2604.08539

38. MolmoWeb: Open Visual Web Agent and Open Data for the Open Web

🔑 Keywords: Web Agents, MolmoWeb, Open Source, Browser-based Tasks, Multimodal

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to develop open-source web agents that achieve state-of-the-art performance on browser tasks using the new MolmoWebMix dataset.

🛠️ Research Methods:

– Utilization of diverse datasets, including over 100K synthetic task trajectories and 30K+ human demonstrations, to train instruction-conditioned multimodal web agents.

💬 Research Conclusions:

– MolmoWeb agents outperform comparable models and surpass certain high-capacity models on benchmarks without relying on HTML or accessibility tree information.

👉 Paper link: https://huggingface.co/papers/2604.08516

39. Act Wisely: Cultivating Meta-Cognitive Tool Use in Agentic Multimodal Models

🔑 Keywords: HDPO, Meta-cognitive deficit, tool usage, conditional advantage estimation, cognitive curriculum

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to address meta-cognitive deficits in agents, which affect their tool usage decisions, by introducing a new framework called HDPO.

🛠️ Research Methods:

– HDPO utilizes decoupled optimization channels for accuracy and efficiency, bypassing reward scalarization to manage task correctness and execution economy separately.

💬 Research Conclusions:

– The proposed model, Metis, significantly reduces unnecessary tool invocations while enhancing reasoning accuracy through its structured cognitive curriculum.

👉 Paper link: https://huggingface.co/papers/2604.08545

40. KnowU-Bench: Towards Interactive, Proactive, and Personalized Mobile Agent Evaluation

🔑 Keywords: Personalized mobile agents, proactive assistance, preference inference, LLM-driven user simulator, intervention calibration

💡 Category: Human-AI Interaction

🌟 Research Objective:

– To evaluate the true preference inference and proactive assistance capabilities of personalized mobile agents in real-world GUI environments using a comprehensive benchmark known as KnowU-Bench.

🛠️ Research Methods:

– Development of an online benchmark built on a reproducible Android emulation environment, covering a variety of general, personalized, and proactive tasks. It includes the use of an LLM-driven user simulator grounded in structured profiles for realistic dialogues and evaluations using a hybrid protocol.

💬 Research Conclusions:

– Agents excel in explicit task execution but show significant performance degradation in tasks requiring user preference inference or intervention calibration, revealing gaps in current models’ ability to provide reliable personal assistance.

👉 Paper link: https://huggingface.co/papers/2604.08455

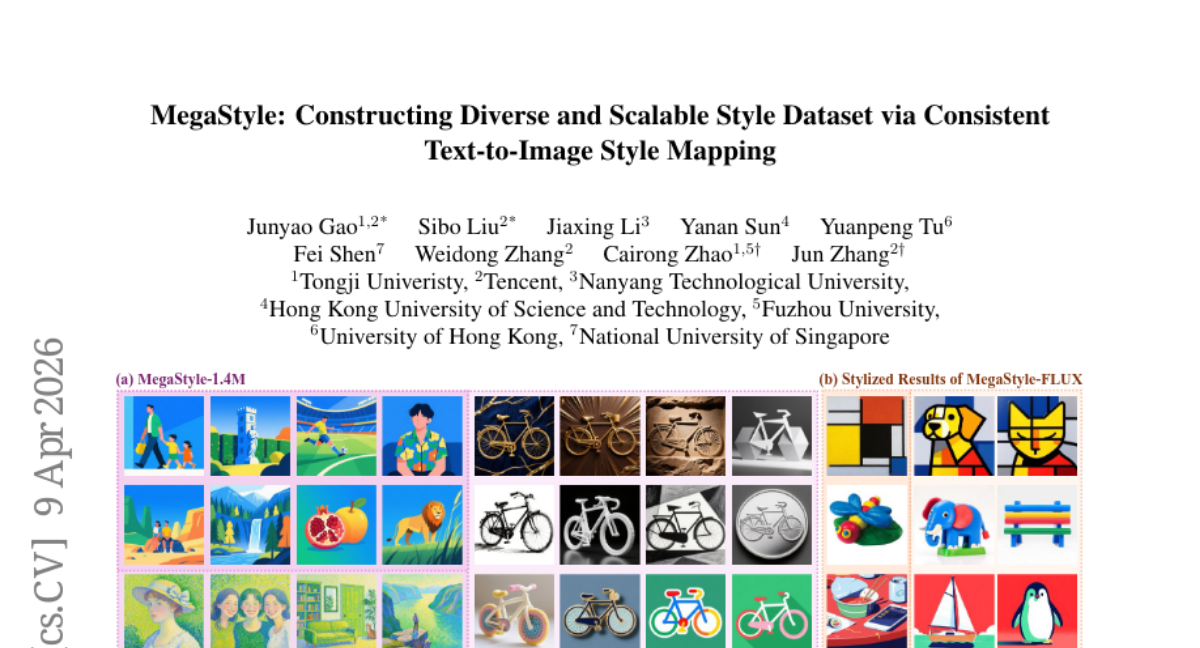

41. MegaStyle: Constructing Diverse and Scalable Style Dataset via Consistent Text-to-Image Style Mapping

🔑 Keywords: MegaStyle, style dataset, large generative models, style-supervised contrastive learning, style transfer

💡 Category: Generative Models

🌟 Research Objective:

– To develop MegaStyle, a scalable data curation pipeline, aimed at creating intra-style consistent, inter-style diverse, and high-quality style datasets using large generative models.

🛠️ Research Methods:

– Curated a diverse prompt gallery with 170K style and 400K content prompts to generate MegaStyle-1.4M style dataset.

– Implemented style-supervised contrastive learning to fine-tune the MegaStyle-Encoder for extracting style-specific representations.

💬 Research Conclusions:

– MegaStyle-1.4M is effective in maintaining intra-style consistency and inter-style diversity, crucial for style datasets.

– Models like MegaStyle-Encoder and MegaStyle-FLUX offer reliable style similarity measurement and contribute significantly to the style transfer community.

👉 Paper link: https://huggingface.co/papers/2604.08364

42. When Numbers Speak: Aligning Textual Numerals and Visual Instances in Text-to-Video Diffusion Models

🔑 Keywords: NUMINA, Text-to-video diffusion models, numerical alignment, prompt-layout inconsistencies, CLIP alignment

💡 Category: Generative Models

🌟 Research Objective:

– The research introduces NUMINA, a training-free framework aimed at enhancing the numerical accuracy of text-to-video diffusion models by identifying and correcting layout inconsistencies.

🛠️ Research Methods:

– NUMINA identifies discriminative attention heads to derive a latent layout, refines it, and uses cross-attention modulation to guide regeneration, improving numerical alignment.

💬 Research Conclusions:

– It achieved up to 7.4% improvement in counting accuracy on specific models and improved CLIP alignment while maintaining temporal consistency, demonstrating an effective approach for count-accurate video generation. The code is publicly available for further use.

👉 Paper link: https://huggingface.co/papers/2604.08546

43. SkillClaw: Let Skills Evolve Collectively with Agentic Evolver

🔑 Keywords: AI Native, multi-user agent ecosystems, reusable skills, cross-user knowledge transfer, cumulative capability improvement

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To enable collective skill evolution in multi-user LLM agent systems, improving skill reliability and system performance.

🛠️ Research Methods:

– Development of SkillClaw, a framework that aggregates user interactions and autonomously updates skills, recognizing behavioral patterns and refining or extending skills.

💬 Research Conclusions:

– SkillClaw significantly enhances Qwen3-Max’s performance in real-world scenarios by facilitating cross-user knowledge transfer and cumulative capability improvement with minimal user feedback.

👉 Paper link: https://huggingface.co/papers/2604.08377