AI Native Daily Paper Digest – 20260421

1. Extending One-Step Image Generation from Class Labels to Text via Discriminative Text Representation

🔑 Keywords: MeanFlow, text inputs, LLM-based text encoders, text-conditioned synthesis, generation performance improvements

💡 Category: Generative Models

🌟 Research Objective:

– To extend the MeanFlow generation framework from class labels to flexible text inputs to enable richer content creation.

🛠️ Research Methods:

– Integration of powerful LLM-based text encoders into the MeanFlow framework to enhance semantic feature representation and address limitations in few-step refinement.

💬 Research Conclusions:

– Efficient text-conditioned synthesis was achieved for the first time, showing significant generation performance improvements on a widely used diffusion model.

– The research provides a general and practical reference for future studies on text-conditioned MeanFlow generation.

👉 Paper link: https://huggingface.co/papers/2604.18168

2. Agent-World: Scaling Real-World Environment Synthesis for Evolving General Agent Intelligence

🔑 Keywords: Agent-World, self-evolving training, general agent intelligence, scalable environments, AI Native

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The study introduces a self-evolving training framework named Agent-World to enhance general agent intelligence through autonomous environment discovery and continuous learning in real-world scenarios.

🛠️ Research Methods:

– It employs a two-component approach: Agentic Environment-Task Discovery for exploring theme-aligned databases and synthesizing tasks, and Continuous Self-Evolving Agent Training, which uses multi-environment reinforcement learning and a self-evolving agent arena.

💬 Research Conclusions:

– Agent-World-8B and 14B show consistent outperformance over strong proprietary models and environments in 23 challenging benchmarks, highlighting insights into environment diversity and self-evolution for developing general agent intelligence.

👉 Paper link: https://huggingface.co/papers/2604.18292

3. MultiWorld: Scalable Multi-Agent Multi-View Video World Models

🔑 Keywords: MultiWorld, multi-agent systems, multi-view consistency, Multi-Agent Condition Module, Global State Encoder

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– Introduce a unified framework called MultiWorld for modeling environments that involve multiple agents and multiple views, enabling precise multi-agent control and maintaining view consistency.

🛠️ Research Methods:

– Implement specialized modules like the Multi-Agent Condition Module for multi-agent controllability and the Global State Encoder for coherent observations across views.

– Test the framework in scenarios such as multi-player game environments and multi-robot manipulation tasks.

💬 Research Conclusions:

– MultiWorld outperforms existing baselines regarding video fidelity, action-following ability, and maintaining multi-view consistency. The framework allows for flexible scaling of agents and views, synthesizing various perspectives efficiently.

👉 Paper link: https://huggingface.co/papers/2604.18564

4. GFT: From Imitation to Reward Fine-Tuning with Unbiased Group Advantages and Dynamic Coefficient Rectification

🔑 Keywords: Group Fine-Tuning, Supervised Fine-Tuning, Reinforcement Learning, Policy Gradient Optimization, Dynamic Coefficient Rectification

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The study aims to improve training stability and efficiency in supervised fine-tuning by leveraging diverse response groups and adaptive weight bounding.

🛠️ Research Methods:

– The introduction of Group Fine-Tuning (GFT) which employs Group Advantage Learning to construct diverse response groups and derive normalized contrastive supervision.

– Dynamic Coefficient Rectification is used to adaptively bound inverse-probability weights to stabilize optimization processes.

💬 Research Conclusions:

– Group Fine-Tuning outperforms traditional supervised fine-tuning methods, leading to policies that are better integrated with subsequent reinforcement learning training.

👉 Paper link: https://huggingface.co/papers/2604.14258

5. WebCompass: Towards Multimodal Web Coding Evaluation for Code Language Models

🔑 Keywords: Large language models, interactive coding agents, multimodal benchmark, human-in-the-loop pipeline, AI-generated summary

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The paper introduces WebCompass, a unified evaluation framework designed to assess the full lifecycle of web engineering capabilities across multiple input modalities and task types.

🛠️ Research Methods:

– The methodology involves a multimodal benchmark covering text, image, and video, and includes tasks like generation, editing, and repair. It employs a human-in-the-loop pipeline, checklist-guided LLM-as-a-Judge protocol, and a novel Agent-as-a-Judge paradigm.

💬 Research Conclusions:

– Closed-source models are generally stronger and more balanced than open-source models, especially in maintaining interactivity and execution capability. Challenges persist in aesthetics, and framework choice significantly influences outcomes.

👉 Paper link: https://huggingface.co/papers/2604.18224

6. SkillFlow:Benchmarking Lifelong Skill Discovery and Evolution for Autonomous Agents

🔑 Keywords: autonomous agents, SkillFlow, Domain-Agnostic Execution Flow, Agentic Lifelong Learning, skill discovery

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– SkillFlow aims to evaluate autonomous agents’ ability to discover, repair, and maintain skills over time through a lifelong learning protocol.

🛠️ Research Methods:

– The study introduces a benchmark of 166 tasks across 20 families using a Domain-Agnostic Execution Flow to assess skill management in autonomous agents under the Agentic Lifelong Learning protocol.

💬 Research Conclusions:

– The experiments indicate a significant capability gap among autonomous agents in skill usage and utility. Specifically, some agents demonstrate improved task success, but high skill usage does not always correlate with utility enhancement.

👉 Paper link: https://huggingface.co/papers/2604.17308

7. The Illusion of Certainty: Decoupling Capability and Calibration in On-Policy Distillation

🔑 Keywords: On-policy distillation (OPD), Scaling Law, Miscalibration, Privileged Context, Calibration-aware OPD (CaOPD)

💡 Category: Natural Language Processing

🌟 Research Objective:

– To address miscalibration in On-policy distillation (OPD) by developing a calibration-aware framework that enhances both model performance and confidence reliability.

🛠️ Research Methods:

– Theoretical formalization of the miscalibration issue stemming from information mismatch between training and deployment contexts.

– Proposal of CaOPD, a framework that estimates empirical confidence from model rollouts and revises self-reported confidence, using a self-distillation pipeline.

💬 Research Conclusions:

– CaOPD achieves Pareto-optimal calibration while maintaining competitive capabilities and generalizes well under out-of-distribution and continual learning scenarios.

– Capability distillation does not guarantee calibrated confidence, which should be an essential objective in post-training processes.

👉 Paper link: https://huggingface.co/papers/2604.16830

8. GenericAgent: A Token-Efficient Self-Evolving LLM Agent via Contextual Information Density Maximization (V1.0)

🔑 Keywords: GenericAgent, context information density maximization, hierarchical memory, self-evolution mechanism, reusable SOPs

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Introduce GenericAgent, a self-evolving large language model agent system that enhances context information density to address long-horizon limitations in AI interactions.

🛠️ Research Methods:

– Utilizes a minimal atomic tool set, hierarchical on-demand memory, self-evolution mechanism, and context truncation and compression layer.

💬 Research Conclusions:

– GenericAgent surpasses existing systems in task completion, tool use efficiency, memory effectiveness, and self-evolution while using fewer resources. It continues to improve over time.

👉 Paper link: https://huggingface.co/papers/2604.17091

9. On the Reliability of Computer Use Agents

🔑 Keywords: stochasticity, task specification ambiguity, behavioral variability, execution reliability, AI-generated summary

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To investigate the sources of unreliability in computer-use agents by exploring execution stochasticity, task specification ambiguity, and behavioral variability.

🛠️ Research Methods:

– Analysis conducted on OSWorld using repeated executions and paired statistical tests to understand task-level changes.

💬 Research Conclusions:

– Reliability of agents is affected by task specification and variability in execution.

– The study emphasizes the necessity for repeated evaluations and developing stable strategies, suggesting interaction to resolve task ambiguities.

👉 Paper link: https://huggingface.co/papers/2604.17849

10. Agents Explore but Agents Ignore: LLMs Lack Environmental Curiosity

🔑 Keywords: LLM-based agents, environmental curiosity, unexpected information, task solutions, training data distribution

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study investigates why LLM-based agents fail to exploit unexpected information despite recognizing it, focusing on environmental curiosity.

🛠️ Research Methods:

– Utilized three benchmarks (Terminal-Bench, SWE-Bench, AppWorld) to inject complete task solutions into environments and measure how agents discover and exploit these solutions.

💬 Research Conclusions:

– LLM-based agents exhibit a significant gap between recognizing unexpected information and effectively exploiting it. Factors like tools, compute, and training data distribution influence this gap, with optimized configurations still resulting in agents ignoring discovered solutions.

👉 Paper link: https://huggingface.co/papers/2604.17609

11. Training LLM Agents for Spontaneous, Reward-Free Self-Evolution via World Knowledge Exploration

🔑 Keywords: intrinsic meta-evolution, world knowledge, outcome-based reward mechanism, native self-evolution, Qwen3-30B

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Develop agents with intrinsic Meta-Evolution capabilities to enhance performance in web navigation by independently learning about unseen environments.

🛠️ Research Methods:

– Design an outcome-based reward mechanism used exclusively during the training phase to teach models to improve self-generated world knowledge and effectively explore environments.

💬 Research Conclusions:

– The shift to native self-evolution in agents, such as Qwen3-30B and Seed-OSS-36B, demonstrates a 20% performance increase in web navigation tasks, establishing a new paradigm for evolving agents.

👉 Paper link: https://huggingface.co/papers/2604.18131

12. Meta-learning In-Context Enables Training-Free Cross Subject Brain Decoding

🔑 Keywords: Visual Decoding, fMRI, In-context Learning, Neural Encoding Patterns

💡 Category: Computer Vision

🌟 Research Objective:

– The study aims to develop a meta-optimized approach for semantic visual decoding from fMRI data that generalizes across different subjects and scanners without fine-tuning.

🛠️ Research Methods:

– The approach involves conditioning on a small set of image-brain activation examples for new individuals, optimizing for in-context learning, and performing hierarchical inference to invert the encoder.

💬 Research Conclusions:

– The method demonstrates strong generalization across subjects and scanners without requiring anatomical alignment or stimulus overlap, marking progress towards a generalizable foundation model for non-invasive brain decoding.

👉 Paper link: https://huggingface.co/papers/2604.08537

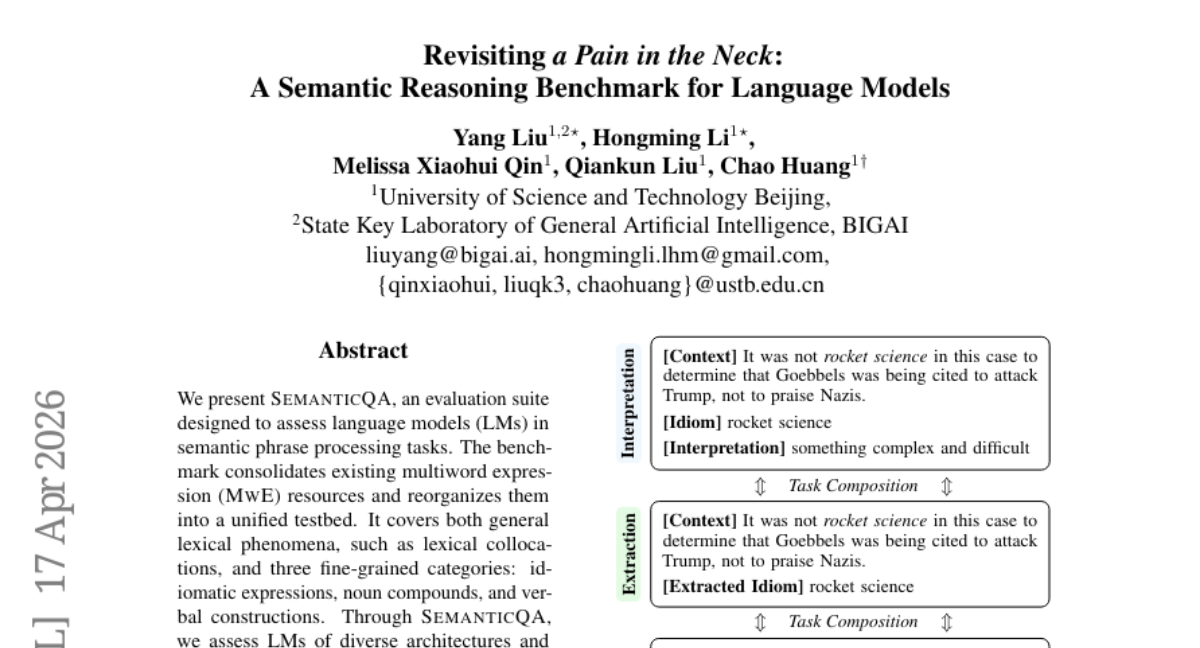

13. Revisiting a Pain in the Neck: A Semantic Reasoning Benchmark for Language Models

🔑 Keywords: SemanticQA, language models, semantic reasoning, semantic understanding, multiword expressions

💡 Category: Natural Language Processing

🌟 Research Objective:

– To evaluate language models on their ability to process semantic phrases through the SemanticQA benchmark, covering various expressions and categories.

🛠️ Research Methods:

– Consolidating existing multiword expression resources into a unified testbed and assessing diverse language model architectures in tasks like extraction, classification, and interpretation.

💬 Research Conclusions:

– Significant performance variations were observed in semantic reasoning tasks, providing insights into the differences in reasoning efficacy and semantic understanding of language models.

👉 Paper link: https://huggingface.co/papers/2604.16593

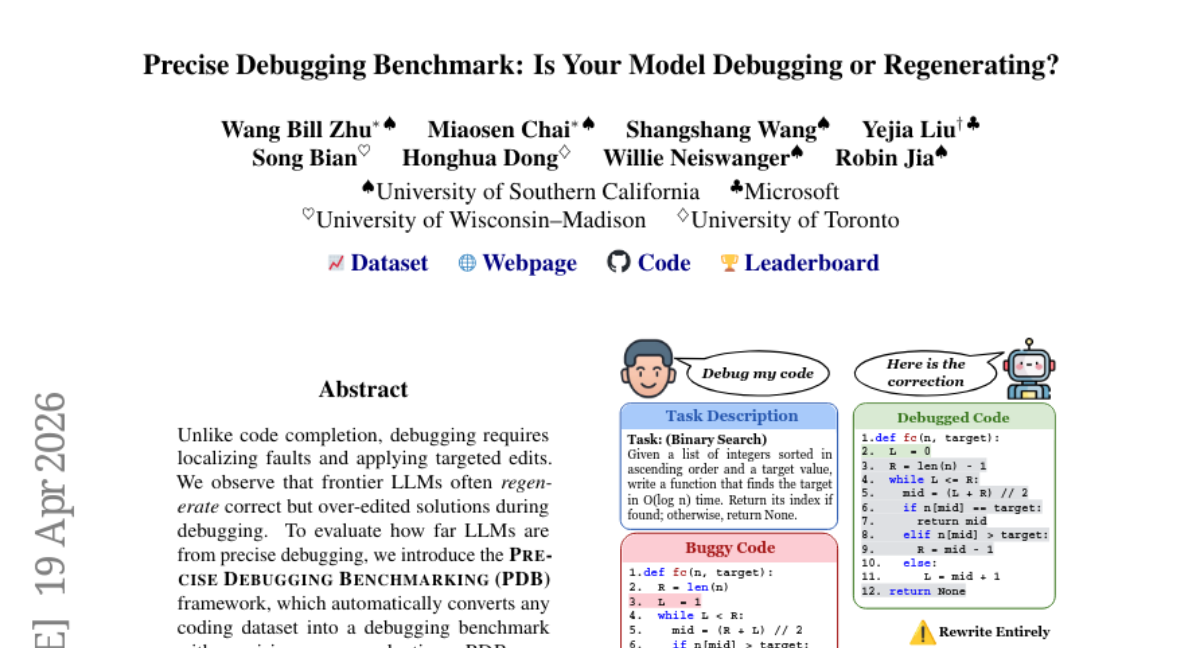

14. Precise Debugging Benchmark: Is Your Model Debugging or Regenerating?

🔑 Keywords: Precise Debugging Benchmark, edit-level precision, bug-level recall, iterative debugging, frontier models

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The research aims to assess the gap between functional correctness and precise fault localization in debugging tasks performed by frontier LLMs (Large Language Models).

🛠️ Research Methods:

– The paper introduces the Precise Debugging Benchmark (PDB) framework, which transforms any coding dataset into a debugging benchmark and evaluates it with precision-aware metrics. It defines two novel metrics: edit-level precision and bug-level recall.

💬 Research Conclusions:

– Frontier models, despite achieving high unit-test pass rates, show poor precision in debugging tasks, revealing the inadequacy of current debugging methodologies. Iterative and agentic debugging strategies do not significantly enhance precision or recall, suggesting a need for re-evaluating post-training pipelines for coding models.

👉 Paper link: https://huggingface.co/papers/2604.17338

15. MedConclusion: A Benchmark for Biomedical Conclusion Generation from Structured Abstracts

🔑 Keywords: Large language models, biomedical conclusion generation, MedConclusion, evidence-to-conclusion reasoning

💡 Category: AI in Healthcare

🌟 Research Objective:

– Introduction of a large-scale dataset, MedConclusion, containing 5.7 million PubMed structured abstracts aimed at evaluating the capability of large language models in reasoning and inferring scientific conclusions from structured biomedical evidence.

🛠️ Research Methods:

– Pairing non-conclusion sections of abstracts with original conclusions to naturally supervise evidence-to-conclusion reasoning.

– Includes evaluation of large language models under different prompting settings using reference-based metrics and judge-based scoring.

💬 Research Conclusions:

– Distinct behavioral differences exist between conclusion writing and summary writing in large language models.

– Current automatic metrics show strong models are closely clustered, and the identity of judges can substantially affect scoring outcomes.

👉 Paper link: https://huggingface.co/papers/2604.06505

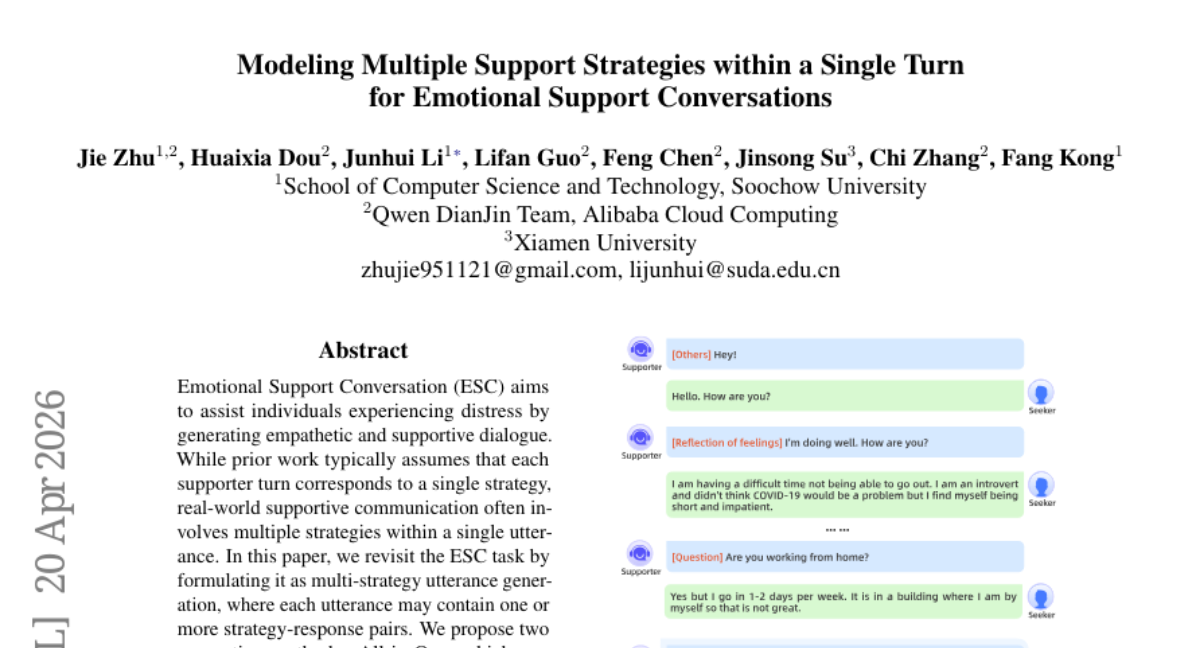

16. Modeling Multiple Support Strategies within a Single Turn for Emotional Support Conversations

🔑 Keywords: Emotional Support Conversation, Multi-strategy utterance generation, Strategy-response pairs, Cognitive reasoning, Reinforcement Learning

💡 Category: Natural Language Processing

🌟 Research Objective:

– The paper aims to enhance Emotional Support Conversation (ESC) by enabling multiple support strategies within a single utterance, revisiting the task as multi-strategy utterance generation.

🛠️ Research Methods:

– Two methods are proposed: All-in-One, which predicts all strategy-response pairs in a single step, and One-by-One, which iteratively generates pairs. Both methods utilize cognitive reasoning guided by reinforcement learning to improve strategy selection and response composition.

💬 Research Conclusions:

– Experimental results on the ESConv dataset indicate that the proposed methods effectively model multi-strategy utterances, leading to improved supportive quality and dialogue success. The research provides the first empirical evidence of the feasibility and benefits of using multiple strategies in single utterances for ESC.

👉 Paper link: https://huggingface.co/papers/2604.17972

17. MARCO: Navigating the Unseen Space of Semantic Correspondence

🔑 Keywords: semantic correspondence, fine-grained localization, self-distillation framework, diffusion backbones, DINOv2

💡 Category: Computer Vision

🌟 Research Objective:

– The paper introduces MARCO, a model aimed at improving semantic correspondence accuracy and generalization beyond training data through a coarse-to-fine objective and self-distillation framework.

🛠️ Research Methods:

– Employs a novel training framework combining DINOv2 and diffusion backbones to enhance fine-grained localization and semantic generalization.

💬 Research Conclusions:

– MARCO sets new benchmarks on datasets like SPair-71k, AP-10K, and PF-PASCAL, excelling in fine-grained localization, unseen keypoints generalization, and maintaining efficiency by being 3x smaller and 10x faster than similar models.

👉 Paper link: https://huggingface.co/papers/2604.18267

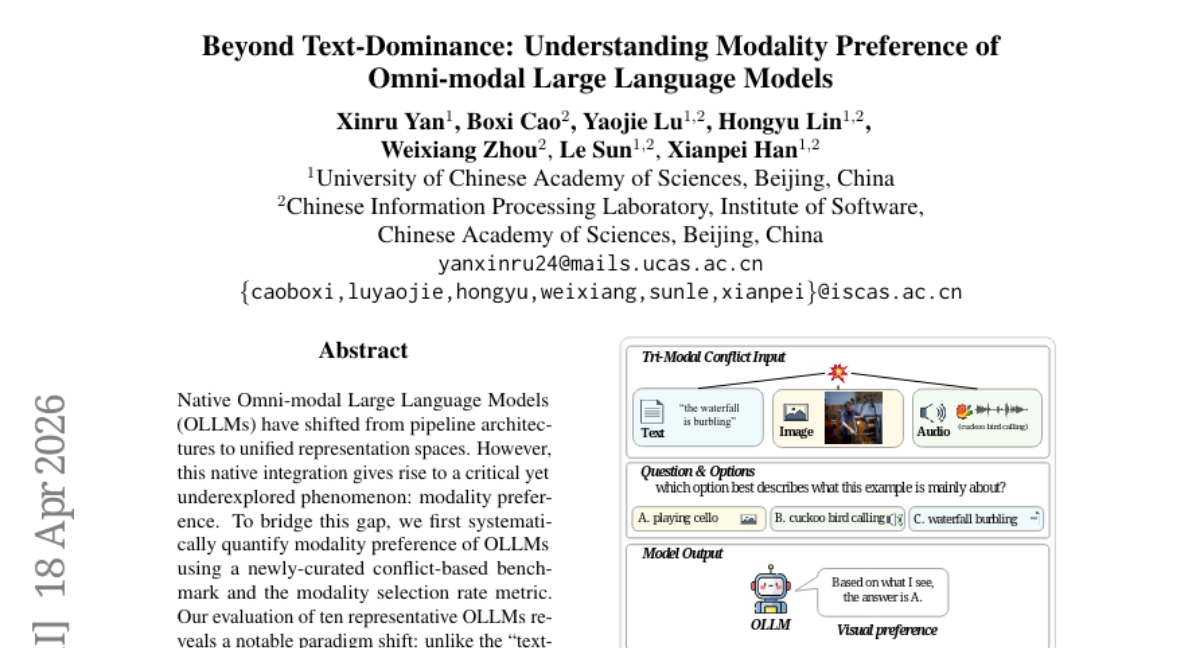

18. Beyond Text-Dominance: Understanding Modality Preference of Omni-modal Large Language Models

🔑 Keywords: Native Omni-modal Large Language Models (OLLMs), modality preference, cross-modal hallucinations, visual preference, layer-wise probing

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to examine the emerging modality preference in Native Omni-modal Large Language Models (OLLMs), focusing on their shift towards a visual preference over text.

🛠️ Research Methods:

– The researchers used a newly-curated conflict-based benchmark and the modality selection rate metric to quantify modality preference. They also employed layer-wise probing to analyze the progression of this preference in the model layers.

💬 Research Conclusions:

– The findings reveal a notable shift from traditional text-dominance to a pronounced visual preference in OLLMs. This modality preference emerges progressively in the mid-to-late layers, offering insights into diagnosing cross-modal hallucinations and improving the trustworthiness of OLLMs. The open-source code and resources are publicly available for further exploration.

👉 Paper link: https://huggingface.co/papers/2604.16902

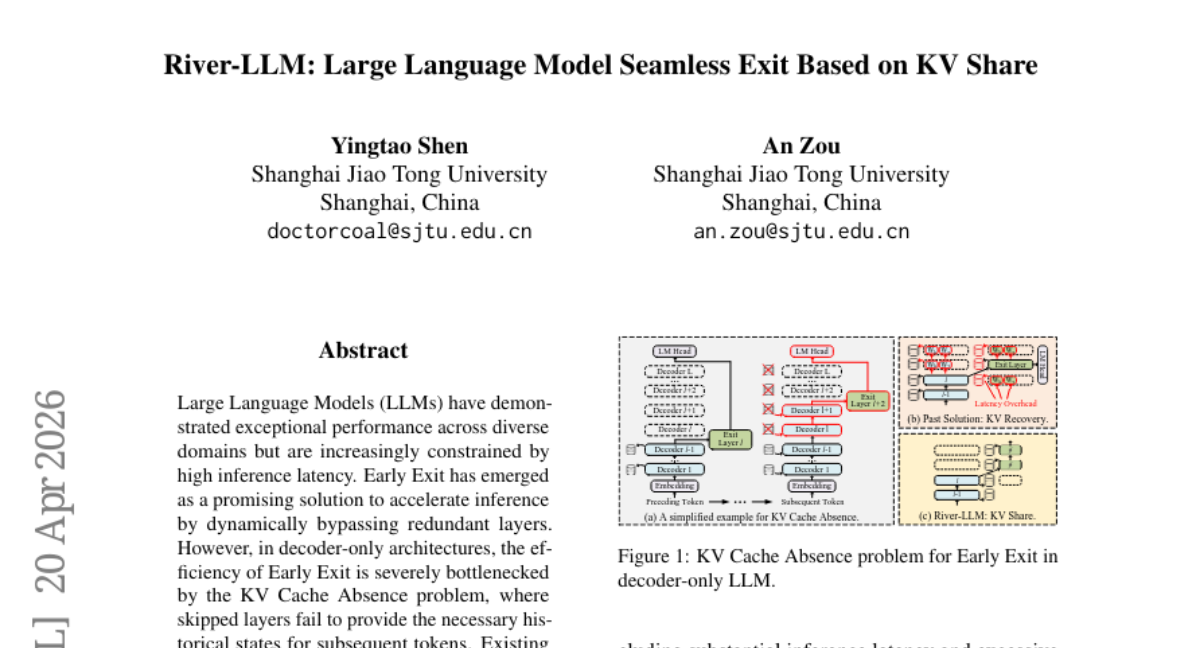

19. River-LLM: Large Language Model Seamless Exit Based on KV Share

🔑 Keywords: River-LLM, Early Exit, KV Cache Absence, decoder-only architectures, AI-generated summary

💡 Category: Generative Models

🌟 Research Objective:

– The study aims to address the bottleneck caused by KV Cache Absence in decoder-only architectures and enhance the efficiency of token-level Early Exit in LLMs using the River-LLM framework.

🛠️ Research Methods:

– Introduction of a training-free framework called River-LLM with a KV-Shared Exit River to allow seamless token-level Early Exit without the need for recomputation or masking, which typically introduce latency or precision issues.

💬 Research Conclusions:

– Experiments on tasks like mathematical reasoning and code generation showed that River-LLM offers a 1.71 to 2.16 times speedup in processing while maintaining high-quality output.

👉 Paper link: https://huggingface.co/papers/2604.18396

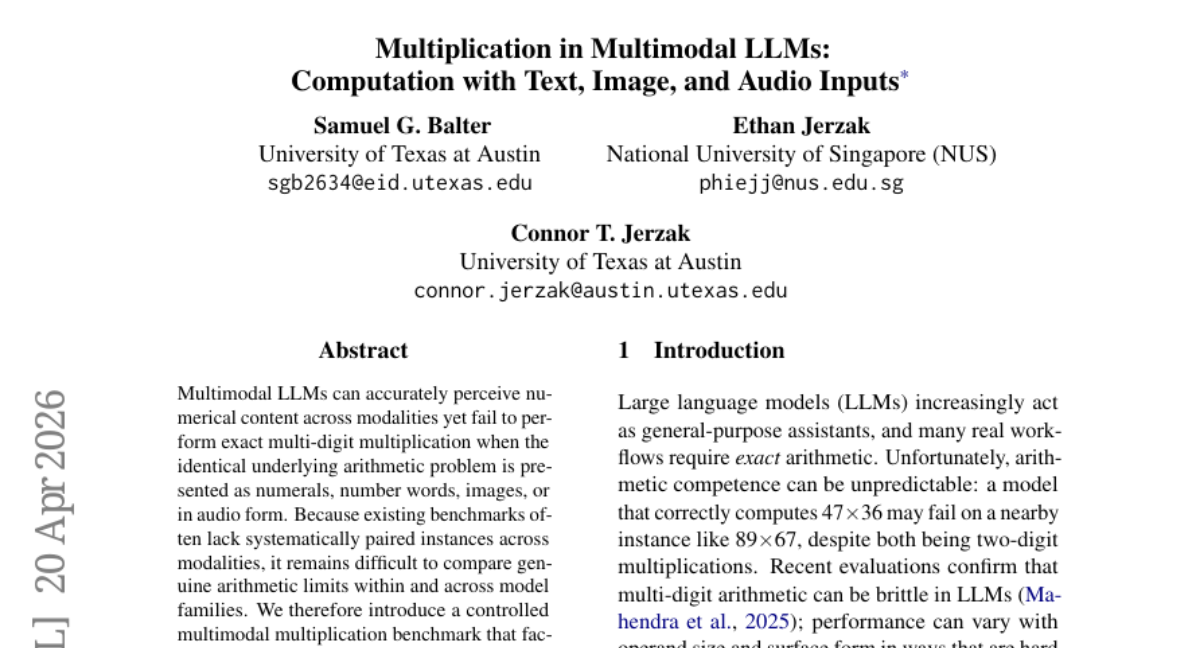

20. Multiplication in Multimodal LLMs: Computation with Text, Image, and Audio Inputs

🔑 Keywords: Multimodal LLMs, arithmetic load, multi-digit multiplication, heuristic-specific reasoning, LoRA adapters

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The research aims to evaluate the computational limitations of Multimodal large language models (LLMs) in performing exact multi-digit multiplication across different representations and modalities.

🛠️ Research Methods:

– Introduced a controlled multimodal multiplication benchmark varying factors such as digit length, sparsity, representation, and modality, utilizing a reproducible generator.

– Defined a novel arithmetic load metric as a proxy for operation count, analyzing its predictive power on model performance.

– Developed a forced-completion loss probe to assess heuristic-specific reasoning strategies across modalities.

💬 Research Conclusions:

– Models exhibit computational limitations highlighted by the arithmetic load metric, with performance sharply declining as the load increases.

– Despite perceptual proficiency across modalities, computational degradation is evident, suggesting models are more prone to certain procedural biases.

– LoRA adapters, although producing near-orthogonal updates, indicate that the base model’s internal routing remains well-tuned but can lead to accuracy degradation.

👉 Paper link: https://huggingface.co/papers/2604.18203

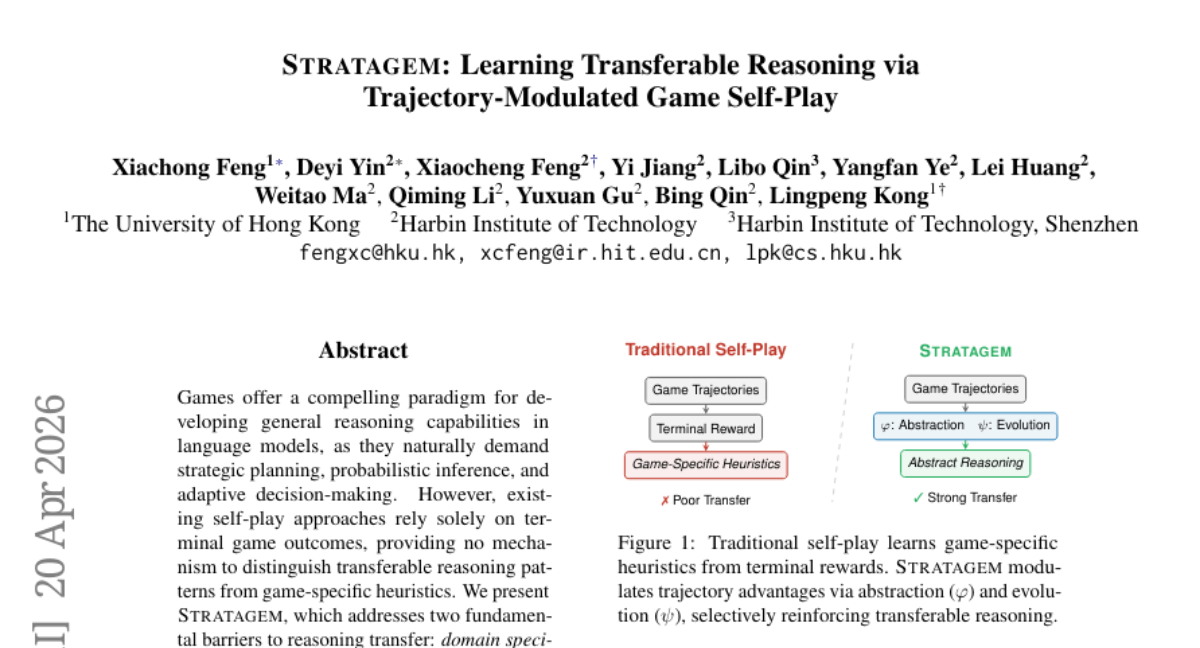

21. Stratagem: Learning Transferable Reasoning via Trajectory-Modulated Game Self-Play

🔑 Keywords: Reasoning Transferability Coefficient, Reasoning Evolution Reward, AI-generated Summary, Multi-step Reasoning

💡 Category: Natural Language Processing

🌟 Research Objective:

– STRATAGEM aims to overcome the limitations of reasoning transfer in language models, ensuring that reasoning patterns are domain-agnostic and adaptable.

🛠️ Research Methods:

– Introduces a Reasoning Transferability Coefficient and a Reasoning Evolution Reward to identify and reinforce domain-agnostic reasoning patterns across mathematical reasoning, general reasoning, and code generation benchmarks.

💬 Research Conclusions:

– STRATAGEM greatly improves reasoning transfer capabilities, especially in tasks requiring multi-step reasoning, as confirmed by experiments and human evaluations.

👉 Paper link: https://huggingface.co/papers/2604.17696

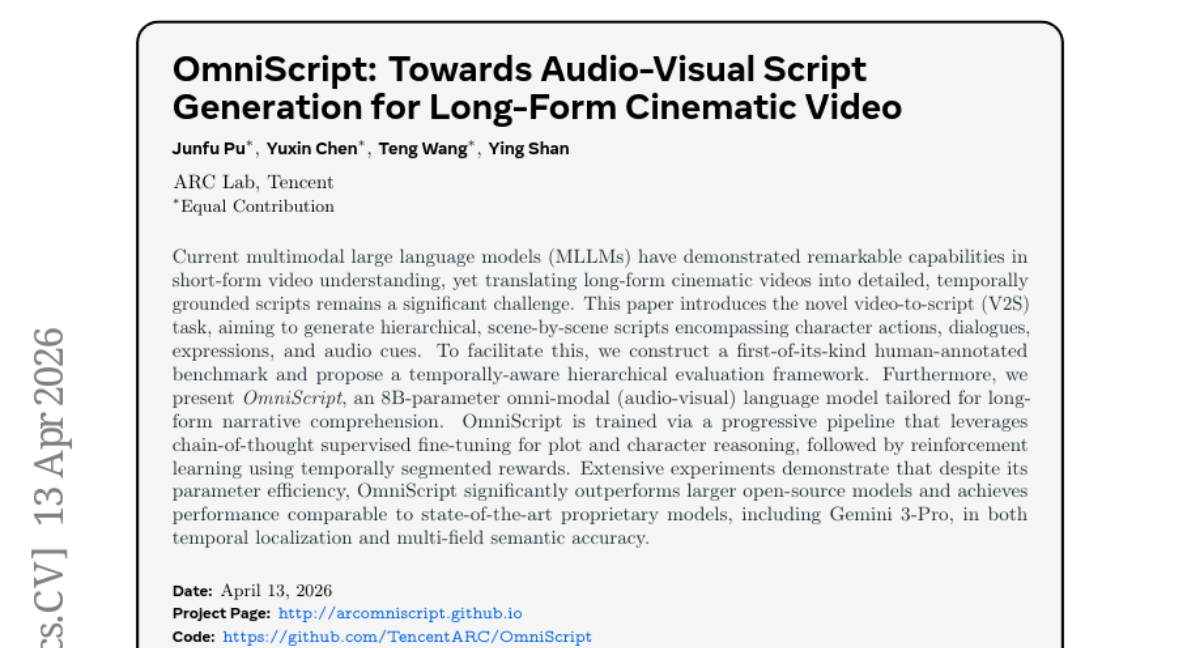

22. OmniScript: Towards Audio-Visual Script Generation for Long-Form Cinematic Video

🔑 Keywords: Video-to-Script, OmniScript, Progressive Pipeline, Reinforcement Learning, Temporal Localization

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The paper aims to introduce a novel Video-to-Script (V2S) task for generating hierarchical, detailed scripts of long-form videos, capturing various elements such as character actions, dialogues, and audio cues.

🛠️ Research Methods:

– A new human-annotated benchmark and a temporally-aware hierarchical evaluation framework were developed.

– OmniScript, an 8B-parameter omni-modal language model, was trained using progressive pipeline techniques with chain-of-thought supervised fine-tuning and reinforcement learning with temporally segmented rewards.

💬 Research Conclusions:

– OmniScript, despite being parameter-efficient, outperforms larger open-source models and matches the performance of state-of-the-art proprietary models like Gemini 3-Pro in temporal localization and multi-field semantic accuracy.

👉 Paper link: https://huggingface.co/papers/2604.11102

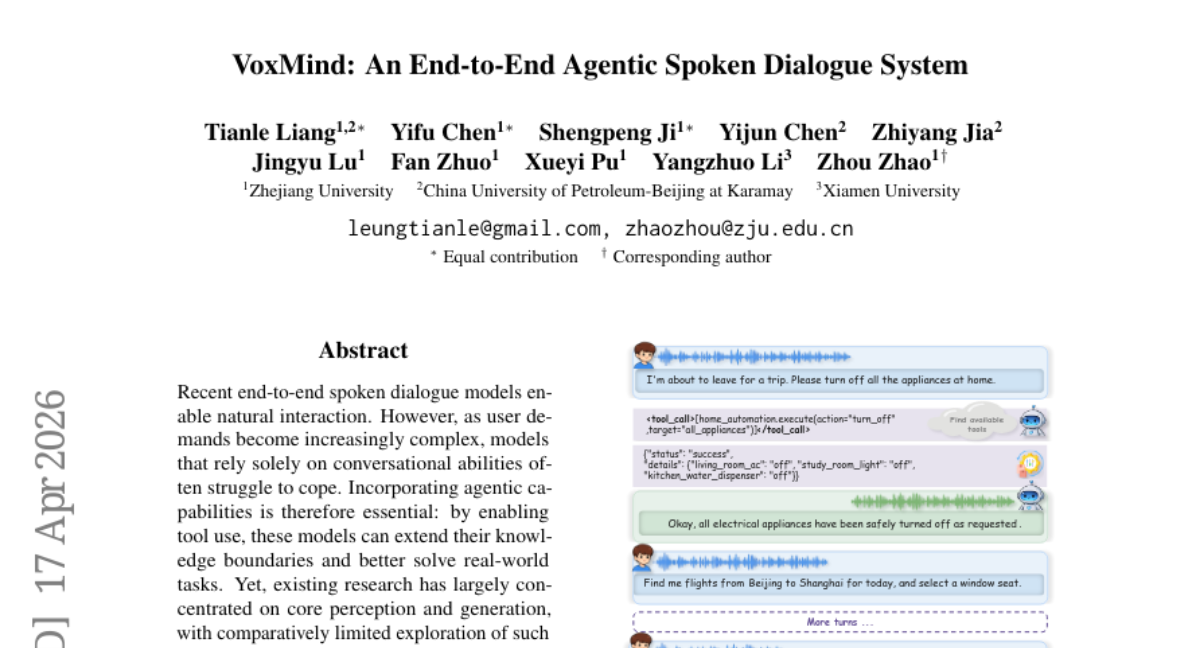

23. VoxMind: An End-to-End Agentic Spoken Dialogue System

🔑 Keywords: agentic capabilities, Think-before-Speak, end-to-end spoken dialogue models, Multi-Agent Dynamic Tool Management, task completion rate

💡 Category: Natural Language Processing

🌟 Research Objective:

– To enhance end-to-end spoken dialogue models by incorporating agentic capabilities and dynamic tool management for improving task completion rates and maintaining conversational quality.

🛠️ Research Methods:

– Utilized the 470-hour AgentChat dataset to implement a “Think-before-Speak” mechanism for structured reasoning and proposed a Multi-Agent Dynamic Tool Management architecture to reduce latency and improve performance.

💬 Research Conclusions:

– VoxMind demonstrated significant improvements in task completion rates, increasing performance from 34.88% to 74.57%, and preserved conversational quality, outperforming existing models like Gemini-2.5-Pro.

👉 Paper link: https://huggingface.co/papers/2604.15710

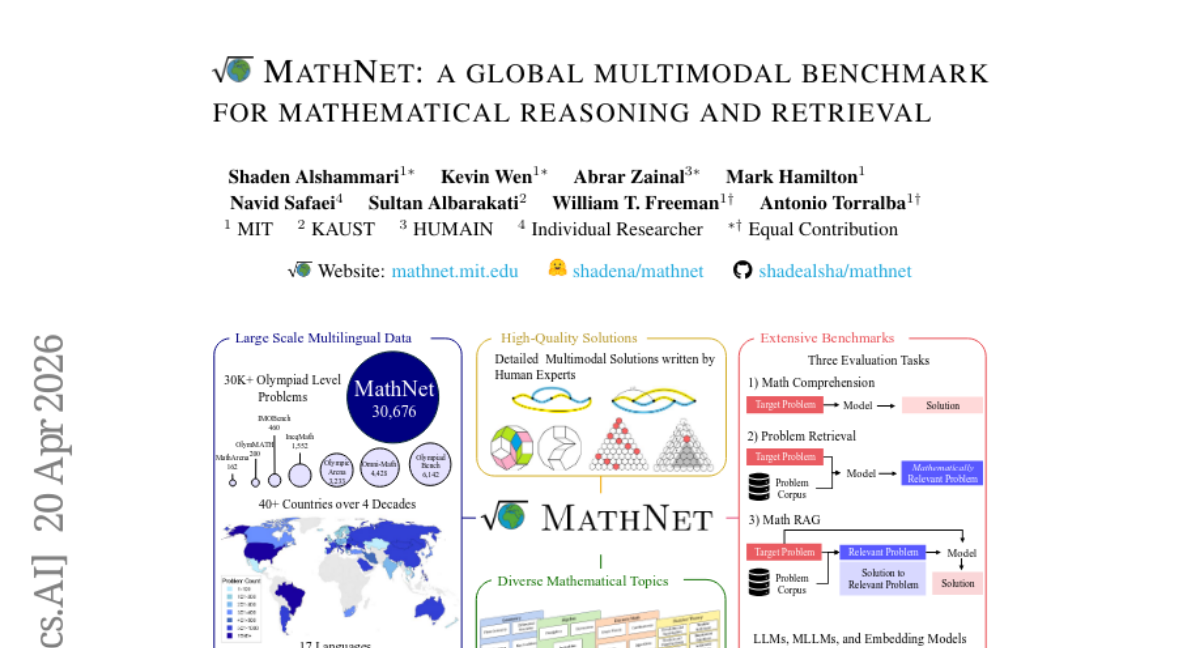

24. MathNet: a Global Multimodal Benchmark for Mathematical Reasoning and Retrieval

🔑 Keywords: MathNet, multilingual, multimodal, Olympiad-level, mathematical reasoning

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The main objective is to introduce MathNet, a large-scale, high-quality dataset designed for evaluating mathematical reasoning and retrieval in generative and embedding-based systems.

🛠️ Research Methods:

– MathNet consists of Olympiad-level math problems from 47 countries in 17 languages, including a benchmark for mathematical reasoning evaluation. It supports three tasks: Problem Solving, Math-Aware Retrieval, and Retrieval-Augmented Problem Solving.

💬 Research Conclusions:

– Experimental results indicate that state-of-the-art reasoning and embedding models still face challenges. Notably, the performance of retrieval-augmented generation is sensitive to retrieval quality, with DeepSeek-V3.2-Speciale achieving significant performance increases.

👉 Paper link: https://huggingface.co/papers/2604.18584

25. Concrete Jungle: Towards Concreteness Paved Contrastive Negative Mining for Compositional Understanding

🔑 Keywords: Vision-Language Models, Compositional Reasoning, Lexical Concreteness, Cement Loss, Gradient Imbalance

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Address challenges in compositional reasoning for vision-language models by improving negative sample selection and loss function design.

🛠️ Research Methods:

– Introduce lexical concreteness-based negative sample selection and propose the Cement loss using a margin-based approach to balance gradient distribution.

💬 Research Conclusions:

– The Slipform framework, integrating these techniques, achieves state-of-the-art performance across compositional benchmarks and retrieval tasks.

👉 Paper link: https://huggingface.co/papers/2604.13313

26. Crowded in B-Space: Calibrating Shared Directions for LoRA Merging

🔑 Keywords: LoRA adapters, shared directions, task-specific information, Pre-merge interference calibration, merged adapters

💡 Category: Machine Learning

🌟 Research Objective:

– The study aims to improve the performance of LoRA adapter merging by separately calibrating the output-side matrix B to reduce interference and preserve task-specific information.

🛠️ Research Methods:

– The Pico method is introduced for pre-merge interference calibration in the output-space, which involves downscaling over-shared directions and rescaling the merged update.

💬 Research Conclusions:

– Pico enhances average accuracy by 3.4-8.3 points across various benchmarks and allows merged adapters to outperform LoRA trained with all task data, showing better performance when treating the two LoRA matrices separately.

👉 Paper link: https://huggingface.co/papers/2604.16826

27. ClawEnvKit: Automatic Environment Generation for Claw-Like Agents

🔑 Keywords: Automated pipeline, Natural language descriptions, ClawEnvKit, Benchmark, Claw-like agents

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– Develop ClawEnvKit, an automated pipeline to generate diverse and verified environments from natural language descriptions for claw-like agents.

🛠️ Research Methods:

– Utilizes a three-module system: a parser for extracting structured parameters, a generator for task specification and configuration, and a validator for ensuring environment feasibility and diversity.

💬 Research Conclusions:

– Auto-ClawEval benchmark demonstrates coherence and clarity at a significantly lower cost than human-curated environments.

– Harness engineering significantly boosts performance, and automated generation offers scalable evaluation and training capabilities.

– Enables live and continuous user-driven evaluation and adaptability in training environments.

👉 Paper link: https://huggingface.co/papers/2604.18543

28. When Can LLMs Learn to Reason with Weak Supervision?

🔑 Keywords: Reinforcement Learning, Weak Supervision, Reward Saturation Dynamics, Reasoning Faithfulness

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– Investigate model generalization in reasoning tasks under weak supervision, focusing on reward saturation dynamics and reasoning faithfulness.

🛠️ Research Methods:

– Conduct systematic empirical studies across diverse model families and reasoning domains under three weak supervision settings: scarce data, noisy rewards, and self-supervised proxy rewards.

💬 Research Conclusions:

– Generalization is dictated by training reward saturation dynamics; prolonged pre-saturation allows for learning while rapid saturation causes memorization.

– Reasoning faithfulness is identified as the pre-RL property predicting model regime, with supervised fine-tuning on explicit traces being crucial for weak supervision generalization.

👉 Paper link: https://huggingface.co/papers/2604.18574

29. EasyVideoR1: Easier RL for Video Understanding

🔑 Keywords: Reinforcement Learning, Video Understanding, Large Vision-Language Models, Reward System, Joint Image-Video Training

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Develop an efficient reinforcement learning framework, EasyVideoR1, specifically designed for enhancing video understanding tasks.

🛠️ Research Methods:

– Implementation of a full video RL training pipeline with offline preprocessing and tensor caching.

– Introduction of a comprehensive, task-aware reward system covering various video and image problem types.

– Application of a mixed offline-online data training paradigm.

– Facilitation of joint image-video training with configurable pixel budgets.

💬 Research Conclusions:

– EasyVideoR1 achieves a 1.47 times throughput improvement by eliminating redundant video decoding.

– The framework enables effective video understanding with asynchronous multi-benchmark evaluation, demonstrating accuracy aligned with official scores.

👉 Paper link: https://huggingface.co/papers/2604.16893

30. OpenGame: Open Agentic Coding for Games

🔑 Keywords: OpenGame, Game Skill, GameCoder-27B, AI-generated summary, code agents

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To create OpenGame, an open-source agentic framework for end-to-end web game creation using specialized code models and evaluation benchmarks.

🛠️ Research Methods:

– Implemented Game Skill, composed of Template Skill and Debug Skill, for scaffolding stable architectures.

– Utilized GameCoder-27B, a large language model, through a three-stage pipeline involving continual pre-training, supervised fine-tuning, and execution-grounded reinforcement learning.

– Introduced OpenGame-Bench as an evaluation pipeline for agentic game generation.

💬 Research Conclusions:

– OpenGame sets a new standard for state-of-the-art game generation across 150 diverse game prompts.

– Aims to extend code agents beyond isolated programming tasks to develop complex, interactive real-world applications.

👉 Paper link: https://huggingface.co/papers/2604.18394

31. OneVL: One-Step Latent Reasoning and Planning with Vision-Language Explanation

🔑 Keywords: Chain-of-Thought reasoning, Latent CoT, Vision-Language-Action framework, trajectory prediction, auxiliary decoders

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– The paper introduces OneVL, a unified Vision-Language-Action (VLA) framework aimed at improving latent Chain-of-Thought reasoning in autonomous driving by integrating both language and visual world model supervision.

🛠️ Research Methods:

– OneVL employs a three-stage training pipeline to progressively align latent tokens with trajectory, language, and visual objectives. The framework includes dual auxiliary decoders—language and visual world model decoders—that guide latent space to internalize causal dynamics of driving scenarios.

💬 Research Conclusions:

– OneVL is the first latent CoT method to surpass explicit CoT, achieving state-of-the-art accuracy. It demonstrates that guided language and world-model supervision facilitates a more generalizable and compressed representation, enabling faster trajectory prediction without compromising accuracy.

👉 Paper link: https://huggingface.co/papers/2604.18486