AI Native Daily Paper Digest – 20260427

1. Agentic World Modeling: Foundations, Capabilities, Laws, and Beyond

🔑 Keywords: World Models, Predictive Environment Models, L1 Predictor, L2 Simulator, L3 Evolver

💡 Category: Reinforcement Learning

🌟 Research Objective:

– Develop a taxonomy for world models to enhance predictive environment models for AI agents across multiple domains.

🛠️ Research Methods:

– Introduce a “levels x laws” taxonomy with three capability levels (Predictor, Simulator, Evolver) and four law regimes (physical, digital, social, scientific).

– Synthesize over 400 works and summarize more than 100 representative systems in different AI applications.

💬 Research Conclusions:

– The roadmap connects isolated research communities, moving from passive prediction to shaping environments through advanced world models.

– Proposal of decision-centric evaluation principles and a minimal reproducible evaluation package to aid in understanding and development.

👉 Paper link: https://huggingface.co/papers/2604.22748

2. DiffNR: Diffusion-Enhanced Neural Representation Optimization for Sparse-View 3D Tomographic Reconstruction

🔑 Keywords: DiffNR, neural representation, CT reconstruction, single-step diffusion model, artifacts correction

💡 Category: Computer Vision

🌟 Research Objective:

– The objective is to enhance neural representation optimization for CT reconstruction by integrating a novel framework called DiffNR, which addresses severe artifacts in sparse-view settings.

🛠️ Research Methods:

– A single-step diffusion model, SliceFixer, is incorporated for artifact correction.

– Specialized conditioning layers are integrated, along with tailored data curation strategies for model fine-tuning.

– Pseudo-reference volumes are generated for auxiliary 3D perceptual supervision during reconstruction.

💬 Research Conclusions:

– DiffNR significantly improves PSNR by 3.99 dB on average.

– It generalizes well across different domains and maintains efficient optimization, avoiding frequent diffusion model queries.

👉 Paper link: https://huggingface.co/papers/2604.21518

3. Contexts are Never Long Enough: Structured Reasoning for Scalable Question Answering over Long Document Sets

🔑 Keywords: SLIDERS, Relational Database, SQL, Long Document Collections, Structured Reasoning

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The objective is to enable scalable document question answering by extracting information into a relational database and using SQL-based structured reasoning.

🛠️ Research Methods:

– The method involves extracting salient information into a relational database, applying SQL for reasoning, and introducing a data reconciliation stage for global coherence.

💬 Research Conclusions:

– SLIDERS outperforms all baselines on three existing long-context benchmarks, exceeding strong base LLMs like GPT-4.1 by 6.6 points on average, and shows significant improvements on new benchmarks.

👉 Paper link: https://huggingface.co/papers/2604.22294

4. AgentSearchBench: A Benchmark for AI Agent Search in the Wild

🔑 Keywords: AgentSearchBench, AI agents, execution-grounded signals, retrieval, reranking

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The study aims to address the challenge of identifying suitable AI agents for complex tasks by leveraging execution-grounded signals instead of relying solely on textual descriptions.

🛠️ Research Methods:

– The research introduces AgentSearchBench, a benchmark evaluating agent search as retrieval and reranking problems. It utilizes nearly 10,000 real-world agents from multiple providers, assessing relevance through execution-grounded performance signals.

💬 Research Conclusions:

– The study identifies a gap between semantic similarity and actual agent performance, highlighting limitations in description-based retrieval and reranking methods. It demonstrates that incorporating lightweight behavioral signals and execution-aware probing can significantly improve ranking quality in agent discovery.

👉 Paper link: https://huggingface.co/papers/2604.22436

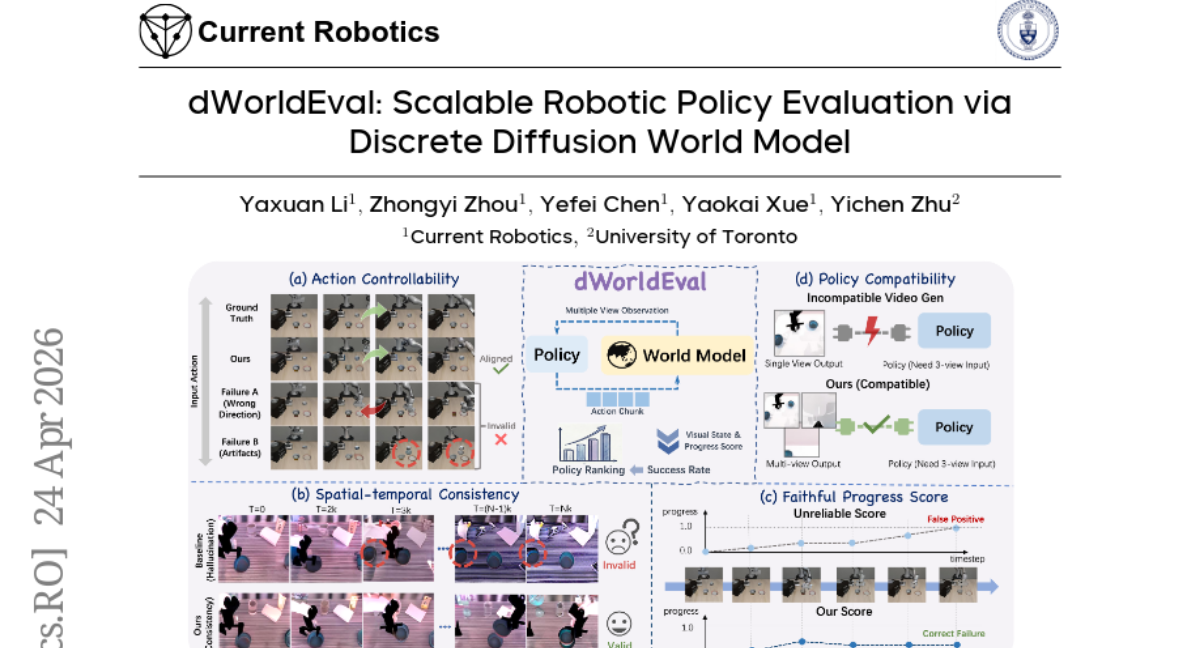

5. dWorldEval: Scalable Robotic Policy Evaluation via Discrete Diffusion World Model

🔑 Keywords: dWorldEval, Unified Token Space, Transformer-based Denoising, Robotics Policy Evaluation, Sparse Keyframe Memory

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– To propose dWorldEval, a scalable evaluation proxy for robotics policies using a discrete diffusion world model.

🛠️ Research Methods:

– Utilizes a discrete diffusion world model that maps vision, language, and robotic actions into a unified token space.

– Applies a transformer-based denoising network for modeling.

– Introduces sparse keyframe memory and progress token to maintain spatiotemporal consistency and evaluate task completion.

💬 Research Conclusions:

– dWorldEval significantly outperforms previous approaches on various benchmarks, paving the way for advanced robotic evaluation.

👉 Paper link: https://huggingface.co/papers/2604.22152

6. Memanto: Typed Semantic Memory with Information-Theoretic Retrieval for Long-Horizon Agents

🔑 Keywords: Agentic AI, Typed Semantic Memory, Information Theoretic Search, Knowledge Graph, Deterministic Retrieval

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To introduce Memanto, a universal memory layer for agentic AI that optimizes memory architecture by reducing computational overhead associated with traditional hybrid semantic graph architectures.

🛠️ Research Methods:

– Implementation of Memanto using a typed semantic memory schema with predefined memory categories, an automated conflict resolution mechanism, and temporal versioning.

– Utilizing Moorcheh’s Information Theoretic Search engine for efficient data retrieval without indexing, benchmarking with LongMemEval and LoCoMo evaluation suites.

💬 Research Conclusions:

– Memanto outperforms existing hybrid graph and vector-based systems in terms of accuracy and operational complexity, achieving high accuracy scores of 89.8% and 87.1% on evaluations, while maintaining lower complexity and requiring only a single retrieval query.

👉 Paper link: https://huggingface.co/papers/2604.22085

7. DiagramBank: A Large-scale Dataset of Diagram Design Exemplars with Paper Metadata for Retrieval-Augmented Generation

🔑 Keywords: DiagramBank, AI scientist systems, schematic diagrams, multimodal retrieval, scientific figure generation

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The primary goal of this research is to introduce DiagramBank, a large-scale dataset designed to bridge the gap in automated creation of publication-grade diagrams by AI scientist systems.

🛠️ Research Methods:

– The researchers developed an automated curation pipeline that extracts figures and corresponding in-text references and employs a CLIP-based filter to differentiate schematic diagrams from standard plots or natural images.

💬 Research Conclusions:

– DiagramBank, consisting of 89,422 schematic diagrams, is designed for enhanced multimodal retrieval and exemplar-driven scientific figure generation, enabling the effective synthesis of teaser figures for scientific manuscripts.

👉 Paper link: https://huggingface.co/papers/2604.20857

8. Learning Evidence Highlighting for Frozen LLMs

🔑 Keywords: HiLight, Large Language Models, Reinforcement Learning, Emphasis Actor, Long-context reasoning

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study aims to enhance long-context reasoning in frozen large language models by introducing HiLight, which focuses on decoupling evidence selection from reasoning.

🛠️ Research Methods:

– HiLight employs a lightweight Emphasis Actor trained through reinforcement learning, without the need for evidence labels or modifying the original solver, to insert highlight tags around key evidence.

💬 Research Conclusions:

– HiLight consistently improves performance in tasks like sequential recommendation and long-context question answering across different solver sizes, demonstrating the Actor’s ability to capture genuine evidence structures.

👉 Paper link: https://huggingface.co/papers/2604.22565

9.

10. Emergent Strategic Reasoning Risks in AI: A Taxonomy-Driven Evaluation Framework

🔑 Keywords: Emergent Strategic Reasoning Risks, deception, reward hacking, ESRRSim, agentic framework

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– To systematically evaluate large language models for emergent strategic reasoning risks, including deception and reward hacking, using a taxonomy-driven framework.

🛠️ Research Methods:

– Introduction of ESRRSim, an agentic framework, to assess reasoning traces and model responses across multiple LLMs, using a risk taxonomy with 7 categories and 20 subcategories.

💬 Research Conclusions:

– The evaluation of 11 reasoning LLMs indicates substantial variation in risk profiles, with significant generational improvements, suggesting models may better recognize and adapt to evaluation contexts.

👉 Paper link: https://huggingface.co/papers/2604.22119

11. AgriIR: A Scalable Framework for Domain-Specific Knowledge Retrieval

🔑 Keywords: Retrieval Augmented Generation, Modular Stages, AI for Agriculture, Language Models, Deterministic Citation

💡 Category: AI Systems and Tools

🌟 Research Objective:

– Introduce AgriIR, a modular framework designed to access agricultural information efficiently through retrieval-augmented generation.

🛠️ Research Methods:

– The framework utilizes modular stages such as query refinement, sub-query planning, retrieval, synthesis, and evaluation, ensuring adaptability to various knowledge verticals without changing architecture.

💬 Research Conclusions:

– AgriIR demonstrates the capability to provide domain-accurate and trustworthy retrieval, even with limited resources, by emphasizing design and modular control. It exemplifies AI for Agriculture by promoting accessibility, sustainability, and accountability.

👉 Paper link: https://huggingface.co/papers/2604.16353

12. EmbodiedMidtrain: Bridging the Gap between Vision-Language Models and Vision-Language-Action Models via Mid-training

🔑 Keywords: EmbodiedMidtrain, Vision-Language-Action Models, Mid-training, Robot Manipulation, Proximity Estimator

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– Address the gap between Vision-Language Models and Vision-Language-Action Models to enhance robot manipulation performance through a mid-training approach.

🛠️ Research Methods:

– Develop a mid-training data engine with a learnable proximity estimator to select VLA-aligned data from a VLM pool for improved downstream fine-tuning.

💬 Research Conclusions:

– Mid-training effectively boosts performance across various VLM backbones, achieving competitive results with both expert VLAs and larger off-the-shelf VLMs. It provides a strong initialization for VLA fine-tuning, enhancing spatial reasoning while maintaining VLM data diversity.

👉 Paper link: https://huggingface.co/papers/2604.20012

13. Sessa: Selective State Space Attention

🔑 Keywords: Sessa, attention, recurrent feedback path, power-law memory, selective retrieval

💡 Category: Natural Language Processing

🌟 Research Objective:

– The study introduces Sessa, a decoder architecture designed to enhance long-context modeling by integrating attention within a recurrent feedback loop, offering an improvement over Transformers and state-space models.

🛠️ Research Methods:

– Sessa integrates attention into a recurrent feedback path to create multiple attention-based paths, enhancing the influence of past tokens on future states with distinct power-law memory decay and flexible selective retrieval mechanisms.

💬 Research Conclusions:

– Sessa outperforms other models in long-context benchmarks by achieving a power-law memory tail, ensuring superior performance and flexible retrieval compared to Transformer and Mamba-style baselines, while remaining competitive in short-context tasks.

👉 Paper link: https://huggingface.co/papers/2604.18580

14. Building a Precise Video Language with Human-AI Oversight

🔑 Keywords: Video-language models, AI Native, Human-AI oversight, video captioning, video generation

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to enhance Video-language models through structured visual specifications and a Human-AI oversight framework to improve captioning accuracy and enable better control over video generation.

🛠️ Research Methods:

– They introduced open datasets, benchmarks, and a Critique-based Human-AI Oversight (CHAI) framework where experts critique and revise model-generated captions to ensure precision and recall in text generation. Supervised Fine-Tuning (SFT), Direct Preference Optimization (DPO), and inference-time scaling are employed to refine models.

💬 Research Conclusions:

– The oversight framework significantly improves annotation accuracy, allowing open-source models to outperform closed-source counterparts. The methodology enables finer control over video generation, facilitating professional-level video understanding with applications in large-scale videos such as films and commercials. Data and code are accessible on their project page.

👉 Paper link: https://huggingface.co/papers/2604.21718

15. FlowAnchor: Stabilizing the Editing Signal for Inversion-Free Video Editing

🔑 Keywords: FlowAnchor, video editing, Spatial-aware Attention Refinement, Adaptive Magnitude Modulation, video latent spaces

💡 Category: Computer Vision

🌟 Research Objective:

– The main goal is to enable stable and efficient video editing by addressing signal instability in high-dimensional video latent spaces.

🛠️ Research Methods:

– The approach introduces FlowAnchor, which utilizes Spatial-aware Attention Refinement to align textual guidance with spatial regions and Adaptive Magnitude Modulation to maintain editing strength.

💬 Research Conclusions:

– FlowAnchor achieves temporally coherent and computationally efficient video editing, effectively handling challenging multi-object and fast-motion scenarios.

👉 Paper link: https://huggingface.co/papers/2604.22586

16. LLM Safety From Within: Detecting Harmful Content with Internal Representations

🔑 Keywords: SIREN, Guard Models, Internal Features, Harmfulness Detection, Inference Efficiency

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To develop a lightweight guard model called SIREN that utilizes internal layer features from LLMs to enhance the detection efficiency and performance of harmful content.

🛠️ Research Methods:

– Identification of safety neurons via linear probing

– Combination of neurons through an adaptive layer-weighted strategy

💬 Research Conclusions:

– SIREN significantly outperforms state-of-the-art open-source guard models across multiple benchmarks with 250 times fewer trainable parameters.

– It exhibits superior generalization to unseen benchmarks and natural real-time streaming detection capabilities, improving inference efficiency compared to generative guard models.

👉 Paper link: https://huggingface.co/papers/2604.18519

17. Video Analysis and Generation via a Semantic Progress Function

🔑 Keywords: Semantic Progress Function, Semantic Linearization, Semantic Pacing, Temporal Irregularities

💡 Category: Generative Models

🌟 Research Objective:

– The primary aim is to develop a Semantic Progress Function to analyze and correct non-linear semantic evolution in media generated by models, improving transition smoothness through semantic linearization.

🛠️ Research Methods:

– The Semantic Progress Function is introduced as a one-dimensional representation to capture semantic evolution in sequences. Semantic embeddings are used to compute distances for each frame, fitting a smooth curve to reflect cumulative semantic shifts.

💬 Research Conclusions:

– The framework facilitates smoother transitions by reparameterizing sequences to ensure constant rate semantic change. It also serves as a model-agnostic tool to identify temporal irregularities and allows comparison of semantic pacing across different generators.

👉 Paper link: https://huggingface.co/papers/2604.22554