AI Native Daily Paper Digest – 20260506

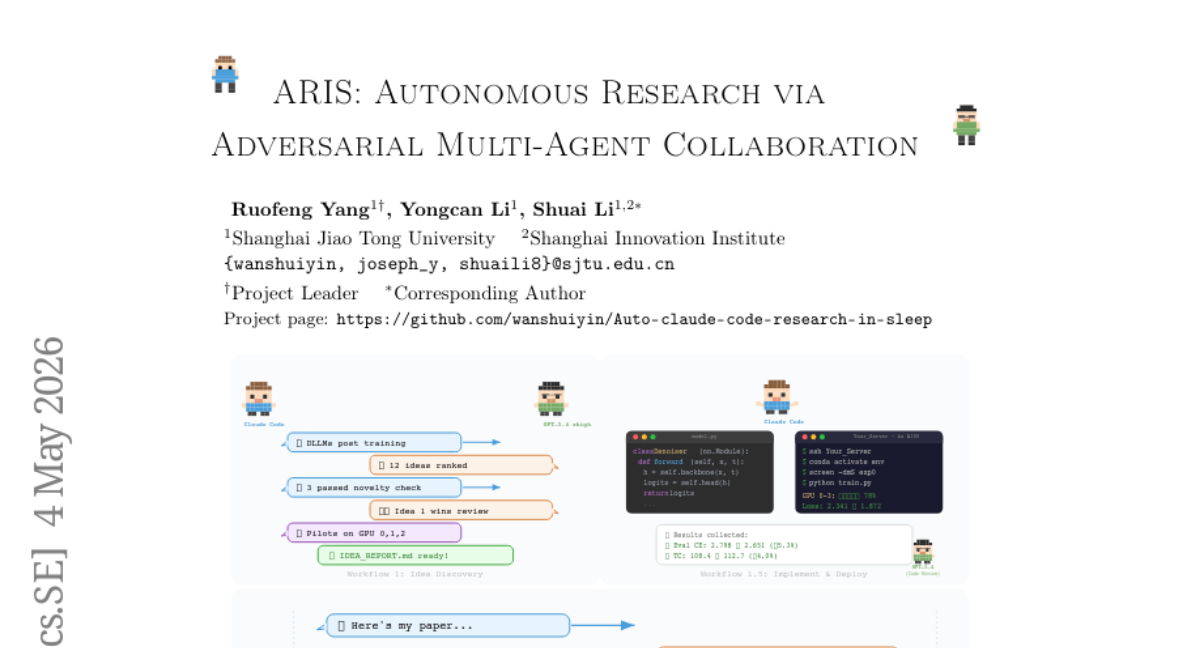

1. ARIS: Autonomous Research via Adversarial Multi-Agent Collaboration

🔑 Keywords: ARIS, cross-model adversarial collaboration, research harness, execution layer, assurance layer

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To ensure reliable long-term research outcomes through the deployment of ARIS, an open-source research harness that utilizes cross-model adversarial collaboration.

🛠️ Research Methods:

– ARIS employs three architectural layers: execution, orchestration, and assurance, with features like Markdown-defined skills, model integrations, a persistent research wiki, and a three-stage verification process.

💬 Research Conclusions:

– ARIS successfully coordinates machine-learning research workflows and mitigates failure modes in long-horizon research by implementing a default configuration of cross-model adversarial collaboration.

👉 Paper link: https://huggingface.co/papers/2605.03042

2. Beyond SFT-to-RL: Pre-alignment via Black-Box On-Policy Distillation for Multimodal RL

🔑 Keywords: distributional drift, multimodal models, supervised fine-tuning, reinforcement learning, Mixture-of-Experts

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– To tackle distributional drift in large multimodal models by introducing PRISM, a novel pipeline that incorporates a distribution-alignment stage to improve model performance.

🛠️ Research Methods:

– Utilizes a black-box adversarial game approach between policy and MoE discriminator to provide disentangled corrective signals.

– Curates additional high-fidelity supervision data for distribution alignment from Gemini 3 Flash, enriching SFT initialization.

💬 Research Conclusions:

– PRISM effectively enhances downstream reinforcement learning performance across various algorithms and benchmarks, achieving significant accuracy improvements, highlighting the efficacy of the distribution-alignment approach.

👉 Paper link: https://huggingface.co/papers/2604.28123

3. HeavySkill: Heavy Thinking as the Inner Skill in Agentic Harness

🔑 Keywords: HeavySkill, Complex Reasoning, Parallel Reasoning, Reinforcement Learning, Self-Evolving LLMs

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– Propose HeavySkill, a framework for internalizing complex reasoning as an intrinsic model skill, outperforming standard orchestration methods.

🛠️ Research Methods:

– Introduce a two-stage pipeline incorporating parallel reasoning and summarization, validated through empirical studies across various domains.

💬 Research Conclusions:

– HeavySkill consistently surpasses traditional Best-of-N strategies and can be enhanced via reinforcement learning, enabling scalable, self-evolving models.

👉 Paper link: https://huggingface.co/papers/2605.02396

4. PatRe: A Full-Stage Office Action and Rebuttal Generation Benchmark for Patent Examination

🔑 Keywords: Patent examination, legal reasoning, LLMs, benchmarks, multi-turn process

💡 Category: Knowledge Representation and Reasoning

🌟 Research Objective:

– The paper introduces PatRe, a new benchmark designed to model the complete patent examination process as a dynamic multi-turn interaction, addressing previous benchmarks’ limitations.

🛠️ Research Methods:

– PatRe utilizes 480 real-world cases and supports both oracle and retrieval-simulated evaluation settings to investigate performance differences between proprietary and open-source LLMs.

💬 Research Conclusions:

– The study reveals insights into model performance, highlighting the potential and limitations of LLMs in legal reasoning and technical novelty assessment within patent examination. It also underscores task asymmetries between examiner analysis and applicant rebuttal.

👉 Paper link: https://huggingface.co/papers/2605.03571

5. SVGS: Enhancing Gaussian Splatting Using Primitives with Spatially Varying Colors

🔑 Keywords: Gaussian Splatting, Spatially Varying Colors, Opacity, Novel View Synthesis, Geometric Reconstruction

💡 Category: Computer Vision

🌟 Research Objective:

– The paper aims to improve multi-view reconstruction by introducing Spatially Varying Gaussian Splatting (SVGS) which enhances Gaussian primitives with spatially varying colors and opacity.

🛠️ Research Methods:

– SVGS utilizes spatially varying functions implemented via bilinear interpolation, movable kernels, and tiny neural networks within 2D Gaussian surfels.

💬 Research Conclusions:

– SVGS significantly enhances novel view synthesis and maintains high-quality geometric reconstruction, outperforming baseline methods on multiple datasets, especially with movable kernels demonstrating superior results.

👉 Paper link: https://huggingface.co/papers/2411.18966

6. Workspace-Bench 1.0: Benchmarking AI Agents on Workspace Tasks with Large-Scale File Dependencies

🔑 Keywords: Workspace Learning, File Dependencies, AI Agents, Cross-File Retrieval, Contextual Reasoning

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The objective is to evaluate AI agents’ capability in workspace learning focusing on managing large-scale file dependencies and assessing their performance compared to human benchmarks.

🛠️ Research Methods:

– Construction of realistic workspaces featuring multiple worker profiles and extensive file types, coupled with curated tasks requiring complex decision-making.

– Introduction of Workspace-Bench and Workspace-Bench-Lite to facilitate in-depth performance evaluations of AI agents using both real-world file dependencies and a reduced-cost benchmarking subset.

💬 Research Conclusions:

– Experimental results highlight significant performance gaps between AI agents and human capabilities in workspace learning, with agents achieving a maximum of 68.7% versus human performance of 80.7%.

👉 Paper link: https://huggingface.co/papers/2605.03596

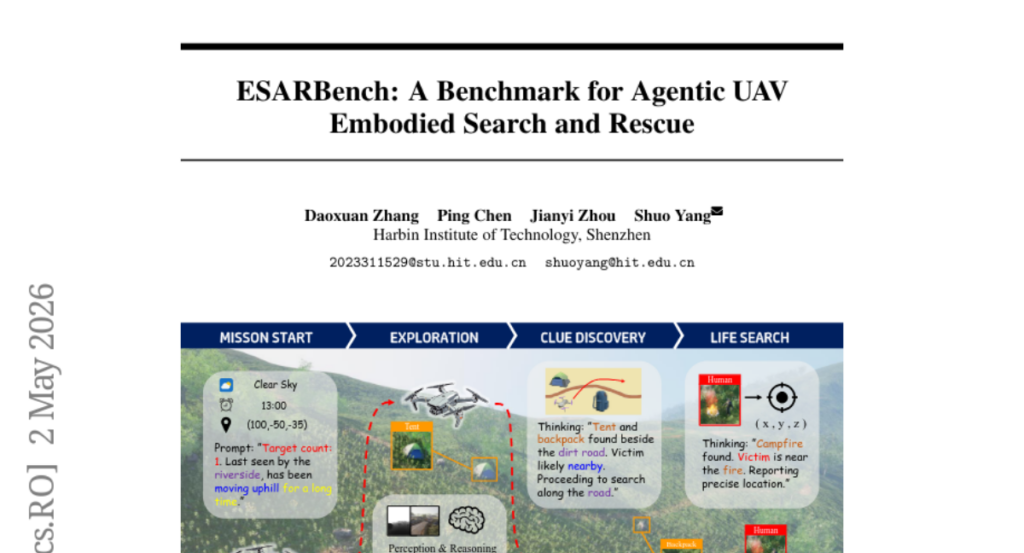

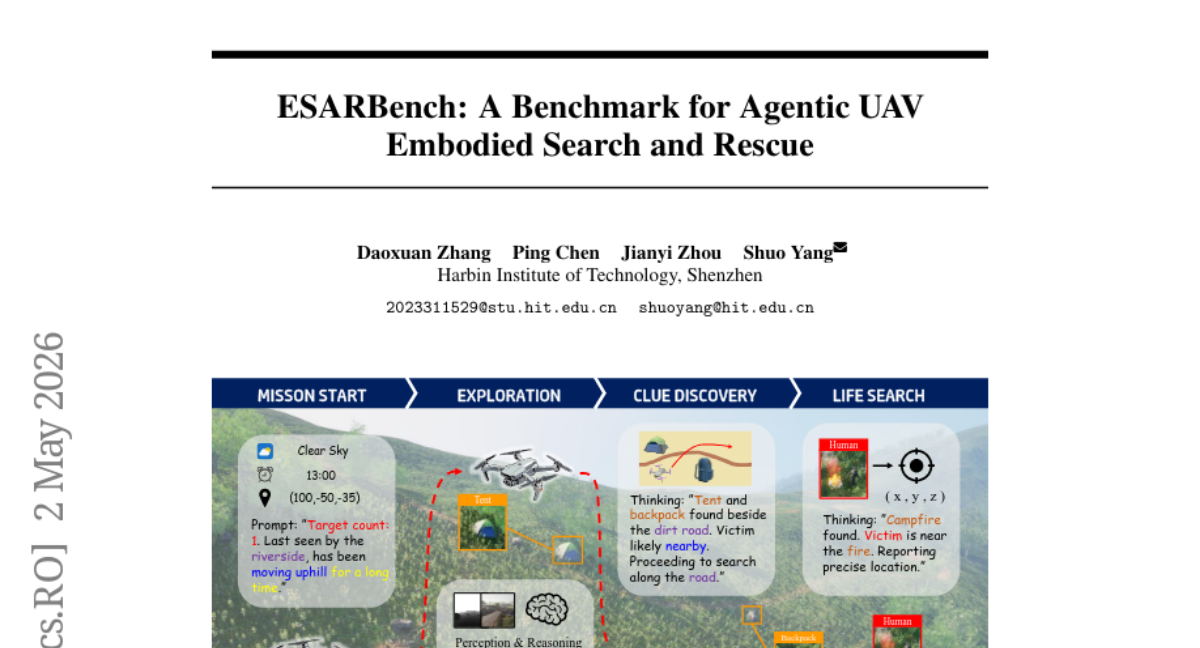

7. ESARBench: A Benchmark for Agentic UAV Embodied Search and Rescue

🔑 Keywords: Multimodal Large Language Models, Unmanned Aerial Vehicle, Search and Rescue, ESAR, AI Native

💡 Category: Robotics and Autonomous Systems

🌟 Research Objective:

– Introduce the Embodied Search and Rescue (ESAR) task and benchmark to evaluate Multimodal Large Language Model-driven UAVs in realistic SAR scenarios.

🛠️ Research Methods:

– Develop ESARBench using Unreal Engine 5 and AirSim to create photorealistic environments with dynamic variables for simulation.

– Construct a dataset of 600 tasks modeled on real-world disasters and propose comprehensive evaluation metrics.

💬 Research Conclusions:

– Highlight challenges in ESAR, like spatial memory limitations and the balance between search efficiency and flight safety.

– Establish ESARBench as a resource to advance research in the Embodied Search and Rescue domain.

👉 Paper link: https://huggingface.co/papers/2605.01371

8. The TTS-STT Flywheel: Synthetic Entity-Dense Audio Closes the Indic ASR Gap Where Commercial and Open-Source Systems Fail

🔑 Keywords: Niche-domain Indic ASR, Text-to-Speech, Entity-Hit-Rate, LoRA Fine-Tune, EDSA Corpus

💡 Category: Natural Language Processing

🌟 Research Objective:

– To improve niche-domain Indic ASR performance through a self-contained Text-to-Speech and Speech-to-Text method.

🛠️ Research Methods:

– The study implements a TTSSTT flywheel approach, using open-source Indic TTS to synthesize entity-dense utterances and applying LoRA fine-tuning on existing models.

💬 Research Conclusions:

– The proposed flywheel approach significantly increases EHR on Telugu test sets compared to existing open-source and commercial systems, demonstrating transferability to real speech.

– Cross-language performance varies, with notable improvements in beta-Hi and beta-Ta but not in Hindi.

– The SFR improvements through per-language LoRA are specifically effective for Telugu.

👉 Paper link: https://huggingface.co/papers/2605.03073

9. StateSMix: Online Lossless Compression via Mamba State Space Models and Sparse N-gram Context Mixing

🔑 Keywords: StateSMix, State Space Model, n-gram context mixing, arithmetic coding, BPE tokens

💡 Category: AI Systems and Tools

🌟 Research Objective:

– The development of StateSMix, a self-contained lossless compression model that operates without pre-trained weights or external dependencies.

🛠️ Research Methods:

– Utilizes a Mamba-style State Space Model trained online, combined with sparse n-gram context mixing and arithmetic coding.

– Implements an entropy-adaptive scaling mechanism and OpenMP parallelization for efficiency.

💬 Research Conclusions:

– StateSMix achieves superior compression rates compared to existing methods, such as xz -9e, across various data sizes.

– Establishes the State Space Model as a dominant force in compression, with n-gram tables providing additional gains.

👉 Paper link: https://huggingface.co/papers/2605.02904

10. Generate, Filter, Control, Replay: A Comprehensive Survey of Rollout Strategies for LLM Reinforcement Learning

🔑 Keywords: Reinforcement Learning, Large Language Models, Rollout Strategies, Generate-Filter-Control-Replay

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To analyze reinforcement learning post-training methods for large language models using a unified framework that focuses on rollout processes.

🛠️ Research Methods:

– The introduction of a lifecycle taxonomy named Generate-Filter-Control-Replay (GFCR) to decompose rollout pipelines into four modular stages for systematic evaluation and improvement.

– Synthesis of various methods including RL with verifiable rewards, process supervision, judge-based gating, and adaptive compute allocation frameworks.

💬 Research Conclusions:

– The study synthesizes current techniques and grounds the framework with case studies across different reasoning tasks such as math and code/SQL.

– A diagnostic index is introduced to map rollout pathologies to GFCR modules, highlighting areas for improvement in building reproducible and trustworthy rollout pipelines.

👉 Paper link: https://huggingface.co/papers/2605.02913

11. Skills-Coach: A Self-Evolving Skill Optimizer via Training-Free GRPO

🔑 Keywords: Skills-Coach, Automated Framework, Large Language Model, Task Generation, Skill Evolution

💡 Category: AI Systems and Tools

🌟 Research Objective:

– To enhance the self-evolution of skills within LLM-based agents using Skills-Coach, addressing skill ecosystem fragmentation and achieving comprehensive competency coverage.

🛠️ Research Methods:

– Development of four core modules: Task Generation, Lightweight Optimization, Comparative Execution, and Traceable Evaluation, for testing and optimizing skill capabilities.

💬 Research Conclusions:

– Skills-Coach demonstrates significant performance improvements in skill capability across a wide range of categories, validating its potential to develop more robust and adaptive LLM-based agents.

👉 Paper link: https://huggingface.co/papers/2604.27488

12.

13. How Fast Should a Model Commit to Supervision? Training Reasoning Models on the Tsallis Loss Continuum

🔑 Keywords: Tsallis q-logarithm, reinforcement learning, cold-start stalling, Gradient-Amplified RL, Posterior-Attenuated Fine-Tuning

💡 Category: Reinforcement Learning

🌟 Research Objective:

– The paper aims to address the challenge of cold-start stalling in reinforcement learning from verifiable rewards by introducing a loss family J_Q that interpolates between RLVR and log-marginal-likelihood.

🛠️ Research Methods:

– The study utilizes the Tsallis q-logarithm to define a new loss family and introduces two Monte Carlo estimators: Gradient-Amplified RL (GARL) and Posterior-Attenuated Fine-Tuning (PAFT), to handle gradient amplification for cold-start scenarios.

💬 Research Conclusions:

– GARL at q=0.75 effectively mitigates cold-start stalling, outperforming other methods such as GRPO, especially in scenarios like FinQA, HotPotQA, and MuSiQue. In warm-start conditions, GARL and PAFT are analyzed for their respective effectiveness, with PAFT providing more stable gradients in certain setups.

👉 Paper link: https://huggingface.co/papers/2604.25907

14. Chain of Evidence: Pixel-Level Visual Attribution for Iterative Retrieval-Augmented Generation

🔑 Keywords: Chain of Evidence, Vision-Language Models, Iterative Retrieval-Augmented Generation, Visual Attribution, Bounding Boxes

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The research aims to bridge the gap in Iterative Retrieval-Augmented Generation systems by introducing the Chain of Evidence framework, which enhances precision in pixel-level evidence localization using Vision-Language Models over document screenshots.

🛠️ Research Methods:

– The study utilizes a retriever-agnostic visual attribution framework that leverages Vision-Language Models to process screenshots of document candidates, eliminating the need for format-specific parsing and enabling precise bounding box outputs.

💬 Research Conclusions:

– The experiments on benchmarks such as Wiki-CoE and SlideVQA reveal that the fine-tuned Qwen3-VL-8B-Instruct model significantly outperforms traditional text-based baselines in understanding visual layouts, offering robust performance and establishing an interpretable solution for iRAG systems at the pixel level.

👉 Paper link: https://huggingface.co/papers/2605.01284

15. Healthcare AI GYM for Medical Agents

🔑 Keywords: Clinical reasoning, Reinforcement learning, Multi-turn agentic RL, Self-distillation, TT-OPD

💡 Category: AI in Healthcare

🌟 Research Objective:

– Investigate the degradation of multi-turn clinical reasoning training into verbose single-turn interactions and propose a solution to improve training stability and performance.

🛠️ Research Methods:

– Utilized a gymnasium-compatible environment across 10 clinical domains with 3.6K+ tasks and 135 tools, exploring vanilla GRPO and proposing Turn-level Truncated On-Policy Distillation (TT-OPD) as a novel framework.

💬 Research Conclusions:

– TT-OPD significantly enhances performance, achieves the best results on 10 out of 18 benchmarks, offers faster convergence, controls response lengths, and sustains multi-turn tool use compared to non-RL baselines.

👉 Paper link: https://huggingface.co/papers/2605.02943

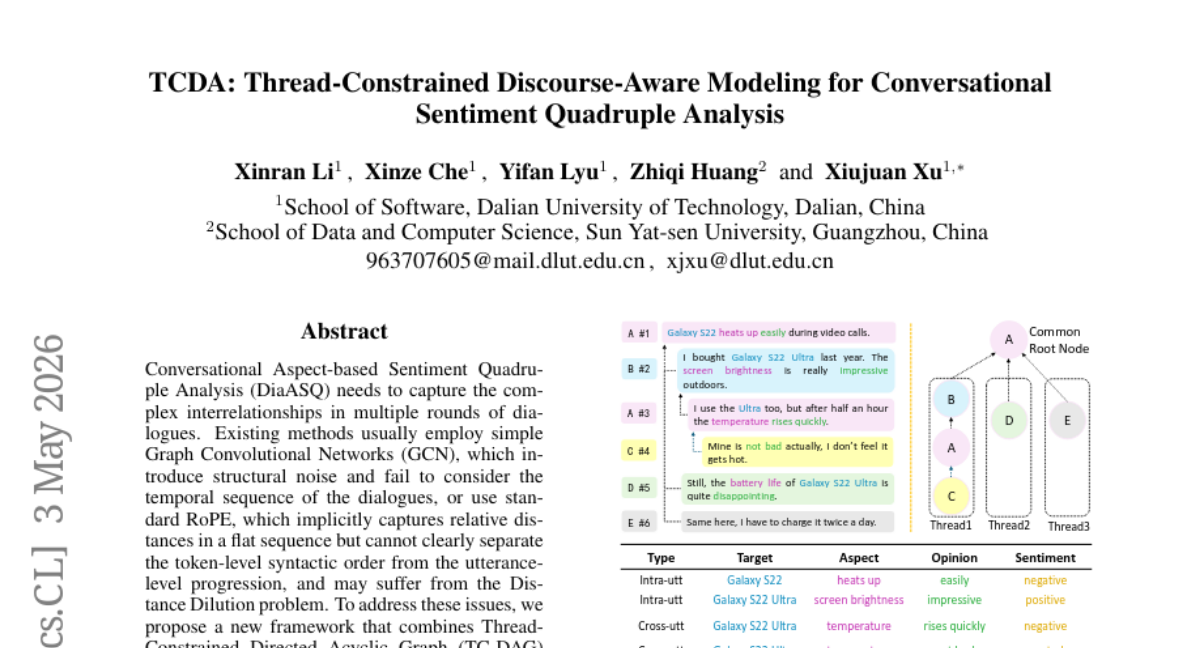

16. TCDA: Thread-Constrained Discourse-Aware Modeling for Conversational Sentiment Quadruple Analysis

🔑 Keywords: Conversational Sentiment Analysis, Thread-Constrained Directed Acyclic Graph, Discourse-Aware Rotary Position Embedding, Temporal Sequence, Distance Dilution

💡 Category: Natural Language Processing

🌟 Research Objective:

– To address the limitations in Conversational Aspect-based Sentiment Quadruple Analysis (DiaASQ) by capturing complex interrelationships and effectively handling the dialogue structure and temporal sequences.

🛠️ Research Methods:

– Proposed a novel framework combining Thread-Constrained Directed Acyclic Graph (TC-DAG) and Discourse-Aware Rotary Position Embedding (D-RoPE) to enhance the structure and sequence capturing in dialogues.

💬 Research Conclusions:

– Demonstrated state-of-the-art performance on benchmark datasets by using the framework to alleviate structural noise, integrate temporal sequences, and resolve Distance Dilution issues.

👉 Paper link: https://huggingface.co/papers/2605.01717

17. A Benchmark for Interactive World Models with a Unified Action Generation Framework

🔑 Keywords: iWorld-Bench, world models, physical interaction capabilities, video datasets, AI-generated summary

💡 Category: Machine Learning

🌟 Research Objective:

– The primary aim is to develop iWorld-Bench, a comprehensive benchmark to evaluate the physical interaction capabilities of world models.

🛠️ Research Methods:

– Construction of a diverse video dataset with 330k clips, selection of 2.1k high-quality samples, and introduction of an Action Generation Framework with six unified task types generating 4.9k test samples.

💬 Research Conclusions:

– Evaluated 14 representative world models, identifying key limitations and providing insights for future research, with the iWorld-Bench model leaderboard publicly available.

👉 Paper link: https://huggingface.co/papers/2605.03941

18. SplAttN: Bridging 2D and 3D with Gaussian Soft Splatting and Attention for Point Cloud Completion

🔑 Keywords: Cross-Modal Entropy Collapse, Differentiable Gaussian Splatting, point cloud completion, continuous image-plane representation, gradient flow

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– The study aims to address the issue of Cross-Modal Entropy Collapse during point cloud completion by proposing a novel method named SplAttN.

🛠️ Research Methods:

– SplAttN replaces hard projection with Differentiable Gaussian Splatting to maintain a dense, continuous image representation and enhance the learnability of cross-modal connections.

💬 Research Conclusions:

– SplAttN achieves state-of-the-art performance across multiple benchmarks, including PCN and ShapeNet-55/34, and demonstrates robust performance on the real-world KITTI benchmark, maintaining dependency on visual cues better than existing baselines.

👉 Paper link: https://huggingface.co/papers/2605.01466

19. Reinforcement Learning for LLM-based Multi-Agent Systems through Orchestration Traces

🔑 Keywords: Reinforcement Learning, LLM Agents, Multi-Agent Systems, Orchestration Traces, Communication

💡 Category: Reinforcement Learning

🌟 Research Objective:

– To optimize reinforcement learning for large language model-based multi-agent systems by focusing on task orchestration and coordination among agents.

🛠️ Research Methods:

– Utilization of orchestration traces, which are temporal interaction graphs, to study events such as spawning, delegation, communication, and stopping within LLM-based agent teams.

💬 Research Conclusions:

– Identified critical aspects of reward design and credit assignment in RL for LLM systems, highlighting areas like parallelism, correctness, and aggregation quality. The study also notes a lack of explicit RL training methods for the stopping decision within current academic and industrial evaluations.

👉 Paper link: https://huggingface.co/papers/2605.02801

20. SymptomAI: Towards a Conversational AI Agent for Everyday Symptom Assessment

🔑 Keywords: Conversational AI Agents, Symptom Assessment, Differential Diagnosis, Wearable Health Data

💡 Category: AI in Healthcare

🌟 Research Objective:

– To explore the performance of SymptomAI, a set of conversational AI agents, in conducting end-to-end patient interviews and differential diagnosis compared to clinicians.

🛠️ Research Methods:

– A large-scale randomized study involving 13,917 participants using the Fitbit app to interact with five AI agents, assessing performance via structured and user-guided interviews.

– Analysis included 1,509 conversations from a general US population panel for broader validation.

💬 Research Conclusions:

– SymptomAI’s diagnostic accuracy was significantly higher than clinicians when conducting structured symptom interviews.

– The research demonstrated that dedicated symptom interviews eliciting additional information outperform baseline user-guided conversations.

– Results indicate strong associations between acute infections and physiological shifts, validated across diverse populations beyond wearable device users.

👉 Paper link: https://huggingface.co/papers/2605.04012

21. Video Generation with Predictive Latents

🔑 Keywords: Predictive Learning, Video Generative Modeling, Latent Space, Temporal Coherence, Motion Priors

💡 Category: Generative Models

🌟 Research Objective:

– The study explores enhancing video generative modeling using a Predictive Video VAE model by unifying predictive learning with video reconstruction to improve latent space representation and generative performance.

🛠️ Research Methods:

– Introduced a predictive reconstruction objective that integrates predictive learning with video reconstruction by discarding future frames randomly and encoding partial past observations to reconstruct observed frames and predict future ones.

💬 Research Conclusions:

– The proposed Predictive Video VAE (PV-VAE) model achieves superior video generation performance, including 52% faster convergence and a 34.42 FVD improvement over existing models on UCF101, demonstrating improved scalability and effective temporal coherence capture in its latent space representation.

👉 Paper link: https://huggingface.co/papers/2605.02134

22. X2SAM: Any Segmentation in Images and Videos

🔑 Keywords: X2SAM, Multimodal Large Language Models, segmentation, video segmentation, conversational instructions

💡 Category: Multi-Modal Learning

🌟 Research Objective:

– Introduce X2SAM, a unified segmentation model that extends multimodal segmentation capabilities from images to videos, supporting both conversational instructions and visual prompts.

🛠️ Research Methods:

– Use a Mask Memory module for temporally consistent video mask generation and joint training strategy over heterogeneous datasets.

💬 Research Conclusions:

– X2SAM shows strong video segmentation performance, remains competitive on image segmentation benchmarks, and supports interactive, visual grounded segmentation across image and video inputs.

👉 Paper link: https://huggingface.co/papers/2605.00891

23. OpenSeeker-v2: Pushing the Limits of Search Agents with Informative and High-Difficulty Trajectories

🔑 Keywords: State-of-the-art, Supervised Fine-Tuning, Large Language Model, Academic-led Development, OpenSeeker-v2

💡 Category: Natural Language Processing

🌟 Research Objective:

– This study presents a simplified approach to achieving state-of-the-art deep search capabilities in Large Language Model agents using minimal data.

🛠️ Research Methods:

– The research utilizes a supervised fine-tuning strategy, enhanced by scaling knowledge graph size, expanding the tool set size, and implementing strict low-step filtering.

💬 Research Conclusions:

– OpenSeeker-v2, trained with only 10.6k data points, exceeds performance benchmarks compared to complex industrial pipelines, highlighting the potential of academic-led advancements in this field.

👉 Paper link: https://huggingface.co/papers/2605.04036